How Manifest v3 forced us to rethink Browser Guard, and why that’s a good thing

As a Browser Guard user, you might not have noticed much difference lately. Browser Guard still blocks scams and phishing attempts just as it always has and, in many cases, does it even better.

But behind the scenes, almost everything changed. The rules that govern how browser extensions work went through a major overhaul, and we had to completely rebuild how Browser Guard protects you.

First, what is Manifest v3 (and v2)?

Browser extensions include a configuration file called a “manifest”. Think of it as an instruction manual that tells your browser what an extension can do and how it’s allowed to do it.

Manifest v3 is the latest version of that system, and it’s now the only option allowed in major browsers like Chrome and Edge.

In Manifest v2, Browser Guard could use highly customized logic to analyze and block suspicious activity as it happened, protecting you as you browsed the web.

With Manifest v3, that flexibility is mostly gone. Extensions can no longer run deeply complex, custom logic in the same way. Instead, we can only hand the browser static lists of rules, known as Declarative Net Request (DNR) rules.
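To make "static rule lists" more concrete, here is a minimal sketch of what a single DNR block rule can look like, written as a TypeScript object. The `DnrRule` interface is a simplified local stand-in for the browser's own rule type, and the `blockDomainRule` helper and the `scam.example` domain are hypothetical. It is an illustration, not Browser Guard's actual rule set.

```typescript
// Simplified, local stand-in for the shape of a Declarative Net Request rule.
// The browser's real type has more fields; only the ones used here are modeled.
interface DnrRule {
  id: number;
  priority: number;
  action: { type: "block" | "allow" };
  condition: {
    urlFilter: string;
    resourceTypes: Array<"main_frame" | "sub_frame" | "script" | "image">;
  };
}

// Hypothetical helper: build a rule that blocks top-level navigation to a domain.
function blockDomainRule(id: number, domain: string): DnrRule {
  return {
    id,
    priority: 1,
    action: { type: "block" },
    // "||" anchors the filter to the domain and its subdomains; "^" is a separator.
    condition: { urlFilter: `||${domain}^`, resourceTypes: ["main_frame"] },
  };
}

// In an extension, rules like this are serialized to a JSON file
// that the manifest points to.
const rulesJson: string = JSON.stringify([blockDomainRule(1, "scam.example")], null, 2);
```

The rule is pure data: the browser evaluates it, and the extension never gets to run its own code for each request.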

But those DNR rules come with strict constraints.

The browser limits the size of rule sets to save space. Because rules must be stored as raw JSON files, developers can’t switch to a more compact format to fit more of them in. And the rules bundled with an extension can only be changed by shipping an update to the whole extension.

This is less of a problem on Chrome, which allows developers to push updates quickly, but other browsers don’t currently support this fast-track process. Dynamic rule updates exist, but they’re limited, and nowhere near large enough to hold the full set of rules.
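The size problem with dynamic rules can be sketched as a simple budget calculation. The function below builds the kind of `{ removeRuleIds, addRules }` object that `chrome.declarativeNetRequest.updateDynamicRules` accepts, but it never calls the browser API itself. The `SimpleRule` type, the `planDynamicUpdate` name, and the `maxDynamicRules` parameter are assumptions made for illustration; the real limit depends on the browser and version.

```typescript
// Simplified rule shape for this sketch only.
interface SimpleRule {
  id: number;
  urlFilter: string;
}

interface UpdatePlan {
  removeRuleIds: number[]; // IDs of the dynamic rules currently installed
  addRules: SimpleRule[];  // rules that fit within the budget
  dropped: number;         // rules that did not fit
}

// Hypothetical planner: replace all current dynamic rules with as many
// desired rules as the budget allows, and report how many were left out.
function planDynamicUpdate(
  current: SimpleRule[],
  desired: SimpleRule[],
  maxDynamicRules: number
): UpdatePlan {
  const kept = desired.slice(0, Math.max(0, maxDynamicRules));
  return {
    removeRuleIds: current.map((rule) => rule.id),
    addRules: kept,
    dropped: desired.length - kept.length,
  };
}
```

When `dropped` is large compared with `addRules.length`, dynamic rules alone cannot carry the full blocklist, which is the situation described above.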

In short, we couldn’t simply port Browser Guard from Manifest v2 to v3. The old approach wouldn’t keep our users protected.

A note about Firefox and Brave

Firefox and Brave chose a different path and continue to support the more flexible Manifest v2 method of blocking requests.

However, since Brave doesn’t have its own extension store, users can only install extensions they already had before Google removed Manifest v2 extensions from the Chrome Web Store. That said, Brave also has strong out-of-the-box ad protection.

For Browser Guard users on Firefox, rest assured the same great blocking techniques will continue to work.

How Browser Guard still protects you

Given all of this, we had to get creative.

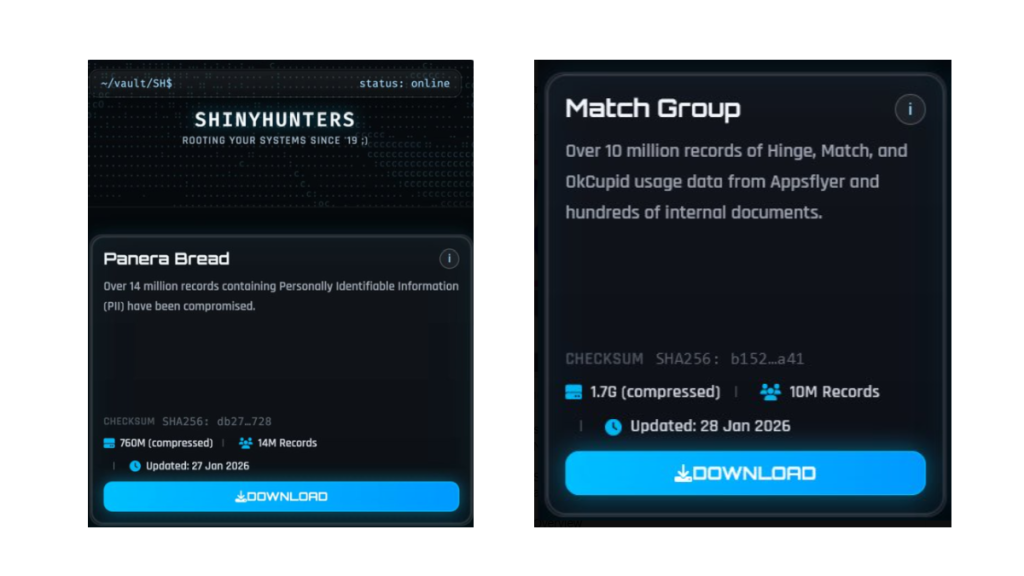

Many ad blockers already support pattern-based matching to stop ads and trackers. We asked a different question: what if we could use similar techniques to catch scam and phishing attempts before we know the specific URL is malicious?

Better yet, what if we did it without relying on the new DNR APIs?

So, we built a new pattern-matching system focused specifically on scam and phishing behavior, supporting:

- Full regex-based URL matching

- Full XPath and querySelector support

- Matching against any content on the page

- Favicon spoof detection
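A pattern rule of this kind can be pictured as a small set of optional conditions that must all hold for a page. The sketch below is an assumption about how such a matcher could be structured, not Browser Guard's actual engine: `PageSnapshot`, `PatternRule`, `matchesRule`, and `matchingRules` are hypothetical names, and the page is modeled as a plain object so the example runs without a real DOM. Favicon checks and XPath are left out to keep it short.

```typescript
// Minimal view of a page. In a content script, hasElement could wrap
// document.querySelector(selector) !== null.
interface PageSnapshot {
  url: string;
  text: string;
  hasElement: (selector: string) => boolean;
}

// Hypothetical rule format: every condition that is present must match.
// Patterns should not use the "g" flag, because RegExp.test is stateful with it.
interface PatternRule {
  name: string;
  urlPattern?: RegExp;
  selector?: string;
  textPattern?: RegExp;
}

function matchesRule(rule: PatternRule, page: PageSnapshot): boolean {
  if (rule.urlPattern && !rule.urlPattern.test(page.url)) return false;
  if (rule.selector && !page.hasElement(rule.selector)) return false;
  if (rule.textPattern && !rule.textPattern.test(page.text)) return false;
  return true;
}

// Return the names of all rules that match the page.
function matchingRules(rules: PatternRule[], page: PageSnapshot): string[] {
  return rules.filter((rule) => matchesRule(rule, page)).map((rule) => rule.name);
}

// Illustrative rule: a login-style URL, a password field, and urgent wording.
const exampleRules: PatternRule[] = [
  {
    name: "urgent-login-form",
    urlPattern: /\/login/i,
    selector: "input[type=password]",
    textPattern: /verify your account/i,
  },
];
```

Because the rules are plain data, a matcher like this can pick up new rules without any change to the code that evaluates them.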

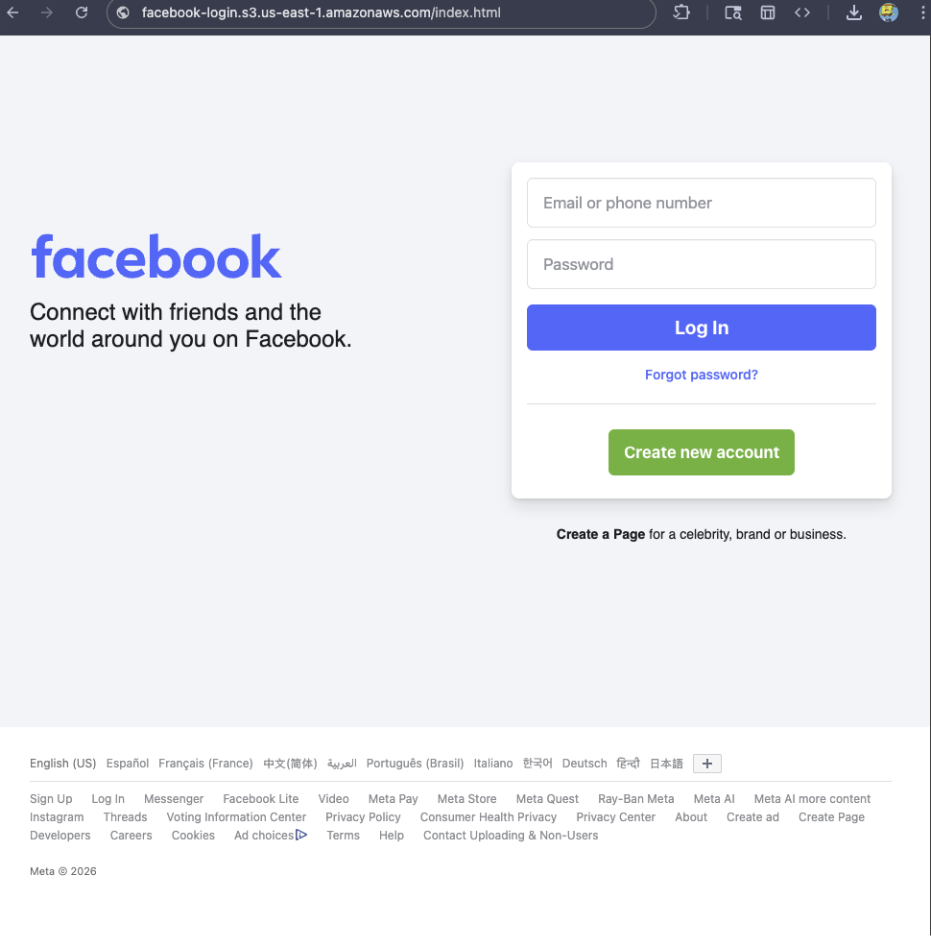

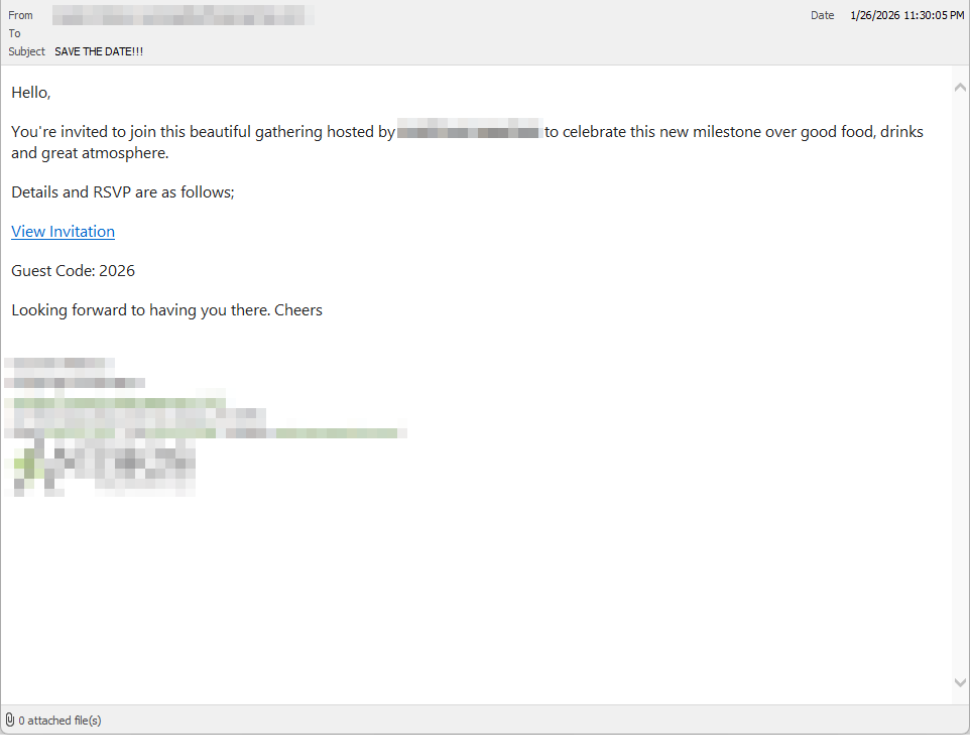

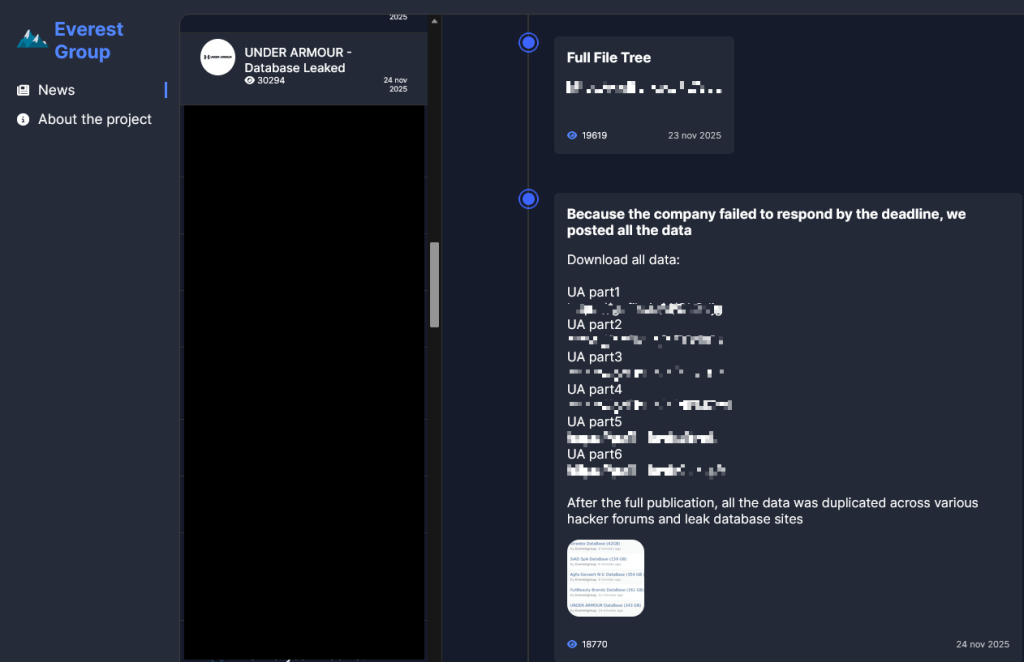

For example, if a site is hosted on Amazon S3, contains a password-input field, and uses a homoglyph (a look-alike character) in the URL to trick users into thinking they are logging in to Facebook, Browser Guard can detect that combination, even if we’ve never seen the URL before.
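The combination described above can be sketched as three independent signals that must all be present. Everything in this block is an assumption for illustration: the tiny `CONFUSABLES` table, the S3 hostname pattern, and the names `foldLookalikes`, `impersonatedBrand`, and `looksLikePhish` are hypothetical, and the sketch assumes the URL has already been decoded to its Unicode form (browsers usually expose punycode hostnames and percent-encoded paths).

```typescript
// Tiny, illustrative look-alike table (Cyrillic and Greek letters -> ASCII).
// A real detector would need a far larger table.
const CONFUSABLES: Record<string, string> = {
  "\u0430": "a", // Cyrillic a
  "\u0435": "e", // Cyrillic ie
  "\u043e": "o", // Cyrillic o
  "\u0441": "c", // Cyrillic es
  "\u0440": "p", // Cyrillic er
  "\u03bf": "o", // Greek omicron
};

// Replace known look-alike characters with their ASCII counterparts.
function foldLookalikes(text: string): string {
  return text
    .toLowerCase()
    .split("")
    .map((ch) => CONFUSABLES[ch] ?? ch)
    .join("");
}

// Return the brand that only appears once look-alikes are folded, if any.
function impersonatedBrand(decodedUrl: string, brands: string[]): string | null {
  const plain = decodedUrl.toLowerCase();
  const folded = foldLookalikes(decodedUrl);
  if (folded === plain) return null; // no look-alike characters present
  for (const brand of brands) {
    if (folded.includes(brand) && !plain.includes(brand)) return brand;
  }
  return null;
}

interface PageSignals {
  hostname: string;
  decodedUrl: string;
  hasPasswordField: boolean;
}

// Flag the page only when all three signals line up.
function looksLikePhish(page: PageSignals, brands: string[]): boolean {
  const onS3 = /(^|\.)s3[.-]([a-z0-9-]+\.)*amazonaws\.com$/i.test(page.hostname);
  return onS3 && page.hasPasswordField && impersonatedBrand(page.decodedUrl, brands) !== null;
}
```

None of the signals is damning alone (plenty of legitimate sites use S3 or have password fields), which is why the sketch requires them together.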

Why this matters more now

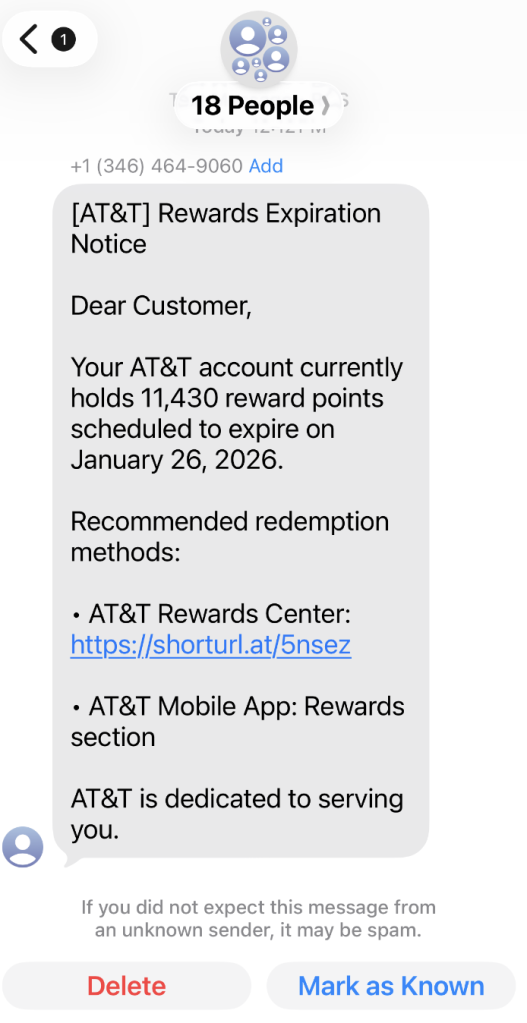

With AI, attackers can create near-perfect duplicates of websites more easily than ever. And did you spot the homoglyph in the URL? Nope, neither did I!

That’s why we designed this system so we can update its rules every 30 minutes, instead of waiting for full extension updates.
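The refresh logic behind an update cycle like this can be very small. The sketch below assumes a hypothetical `RuleBundle` with a numeric version and shows only the two decisions involved: whether the local copy is old enough to refresh, and whether a downloaded bundle should replace it. How the bundle is fetched and scheduled (for example with a periodic alarm in the extension's background worker) is left out, and none of these names come from Browser Guard itself.

```typescript
// Interval taken from the article; everything else here is an assumption.
const REFRESH_INTERVAL_MINUTES = 30;

// Hypothetical shape of a downloadable rule bundle.
interface RuleBundle {
  version: number;
  rules: string[];
}

// True when at least intervalMinutes have passed since the last fetch.
function isStale(
  lastFetchedMs: number,
  nowMs: number,
  intervalMinutes: number = REFRESH_INTERVAL_MINUTES
): boolean {
  return nowMs - lastFetchedMs >= intervalMinutes * 60 * 1000;
}

// Keep whichever bundle has the higher version, so an older download
// can never overwrite newer rules.
function pickNewer(current: RuleBundle, fetched: RuleBundle): RuleBundle {
  return fetched.version > current.version ? fetched : current;
}
```

Because the rules travel as data rather than as part of the extension package, swapping in a newer bundle does not require a store review or a reinstall.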

But I still see static blocking rules in Browser Guard

That’s true—for now.

We’ve found a temporary workaround that lets us support all the rules that we had before. However, we had to remove some of the more advanced logic that used to sit on top of them.

For example, we can’t use these large datasets to block subframe requests, only main frame requests. Nor can we stack multiple logic layers together; blocking is limited to simple matches (regex, domains and URLs).
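To show what "simple matches" on main frame requests means in practice, here is a minimal sketch of a domain lookup that ignores everything except top-level navigation. It illustrates the constraint only and is not the workaround itself; the `ResourceType` union, the `shouldBlock` name, and the example domains are assumptions.

```typescript
type ResourceType = "main_frame" | "sub_frame" | "script" | "image";

// Hypothetical check: block only top-level navigations whose hostname,
// or one of its parent domains, is on the blocklist.
function shouldBlock(
  hostname: string,
  resourceType: ResourceType,
  blockedDomains: Set<string>
): boolean {
  if (resourceType !== "main_frame") return false; // subframes are out of reach

  const labels = hostname.toLowerCase().split(".");
  // Check "a.b.example", then "b.example"; stop before the bare top-level label.
  const last = Math.max(1, labels.length - 1);
  for (let i = 0; i < last; i++) {
    if (blockedDomains.has(labels.slice(i).join("."))) return true;
  }
  return false;
}
```

There is no room in a lookup like this for extra context, such as which page embedded the request or what the page contains, which is the layered logic the static rules had to give up.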

Those limits are a big reason we’re investing more heavily in pattern-based and heuristic protection.

Pure heuristics

From day one, Browser Guard has used heuristics to detect scams and phishing: it monitors what a page does and flags behavior that matches known suspicious activity.

For example, some scam pages deliberately break your browser’s back button by abusing history.replaceState, then trick you into calling the scammer’s “computer helpline.” Others try to convince you to run malicious commands on your computer.

Browser Guard can detect these behaviors and warn you before you fall for them.
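One way to picture a behavioral heuristic is as a rate check on History API calls. The sketch below is an assumption about how such a check could work, not Browser Guard's actual detection: the `HistoryAbuseDetector` class and its thresholds are hypothetical, and a real content script would call `record` from a wrapper around methods such as `history.replaceState`.

```typescript
// Flags a page that rewrites its history more often than a normal site would.
class HistoryAbuseDetector {
  private timestamps: number[] = [];
  private readonly maxCalls: number;
  private readonly windowMs: number;

  constructor(maxCalls: number, windowMs: number) {
    this.maxCalls = maxCalls;
    this.windowMs = windowMs;
  }

  // Record one History API call at time nowMs (milliseconds).
  // Returns true when more than maxCalls happened within the last windowMs.
  record(nowMs: number): boolean {
    this.timestamps.push(nowMs);
    const cutoff = nowMs - this.windowMs;
    this.timestamps = this.timestamps.filter((t) => t > cutoff);
    return this.timestamps.length > this.maxCalls;
  }
}

// Illustrative thresholds: more than 3 calls within one second looks abusive.
const detector = new HistoryAbuseDetector(3, 1000);
```

A check like this needs no URL list at all, which is why it can catch a scam page the first time it appears.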

What’s next?

Did someone say AI?

You’ve probably seen Scam Guard in other Malwarebytes products. We’re currently working on a version tailored specifically for Browser Guard. More soon!

Final thoughts

While Manifest v3 introduced meaningful improvements to browser security, it also created real challenges for security tools like Browser Guard.

Rather than scaling back, the Browser Guard team rebuilt our approach from the ground up, focusing on behavior, patterns, and faster response times. The result is protection that’s different under the hood, but just as committed to keeping you safe online.
