Fake apps, NFC skimming attacks, and other Android issues in 2026 | Kaspersky official blog

The year 2025 saw a record-breaking number of attacks on Android devices. Scammers are currently riding a few major waves: the hype surrounding AI apps, the urge to bypass site blocks or age checks, the hunt for a bargain on a new smartphone, the ubiquity of mobile banking, and, of course, the popularity of NFC. Let’s break down the primary threats of 2025–2026, and figure out how to keep your Android device safe in this new landscape.

Sideloading

Malicious installation packages (APK files) have always been the Final Boss among Android threats, despite Google’s multi-year efforts to fortify the OS. By using sideloading — installing an app via an APK file instead of grabbing it from the official store — users can install pretty much anything, including straight-up malware. And neither the rollout of Google Play Protect, nor the various permission restrictions for shady apps have managed to put a dent in the scale of the problem.

According to preliminary data from Kaspersky for 2025, the number of detected Android threats grew almost by half. In the third quarter alone, detections jumped by 38% compared to the second. In certain niches, like Trojan bankers, the growth was even more aggressive. In Russia alone, the notorious Mamont banker attacked 36 times more users than it did the previous year, while globally this entire category saw a nearly fourfold increase.

Today, bad actors primarily distribute malware via messaging apps by sliding malicious files into DMs and group chats. The installation file usually sports an enticing name (think “party_pics.jpg.apk” or “clearance_sale_catalog.apk”), accompanied by a message “helpfully” explaining how to install the package while bypassing the OS restrictions and security warnings.

Once a new device is infected, the malware often spams itself to everyone in the victim’s contact list.

Search engine spam and email campaigns are also trending, luring users to sites that look exactly like an official app store. There, they’re prompted to download the “latest helpful app”, such as an AI assistant. In reality, instead of an installation from an official app store, the user ends up downloading an APK package. A prime example of these tactics is the ClayRat Android Trojan, which uses a mix of all these techniques to target Russian users. It spreads through groups and fake websites, blasts itself to the victim’s contacts via SMS, and then proceeds to steal the victim’s chat logs and call history; it even goes as far as snapping photos of the owner using the front-facing camera. In just three months, over 600 distinct ClayRat builds have surfaced.

The scale of the disaster is so massive that Google even announced an upcoming ban on distributing apps from unknown developers starting in 2026. However, after a couple of months of pushback from the dev community, the company pivoted to a softer approach: unsigned apps will likely only be installable via some kind of superuser mode. As a result, we can expect scammers to simply update their how-to guides with instructions on how to toggle that mode on.

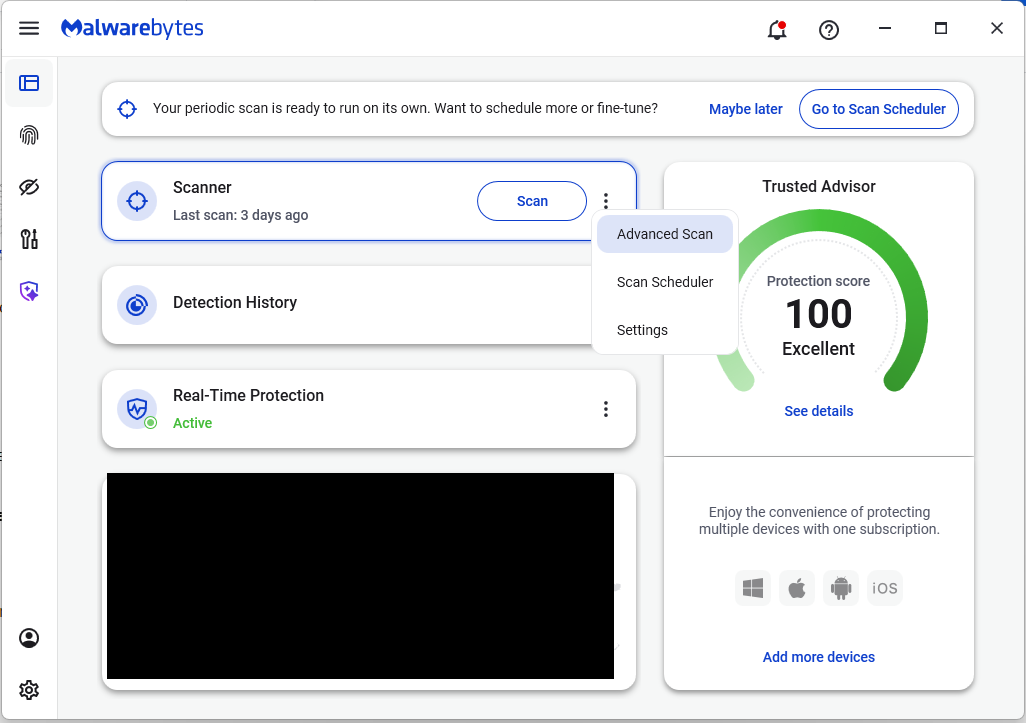

Kaspersky for Android will help you protect yourself from counterfeit and trojanized APK files. Unfortunately, due to Google’s decision, our Android security apps are currently unavailable on Google Play. We’ve previously provided detailed information on how to install our Android apps with a 100% guarantee of authenticity.

NFC relay attacks

Once an Android device is compromised, hackers can skip the middleman to steal the victim’s money directly thanks to the massive popularity of mobile payments. In the third quarter of 2025 alone, over 44 000 of these attacks were detected in Russia alone — a 50% jump from the previous quarter.

There are two main scams currently in play: direct and reverse NFC exploits.

Direct NFC relay is when a scammer contacts the victim via a messaging app and convinces them to download an app — supposedly to “verify their identity” with their bank. If the victim bites and installs it, they’re asked to tap their physical bank card against the back of their phone and enter their PIN. And just like that the card data is handed over to the criminals, who can then drain the account or go on a shopping spree.

Reverse NFC relay is a more elaborate scheme. The scammer sends a malicious APK and convinces the victim to set this new app as their primary contactless payment method. The app generates an NFC signal that ATMs recognize as the scammer’s card. The victim is then talked into going to an ATM with their infected phone to deposit cash into a “secure account”. In reality, those funds go straight into the scammer’s pocket.

We break both of these methods down in detail in our post, NFC skimming attacks.

NFC is also being leveraged to cash out cards after their details have been siphoned off through phishing websites. In this scenario, attackers attempt to link the stolen card to a mobile wallet on their own smartphone — a scheme we covered extensively in NFC carders hide behind Apple Pay and Google Wallet.

The stir over VPNs

In many parts of the world, getting onto certain websites isn’t as simple as it used to be. Some sites are blocked by local internet regulators or ISPs via court orders; others require users to pass an age verification check by showing ID and personal info. In some cases, sites block users from specific countries entirely just to avoid the headache of complying with local laws. Users are constantly trying to bypass these restrictions —and they often end up paying for it with their data or cash.

Many popular tools for bypassing blocks — especially free ones — effectively spy on their users. A recent audit revealed that over 20 popular services with a combined total of more than 700 million downloads actively track user location. They also tend to use sketchy encryption at best, which essentially leaves all user data out in the open for third parties to intercept.

Moreover, according to Google data from November 2025, there was a sharp spike in cases where malicious apps are being disguised as legitimate VPN services to trick unsuspecting users.

The permissions that this category of apps actually requires are a perfect match for intercepting data and manipulating website traffic. It’s also much easier for scammers to convince a victim to grant administrative privileges to an app responsible for internet access than it is for, say, a game or a music player. We should expect this scheme to only grow in popularity.

Trojan in a box

Even cautious users can fall victim to an infection if they succumb to the urge to save some cash. Throughout 2025, cases were reported worldwide where devices were already carrying a Trojan the moment they were unboxed. Typically, these were either smartphones from obscure manufacturers or knock-offs of famous brands purchased on online marketplaces. But the threat wasn’t limited to just phones; TV boxes, tablets, smart TVs, and even digital photo frames were all found to be at risk.

It’s still not entirely clear whether the infection happens right on the factory floor or somewhere along the supply chain between the factory and the buyer’s doorstep, but the device is already infected before the first time it’s turned on. Usually, it’s a sophisticated piece of malware called Triada, first identified by Kaspersky analysts back in 2016. It’s capable of injecting itself into every running app to intercept information: stealing access tokens and passwords for popular messaging apps and social media, hijacking SMS messages (confirmation codes: ouch!), redirecting users to ad-heavy sites, and even running a proxy directly on the phone so attackers can browse the web using the victim’s identity.

Technically, the Trojan is embedded right into the smartphone’s firmware, and the only way to kill it is to reflash the device with a clean OS. Usually, once you dig into the system, you’ll find that the device has far less RAM or storage than advertised — meaning the firmware is literally lying to the owner to sell a cheap hardware config as something more premium.

Another common pre-installed menace is the BADBOX 2.0 botnet, which also pulls double duty as a proxy and an ad-fraud engine. This one specializes in TV boxes and similar hardware.

How to go on using Android without losing your mind

Despite the growing list of threats, you can still use your Android smartphone safely! You just have to stick to some strict mobile hygiene rules.

- Install a comprehensive security solution on all your smartphones. We recommend Kaspersky for Android to protect against malware and phishing.

- Avoid sideloading apps via APKs whenever you can use an app store instead. A known app store — even a smaller one — is always a better bet than a random APK from some random website. If you have no other choice, download APK files only from official company websites, and double-check the URL of the page you’re on. If you aren’t 100% sure what the official site is, don’t just rely on a search engine; check official business directories or at least Wikipedia to verify the correct address.

- Read OS warnings carefully during installation. Don’t grant permissions if the requested rights or actions seem illogical or excessive for the app you’re installing.

- Under no circumstances should you install apps from links or attachments in chats, emails, or similar communication channels.

- Never tap your physical bank card against your phone. There is absolutely no legitimate scenario where doing this would be for your own benefit.

- Do not enter your card’s PIN into any app on your phone. A PIN should only ever be requested by an ATM or a physical payment terminal.

- When choosing a VPN, stick to paid ones from reputable companies.

- Buy smartphones and other electronics from official retailers, and steer clear of brands you’ve never heard of. Remember: if a deal seems too good to be true, it almost certainly is.

Other major Android threats from 2025: