Criminals are using AI website builders to clone major brands

AI tool Vercel was abused by cybercriminals to create a Malwarebytes lookalike website.

Cybercriminals no longer need design or coding skills to create a convincing fake brand site. All they need is a domain name and an AI website builder. In minutes, they can clone a site’s look and feel, plug in payment or credential-stealing flows, and start luring victims through search, social media, and spam.

One side effect of being an established and trusted brand is that you attract copycats who want a slice of that trust without doing any of the work. Cybercriminals have always known it is much easier to trick users by impersonating something they already recognize than by inventing something new—and developments in AI have made it trivial for scammers to create convincing fake sites.

Registering a plausible-looking domain is cheap and fast, especially through registrars and resellers that do little or no upfront vetting. Once attackers have a name that looks close enough to the real thing, they can use AI-powered tools to copy layouts, colors, and branding elements, and generate product pages, sign-up flows, and FAQs that look “on brand.”

A flood of fake “official” sites

Data from recent holiday seasons shows just how routine large-scale domain abuse has become.

Over a three‑month period leading into the 2025 shopping season, researchers observed more than 18,000 holiday‑themed domains with lures like “Christmas,” “Black Friday,” and “Flash Sale,” with at least 750 confirmed as malicious and many more still under investigation. In the same window, about 19,000 additional domains were registered explicitly to impersonate major retail brands, nearly 3,000 of which were already hosting phishing pages or fraudulent storefronts.

These sites are used for everything from credential harvesting and payment fraud to malware delivery disguised as “order trackers” or “security updates.”

Attackers then boost visibility using SEO poisoning, ad abuse, and comment spam, nudging their lookalike sites into search results and promoting them in social feeds right next to the legitimate ones. From a user’s perspective, especially on mobile without the hover function, that fake site can be only a typo or a tap away.

When the impersonation hits home

A recent example shows how low the barrier to entry has become.

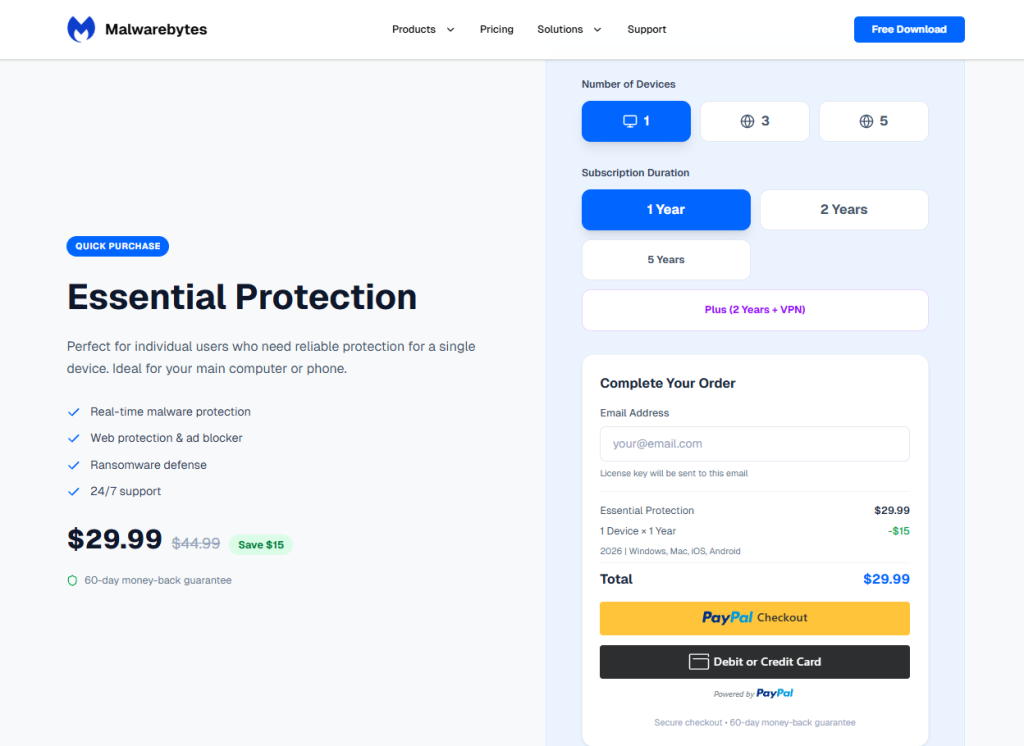

We were alerted to a site at installmalwarebytes[.]org that masqueraded from logo to layout as a genuine Malwarebytes site.

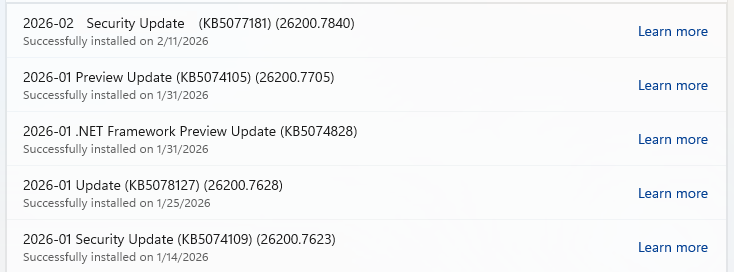

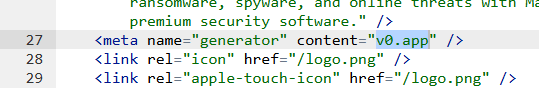

Close inspection revealed that the HTML carried a meta tag value pointing to v0 by Vercel, an AI-assisted app and website builder.

The tool lets users paste an existing URL into a prompt to automatically recreate its layout, styling, and structure—producing a near‑perfect clone of a site in very little time.

The history of the imposter domain tells an incremental evolution into abuse.

Registered in 2019, the site did not initially contain any Malwarebytes branding. In 2022, the operator began layering in Malwarebytes branding while publishing Indonesian‑language security content. This likely helped with search reputation while normalizing the brand look to visitors. Later, the site went blank, with no public archive records for 2025, only to resurface as a full-on clone backed by AI‑assisted tooling.

Traffic did not arrive by accident. Links to the site appeared in comment spam and injected links on unrelated websites, giving users the impression of organic references and driving them toward the fake download pages.

Payment flows were equally opaque. The fake site used PayPal for payments, but the integration hid the merchant’s name and logo from the user-facing confirmation screens, leaving only the buyer’s own details visible. That allowed the criminals to accept money while revealing as little about themselves as possible.

Behind the scenes, historical registration data pointed to an origin in India and to a hosting IP (209.99.40[.]222) associated with domain parking and other dubious uses rather than normal production hosting.

Combined with the AI‑powered cloning and the evasive payment configuration, it painted a picture of low‑effort, high‑confidence fraud.

AI website builders as force multipliers

The installmalwarebytes[.]org case is not an isolated misuse of AI‑assisted builders. It fits into a broader pattern of attackers using generative tools to create and host phishing sites at scale.

Threat intelligence teams have documented abuse of Vercel’s v0 platform to generate fully functional phishing pages that impersonate sign‑in portals for a variety of brands, including identity providers and cloud services, all from simple text prompts. Once the AI produces a clone, criminals can tweak a few links to point to their own credential‑stealing backends and go live in minutes.

Research into AI’s role in modern phishing shows that attackers are leaning heavily on website generators, writing assistants, and chatbots to streamline the entire kill chain—from crafting persuasive copy in multiple languages to spinning up responsive pages that render cleanly across devices. One analysis of AI‑assisted phishing campaigns found that roughly 40% of observed abuse involved website generation services, 30% involved AI writing tools, and about 11% leveraged chatbots, often in combination. This stack lets even low‑skilled actors produce professional-looking scams that used to require specialized skills or paid kits.

Growth first, guardrails later

The core problem is not that AI can build websites. It’s that the incentives around AI platform development are skewed. Vendors are under intense pressure to ship new capabilities, grow user bases, and capture market share, and that pressure often runs ahead of serious investment in abuse prevention.

As Malwarebytes General Manager Mark Beare put it:

“AI-powered website builders like Lovable and Vercel have dramatically lowered the barrier for launching polished sites in minutes. While these platforms include baseline security controls, their core focus is speed, ease of use, and growth—not preventing brand impersonation at scale. That imbalance creates an opportunity for bad actors to move faster than defenses, spinning up convincing fake brands before victims or companies can react.”

Site generators allow cloned branding of well‑known companies with no verification, publishing flows skip identity checks, and moderation either fails quietly or only reacts after an abuse report. Some builders let anyone spin up and publish a site without even confirming an email address, making it easy to burn through accounts as soon as one is flagged or taken down.

To be fair, there are signs that some providers are starting to respond by blocking specific phishing campaigns after disclosure or by adding limited brand-protection controls. But these are often reactive fixes applied after the damage is done.

Meanwhile, attackers can move to open‑source clones or lightly modified forks of the same tools hosted elsewhere, where there may be no meaningful content moderation at all.

In practice, the net effect is that AI companies benefit from the growth and experimentation that comes with permissive tooling, while the consequences is left to victims and defenders.

We have blocked the domain in our web protection module and requested a domain and vendor takedown.

How to stay safe

End users cannot fix misaligned AI incentives, but they can make life harder for brand impersonators. Even when a cloned website looks convincing, there are red flags to watch for:

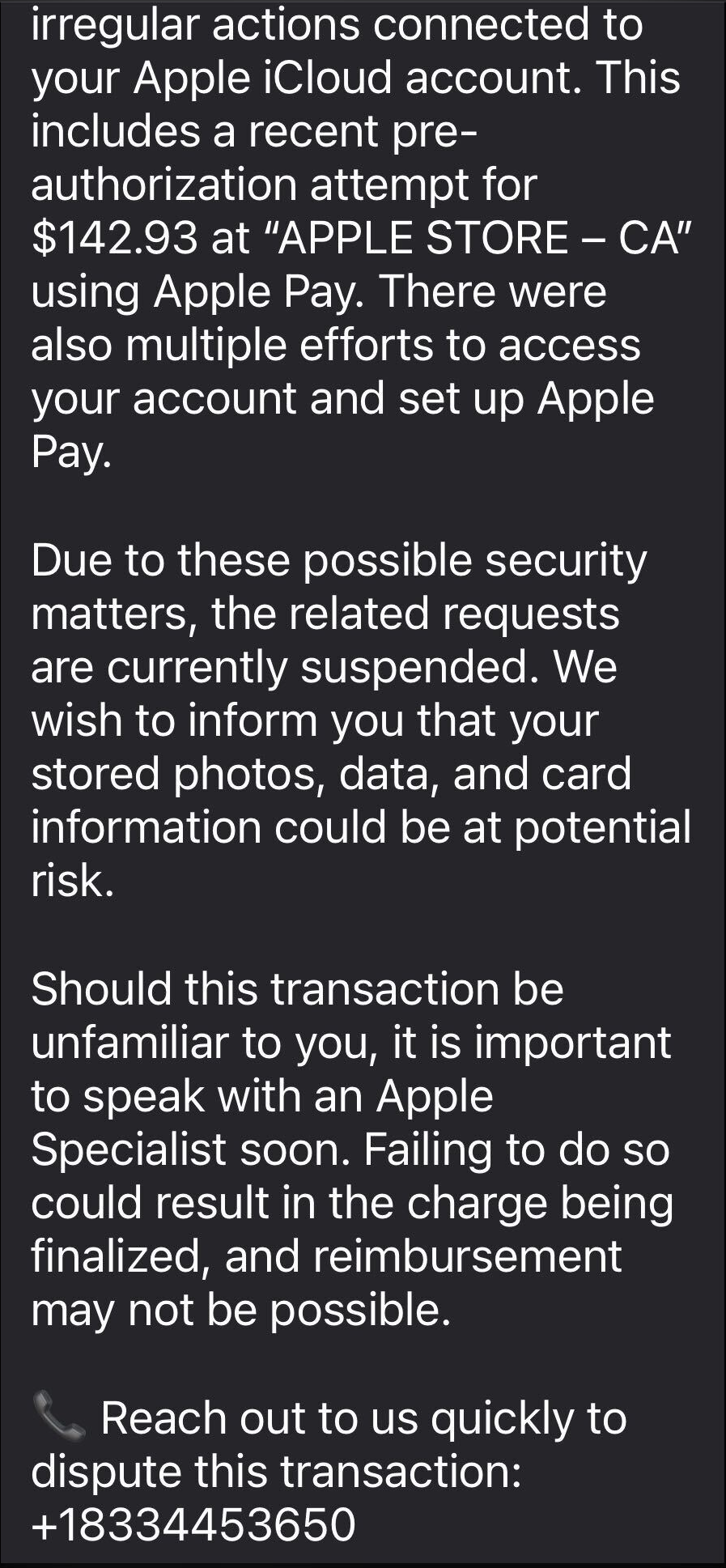

- Before completing any payment, always review the “Pay to” details or transaction summary. If no merchant is named, back out and treat the site as suspicious.

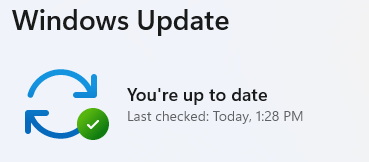

- Use an up-to-date, real-time anti-malware solution with a web protection module.

- Do not follow links posted in comments, on social media, or unsolicited emails to buy a product. Always follow a verified and trusted method to reach the vendor.

If you come across a fake Malwarebytes website, please let us know.

We don’t just report on threats—we help safeguard your entire digital identity

Cybersecurity risks should never spread beyond a headline. Protect your, and your family’s, personal information by using identity protection.