The company will use the investment to accelerate the adoption of its solution among financial institutions and digital businesses.

The post Monnai Raises $12 Million for Identity and Risk Data Infrastructure appeared first on SecurityWeek.

More than a decade after Aaron Swartz’s death, the United States is still living inside the contradiction that destroyed him.

Swartz believed that knowledge, especially publicly funded knowledge, should be freely accessible. Acting on that, he downloaded thousands of academic articles from the JSTOR archive with the intention of making them publicly available. For this, the federal government charged him with a felony and threatened decades in prison. After two years of prosecutorial pressure, Swartz died by suicide on Jan. 11, 2013.

The still-unresolved questions raised by his case have resurfaced in today’s debates over artificial intelligence, copyright and the ultimate control of knowledge.

At the time of Swartz’s prosecution, vast amounts of research were funded by taxpayers, conducted at public institutions and intended to advance public understanding. But access to that research was, and still is, locked behind expensive paywalls. People are unable to read work they helped fund without paying private journals and research websites.

Swartz considered this hoarding of knowledge to be neither accidental nor inevitable. It was the result of legal, economic and political choices. His actions challenged those choices directly. And for that, the government treated him as a criminal.

Today’s AI arms race involves a far more expansive, profit-driven form of information appropriation. The tech giants ingest vast amounts of copyrighted material: books, journalism, academic papers, art, music and personal writing. This data is scraped at industrial scale, often without consent, compensation or transparency, and then used to train large AI models.

AI companies then sell their proprietary systems, built on public and private knowledge, back to the people who funded it. But this time, the government’s response has been markedly different. There are no criminal prosecutions, no threats of decades-long prison sentences. Lawsuits proceed slowly, enforcement remains uncertain and policymakers signal caution, given AI’s perceived economic and strategic importance. Copyright infringement is reframed as an unfortunate but necessary step toward “innovation.”

Recent developments underscore this imbalance. In 2025, Anthropic reached a settlement with publishers over allegations that its AI systems were trained on copyrighted books without authorization. The agreement reportedly valued infringement at roughly $3,000 per book across an estimated 500,000 works, coming at a cost of over $1.5 billion. Plagiarism disputes between artists and accused infringers routinely settle for hundreds of thousands, or even millions, of dollars when prominent works are involved. Scholars estimate Anthropic avoided over $1 trillion in liability costs. For well-capitalized AI firms, such settlements are likely being factored as a predictable cost of doing business.

As AI becomes a larger part of America’s economy, one can see the writing on the wall. Judges will twist themselves into knots to justify an innovative technology premised on literally stealing the works of artists, poets, musicians, all of academia and the internet, and vast expanses of literature. But if Swartz’s actions were criminal, it is worth asking: What standard are we now applying to AI companies?

The question is not simply whether copyright law applies to AI. It is why the law appears to operate so differently depending on who is doing the extracting and for what purpose.

The stakes extend beyond copyright law or past injustices. They concern who controls the infrastructure of knowledge going forward and what that control means for democratic participation, accountability and public trust.

Systems trained on vast bodies of publicly funded research are increasingly becoming the primary way people learn about science, law, medicine and public policy. As search, synthesis and explanation are mediated through AI models, control over training data and infrastructure translates into control over what questions can be asked, what answers are surfaced, and whose expertise is treated as authoritative. If public knowledge is absorbed into proprietary systems that the public cannot inspect, audit or meaningfully challenge, then access to information is no longer governed by democratic norms but by corporate priorities.

Like the early internet, AI is often described as a democratizing force. But also like the internet, AI’s current trajectory suggests something closer to consolidation. Control over data, models and computational infrastructure is concentrated in the hands of a small number of powerful tech companies. They will decide who gets access to knowledge, under what conditions and at what price.

Swartz’s fight was not simply about access, but about whether knowledge should be governed by openness or corporate capture, and who that knowledge is ultimately for. He understood that access to knowledge is a prerequisite for democracy. A society cannot meaningfully debate policy, science or justice if information is locked away behind paywalls or controlled by proprietary algorithms. If we allow AI companies to profit from mass appropriation while claiming immunity, we are choosing a future in which access to knowledge is governed by corporate power rather than democratic values.

How we treat knowledge—who may access it, who may profit from it and who is punished for sharing it—has become a test of our democratic commitments. We should be honest about what those choices say about us.

This essay was written with J. B. Branch, and originally appeared in the San Francisco Chronicle.

We've known that social engineering would get AI wings. Now, at the beginning of 2026, we are learning just how high those wings can soar.

The post Cyber Insights 2026: Social Engineering appeared first on SecurityWeek.

Vibe coding generates a curate’s egg program: good in parts, but the bad parts affect the whole program.

The post Vibe Coding Tested: AI Agents Nail SQLi but Fail Miserably on Security Controls appeared first on SecurityWeek.

Researchers found a method to steal data which bypasses Microsoft Copilot’s built-in safety mechanisms.

The attack flow, called Reprompt, abuses how Microsoft Copilot handled URL parameters in order to hijack a user’s existing Copilot Personal session.

Copilot is an AI assistant which connects to a personal account and is integrated into Windows, the Edge browser, and various consumer applications.

The issue was fixed in Microsoft’s January Patch Tuesday update, and there is no evidence of in‑the‑wild exploitation so far. Still, it once again shows how risky it can be to trust AI assistants at this point in time.

Reprompt hides a malicious prompt in the q parameter of an otherwise legitimate Copilot URL. When the page loads, Copilot auto‑executes that prompt, allowing an attacker to run actions in the victim’s authenticated session after just a single click on a phishing link.

In other words, attackers can hide secret instructions inside the web address of a Copilot link, in a place most users never look. Copilot then runs those hidden instructions as if the user had typed them.

Because Copilot accepts prompts via a q URL parameter and executes them automatically, a phishing email can lure a user into clicking a legitimate-looking Copilot link while silently injecting attacker-controlled instructions into a live Copilot session.
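As a rough illustration of the mechanism described above, the sketch below shows how a mail filter or link checker might surface a prompt hidden in the q parameter before a user clicks. The host list and the function name `prefilled_prompt` are assumptions made for this example and are not taken from the research or from any Microsoft tooling.

```python
from typing import Optional
from urllib.parse import urlsplit, parse_qs

# Assumption: hostnames treated as AI-assistant links; adjust for your environment.
ASSISTANT_HOSTS = {"copilot.microsoft.com"}


def prefilled_prompt(url: str) -> Optional[str]:
    """Return the prompt carried in the q parameter of an assistant link, or None."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    if host not in ASSISTANT_HOSTS:
        return None
    # parse_qs percent-decodes values and drops empty ones by default.
    values = parse_qs(parts.query).get("q", [])
    return values[0] if values else None


if __name__ == "__main__":
    link = "https://copilot.microsoft.com/?q=summarize%20my%20files"
    print(prefilled_prompt(link))
```

A check like this only makes the hidden text visible for review. It does not judge whether the prompt is malicious, and it is no substitute for the vendor patch.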

What makes Reprompt stand out from other, similar prompt injection attacks is that it requires no user-entered prompts, no installed plugins, and no enabled connectors.

The basis of the Reprompt attack is amazingly simple. Although Copilot enforces safeguards to prevent direct data leaks, these protections only apply to the initial request. The attackers were able to bypass these guardrails by simply instructing Copilot to repeat each action twice.

Working from there, the researchers noted:

“Once the first prompt is executed, the attacker’s server issues follow‑up instructions based on prior responses and forms an ongoing chain of requests. This approach hides the real intent from both the user and client-side monitoring tools, making detection extremely difficult.”

You can stay safe from the Reprompt attack specifically by installing the January 2026 Patch Tuesday updates.

If available, use Microsoft 365 Copilot for work data, as it benefits from Purview auditing, tenant‑level data loss prevention (DLP), and admin restrictions that were not available to Copilot Personal in the research case. DLP rules look for sensitive data such as credit card numbers, ID numbers, and health data, and can block, warn, or log when someone tries to send or store it in risky ways (email, OneDrive, Teams, Power Platform connectors, and more).
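To make the idea of a DLP rule more concrete, here is a minimal sketch of one common technique: find candidate card numbers with a pattern, confirm them with the Luhn checksum, and return the configured action. This is not how Purview implements its rules; the names `dlp_action` and `luhn_valid` and the action strings are assumptions for illustration.

```python
import re

# Candidate card numbers: 13 to 19 digits, optionally separated by spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum: double every second digit from the right, sum, check mod 10."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def dlp_action(text: str, policy: str = "block") -> str:
    """Return the policy action ("block", "warn" or "log") if a card number is found."""
    for match in CARD_CANDIDATE.finditer(text):
        digits = re.sub(r"[ -]", "", match.group())
        if luhn_valid(digits):
            return policy
    return "allow"


if __name__ == "__main__":
    # 4111 1111 1111 1111 is a widely used test number that passes the Luhn check.
    print(dlp_action("pay with 4111 1111 1111 1111"))
```

The checksum step cuts false positives from arbitrary digit runs such as order numbers, which is why pattern matching alone is rarely used on its own.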

Don’t click on unsolicited links before verifying with the (trusted) source whether they are safe.

Reportedly, Microsoft is testing a new policy that allows IT administrators to uninstall the AI-powered Copilot digital assistant on managed devices.

Malwarebytes users can disable Copilot for their personal machines under Tools > Privacy, where you can toggle Disable Windows Copilot to on (blue).

In general, be aware that using AI assistants still poses privacy risks. As long as there are ways for assistants to automatically ingest untrusted input (such as URL parameters, page text, metadata, and comments) and merge it into hidden system prompts or instructions without strong separation or filtering, users remain at risk of leaking private information.

So when using any AI assistant that can be driven via links, browser automation, or external content, it is reasonable to assume “Reprompt‑style” issues are at least possible and should be taken into consideration.


isVerified provides Android and iOS mobile applications designed to protect enterprise communications.

The post isVerified Emerges From Stealth With Voice Deepfake Detection Apps appeared first on SecurityWeek.

The attack bypassed Copilot’s data leak protections and allowed for session exfiltration even after the Copilot chat was closed.

The post New ‘Reprompt’ Attack Silently Siphons Microsoft Copilot Data appeared first on SecurityWeek.

In 2025, cybersecurity researchers discovered several open databases belonging to various AI image-generation tools. This fact alone makes you wonder just how much AI startups care about the privacy and security of their users’ data. But the nature of the content in these databases is far more alarming.

A large number of generated pictures in these databases were images of women in lingerie or fully nude. Some were clearly created from children’s photos, or intended to make adult women appear younger (and undressed). Finally, the most disturbing part: some pornographic images were generated from completely innocent photos of real people — likely taken from social media.

In this post, we’re talking about what sextortion is, and why AI tools mean anyone can become a victim. We detail the contents of these open databases, and give you advice on how to avoid becoming a victim of AI-era sextortion.

Online sexual extortion has become so common it’s earned its own global name: sextortion (a portmanteau of sex and extortion). We’ve already detailed its various types in our post, Fifty shades of sextortion. To recap, this form of blackmail involves threatening to publish intimate images or videos to coerce the victim into taking certain actions, or to extort money from them.

Previously, victims of sextortion were typically adult industry workers, or individuals who’d shared intimate content with an untrustworthy person.

However, the rapid advancement of artificial intelligence, particularly text-to-image technology, has fundamentally changed the game. Now, literally anyone who’s posted their most innocent photos publicly can become a victim of sextortion. This is because generative AI makes it possible to quickly, easily, and convincingly undress people in any digital image, or add a generated nude body to someone’s head in a matter of seconds.

Of course, this kind of fakery was possible before AI, but it required long hours of meticulous Photoshop work. Now, all you need is to describe the desired result in words.

To make matters worse, many generative AI services don’t bother much with protecting the content they’ve been used to create. As mentioned earlier, last year saw researchers discover at least three publicly accessible databases belonging to these services. This means the generated nudes within them were available not just to the user who’d created them, but to anyone on the internet.

In October 2025, cybersecurity researcher Jeremiah Fowler uncovered an open database containing over a million AI-generated images and videos. According to the researcher, the overwhelming majority of this content was pornographic in nature. The database wasn’t encrypted or password-protected — meaning any internet user could access it.

The database’s name and watermarks on some images led Fowler to believe its source was the U.S.-based company SocialBook, which offers influencer and digital marketing services. The company’s website also provides access to tools for generating images and content using AI.

However, further analysis revealed that SocialBook itself wasn’t directly generating this content. Links within the service’s interface led to third-party products — the AI services MagicEdit and DreamPal — which were the tools used to create the images. These tools allowed users to generate pictures from text descriptions, edit uploaded photos, and perform various visual manipulations, including creating explicit content and face-swapping.

The leak was linked to these specific tools, and the database contained the product of their work, including AI-generated and AI-edited images. A portion of the images led the researcher to suspect they’d been uploaded to the AI as references for creating provocative imagery.

Fowler states that roughly 10,000 photos were being added to the database every single day. SocialBook denies any connection to the database. After the researcher informed the company of the leak, several pages on the SocialBook website that had previously mentioned MagicEdit and DreamPal became inaccessible and began returning errors.

Both services, MagicEdit and DreamPal, were initially marketed as tools for interactive, user-driven visual experimentation with images and art characters. Unfortunately, a significant portion of these capabilities was directly linked to creating sexualized content.

For example, MagicEdit offered a tool for AI-powered virtual clothing changes, as well as a set of styles that made images of women more revealing after processing — such as replacing everyday clothes with swimwear or lingerie. Its promotional materials promised to turn an ordinary look into a sexy one in seconds.

DreamPal, for its part, was initially positioned as an AI-powered role-playing chat, and was even more explicit about its adult-oriented positioning. The site offered to create an ideal AI girlfriend, with certain pages directly referencing erotic content. The FAQ also noted that filters for explicit content in chats were disabled so as not to limit users’ most intimate fantasies.

Both services have suspended operations. At the time of writing, the DreamPal website returned an error, while MagicEdit seemed available again. Their apps were removed from both the App Store and Google Play.

Jeremiah Fowler says that earlier in 2025 he discovered two more open databases containing AI-generated images. One belonged to the South Korean site GenNomis and contained 95,000 entries, a substantial portion of which were images of “undressed” people. Among other things, the database included images with child versions of celebrities: American singers Ariana Grande and Beyoncé, and reality TV star Kim Kardashian.

In light of incidents like these, it’s clear that the risks associated with sextortion are no longer confined to private messaging or the exchange of intimate content. In the era of generative AI, even ordinary photos, when posted publicly, can be used to create compromising content.

This problem is especially relevant for women, but men shouldn’t get too comfortable either: the popular blackmail scheme of “I hacked your computer and used the webcam to make videos of you browsing adult sites” could reach a whole new level of persuasion thanks to AI tools for generating photos and videos.

Therefore, protecting your privacy on social media and controlling what data about you is publicly available become key measures for safeguarding both your reputation and peace of mind. To prevent your photos from being used to create questionable AI-generated content, we recommend making all your social media profiles as private as possible — after all, they could be the source of images for AI-generated nudes.

We’ve already published multiple detailed guides on how to reduce your digital footprint online or even remove your data from the internet, how to stop data brokers from compiling dossiers on you, and how to protect yourself from intimate image abuse.

Additionally, we have a dedicated service, Privacy Checker — perfect for anyone who wants a quick but systematic approach to privacy settings everywhere possible. It compiles step-by-step guides for securing accounts on social media and online services across all major platforms.

And to ensure the safety and privacy of your child’s data, Kaspersky Safe Kids can help: it allows parents to monitor which social media their child spends time on. From there, you can help them adjust privacy settings on their accounts so their posted photos aren’t used to create inappropriate content. Explore our guide to children’s online safety together, and if your child dreams of becoming a popular blogger, discuss our step-by-step cybersecurity guide for wannabe bloggers with them.

One of the most influential publications on real-world AI system design is Anthropic’s guide, Building Effective Agents. Its core message is simple:

Effective AI requires structure first, adaptability second.

Anthropic emphasizes that AI agents work best when:

These principles ensure accuracy, avoid hallucinations and keep investigations reproducible, all critical requirements for cybersecurity.

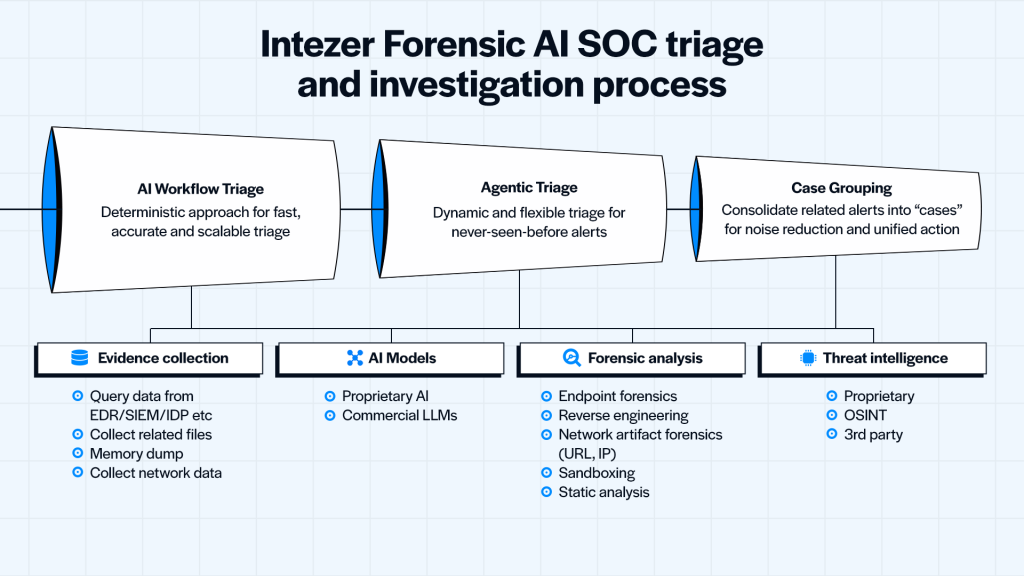

Intezer Forensic AI SOC is built on exactly this philosophy. Our platform uses a dual-mode design with Intezer AI Workflow and AI Agent, completely aligning with Anthropic’s best practices to deliver fast, scalable and highly accurate investigations across a broad range of alerts, all while keeping analysts in the loop.

Here is how Intezer implements Anthropic’s best practices for agents.

Anthropic advises that AI systems should begin with deterministic workflows instead of free-form reasoning. In cybersecurity, this is essential for accuracy, auditability, trust and scalability (when handling huge volumes of alerts).

Intezer’s AI Workflow mode is a structured triage process designed by security experts and executed with strict consistency. It applies AI only at key decision points, not as the driver of the entire investigation.

This approach provides:

Most alerts, especially well-defined ones, are fully resolved at this stage, giving SOCs broad alert coverage at low cost.

Anthropic states that agents should activate only when the structured workflow reaches uncertainty, and only after they inherit the full context. Intezer follows this exactly.

AI Agent mode activates only when the Workflow cannot reach a high-confidence verdict.

At that point, the agent:

This ensures the agent is guided, not free-floating, and its decisions remain grounded in evidence, not guesswork.

The result is deeper investigation where it matters, without unnecessary cost.
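The escalation pattern described above can be sketched roughly as follows: a deterministic workflow runs first, and an agent is called only when the workflow's confidence falls below a threshold, receiving the workflow's evidence as context. Every name here (`Verdict`, `triage`, `demo_workflow`, `demo_agent`) and the 0.9 threshold are hypothetical; this is not Intezer's implementation.

```python
from dataclasses import dataclass, field
from typing import Callable, List

CONFIDENCE_THRESHOLD = 0.9  # hypothetical cut-off for a "high-confidence" verdict


@dataclass
class Verdict:
    label: str
    confidence: float
    evidence: List[str] = field(default_factory=list)
    decided_by: str = "workflow"


def triage(alert: dict,
           workflow: Callable[[dict], Verdict],
           agent: Callable[[dict, Verdict], Verdict],
           threshold: float = CONFIDENCE_THRESHOLD) -> Verdict:
    """Run the deterministic workflow; escalate to the agent only on low confidence."""
    verdict = workflow(alert)
    if verdict.confidence >= threshold:
        return verdict
    # The agent inherits the workflow's verdict and evidence rather than starting cold.
    return agent(alert, verdict)


def demo_workflow(alert: dict) -> Verdict:
    """Stand-in for fixed expert triage steps."""
    if alert.get("hash_known_bad"):
        return Verdict("malicious", 0.99, ["hash matched known-bad list"])
    return Verdict("suspicious", 0.5, ["no deterministic match"])


def demo_agent(alert: dict, prior: Verdict) -> Verdict:
    """Stand-in for an adaptive agent that extends the inherited evidence."""
    evidence = prior.evidence + ["agent reviewed process tree"]
    return Verdict("benign", 0.92, evidence, decided_by="agent")


if __name__ == "__main__":
    print(triage({"hash_known_bad": True}, demo_workflow, demo_agent).decided_by)
    print(triage({}, demo_workflow, demo_agent).decided_by)
```

Keeping the evidence list on the verdict also gives analysts a single trail to review, whichever path produced the conclusion.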

Intezer keeps human analysts at the center so they can review and override conclusions, and trace every decision made by Intezer. Of course, all evidence and reasoning is grounded in forensic data and is fully transparent and explainable for beginners and advanced analysts alike.

This aligns with Anthropic’s principle that humans remain final decision-makers, especially in high-stakes domains like cybersecurity.

Intezer’s adherence to Anthropic’s best practices produces measurable outcomes across the three most important SOC metrics: accuracy, coverage, and speed, while also reducing cost.

Intezer’s approach of combining deterministic forensics + adaptive AI = best-in-class verdict quality.

This hybrid approach dramatically reduces false positives and prevents premature conclusions.

Because AI Workflows handle the bulk of alerts inexpensively and AI Agents only run when needed, heavy and expensive reasoning calls are minimized.

This frees SOCs from cherry-picking which alerts to ingest, allowing them to triage and investigate them all.

This is crucial for:

You get broad alert coverage without inflating compute costs.

The result is a SOC where every alert is investigated quickly, consistently, and with forensic depth.

| Anthropic best practice | How Intezer implements it |
| --- | --- |
| Start with deterministic workflows | AI Workflow handles structured triage with predefined expert steps |
| Activate agents only when needed | AI Agent triggers only when confidence is insufficient |
| Give agents full context | Agent inherits the entire Workflow evidence set |
| Control tool usage | Agent selects tools based on evidence, not speculation |
| Maintain human-in-the-loop | Analysts can verify, guide, and override conclusions |
| Prioritize safety and reproducibility | Every action is logged, justified, and traceable |

Anthropic’s framework for building effective agents is now influencing industries far beyond general AI research. Intezer Forensic AI SOC might be one of the strongest real-world implementations of these practices in cybersecurity.

By combining:

Intezer is able to deliver fast, accurate, and scalable triage that transforms SOC operations.

Learn more about how you can transform your SOC today.

The post Building effective AI for the SOC: How Intezer Forensic AI SOC follows Anthropic’s best practices appeared first on Intezer.

In September 2025, security researchers at Anthropic uncovered something unprecedented: an AI-orchestrated espionage campaign where attackers used Claude to perform 80–90% of a sophisticated hacking operation. The AI handled everything from reconnaissance to payload development, demonstrating that artificial intelligence has fundamentally changed the threat landscape, not just as a tool for defenders, but as both

The post Thinking Like an Attacker: How Attackers Target AI Systems appeared first on OffSec.