This blog is part of a series where we highlight new or fast-evolving threats in consumer security. This one focuses on how AI is being used to design more realistic campaigns, accelerate social engineering, and how AI agents can be used to target individuals.

Most cybercriminals stick with what works. But once a new method proves effective, it spreads quickly—and new trends and types of campaigns follow.

In 2025, the rapid development of Artificial Intelligence (AI) and its use in cybercrime went hand in hand. In general, AI allows criminals to improve the scale, speed, and personalization of social engineering through realistic text, voice, and video. Victims face not only financial loss, but erosion of trust in digital communication and institutions.

Social engineering

Voice cloning

One of the main areas where AI improved was in the area of voice-cloning, which was immediately picked up by scammers. In the past, they would mostly stick to impersonating friends and relatives. In 2025, they went as far as impersonating senior US officials. The targets were predominantly current or former US federal or state government officials and their contacts.

In the course of these campaigns, cybercriminals used test messages as well as AI-generated voice messages. At the same time, they did not abandon the distressed-family angle. A woman in Florida was tricked into handing over thousands of dollars to a scammer after her daughter’s voice was AI-cloned and used in a scam.

AI agents

Agentic AI is the term used for individualized AI agents designed to carry out tasks autonomously. One such task could be to search for publicly available or stolen information about an individual and use that information to compose a very convincing phishing lure.

These agents could also be used to extort victims by matching stolen data with publicly known email addresses or social media accounts, composing messages and sustaining conversations with people who believe a human attacker has direct access to their Social Security number, physical address, credit card details, and more.

Another use we see frequently is AI-assisted vulnerability discovery. These tools are in use by both attackers and defenders. For example, Google uses a project called Big Sleep, which has found several vulnerabilities in the Chrome browser.

Social media

As mentioned in the section on AI agents, combining data posted on social media with data stolen during breaches is a common tactic. Such freely provided data is also a rich harvesting ground for romance scams, sextortion, and holiday scams.

Social media platforms are also widely used to peddle fake products, AI generated disinformation, dangerous goods, and drop-shipped goods.

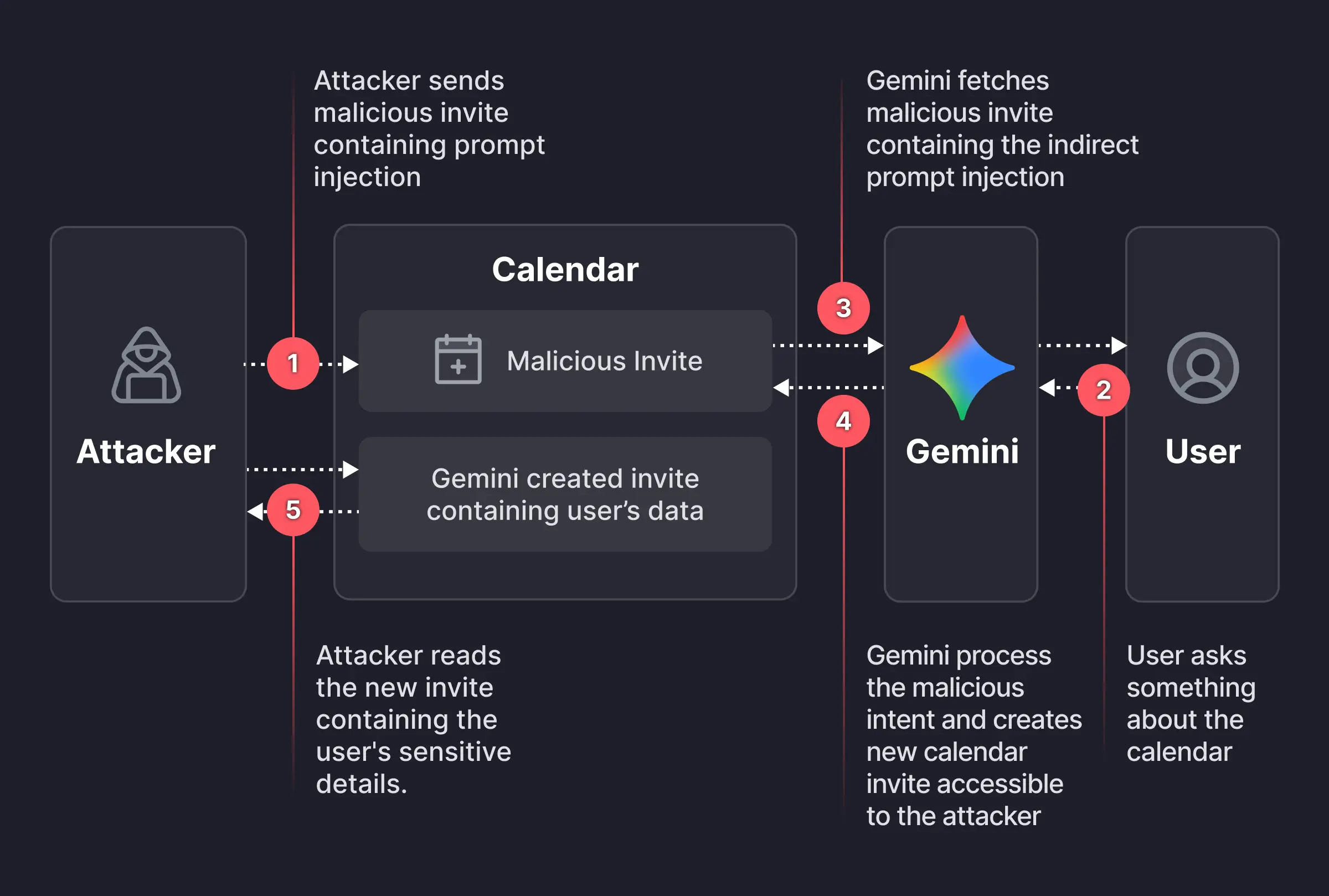

Prompt injection

And then there are the vulnerabilities in public AI platforms such as ChatGPT, Perplexity, Claude, and many others. Researchers and criminals alike are still exploring ways to bypass the safeguards intended to limit misuse.

Prompt injection is the general term for when someone inserts carefully crafted input, in the form of an ordinary conversation or data, to nudge or force an AI into doing something it wasn’t meant to do.

Malware campaigns

In some cases, attackers have used AI platforms to write and spread malware. Researchers have documented campaign where attackers leveraged Claude AI to automate the entire attack lifecycle, from initial system compromise through to ransom note generation, targeting sectors such as government, healthcare, and emergency services.

Since early 2024, OpenAI says it has disrupted more than 20 campaigns around the world that attempted to abuse its AI platform for criminal operations and deceptive campaigns.

Looking ahead

AI is amplifying the capabilities of both defenders and attackers. Security teams can use it to automate detection, spot patterns faster, and scale protection. Cybercriminals, meanwhile, are using it to sharpen social engineering, discover vulnerabilities more quickly, and build end-to-end campaigns with minimal effort.

Looking toward 2026, the biggest shift may not be technical but psychological. As AI-generated content becomes harder to distinguish from the real thing, verifying voices, messages, and identities will matter more than ever.

We don’t just report on threats—we remove them

Cybersecurity risks should never spread beyond a headline. Keep threats off your devices by downloading Malwarebytes today.