Vulnerability Allows Hackers to Hijack OpenClaw AI Assistant

OpenClaw (aka Moltbot and Clawdbot) is vulnerable to one-click remote code execution attacks.

The post Vulnerability Allows Hackers to Hijack OpenClaw AI Assistant appeared first on SecurityWeek.

French prosecutors raid X offices, summon Musk over Grok deepfakes

Malicious MoltBot skills used to push password-stealing malware

How does cyberthreat attribution help in practice?

Not every cybersecurity practitioner thinks it’s worth the effort to figure out exactly who’s pulling the strings behind the malware hitting their company. The typical incident investigation algorithm goes something like this: analyst finds a suspicious file → if the antivirus didn’t catch it, puts it into a sandbox to test → confirms some malicious activity → adds the hash to the blocklist → goes for coffee break. These are the go-to steps for many cybersecurity professionals — especially when they’re swamped with alerts, or don’t quite have the forensic skills to unravel a complex attack thread by thread. However, when dealing with a targeted attack, this approach is a one-way ticket to disaster — and here’s why.

If an attacker is playing for keeps, they rarely stick to a single attack vector. There’s a good chance the malicious file has already played its part in a multi-stage attack and is now all but useless to the attacker. Meanwhile, the adversary has already dug deep into corporate infrastructure and is busy operating with an entirely different set of tools. To clear the threat for good, the security team has to uncover and neutralize the entire attack chain.

But how can this be done quickly and effectively before the attackers manage to do some real damage? One way is to dive deep into the context. By analyzing a single file, an expert can identify exactly who’s attacking his company, quickly find out which other tools and tactics that specific group employs, and then sweep infrastructure for any related threats. There are plenty of threat intelligence tools out there for this, but I’ll show you how it works using our Kaspersky Threat Intelligence Portal.

A practical example of why attribution matters

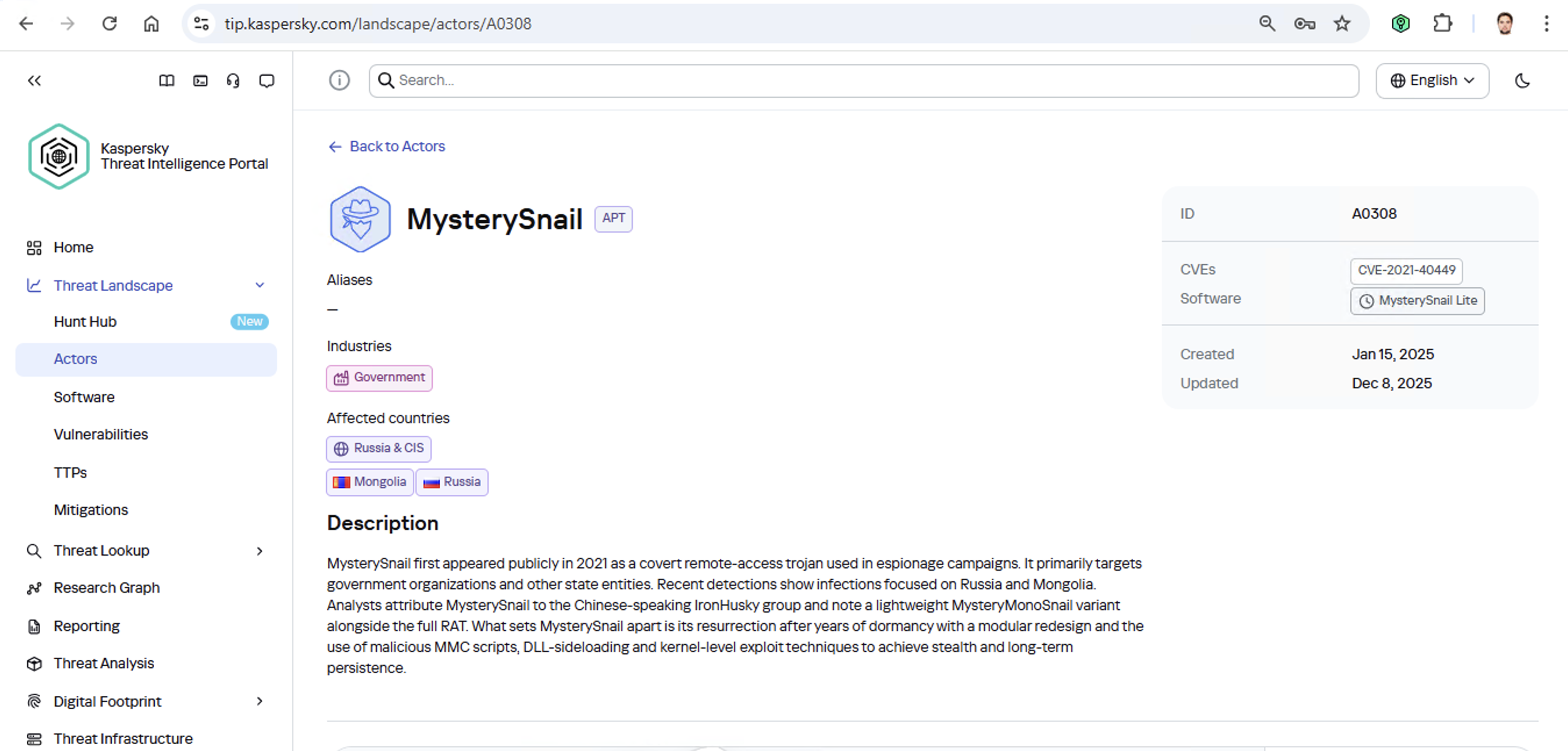

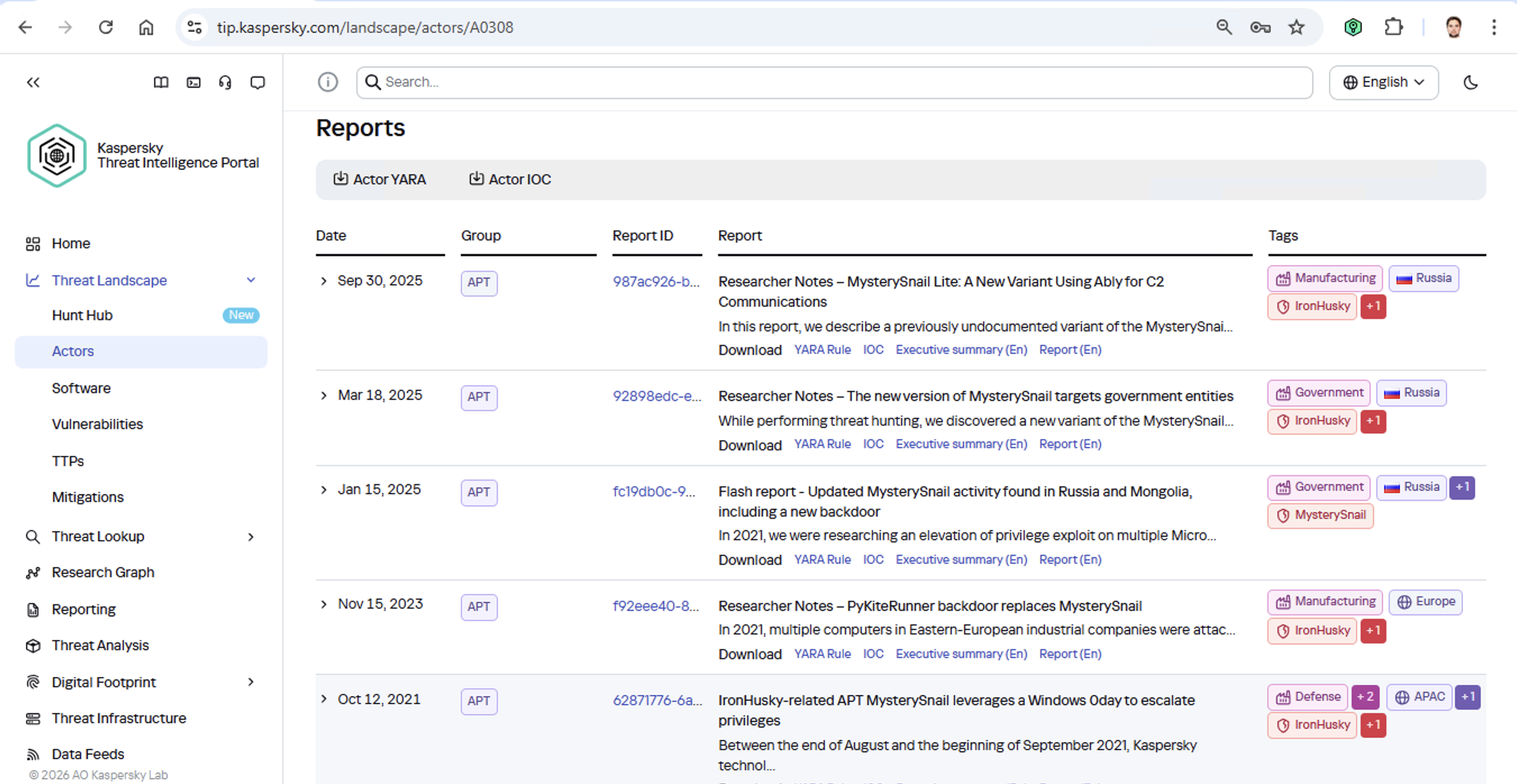

Let’s say we upload a piece of malware we’ve discovered to a threat intelligence portal, and learn that it’s usually being used by, say, the MysterySnail group. What does that actually tell us? Let’s look at the available intel:

First off, these attackers target government institutions in both Russia and Mongolia. They’re a Chinese-speaking group that typically focuses on espionage. According to their profile, they establish a foothold in infrastructure and lay low until they find something worth stealing. We also know that they typically exploit the vulnerability CVE-2021-40449. What kind of vulnerability is that?

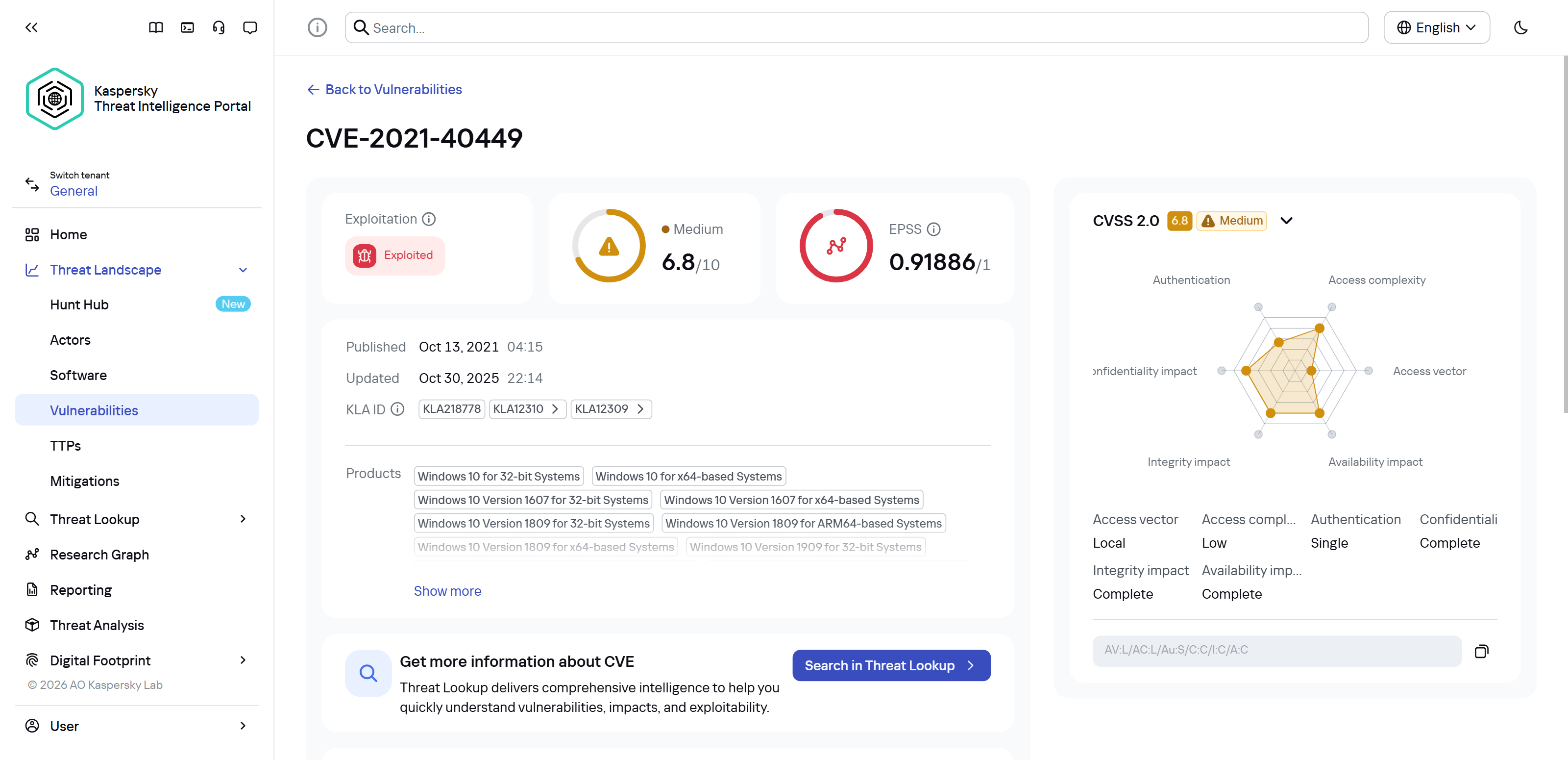

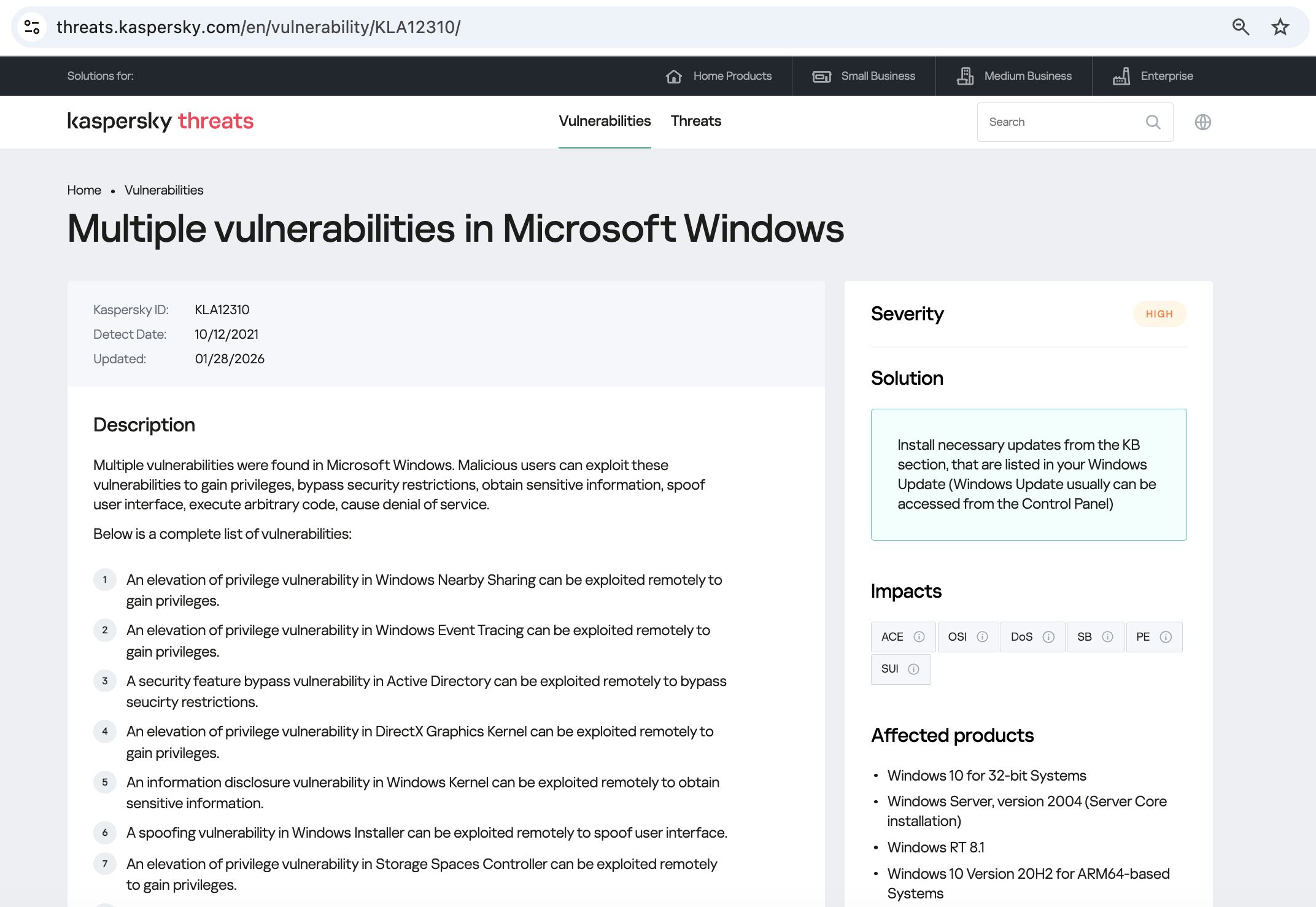

As we can see, it’s a privilege escalation vulnerability — meaning it’s used after hackers have already infiltrated the infrastructure. This vulnerability has a high severity rating and is heavily exploited in the wild. So what software is actually vulnerable?

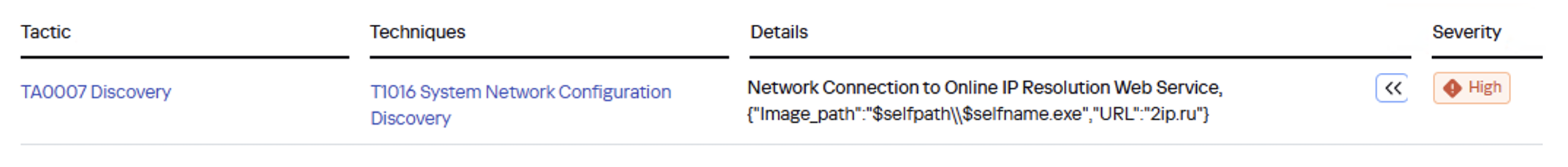

Got it: Microsoft Windows. Time to double-check if the patch that fixes this hole has actually been installed. Alright, besides the vulnerability, what else do we know about the hackers? It turns out they have a peculiar way of checking network configurations: they connect to the public site 2ip.ru.

So it makes sense to add a correlation rule to SIEM to flag that kind of behavior.
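
As a rough illustration of what such a rule checks, here is a minimal Python sketch. It assumes proxy log records are available as dictionaries with hypothetical `src_host` and `url` keys; a real SIEM would express this in its own rule language and log schema.

```python
from urllib.parse import urlsplit

# Assumption: records are dicts with "src_host" and "url" keys (hypothetical schema).
WATCHED_DOMAINS = {"2ip.ru"}

def is_watched(hostname: str) -> bool:
    """True if hostname is a watched domain or a subdomain of one."""
    hostname = hostname.lower().rstrip(".")
    return any(hostname == d or hostname.endswith("." + d) for d in WATCHED_DOMAINS)

def flag_ip_check_lookups(records):
    """Return (src_host, hostname) pairs for requests to watched domains."""
    hits = []
    for rec in records:
        hostname = urlsplit(rec["url"]).hostname or ""
        if is_watched(hostname):
            hits.append((rec["src_host"], hostname))
    return hits

sample = [
    {"src_host": "srv-db-01", "url": "https://2ip.ru/"},
    {"src_host": "wks-114", "url": "https://example.com/2ip.ru"},
]
print(flag_ip_check_lookups(sample))
```

Matching on the parsed hostname rather than on a substring avoids flagging unrelated URLs that merely contain the string. A hit from a server is usually more interesting than one from a user workstation.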

Now’s the time to read up on this group in more detail and gather additional indicators of compromise (IoCs) for SIEM monitoring, as well as ready-to-use YARA rules (structured text descriptions used to identify malware). This will help us track down all the tentacles of this kraken that might have already crept into corporate infrastructure, and ensure we can intercept them quickly if they try to break in again.

Kaspersky Threat Intelligence Portal provides a ton of additional reports on MysterySnail attacks, each complete with a list of IoCs and YARA rules. These YARA rules can be used to scan all endpoints, and those IoCs can be added into SIEM for constant monitoring. While we’re at it, let’s check the reports to see how these attackers handle data exfiltration, and what kind of data they’re usually hunting for. Now we can actually take steps to head off the attack.
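
To make the endpoint sweep concrete, here is a minimal sketch of the simplest kind of IoC matching: comparing SHA-256 file hashes against a list taken from a report. The function names and the idea of walking a directory tree are assumptions made for illustration; YARA scanning and SIEM ingestion would be handled by dedicated tooling.

```python
import hashlib
from pathlib import Path

def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def sweep(root: Path, ioc_hashes: set[str]) -> list[Path]:
    """Return files under root whose SHA-256 appears in ioc_hashes."""
    matches = []
    for path in root.rglob("*"):
        try:
            if path.is_file() and sha256_of(path) in ioc_hashes:
                matches.append(path)
        except OSError:
            continue  # unreadable file: skipped in this sketch
    return sorted(matches)
```

Hash matching only finds exact copies of known samples, which is why the YARA rules and behavioral indicators from the reports matter as well.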

And just like that, MysterySnail, the infrastructure is now tuned to find you and respond immediately. No more spying for you!

Malware attribution methods

Before diving into specific methods, we need to make one thing clear: for attribution to actually work, the threat intelligence provided needs a massive knowledge base of the tactics, techniques, and procedures (TTPs) used by threat actors. The scope and quality of these databases can vary wildly among vendors. In our case, before even building our tool, we spent years tracking known groups across various campaigns and logging their TTPs, and we continue to actively update that database today.

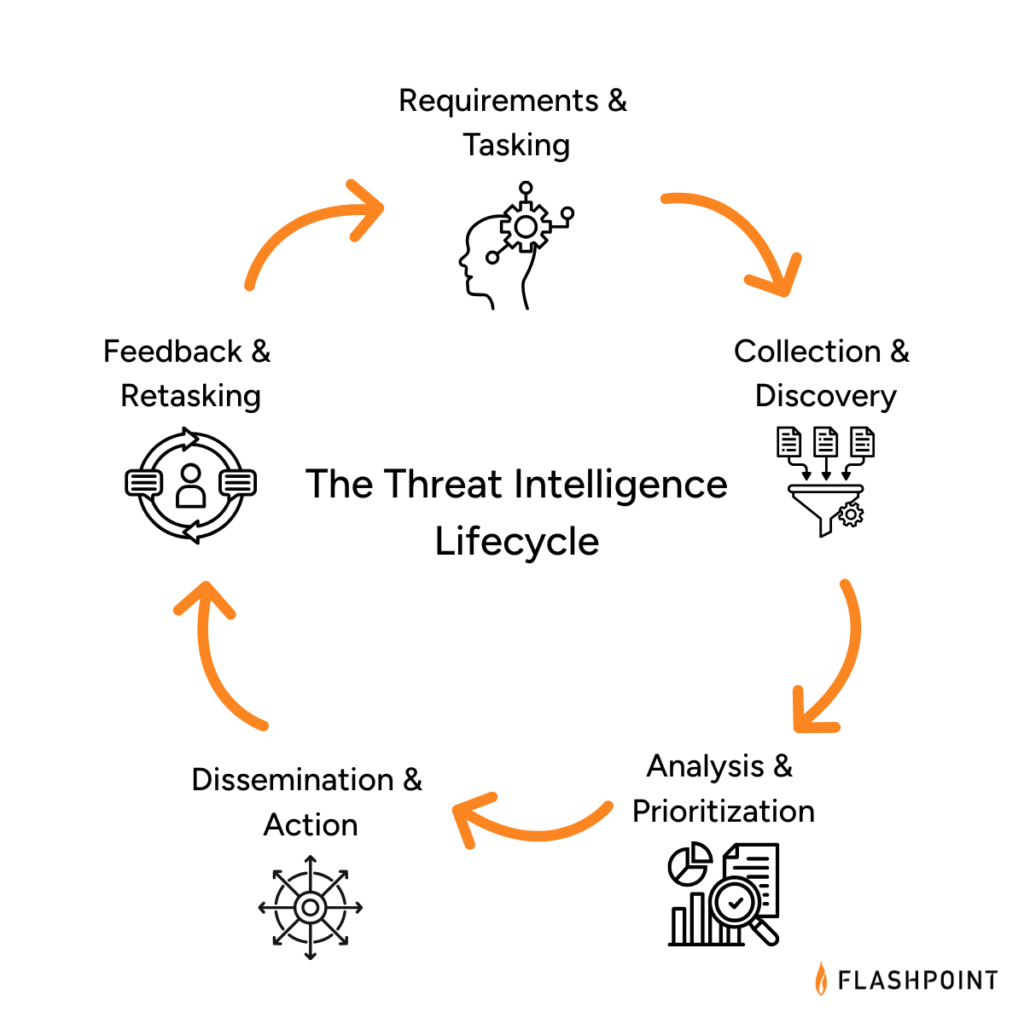

With a TTP database in place, the following attribution methods can be implemented:

- Dynamic attribution: identifying TTPs through the dynamic analysis of specific files, then cross-referencing that set of TTPs against those of known hacking groups

- Technical attribution: finding code overlaps between specific files and code fragments known to be used by specific hacking groups in their malware

Dynamic attribution

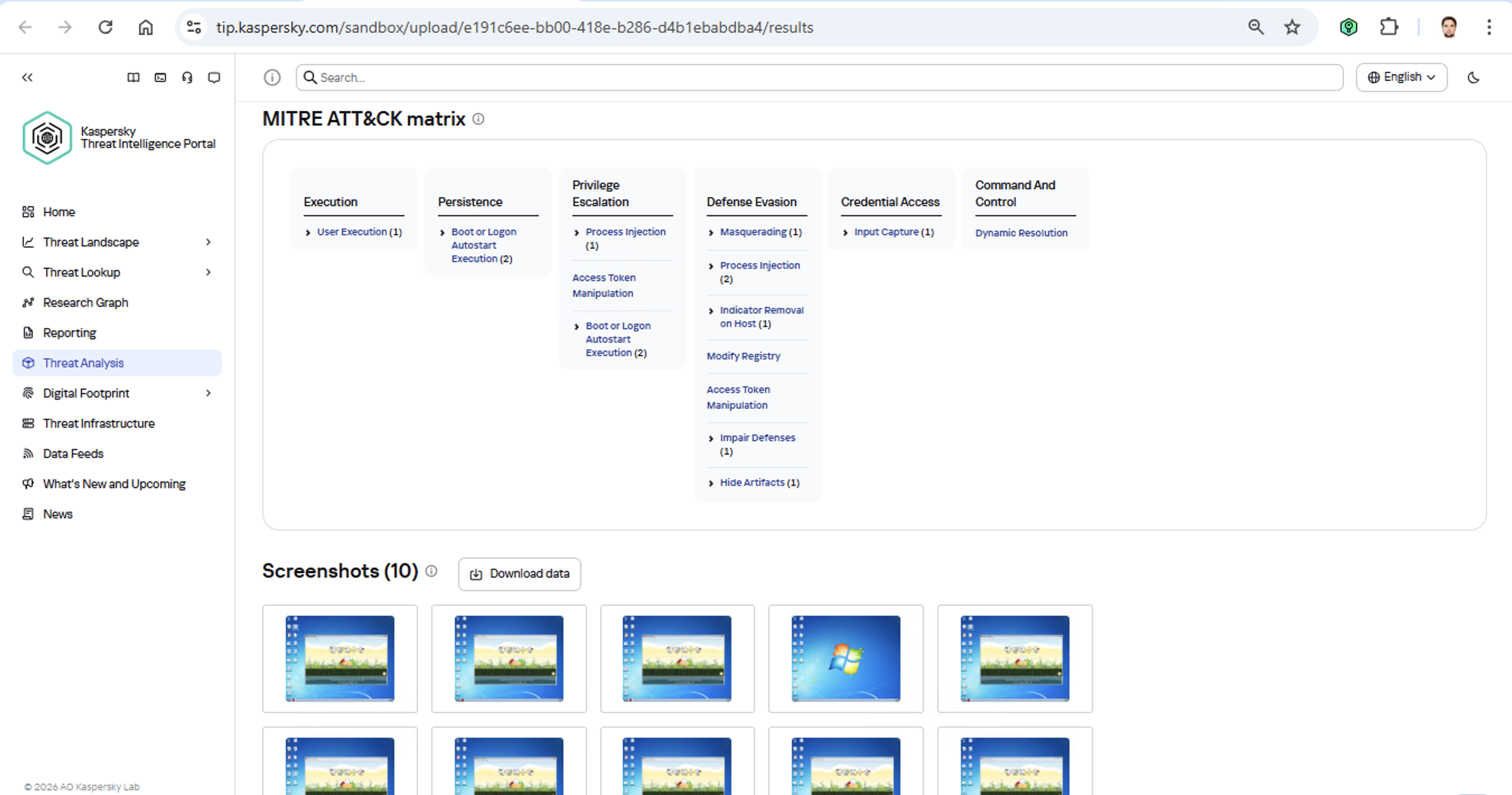

Identifying TTPs during dynamic analysis is relatively straightforward to implement; in fact, this functionality has been a staple of every modern sandbox for a long time. Naturally, all of our sandboxes also identify TTPs during the dynamic analysis of a malware sample.

The core of this method lies in categorizing malware activity using the MITRE ATT&CK framework. A sandbox report typically contains a list of detected TTPs. While this is highly useful data, it’s not enough for full-blown attribution to a specific group. Trying to identify the perpetrators of an attack using just this method is a lot like the ancient Indian parable of the blind men and the elephant: blindfolded folks touch different parts of an elephant and try to deduce what’s in front of them from just that. The one touching the trunk thinks it’s a python; the one touching the side is sure it’s a wall, and so on.
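
A small sketch shows why a TTP list alone tends to be ambiguous. It scores the overlap between observed techniques and per-group profiles using Jaccard similarity. The technique IDs are real MITRE ATT&CK identifiers, but the group profiles are invented for illustration, and Jaccard similarity is just one simple choice of metric.

```python
# Hypothetical group profiles: the technique-to-group mapping is invented.
GROUP_TTPS = {
    "group_a": {"T1059", "T1068", "T1082", "T1016"},
    "group_b": {"T1059", "T1082", "T1016", "T1566"},
    "group_c": {"T1486", "T1490"},
}

def jaccard(a: set, b: set) -> float:
    """Size of the intersection divided by size of the union."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def rank_groups(observed: set):
    """Rank groups by TTP overlap with the observed set, best first."""
    scores = {name: jaccard(observed, ttps) for name, ttps in GROUP_TTPS.items()}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

observed = {"T1059", "T1082", "T1016"}
for name, score in rank_groups(observed):
    print(f"{name}: {score:.2f}")
```

In this toy data, two groups tie for the top score because they share common discovery and execution techniques. That is the elephant problem in miniature.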

Technical attribution

The second attribution method is handled via static code analysis (though keep in mind that this type of attribution is always problematic). The core idea here is to cluster even slightly overlapping malware files based on specific unique characteristics. Before analysis can begin, the malware sample must be disassembled. The problem is that alongside the informative and useful bits, the recovered code contains a lot of noise. If the attribution algorithm takes this non-informative junk into account, any malware sample will end up looking similar to a great number of legitimate files, making quality attribution impossible. On the flip side, trying to only attribute malware based on the useful fragments but using a mathematically primitive method will only cause the false positive rate to go through the roof. Furthermore, any attribution result must be cross-checked for similarities with legitimate files — and the quality of that check usually depends heavily on the vendor’s technical capabilities.

Kaspersky’s approach to attribution

Our products leverage a unique database of malware associated with specific hacking groups, built over more than 25 years. On top of that, we use a patented attribution algorithm based on static analysis of disassembled code. This allows us to determine — with high precision, and even a specific probability percentage — how similar an analyzed file is to known samples from a particular group. This way, we can form a well-grounded verdict attributing the malware to a specific threat actor. The results are then cross-referenced against a database of billions of legitimate files to filter out false positives; if a match is found with any of them, the attribution verdict is adjusted accordingly. This approach is the backbone of the Kaspersky Threat Attribution Engine, which powers the threat attribution service on the Kaspersky Threat Intelligence Portal.
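
The general idea of comparing disassembled code while discounting fragments that also occur in legitimate files can be sketched in a few lines. This is not the patented algorithm described above. It is a deliberately simple stand-in that uses opcode n-grams, with made-up opcode sequences and hypothetical function names.

```python
def ngrams(opcodes, n=3):
    """Set of consecutive opcode n-grams in a sequence."""
    return {tuple(opcodes[i:i + n]) for i in range(len(opcodes) - n + 1)}

def similarity(sample, reference, legit_ngrams, n=3):
    """Share of the sample's distinctive n-grams also present in the reference.

    N-grams seen in legitimate code are discarded first, so common
    boilerplate does not inflate the score.
    """
    distinctive = ngrams(sample, n) - legit_ngrams
    if not distinctive:
        return 0.0
    return len(distinctive & ngrams(reference, n)) / len(distinctive)

# Toy opcode sequences (assumptions, not real disassembly).
legit = ["push", "mov", "sub", "call", "add", "pop", "ret"]
known = ["push", "mov", "sub", "xor", "rol", "xor", "add", "jnz", "ret"]
sample = ["push", "mov", "sub", "xor", "rol", "xor", "add", "cmp", "ret"]

legit_ngrams = ngrams(legit)
print(f"{similarity(sample, known, legit_ngrams):.2f}")
```

Here the shared function prologue is ignored because it also appears in the legitimate sequence, and the score reflects only the distinctive fragments. A production system needs a far larger legitimate corpus and a more robust statistical model to keep false positives down.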

OpenAI says you can trust ChatGPT answers, as it kicks off ads rollout preparation

OpenAI is retiring famous GPT-4o model, says GPT 5.2 is good enough

U.S. convicts ex-Google engineer for sending AI tech data to China

How China’s “Walled Garden” is Redefining the Cyber Threat Landscape

In our latest webinar, Flashpoint unpacks the architecture of the Chinese threat actor cyber ecosystem—a parallel offensive stack fueled by government mandates and commercialized hacker-for-hire industry.

For years, the global cybersecurity community has operated under the assumption that technical information was a matter of public record. Security research has always been openly discussed and shared through a culture of global transparency. Today, that reality has fundamentally shifted. Flashpoint is witnessing a growing opacity—a “Walled Garden”—around Chinese data. As a result, the competence of Chinese threat actors and APTs has reached an industrialized scale.

In Flashpoint’s recent on-demand webinar, “Mapping the Adversary: Inside the Chinese Pentesting Ecosystem,” our analysts explain how China’s state policies surrounding zero-day vulnerability research have effectively shut out the cyber communities that once provided a window into Chinese tradecraft. However, they haven’t disappeared. Rather, they have been absorbed by the state to develop a mature, self-sustaining offensive stack capable of targeting global infrastructure.

Understanding the Walled Garden: The Shift from Disclosure to Nationalization

The “Walled Garden” is a direct result of a Chinese regulatory turning point in 2021: the Regulations on the Management of Security Vulnerabilities (RMSV). While the gradual walling off of China’s data is the cumulative result of years of implementing regulatory and policy strategies, the 2021 RMSV marks a critical turning point that effectively nationalized China’s vulnerability research capabilities. Under the RMSV, any individual or organization in China that discovers a new flaw must report it to the Ministry of Industry and Information Technology (MIIT) within 48 hours. Crucially, researchers are prohibited from sharing technical details with third parties—especially foreign entities—or selling them before a patch is issued.

It is important to note that this mandate is not limited to Chinese-based software or hardware; it applies to any vulnerability discovered, as long as the discoverer is a Chinese-based organization or national. This effectively treats software vulnerabilities as a national strategic resource for China. By centralizing this data, the Chinese government ensures it has an early window into zero-day exploits before the global defensive community.

For defenders, this means that by the time a vulnerability is public, there is a high probability it has already been analyzed and potentially weaponized within China’s state-aligned apparatus.

The Indigenous Kill Chain: Reconnaissance Beyond Shodan

Flashpoint analysts have observed that within this Walled Garden, traditional Western reconnaissance tools are losing their effectiveness. Chinese threat actors are utilizing an indigenous suite of cyberspace search engines that create a dangerous information asymmetry, allowing them to peer at defender infrastructure while shielding their own domestic base from Western scrutiny.

While Shodan remains the go-to resource for security teams, Flashpoint has seen Chinese threat actors favor three IoT search engines that offer them a massive home-field advantage:

- FOFA: Specializes in deep fingerprinting for middleware and Chinese-specific signatures, often indexing dorks for new vulnerabilities weeks before they appear in the West.

- ZoomEye: Built for high-speed automation, offering APIs that integrate with AI systems to move from discovery to verified target in minutes.

- 360 Quake: Provides granular, real-time mapping through a CLI with an AI engine for complex asset portraits.

In the full session, we demonstrate exactly how Chinese operators use these tools to fuse reconnaissance and exploitation into a single, automated step—a capability most Western EDRs aren’t yet tuned to detect.

Building a State-Aligned Offensive Stack

Leveraging their knowledge of vulnerabilities and zero-day exploits, the illicit Chinese ecosystem is building tools designed to dismantle the specific technologies that power global corporate data centers and business hubs.

In the webinar, our analysts explain purpose-built cyber weapons designed to hunt VMware vCenter servers that support one-click shell uploads via vulnerabilities like Log4Shell. Beyond the initial exploit, Flashpoint highlights the rising use of Behinder (Ice Scorpion)—a sophisticated web shell management tool. Behinder has become a staple for Chinese operators because it encrypts command-and-control (C2) traffic, allowing attackers to evade conventional inspection and deep packet analytics.

Strengthen Your Defenses Against the Chinese Offensive Stack with Flashpoint

By understanding this “Walled Garden” architecture, defenders can move beyond generic signatures and begin to hunt for the specific TTPs—such as high-entropy C2 traffic and proprietary Chinese scanning patterns—that define the modern Chinese threat actor.
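
As a hedged illustration of what hunting for high-entropy traffic can mean in practice, the sketch below computes Shannon entropy over a payload and flags payloads that look close to random. The 7.0 bits-per-byte threshold and the minimum length are assumptions chosen for the example, not values from the webinar.

```python
import math
from collections import Counter

def shannon_entropy(data: bytes) -> float:
    """Shannon entropy in bits per byte, from 0.0 to 8.0."""
    if not data:
        return 0.0
    total = len(data)
    return -sum((c / total) * math.log2(c / total) for c in Counter(data).values())

def looks_encrypted(payload: bytes, threshold: float = 7.0, min_len: int = 256) -> bool:
    """Flag payloads that are long enough and close to uniformly random."""
    return len(payload) >= min_len and shannon_entropy(payload) >= threshold

print(looks_encrypted(bytes(range(256)) * 4))
print(looks_encrypted(b"GET /index.html HTTP/1.1\r\n" * 20))
```

Entropy alone is a weak signal, since compressed files and ordinary TLS are also high-entropy. It becomes useful when combined with context, such as an HTTP POST body to a web server path that normally carries plain text.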

How can Flashpoint help? Flashpoint’s cyber threat intelligence platform cuts through the generic feed overload and delivers unrivaled primary-source data, AI-powered analysis, and expert human context.

Watch the on-demand webinar to learn more, or request a demo today.

The post How China’s “Walled Garden” is Redefining the Cyber Threat Landscape appeared first on Flashpoint.

175,000 Exposed Ollama Hosts Could Enable LLM Abuse

Among them, 23,000 hosts were persistently responsible for the majority of activity observed over 293 days of scanning.

The post 175,000 Exposed Ollama Hosts Could Enable LLM Abuse appeared first on SecurityWeek.

Guidance from the Frontlines: Proactive Defense Against ShinyHunters-Branded Data Theft Targeting SaaS

Introduction

Mandiant is tracking a significant expansion and escalation in the operations of threat clusters associated with ShinyHunters-branded extortion. As detailed in our companion report, 'Vishing for Access: Tracking the Expansion of ShinyHunters-Branded SaaS Data Theft', these campaigns leverage evolved voice phishing (vishing) and victim-branded credential harvesting to successfully compromise single sign-on (SSO) credentials and enroll unauthorized devices into victim multi-factor authentication (MFA) solutions.

This activity is not the result of a security vulnerability in vendors' products or infrastructure. Instead, these intrusions rely on the effectiveness of social engineering to bypass identity controls and pivot into cloud-based software-as-a-service (SaaS) environments.

This post provides actionable hardening, logging, and detection recommendations to help organizations protect against these threats. Organizations responding to an active incident should focus on rapid containment steps, such as severing access to infrastructure environments, SaaS platforms, and the specific identity stores typically used for lateral movement and persistence. Long-term defense requires a transition toward phishing-resistant MFA, such as FIDO2 security keys or passkeys, which are more resistant to social engineering than push-based or SMS authentication.

Containment

Organizations responding to an active or suspected intrusion by these threat clusters should prioritize rapid containment to sever the attacker’s access to prevent further data exfiltration. Because these campaigns rely on valid credentials rather than malware, containment must prioritize the revocation of session tokens and the restriction of identity and access management operations.

Immediate Containment Actions

- Revoke active sessions: Identify and disable known compromised accounts and revoke all active session tokens and OAuth authorizations across IdP and SaaS platforms.

- Restrict password resets: Temporarily disable or heavily restrict public-facing self-service password reset portals to prevent further credential manipulation. Do not allow the use of self-service password reset for administrative accounts.

- Pause MFA registration: Temporarily disable the ability for users to register, enroll, or join new devices to the identity provider (IdP).

- Limit remote access: Restrict or temporarily disable remote access ingress points, such as VPNs or Virtual Desktop Infrastructure (VDI), especially from untrusted or non-compliant devices.

- Enforce device compliance: Restrict access to IdPs and SaaS applications so that authentication can only originate from organization-managed, compliant devices and known trusted egress locations.

- Implement 'shields up' procedures: Inform the service desk of heightened risk and shift to manual, high-assurance verification protocols for all account-related requests. In addition, remind technology operations staff not to accept any work direction via SMS messages from colleagues.

During periods of heightened threat activity, Mandiant recommends that organizations temporarily route all password and MFA resets through a rigorous manual identity verification protocol, such as the live video verification described in the Hardening section of this post. When appropriate, organizations should also communicate with end-users, HR partners, and other business units to stay on high-alert during the initial containment phase. Always report suspicious activity to internal IT and Security for further investigation.

1. Hardening

Defending against threat clusters associated with ShinyHunters-branded extortion begins with tightening manual, high-risk processes that attackers frequently exploit, particularly password resets, device enrollments, and MFA changes.

Help Desk Verification

Because these campaigns often target human-driven workflows through social engineering, vishing, and phishing, organizations should implement stronger, layered identity verification processes for support interactions, especially for requests involving account changes such as password resets or MFA modifications. Threat actors have also been known to impersonate third-party vendors to voice phish (vish) help desks and persuade staff to approve or install malicious SaaS application registrations.

As a temporary measure during heightened risk, organizations should require verification that includes the caller’s identity, a valid ID, and a visual confirmation that the caller and ID match.

To implement this, organizations should require help desk personnel to:

- Require a live video call where the user holds a physical government ID next to their face. The agent must visually verify the match.

- Confirm the name on the ID matches the employee’s corporate record.

- Require out-of-band approval from the user's known manager before processing the reset.

- Reject requests based solely on employee ID, SSN, or manager name. ShinyHunters possess this data from previous breaches and may use it to verify their identity.

- If the user calls the helpdesk for a password reset, never perform the reset without calling the user back at a known good phone number to prevent spoofing.

- If a live video call is not possible, require an alternative high-assurance path. It may be required for the user to come in person to verify their identity.

- Optionally, after a completed interaction, the help desk agent can send an email to the user’s manager indicating that the change is complete with a picture from the video call of the user who requested the change on camera.

Special Handling for Third-Party Vendor Requests

Mandiant has observed incidents where attackers impersonate support personnel from third-party vendors to gain access. In these situations, the standard verification principles may not be applicable.

Under no circumstances should the Help Desk move forward with allowing access. The agent must halt the request and follow this procedure:

- End the inbound call without providing any access or information

- Independently contact the company's designated account manager for that vendor using trusted, on-file contact information

- Require explicit verification from the account manager before proceeding with any request

End User Education

Organizations should educate end users on best practices, especially for situations where they are contacted directly without prior notice.

- Conduct internal vishing and phishing exercises to validate end user adoption of security best practices.

- Educate users that passwords should not be shared, regardless of who is asking for them.

- Encourage users to exercise extreme caution when asked to reset their own passwords and MFA, especially outside business hours.

- If they are unsure of the person or number they are being contacted by, have them cease all communications and contact a known support channel for guidance.

Identity & Access Management

Organizations should implement a layered series of controls to protect all types of identities. Access to cloud identity providers (IdPs), cloud consoles, SaaS applications, document and code repositories should be restricted since these platforms often become the control plane for privilege escalation, data access, and long-term persistence.

This can be achieved by:

- Limiting access to trusted egress points and physical locations

- Review and understand what “local accounts” exist within SaaS platforms:

  - Ensure any default username/passwords have been updated according to the organization’s password policy.

  - Limit the use of ‘local accounts’ that are not managed as part of the organization’s primary centralized IdP.

- Reducing the scope of non-human accounts (access keys, tokens, and non-human accounts)

  - Where applicable, organizations should implement network restrictions across non-human accounts.

  - Activity correlating to long-lived tokens (OAuth / API) associated with authorized / trusted applications should be monitored to detect abnormal activity.

- Limit access to organization resources from managed and compliant devices only. Across managed devices:

  - Implement device posture checks via the Identity Provider.

  - Block access from devices with prolonged inactivity.

  - Block end users' ability to enroll personal devices.

  - Where access from unmanaged devices is required, organizations should:

    - Limit non-managed devices to web only views.

    - Disable ability to download/store corporate/business data locally on unmanaged personal devices.

    - Limit session durations and prompt for re-authentication with MFA.

- Rapid enhancement to MFA methods, such as:

  - Removal of SMS, phone call, push notification, and/or email as authentication controls.

  - Requiring strong, phishing resistant MFA methods such as:

    - Authenticator apps that require phishing resistant MFA (FIDO2 Passkey Support may be added to existing methods such as Microsoft Authenticator.)

    - FIDO2 security keys for authenticating identities that are assigned privileged roles.

- Enforce multi-context criteria to enrich the authentication transaction.

  - Examples include not only validating the identity, but also specific device and location attributes as part of the authentication transaction.

    - For organizations that leverage Google Workspace, these concepts can be enforced by using context-aware access policies.

    - For organizations that leverage Microsoft Entra ID, these concepts can be enforced by using a Conditional Access Policy.

    - For organizations that leverage Okta, these concepts can be enforced by using Okta policies and rules.
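
The multi-context idea can be illustrated with a small decision function that looks at the MFA method, device posture, and source network together. This is only a conceptual sketch: the class, the method labels, and the trusted range (a documentation prefix) are assumptions, and real enforcement belongs in the IdP policies named above rather than in custom code.

```python
import ipaddress
from dataclasses import dataclass

# Assumptions: placeholder egress range and illustrative method labels.
TRUSTED_EGRESS = [ipaddress.ip_network("203.0.113.0/24")]
PHISHING_RESISTANT = {"fido2", "passkey"}

@dataclass
class AuthContext:
    user: str
    mfa_method: str
    device_managed: bool
    device_compliant: bool
    source_ip: str

def evaluate(ctx: AuthContext) -> tuple[bool, list[str]]:
    """Return (allowed, reasons for denial)."""
    reasons = []
    if ctx.mfa_method not in PHISHING_RESISTANT:
        reasons.append(f"MFA method '{ctx.mfa_method}' is not phishing resistant")
    if not (ctx.device_managed and ctx.device_compliant):
        reasons.append("device is not managed and compliant")
    ip = ipaddress.ip_address(ctx.source_ip)
    if not any(ip in net for net in TRUSTED_EGRESS):
        reasons.append("source IP is outside trusted egress ranges")
    return (not reasons, reasons)

print(evaluate(AuthContext("alice", "fido2", True, True, "203.0.113.10")))
print(evaluate(AuthContext("bob", "sms", False, False, "198.51.100.5")))
```

Returning the reasons alongside the decision makes it easier to log why a sign-in was refused, which helps when tuning policies during an incident.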

Attackers are consistently targeting non-human identities due to the limited number of detections around them, lack of baseline of normal vs abnormal activity, and common assignment of privileged roles attached to these identities. Organizations should:

- Identify and track all programmatic identities and their usage across the environment, including where they are created, which systems they access, and who owns them.

- Centralize storage in a secrets manager (cloud-native or third-party) and prevent credentials from being embedded in source code, config files, or CI/CD pipelines.

- Restrict authentication IPs for programmatic credentials so they can only be used from trusted third-party or internal IP ranges wherever technically feasible.

- Transition to workload identity federation: Where feasible, replace long-lived static credentials (such as AWS access keys or service account keys) with workload identity federation mechanisms (often based on OIDC). This allows applications to authenticate using short-lived, ephemeral tokens issued by the cloud provider, dramatically reducing the risk of credential theft from code repositories and file systems.

- Enforce strict scoping and resource binding by tying credentials to specific API endpoints, services, or resources. For example, an API key should not simply have “read” access to storage, but be limited to a particular bucket or even a specific prefix, minimizing blast radius if it is compromised.

- Baseline expected behavior for each credential type (typical access paths, destinations, frequency, and volume) and integrate this into monitoring and alerting so anomalies can be quickly detected and investigated.
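
A minimal sketch of the last two ideas, source IP restriction and behavioral baselining, might look like the following. The class name, the fields tracked, and the volume factor of 3 are assumptions for illustration; a production baseline would be built from real telemetry and tuned per credential type.

```python
import ipaddress

class CredentialBaseline:
    """Tracks expected destinations and volume for one programmatic credential."""

    def __init__(self, allowed_cidrs, volume_factor=3.0):
        self.allowed = [ipaddress.ip_network(c) for c in allowed_cidrs]
        self.volume_factor = volume_factor  # assumed tolerance over the baseline peak
        self.destinations = set()
        self.max_daily_bytes = 0

    def learn(self, destination, daily_bytes):
        """Record one day of known-good activity."""
        self.destinations.add(destination)
        self.max_daily_bytes = max(self.max_daily_bytes, daily_bytes)

    def check(self, source_ip, destination, daily_bytes):
        """Return a list of findings for one day of observed activity."""
        findings = []
        ip = ipaddress.ip_address(source_ip)
        if not any(ip in net for net in self.allowed):
            findings.append("source IP outside allowed ranges")
        if destination not in self.destinations:
            findings.append("destination not seen during baseline")
        if daily_bytes > self.volume_factor * self.max_daily_bytes:
            findings.append("volume far above baseline")
        return findings

baseline = CredentialBaseline(["10.0.0.0/8"])
baseline.learn("reports-bucket/daily/", 50_000_000)
print(baseline.check("198.51.100.7", "hr-exports/", 4_000_000_000))
```

Each finding on its own may be benign. A key used from a new network, against a new destination, at many times its usual volume is the combination worth paging someone for.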

Additional platform-specific hardening measures include:

- Okta

  - Enable Okta ThreatInsight to automatically block IP addresses identified as malicious.

  - Restrict Super Admin access to specific network zones (corporate VPN).

- Microsoft Entra ID

  - Implement common Conditional Access Policies to block unauthorized authentication attempts and restrict high-risk sign-ins.

  - Configure risk-based policies to trigger password changes or MFA when risk is detected.

  - Restrict who is allowed to register applications in Entra ID and require administrator approval for all application registrations.

- Google Workspace

  - Use Context-Aware Access levels to restrict Google Drive and Admin Console access based on device attributes and IP address.

  - Enforce 2-Step Verification (2SV) for all Google Workspace users.

  - Use Advanced Protection to protect high-risk users from targeted phishing, malware, and account hijacking.

Infrastructure and Application Platforms

Infrastructure and application platforms such as Cloud consoles and SaaS applications are frequent targets for credential harvesting and data exfiltration. Protecting these systems typically requires implementing the previously outlined identity controls, along with platform-specific security guardrails, including:

- Restrict management-plane access so it’s only reachable from the organization’s network and approved VPN ranges.

- Scan for and remediate exposed secrets, including sensitive credentials stored across these platforms.

- Enforce device access controls so access is limited to managed, compliant devices.

- Monitor configuration changes to identify and investigate newly created resources, exposed services, or other unauthorized modifications.

- Implement logging and detections to identify:

  - Newly created or modified network security group (NSG) rules, firewall rules, or publicly exposed resources that enable remote access.

  - Creation of programmatic keys and credentials (e.g., access keys).

- Disable API/CLI access for non-essential users by restricting programmatic access to those who explicitly require it for management-plane operations.

Platform Specifics

- GCP

  - Configure security perimeters with VPC Service Controls (VPC-SC) to prevent data from being copied to unauthorized Google Cloud resources even if they have valid credentials.

  - Set additional guardrails with organizational policies and deny policies applied at the organization level. This stops developers from introducing misconfigurations that could be exploited by attackers. For example, enforcing organizational policies like “iam.disableServiceAccountKeyCreation” will prevent generating new unmanaged service account keys that can be easily exfiltrated.

  - Apply IAM Conditions to sensitive role bindings. Restrict roles so they only activate if the resource name starts with a specific prefix or if the request comes during specific working hours. This limits the blast radius of a compromised credential.

- AWS

  - Apply Service Control Policies (SCPs) at the root level of the AWS Organization that limit the attack surface of AWS services. For example, deny access in unused regions, block creation of IAM access keys, and prevent deletion of backups, snapshots, and critical resources.

  - Define data perimeters through Resource Control Policies (RCPs) that restrict access to sensitive resources (like S3 buckets) to only trusted principals within your organization, preventing external entities from accessing data even with valid keys.

  - Implement alerts on common reconnaissance commands such as GetCallerIdentity API calls originating from non-corporate IP addresses. This is often the first reconnaissance command an attacker runs to verify their stolen keys.

- Azure

  - Enforce Conditional Access Policies (CAPs) that block access to administrative applications unless the device is "Microsoft Entra hybrid joined" and "Compliant." This prevents attackers from accessing resources using their own tools or devices.

  - Eliminate standing admin access and require Just-In-Time (JIT) through Privileged Identity Management (PIM) for elevation for roles such as Global Administrator, mandating an approval workflow and justification for each activation.

  - Enforce the use of Managed Identities for Azure resources accessing other services. This removes the need for developers to handle or rotate credentials for service principals, eliminating the static key attack vector.

- Source Code Management

  - Enforce Single Sign-On (SSO) with SCIM for automated lifecycle management and mandate FIDO2/WebAuthn to neutralize phishing. Additionally, replace broad access tokens with short-lived, Fine-Grained Personal Access Tokens (PATs) to enforce least privilege.

  - Prevent credential leakage by enabling native "Push Protection" features or implementing blocking CI/CD workflows (such as TruffleHog) that automatically reject commits containing high-entropy strings before they are merged.

  - Mitigate the risk of malicious code injection by requiring cryptographic commit signing (GPG/S/MIME) and mandating a minimum of two approvals for all Pull Requests targeting protected branches.

  - Conduct scheduled historical scans to identify and purge latent secrets that evaded preventative controls, ensuring any compromised credentials are immediately rotated and forensically investigated.

- Salesforce

  - Reference Mandiant’s Salesforce Hardening blog post

  - Reference Salesforce “Protecting Salesforce Data After an Identity Compromise” blog post
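
The AWS recommendation to alert on GetCallerIdentity calls from non-corporate addresses can be sketched as a filter over CloudTrail-style records. The field names follow the CloudTrail record layout as commonly documented (`eventSource`, `eventName`, `sourceIPAddress`, `userIdentity.arn`), but the corporate range is a placeholder and the function name is hypothetical. Verify field names against your own trail before relying on them.

```python
import ipaddress

# Assumption: placeholder corporate egress range (documentation prefix).
CORPORATE_CIDRS = [ipaddress.ip_network("203.0.113.0/24")]

def suspicious_identity_checks(records):
    """Return GetCallerIdentity calls made from outside corporate ranges."""
    alerts = []
    for rec in records:
        if rec.get("eventSource") != "sts.amazonaws.com":
            continue
        if rec.get("eventName") != "GetCallerIdentity":
            continue
        source = rec.get("sourceIPAddress", "")
        try:
            ip = ipaddress.ip_address(source)
        except ValueError:
            continue  # not an IP literal (e.g. a service name); out of scope here
        if not any(ip in net for net in CORPORATE_CIDRS):
            alerts.append({
                "arn": (rec.get("userIdentity") or {}).get("arn"),
                "source_ip": source,
            })
    return alerts

records = [
    {"eventSource": "sts.amazonaws.com", "eventName": "GetCallerIdentity",
     "sourceIPAddress": "198.51.100.7",
     "userIdentity": {"arn": "arn:aws:iam::111122223333:user/ci-deploy"}},
    {"eventSource": "sts.amazonaws.com", "eventName": "GetCallerIdentity",
     "sourceIPAddress": "203.0.113.20",
     "userIdentity": {"arn": "arn:aws:iam::111122223333:user/admin"}},
]
print(suspicious_identity_checks(records))
```

Legitimate tooling also calls GetCallerIdentity, so the source network is what makes the event interesting. The same pattern extends to other early reconnaissance calls.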

2. Logging

Modern SaaS intrusions rarely rely on payloads or technical exploits. Instead, Mandiant consistently observes attackers leveraging valid access (frequently gained via vishing or MFA bypass) to abuse native SaaS capabilities such as bulk exports, connected apps, and administrative configuration changes.

Without clear visibility into these environments, detection becomes nearly impossible. If an organization cannot track which identity authenticated, what permissions were authorized, and what data was exported, they often remain unaware of a campaign until an extortion note appears.

This section focuses on ensuring your organization has the necessary visibility into identity actions, authorizations, and SaaS export behaviors required to detect and disrupt these incidents before they escalate.

Identity Provider

If an adversary gains access through vishing and MFA manipulation, the first reliable signals will appear in the SSO control plane, not inside a workstation. In this example, the goal is to ensure Okta and Entra ID logs identify who authenticated, what MFA changes occurred, and where access originated from.

What to Enable and Ingest into the SIEM

Okta

- Authentication events (successful and failed sign-ins)

- MFA lifecycle events (enrollment/activation and changes to authentication factors or devices)

- Administrative identity events that capture security-relevant actions (e.g., changes that affect authentication posture)

Entra ID

- Authentication events

- Audit logs for MFA changes / authentication method

- Audit logs for security posture changes that affect authentication

- Conditional Access policy changes

- Changes to Named Locations / trusted locations

What “Good” Looks Like Operationally

You should be able to quickly identify:

- Authentication factor, device enrollment activity, and the user responsible

- Source IP, geolocation, and ASN (if available) associated with that enrollment

- Whether access originated from the organization’s expected egress and identity access paths

Platform

Google Workspace Logging

Defenders should ensure they have visibility into OAuth authorizations, mailbox deletion activity (including deletion of security notification emails), and Google Takeout exports.

What You Need in Place Before Logging

- Correct edition + investigation surfaces available: Confirm your Workspace edition supports the Audit and investigation tool and the Security Investigation tool (if you plan to use it).

- Correct admin privileges: Ensure the account has Audit & Investigation privilege (to access OAuth/Gmail/Takeout log events) and Security Center privilege.

- If you need Gmail message content: Validate edition + privileges allow viewing message content during investigations.

What to Enable and Ingest into the SIEM

OAuth / App authorization logs

Enable and ingest token/app authorization logs to observe:

- Which application was authorized (app name + identifier)

- Which user granted access

- What scopes were granted

- Source IP and geolocation for the authorization

This is the telemetry required to detect suspicious app authorizations and add-on enablement that can support mailbox manipulation.

Gmail audit logs

Enable and ingest Gmail audit events that capture:

- Message deletion actions (including permanent delete where available)

- Message direction indicators (especially useful for outbound cleanup behavior)

- Message metadata (e.g., subject) to support detection of targeted deletions of security notification emails

Google Takeout audit logs

Enable and ingest Takeout logs to capture:

- Export initiation and completion events

- User and source IP/geo for the export activity

Salesforce Logging

Activity observed by Mandiant includes the use of Salesforce Data Loader and large-scale access patterns that won’t be visible if only basic login history logs are collected. Additional Salesforce telemetry that captures logins, configuration changes, connected app/API activity, and export behavior is needed to investigate SaaS-native exfiltration. Detailed implementation guidance for these visibility gaps can be found in Mandiant’s Targeted Logging and Detection Controls for Salesforce.

What You Need in Place Before Logging

- Entitlement check (must-have)

- Most security-relevant Salesforce logs are gated behind Event Monitoring, delivered through Salesforce Shield or the Event Monitoring add-on. Confirm you are licensed for the event types you plan to use for detection.

- Choose the collection method that matches your operations

- Use real-time event monitoring (RTEM) if you need near real-time detection.

- Use event log files (ELF) if you need predictable batch exports for long-term storage and retrospective investigations.

- Use event log objects (ELO) if you require queryable history via Salesforce Object Query Language (often requires Shield/add-on).

- Enable the events you intend to detect on

- Use Event Manager to explicitly turn on the event categories you plan to ingest, and ensure the right teams have access to view and operationalize the data (profiles/permission sets).

- Threat Detection and Enhanced Transaction Security

- If your environment uses Threat Detection or ETS, verify the event types that feed those controls and ensure your log ingestion platform doesn’t omit the events you expect to alert on.

What to Enable and Ingest into the SIEM

Authentication and access

- LoginHistory (who logged in, when, from where, success/failure, client type)

- LoginEventStream (richer login telemetry where available)

Administrative/configuration visibility

- SetupAuditTrail (changes to admin and security configurations)

API and export visibility

- ApiEventStream (API usage by users and connected apps)

- ReportEventStream (report export/download activity)

- BulkApiResultEvent (bulk job result downloads; critical for bulk extraction visibility)

Additional high-value sources (if available in your tenant)

- LoginAsEventStream (impersonation / “login as” activity)

- PermissionSetEvent (permission grants/changes)

SaaS Pivot Logging

Threat actors often pivot from compromised SSO providers into additional SaaS platforms, including DocuSign and Atlassian. Ingesting audit logs from these platforms into a SIEM environment enables the detection of suspicious access and large-scale data exfiltration following an identity compromise.

What You Need in Place Before Logging

- You need tenant-level admin permissions to access and configure audit/event logging.

- Confirm your plan/subscriptions include the audit/event visibility you are trying to collect (Atlassian org audit log capabilities can depend on plan/Guard tier; DocuSign org-level activity monitoring is provided via DocuSign Monitor).

- API access (if you are pulling logs programmatically): Ensure the tenant is able to use the vendor’s audit/event APIs (DocuSign Monitor API; Atlassian org audit log API/webhooks depending on capability).

- Retention reality check: Validate the platform’s native audit-log retention window meets your investigation needs.

What to Enable and Ingest into the SIEM

DocuSign (audit/monitoring logs)

- Authentication events (successful/failed sign-ins, SSO vs password login if available)

- Administrative changes (user/role changes, org-level setting changes)

- Envelope access and bulk activity (envelope viewed/downloaded, document downloaded, bulk send, bulk download/export where available)

- API activity (API calls, integration keys/apps used, client/app identifiers)

- Source context (source IP/geo, user agent/client type)

Atlassian (Jira/Confluence audit logs)

- Authentication events (SSO sign-ins, failed logins)

- Privilege and admin changes (role/group membership changes, org admin actions)

- Confluence/Jira data access at scale:

  - Confluence: space/page view/download/export events (especially exports)

  - Jira: project access, issue export, bulk actions (where available)

- API token and app activity (API token created/revoked, OAuth app connected, marketplace app install/uninstall)

- Source context (source IP/geolocation, user agent/client type)

Microsoft 365 Audit Logging

Mandiant has observed threat actors leveraging PowerShell to download sensitive data from SharePoint and OneDrive as part of this campaign. To detect the activity, it is necessary to ingest M365 audit telemetry that records file download operations along with client context (especially the user agent).

What You Need in Place Before Logging

- Microsoft Purview Audit is available and enabled: Your tenant must have Microsoft Purview Audit turned on and usable (Audit “Standard” vs “Premium” affects capabilities/retention).

- Correct permissions to view/search audit: Assign the compliance/audit roles required to access audit search and records.

- SharePoint/OneDrive operations are present in the Unified Audit Log: Validate that SharePoint/OneDrive file operations are being recorded (this is where operations like file download/access show up).

- Client context is captured: Confirm audit records include UserAgent (when provided by the client) so you can identify PowerShell-based access patterns in SharePoint/OneDrive activity.

What to Enable and Ingest into the SIEM

- FileDownloaded and FileAccessed (SharePoint/OneDrive)

- User agent/client identifier (to surface WindowsPowerShell-style user agents)

- User identity, source IP, geolocation

- Target resource details

3. Detections

The following detections target behavioral patterns Mandiant has identified in ShinyHunters-related intrusions. In these scenarios, attackers typically gain initial access by compromising SSO platforms or manipulating MFA controls, then leverage native SaaS capabilities to exfiltrate data and evade detection. The following use cases are categorized by area of focus, including Identity Providers and Productivity Platforms.

Note: This activity is not the result of a security vulnerability in vendors' products or infrastructure. Instead, these intrusions rely on the effectiveness of social engineering.

Implementation Guidelines

These rules are presented as YARA-L pseudo-code to prioritize clear detection logic and cross-platform portability. Because field names, event types, and attribute paths vary across environments, consider the following variables:

- Ingestion Source: Differences in how logs are ingested into Google SecOps.

- Parser Mapping: Specific UDM (Unified Data Model) mappings unique to your configuration.

- Telemetry Availability: Variations in logging levels based on your specific SaaS licensing.

- Reference Lists: Curated allowlists/blocklists the organization will need to create to help reduce noise and keep alerts actionable.

Note: Mandiant recommends testing these detections prior to deployment by validating the exact event mappings in your environment and updating the pseudo-fields to match your specific telemetry.

Okta

MFA Device Enrollment or Changes (Post-Vishing Signal)

Detects MFA device enrollment and MFA life cycle changes that often occur immediately after a social-engineered account takeover. When this alert is triggered, immediately review the affected user’s downstream access across SaaS applications (Salesforce, Google Workspace, Atlassian, DocuSign, etc.) for signs of large-scale access or data exports.

Why this is high-fidelity: In this intrusion pattern, MFA manipulation is a primary “account takeover” step. Because MFA lifecycle events are rare compared to routine logins, any modification occurring shortly after access is gained serves as a high-fidelity indicator of potential compromise.

Key signals

- Okta System Log MFA lifecycle events (enroll/activate/deactivate/reset)

- principal.user, principal.ip, client.user_agent, geolocation/ASN (if enriched)

- Optional: proximity to password reset, recovery, or sign-in anomalies (same user, short window)
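The optional proximity signal can be prototyped offline before it is mapped to UDM fields. The following is a minimal Python sketch, not the rule itself: it assumes events have already been normalized into dictionaries with the hypothetical keys `user`, `type`, and `ts`, and the type labels are illustrative placeholders rather than real Okta event names.

```python
from datetime import datetime, timedelta

# Hypothetical normalized labels; map them to your own event types.
MFA_TYPES = {"mfa.enroll", "mfa.activate", "mfa.deactivate", "mfa.reset_all"}
PRECURSOR_TYPES = {"password.reset", "recovery.start", "sso", "session.start"}

def mfa_changes_with_precursors(events, window=timedelta(minutes=30)):
    """Pair every MFA lifecycle event with same-user precursor events
    (reset, recovery, sign-in) that occurred earlier within the window."""
    results = []
    for e in events:
        if e["type"] not in MFA_TYPES:
            continue
        precursors = [
            p for p in events
            if p["user"] == e["user"]
            and p["type"] in PRECURSOR_TYPES
            and timedelta(0) < e["ts"] - p["ts"] <= window
        ]
        # The MFA change is always returned; precursors only add context.
        results.append((e, precursors))
    return results
```

Every MFA change is returned, mirroring the "always alert" intent, while the precursor list can be used to raise severity.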

Pseudo-code (YARA-L)

events:

$mfa.metadata.vendor_name = "Okta"

$mfa.metadata.product_event_type in ( "okta.user.mfa.factor.enroll", "okta.user.mfa.factor.activate", "okta.user.mfa.factor.deactivate", "okta.user.mfa.factor.reset_all" )

$u = $mfa.principal.user.userid

$t_mfa = $mfa.metadata.event_timestamp

$ip = coalesce($mfa.principal.ip, $mfa.principal.asset.ip)

$ua = coalesce($mfa.network.http.user_agent, $mfa.extracted.fields["userAgent"], "")

$reset.metadata.vendor_name = "Okta"

$reset.metadata.product_event_type in ( "okta.user.password.reset", "okta.user.account.recovery.start" )

// join to the same user as the MFA event

$u = $reset.principal.user.userid

$t_reset = $reset.metadata.event_timestamp

$auth.metadata.vendor_name = "Okta"

$auth.metadata.product_event_type in ("okta.user.authentication.sso", "okta.user.session.start")

// join to the same user as the MFA event

$u = $auth.principal.user.userid

$t_auth = $auth.metadata.event_timestamp

match:

$u over 30m

condition:

// Always alert on MFA lifecycle change

$mfa and

// Optional sequence tightening (enrichment only, not mandatory):

// If reset/auth exists in the window, enforce it happened before the MFA change.

(

(not $reset and not $auth) or

(($reset and $t_reset < $t_mfa) or ($auth and $t_auth < $t_mfa))

)

Suspicious admin.security Actions from Anonymized IPs

Alert on Okta admin/security posture changes when the admin action occurs from suspicious network context (proxy/VPN-like indicators) or immediately after an unusual auth sequence.

Why this is high-fidelity: Admin/security control changes are low volume and can directly enable persistence or reduce visibility.

Key signals

- Okta admin/system events (e.g., policy changes, MFA policy, session policy, admin app access)

- “Anonymized” network signal: VPN/proxy ASN, “datacenter” reputation, TOR list, etc.

- Actor uses unusual client/IP for admin activity

Reference lists

- VPN_TOR_ASNS (proxy/VPN ASN list)

Pseudo-code (YARA-L)

events:

$a.metadata.vendor_name = "Okta"

$a.metadata.product_event_type in ("okta.system.policy.update","okta.system.security.change","okta.user.session.clear","okta.user.password.reset","okta.user.mfa.reset_all")

$userid = $a.principal.user.userid

// correlate with a recent successful login for the same actor if available

$l.metadata.vendor_name = "Okta"

$l.metadata.product_event_type = "okta.user.authentication.sso"

$userid = $l.principal.user.userid

match:

$userid over 2h

condition:

$a and $l

Google Workspace

OAuth Authorization for ToogleBox Recall

Detects OAuth/app authorization events for ToogleBox recall (or the known app identifier), indicating mailbox manipulation activity.

Why this is high-fidelity: This is a tool-specific signal tied to the observed “delete security notification emails” behavior.

Key signals

- Workspace OAuth / token authorization log event

- App name, app ID, scopes granted, granting user, source IP/geo

- Optional: privileged user context (e.g., admin, exec assistant)

Pseudo-code (YARA-L)

events:

$e.metadata.vendor_name = "Google Workspace"

$e.metadata.product_event_type in ("gws.oauth.grant", "gws.token.authorize") // placeholders

// match app name OR app id if you have it

(lower($e.target.application) contains "tooglebox" or

lower($e.target.application) contains "recall")

condition:

$e

Gmail Deletion of Okta Security Notification Email

Detects deletion actions targeting Okta security notification emails (e.g., “Security method enrolled”).

Why this is high-fidelity: Targeted deletion of security notifications is intentional evasion, not normal email behavior.

Key signals

- Gmail audit log delete/permanent delete (or mailbox cleanup) event

- Subject matches a small set of security-notification strings

- Time correlation: deletion shortly after receipt (optional)

Pseudo-code (YARA-L)

events:

$d.metadata.vendor_name = "Google Workspace"

$d.metadata.product_event_type in ("gws.gmail.message.delete",

"gws.gmail.message.trash",

"gws.gmail.message.permanent_delete") // PLACEHOLDER

regex_match(lower($d.target.email.subject),

"(security method enrolled|new sign-in|new device|mfa|authentication|verification)")

$u = $d.principal.user.userid

$t = $d.metadata.event_timestamp

match:

$u over 30m

condition:

$d and count($d) >= 2 // tighten: at least 2 in 30m; adjust if too strict

Google Takeout Export Initiated/Completed

Detects Google Takeout export initiation/completion events.

Why this is high-fidelity: Takeout exports are uncommon in corporate contexts; in this campaign they represent a direct data export path.

Key signals

- Takeout audit events (e.g., initiated, completed)

- User, source IP/geo, volume

Reference lists

- TAKEOUT_ALLOWED_USERS (rare; HR offboarding workflows, legal export workflows)

Pseudo-code (YARA-L)

events:

$start.metadata.vendor_name = "Google Workspace"

$start.metadata.product_event_type = "gws.takeout.export.start"

$user = $start.principal.user.userid

$job = $start.target.resource.id // if available; otherwise remove job join

$done.metadata.vendor_name = "Google Workspace"

$done.metadata.product_event_type = "gws.takeout.export.complete"

// join the completion event to the same user

$user = $done.principal.user.userid

// if you have a job/export id, this makes it *much* cleaner

$job = $done.target.resource.id

$bytes = coalesce($done.target.file.size, $done.extensions.bytes_exported)

match:

// takeout can take hours; don't use 10m here, adjust accordingly

$user, $job over 24h

condition:

$start and $done and

$start.metadata.event_timestamp < $done.metadata.event_timestamp and

$bytes >= 500000000 and // 500MB start point; tune

not ($user in %TAKEOUT_ALLOWED_USERS) // OPTIONAL: remove if you don't maintain it

Cross-SaaS

Attempted Logins from Known Campaign Proxy/IOC Networks

Detects authentication attempts across SaaS/SSO providers originating from IPs/ASNs associated with the campaign.

Why this is high-fidelity: These IPs and ASNs lack legitimate business overlap; matches indicate direct interaction between compromised credentials and known adversary-controlled infrastructure.

Key signals

- Authentication attempts across Okta / Salesforce / Workspace / Atlassian / DocuSign

- principal.ip matches IOC IPs or ASN list

Reference lists

- SHINYHUNTERS_PROXY_IPS

- VPN_TOR_ASNS
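Before loading indicators into a reference list, it can help to confirm that the list matches the way you expect. The sketch below is a hedged Python illustration using the standard `ipaddress` module: the `refang` helper and the sample addresses (documentation ranges, not campaign indicators) are assumptions made for this example.

```python
import ipaddress

def refang(value):
    """Convert a defanged indicator such as 198.51.100[.]7 back to a usable form."""
    return value.replace("[.]", ".")

def build_matcher(entries):
    """Compile IOC entries (single IPs or CIDR ranges) into a membership test."""
    networks = [ipaddress.ip_network(refang(e), strict=False) for e in entries]

    def matches(ip):
        addr = ipaddress.ip_address(ip)
        # Membership is False when the address and network versions differ.
        return any(addr in net for net in networks)

    return matches

# Placeholder entries from documentation ranges, not real indicators.
is_ioc = build_matcher(["203.0.113.0/24", "198.51.100[.]7"])
```

Calling `is_ioc(source_ip)` on each authentication attempt reproduces the IP half of the rule; the ASN half still requires enrichment data that this sketch does not model.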

Pseudo-code (YARA-L)

events:

$e.metadata.product_event_type in (

"okta.login.attempt", "workday.sso.login.attempt",

"gws.login.attempt", "salesforce.login.attempt",

"atlassian.login.attempt", "docusign.login.attempt"

)

(

$e.principal.ip in %SHINYHUNTERS_PROXY_IPS or

$e.principal.ip.asn in %VPN_TOR_ASNS

)

condition:

$e

Identity Activity Outside Normal Business Hours

Detects identity events occurring outside normal business hours, focusing on high-risk actions (sign-ins, password reset, new MFA enrollment and/or device changes).

Why this is high-fidelity: Off-hours activity is a strong indication of abnormal user behavior when it is also constrained to sensitive actions and to users who rarely perform them.

Key signals

- User sign-ins, password resets, MFA enrollment, device registrations

- Timestamp bucket: late evening / Friday afternoon / weekends
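The timestamp bucketing can be tested on its own before it is wired into a rule. This is a minimal Python sketch under stated assumptions: the 08:00 to 18:00 weekday window and the default time zone are illustrative choices, and the function is a stand-in for whatever business-hours helper your platform provides.

```python
from datetime import datetime, time, timezone
from zoneinfo import ZoneInfo

def outside_business_hours(ts, tz="America/New_York",
                           start=time(8, 0), end=time(18, 0)):
    """Return True when a timezone-aware timestamp falls on a weekend or
    outside the local start/end window (assumed hours; adjust to your org)."""
    local = ts.astimezone(ZoneInfo(tz))
    if local.weekday() >= 5:  # Saturday = 5, Sunday = 6
        return True
    return not (start <= local.time() < end)

# Example: a Saturday midday event in New York is treated as off-hours.
weekend_event = datetime(2026, 1, 24, 17, 0, tzinfo=timezone.utc)
```

The Friday afternoon bucket is not modeled here; it could be added as an extra weekday-specific window.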

Pseudo-code (YARA-L)

events:

$e.metadata.vendor_name = "Okta"

$e.metadata.product_event_type in ("okta.user.password.reset","okta.user.mfa.factor.activate","okta.user.mfa.factor.reset_all") // PLACEHOLDER

outside_business_hours($e.metadata.event_timestamp, "America/New_York")

// Include the business hours your organization functions in

$u = $e.principal.user.userid

condition:

$e

Successful Sign-in From New Location and New MFA Method

Detects a successful login that is simultaneously from a new geolocation and uses a newly registered MFA method.

Why this is high-fidelity: This pattern represents a compound condition that aligns with MFA manipulation and unfamiliar access context.

Key signals

- Successful authentication

- New geolocation compared to user baseline

- New factor method compared to user baseline (or recent MFA enrollment)

- Optional sequence: MFA enrollment occurs after login
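The baseline comparison is the part of this detection that most depends on your environment. As a rough illustration only, the Python sketch below keeps an in-memory per-user baseline; the class name, the dictionary keys `user`, `country`, and `factor`, and the in-memory storage are all assumptions, and a production baseline would need persistence and a seeding period.

```python
from collections import defaultdict

class FirstSeenBaseline:
    """Tracks which values have already been observed for each user."""

    def __init__(self):
        self._seen = defaultdict(set)

    def is_new(self, user, value):
        """Return True the first time value is observed for user, then record it."""
        new = value not in self._seen[user]
        self._seen[user].add(value)
        return new

def new_location_and_factor(login, countries, factors):
    """Flag a login only when both the country and the MFA factor are new."""
    # Evaluate both checks so that both baselines are updated on every login.
    new_country = countries.is_new(login["user"], login["country"])
    new_factor = factors.is_new(login["user"], login["factor"])
    return new_country and new_factor
```

Without seeding, the first login ever recorded for a user will be flagged, so historical sign-ins should be replayed through the baselines before alerting.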

Pseudo-code (YARA-L)

events:

$login.metadata.vendor_name = "Okta"

$login.metadata.product_event_type = "okta.login.success"

$u = $login.principal.user.userid

$geo = $login.principal.location.country

$t_l = $login.metadata.event_timestamp

$m = $login.security_result.auth_method // if present; otherwise join to factor event

condition:

$login and

first_seen_country_for_user($u, $geo) and

first_seen_factor_for_user($u, $m)

Multiple MFA Enrollments Across Different Users From the Same Source IP

Detects the same source IP enrolling/changing MFA for multiple users in a short window.

Why this is high-fidelity: This pattern mirrors a known social engineering tactic in which threat actors manipulate help desk admins into enrolling unauthorized devices in a victim’s MFA, repeated across multiple users from the same source address.

Key signals

- Okta MFA lifecycle events

- Same src_ip

- Distinct user count threshold

- Tight window
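The combination of a tight window and a distinct-user threshold can be checked against exported logs with a few lines of code. The following Python sketch assumes MFA enrollment events have been reduced to dictionaries with the hypothetical keys `ip`, `user`, and `ts`; the 10-minute window and threshold of 3 are tuning starting points, not fixed values.

```python
from collections import defaultdict
from datetime import datetime, timedelta

def ips_enrolling_many_users(events, window=timedelta(minutes=10), threshold=3):
    """Return source IPs that enrolled MFA for at least `threshold`
    distinct users within any single window."""
    by_ip = defaultdict(list)
    for e in sorted(events, key=lambda e: e["ts"]):
        by_ip[e["ip"]].append(e)

    flagged = set()
    for ip, evs in by_ip.items():
        for i, first in enumerate(evs):
            # Distinct users seen from this IP within `window` of this event.
            users = {e["user"] for e in evs[i:] if e["ts"] - first["ts"] <= window}
            if len(users) >= threshold:
                flagged.add(ip)
                break
    return flagged
```

Shared egress addresses such as corporate NAT or VPN gateways will need to be excluded, because many legitimate users enroll from them.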

Pseudo-code (YARA-L)

events:

$m.metadata.vendor_name = "Okta"

$m.metadata.product_event_type in ("<OKTA_MFA_ENROLL_EVENT>", "<OKTA_MFA_DEVICE_ENROLL_EVENT>")

$ip = coalesce($m.principal.ip, $m.principal.asset.ip)

$uid = $m.principal.user.userid

match:

$ip over 10m

condition:

count_distinct($uid) >= 3

Network

Web/DNS Access to Credential Harvesting, Portal Impersonation Domains

Detects DNS queries or HTTP referrers matching brand and SSO/login keyword lookalike patterns.

Why this is high-fidelity: Captures credential-harvesting infrastructure patterns when you have network telemetry.

Key signals

- DNS question name or HTTP referrer/URL

- Regex match for brand + SSO keywords

- Exclusions for your legitimate domains

Reference lists

- Allowlist (small) of legitimate domains (optional)
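The brand-plus-keyword pattern is easy to validate against sample domains before deployment. The Python sketch below is an assumption-laden illustration: `examplecorp` is a placeholder brand, and the allowlist is applied as an exact or subdomain suffix match, which is stricter than a substring exclusion because it will not exempt a domain such as `examplecorp.com.attacker.net`.

```python
import re

KEYWORDS = "my|sso|internal|okta|access|azure|zendesk|support"

def build_lookalike_check(brand, legit_domains):
    """Return a function that flags domains combining the brand with an
    SSO/login keyword, excluding the organization's own domains."""
    b = re.escape(brand.lower())
    pattern = re.compile(rf"({b}({KEYWORDS})|({KEYWORDS}){b})")

    def is_lookalike(domain):
        d = domain.lower().rstrip(".")
        # Exact match or true subdomain of an allowlisted domain.
        if any(d == legit or d.endswith("." + legit) for legit in legit_domains):
            return False
        return bool(pattern.search(d))

    return is_lookalike

# Placeholder brand and allowlist for illustration.
check = build_lookalike_check("examplecorp", ["examplecorp.com"])
```

Like the regex it mirrors, this only matches a keyword directly adjacent to the brand; hyphenated variants would need an optional separator in the pattern.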

Pseudo-code (YARA-L)

events:

$event.metadata.event_type in ("NETWORK_HTTP", "NETWORK_DNS")

// pick ONE depending on which log source you're using most

// DNS:

$domain = lower($event.network.dns.questions.name)

// If you’re using HTTP instead, swap the line above to:

// $domain = lower($event.network.http.referring_url)

condition:

regex_match($domain, ".*(yourcompany(my|sso|internal|okta|access|azure|zendesk|support)|(my|sso|internal|okta|access|azure|zendesk|support)yourcompany).*")

and not regex_match($domain, ".*yourcompany\\.com.*")

and not regex_match($domain, ".*okta\\.yourcompany\\.com.*")

Microsoft 365

M365 SharePoint/OneDrive: FileDownloaded with WindowsPowerShell User Agent

Detects SharePoint/OneDrive downloads with PowerShell user-agent that exceed a byte threshold or count threshold within a short window.

Why this is high-fidelity: PowerShell-driven SharePoint downloading combined with burst volume indicates scripted retrieval.

Key signals

- FileDownloaded/FileAccessed

- User agent contains PowerShell

- Bytes transferred OR number of downloads in window

- Timestamp window (ordering implicit) and min<max check
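These signals can be exercised directly against exported unified audit log records. The Python sketch below assumes each record is a dictionary carrying the `Operation`, `UserAgent`, `UserId`, `CreationTime`, and `FileSizeBytes` fields seen in SharePoint audit records; the thresholds mirror the starting points in the pseudo-code and should be tuned.

```python
from collections import defaultdict
from datetime import datetime, timedelta

def powershell_download_bursts(records, window=timedelta(minutes=15),
                               min_bytes=500_000_000, min_count=20):
    """Return users whose PowerShell-driven downloads exceed a byte or
    count threshold within any single window."""
    per_user = defaultdict(list)
    for r in records:
        if (r["Operation"] in ("FileDownloaded", "FileAccessed")
                and "powershell" in r.get("UserAgent", "").lower()):
            ts = datetime.fromisoformat(r["CreationTime"])
            per_user[r["UserId"]].append((ts, r.get("FileSizeBytes", 0)))

    flagged = set()
    for user, hits in per_user.items():
        hits.sort()
        for i, (start, _) in enumerate(hits):
            sizes = [size for ts, size in hits[i:] if ts - start <= window]
            if sum(sizes) >= min_bytes or len(sizes) >= min_count:
                flagged.add(user)
                break
    return flagged
```

Records without a `UserAgent` value are skipped, so this sketch will miss clients that do not report one.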

Pseudo-code (YARA-L)

events:

$e.metadata.vendor_name = "Microsoft"

(

$e.target.application = "SharePoint" or

$e.target.application = "OneDrive"

)

$e.metadata.product_event_type = /FileDownloaded|FileAccessed/

$e.network.http.user_agent = /PowerShell/ nocase

$user = $e.principal.user.userid

$bytes = coalesce($e.target.file.size, $e.extensions.bytes_transferred)

$ts = $e.metadata.event_timestamp

match:

$user over 15m

condition:

// keep your PowerShell constraint AND require volume

$e and (sum($bytes) >= 500000000 or count($e) >= 20) and min($ts) < max($ts)

M365 SharePoint: High Volume Document FileAccessed Events

Detects SharePoint document file access events that exceed a count threshold and minimum unique file types within a short window.

Why this is high-fidelity: Burst volume may indicate scripted retrieval or usage of the Open-in-App feature within SharePoint.

Key signals

- FileAccessed

- Filtering on common document file types (e.g., PDF)

- Number of downloads in window

- Minimum unique file types
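The two thresholds (distinct files and distinct file types) can be sanity-checked against sample data. This Python sketch assumes events are dictionaries with the hypothetical keys `session_id` and `path`, and that the caller has already restricted the input to a single time window, so windowing is not modeled here.

```python
import re
from collections import defaultdict

DOC_EXT = re.compile(r"\.(doc[mx]?|xls[bmx]?|ppt[amx]?|pdf)$", re.IGNORECASE)

def sessions_with_bulk_access(events, min_files=50, min_extensions=3):
    """Return session IDs that touched many distinct documents spanning
    several document types."""
    paths = defaultdict(set)
    extensions = defaultdict(set)
    for e in events:
        match = DOC_EXT.search(e["path"])
        if match:  # ignore non-document file types
            paths[e["session_id"]].add(e["path"])
            extensions[e["session_id"]].add(match.group(1).lower())
    return {
        s for s in paths
        if len(paths[s]) >= min_files and len(extensions[s]) >= min_extensions
    }
```

Requiring several extensions helps separate broad collection from a user working through many files of one type.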

Pseudo-code (YARA-L)

events:

$e.metadata.vendor_name = "Microsoft"

$e.metadata.product_event_type = "FileAccessed"

$e.target.application = "SharePoint"

$e.target.file.full_path = /\.(doc[mx]?|xls[bmx]?|ppt[amx]?|pdf)$/ nocase

$file_extension_extract = re.capture($e.target.file.full_path, `\.([^\.]+)$`)

$session_id = $e.network.session_id

match:

$session_id over 5m

outcome:

$target_url_count = count_distinct(strings.coalesce($e.target.file.full_path))

$extension_count = count_distinct($file_extension_extract)

condition:

$e and $target_url_count >= 50 and $extension_count >= 3

M365 SharePoint: High Volume Document FileDownloaded Events

Detects SharePoint document file downloaded events that exceed a count threshold and minimum unique file types within a short window.

Why this is high-fidelity: Burst volume may indicate scripted retrieval, although legitimate backup processes can generate the same pattern.

Key signals

- FileDownloaded

- Filtering on common document file types (e.g., PDF)

- Number of downloads in window

- Minimum unique file types

Pseudo-code (YARA-L)

events:

$e.metadata.vendor_name = "Microsoft"

$e.metadata.product_event_type = "FileDownloaded"

$e.target.application = "SharePoint"

$e.target.file.full_path = /\.(doc[mx]?|xls[bmx]?|ppt[amx]?|pdf)$/ nocase

$file_extension_extract = re.capture($e.target.file.full_path, `\.([^\.]+)$`)

$session_id = $e.network.session_id

match:

$session_id over 5m

outcome:

$target_url_count = count_distinct(strings.coalesce($e.target.file.full_path))

$extension_count = count_distinct($file_extension_extract)

condition:

$e and $target_url_count >= 50 and $extension_count >= 3

M365 SharePoint: Query for Strings of Interest

Detects SharePoint queries for files relating to strings of interest, such as sensitive documents, clear-text credentials, and proprietary information.

Why this is high-fidelity: Multiple searches for strings of interest by a single account occur infrequently. Generally, users will search for project- or task-specific strings rather than general labels (e.g., “confidential”).

Key signals

- SearchQueryPerformed

- Filtering on strings commonly associated with sensitive or privileged information
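Because the rationale rests on multiple searches by a single account, it can be useful to count matches per user rather than alert on each one. The Python sketch below is one possible approach, assuming search events are reduced to `(user, query_text)` pairs; the threshold of 3 is an illustrative value, not one taken from observed activity.

```python
import re
from collections import Counter

INTEREST = re.compile(
    r"\bpoc\b|proposal|confidential|internal|salesforce|vpn", re.IGNORECASE
)

def users_searching_sensitive_terms(queries, threshold=3):
    """Return users with at least `threshold` searches matching a string of interest."""
    hits = Counter(user for user, text in queries if INTEREST.search(text))
    return {user for user, count in hits.items() if count >= threshold}
```

The word boundary around `poc` keeps ordinary words such as "pocket" from matching, while the other terms match as substrings.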

Pseudo-code (YARA-L)

events:

$e.metadata.vendor_name = "Microsoft"

$e.metadata.product_event_type = "SearchQueryPerformed"

$e.target.application = "SharePoint"

$e.additional.fields["search_query_text"] = /\bpoc\b|proposal|confidential|internal|salesforce|vpn/ nocase

condition:

$e

M365 Exchange Deletion of MFA Modification Notification Email

Detects deletion actions targeting Okta and other platform security notification emails (e.g., “Security method enrolled”).

Why this is high-fidelity: Targeted deletion of security notifications can be intentional evasion and is not typically performed by email users.

Key signals

- M365 Exchange audit log delete/permanent delete (or mailbox cleanup) event

- Subject matches a small set of security-notification strings

- Time correlation: deletion shortly after receipt (optional)
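Subject matching is the most fragile part of this detection, so it is worth testing the patterns against real notification subjects from your identity provider. The Python sketch below chains three checks (a notification pattern, a change keyword, and a sign-in exclusion); the function name and sample subjects are assumptions for illustration.

```python
import re

NOTICE = re.compile(
    r"new\s+(mfa|multi-|factor|method|device|security)|\b2fa\b|\b2-Step\b|"
    r"(factor|method|device|security|mfa)\s+"
    r"(enroll|registered|added|change|verify|updated|activated|configured|setup)",
    re.IGNORECASE,
)
CHANGE = re.compile(
    r"enroll|registered|added|change|verify|updated|activated|configured|setup",
    re.IGNORECASE,
)
SIGN_IN = re.compile(r"(sign|log)(-|\s)?(in|on)", re.IGNORECASE)

def is_mfa_change_notice(subject):
    """Return True for subjects that look like MFA/device change notifications
    and do not look like routine sign-in alerts."""
    return bool(
        NOTICE.search(subject)
        and CHANGE.search(subject)
        and not SIGN_IN.search(subject)
    )
```

Running deleted-message subjects through `is_mfa_change_notice` shows quickly whether the exclusion for sign-in alerts is too aggressive for your vendors' wording.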

Pseudo-code (YARA-L)

events:

$e.metadata.vendor_name = "Microsoft"

$e.target.application = "Exchange"

$e.metadata.product_event_type = /^(SoftDelete|HardDelete|MoveToDeletedItems)$/ nocase

$e.network.email.subject = /new\s+(mfa|multi-|factor|method|device|security)|\b2fa\b|\b2-Step\b|(factor|method|device|security|mfa)\s+(enroll|registered|added|change|verify|updated|activated|configured|setup)/ nocase

// filtering specifically for new device registration strings

$e.network.email.subject = /enroll|registered|added|change|verify|updated|activated|configured|setup/ nocase

// tuning out new device logon events

$e.network.email.subject != /(sign|log)(-|\s)?(in|on)/ nocase

condition:

$e

Vishing for Access: Tracking the Expansion of ShinyHunters-Branded SaaS Data Theft

Introduction

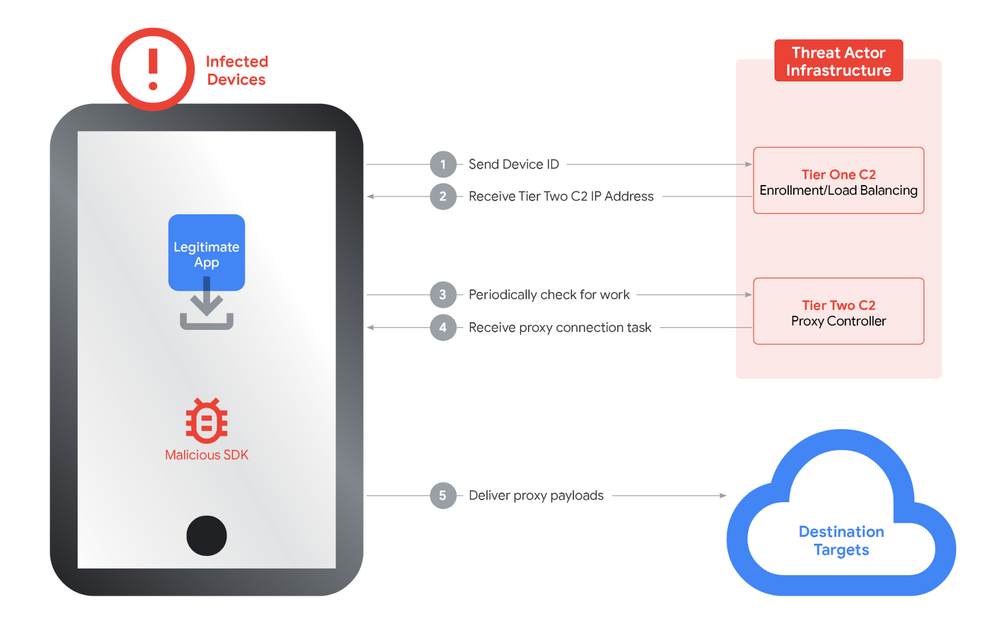

Mandiant has identified an expansion in threat activity that uses tactics, techniques, and procedures (TTPs) consistent with prior ShinyHunters-branded extortion operations. These operations primarily leverage sophisticated voice phishing (vishing) and victim-branded credential harvesting sites to gain initial access to corporate environments by obtaining single sign-on (SSO) credentials and multi-factor authentication (MFA) codes. Once inside, the threat actors target cloud-based software-as-a-service (SaaS) applications to exfiltrate sensitive data and internal communications for use in subsequent extortion demands.

Google Threat Intelligence Group (GTIG) is currently tracking this activity under multiple threat clusters (UNC6661, UNC6671, and UNC6240) to enable a more granular understanding of evolving partnerships and account for potential impersonation activity. While this methodology of targeting identity providers and SaaS platforms is consistent with our prior observations of threat activity preceding ShinyHunters-branded extortion, the breadth of targeted cloud platforms continues to expand as these threat actors seek more sensitive data for extortion. Further, they appear to be escalating their extortion tactics with recent incidents including harassment of victim personnel, among other tactics.

This activity is not the result of a security vulnerability in vendors' products or infrastructure. Instead, it continues to highlight the effectiveness of social engineering and underscores the importance of organizations moving towards phishing-resistant MFA where possible. Methods such as FIDO2 security keys or passkeys are resistant to social engineering in ways that push-based or SMS authentication are not.

Mandiant has also published a comprehensive guide with proactive hardening and detection recommendations, and Google published a detailed walkthrough for operationalizing these findings within Google Security Operations.

Figure 1: Attack path diagram

UNC6661 Vishing and Credential Theft Activity

In incidents spanning early to mid-January 2026, UNC6661 pretended to be IT staff and called employees at targeted victim organizations claiming that the company was updating MFA settings. The threat actor directed the employees to victim-branded credential harvesting sites to capture their SSO credentials and MFA codes, and then registered their own device for MFA. The credential harvesting domains attributed to UNC6661 commonly, but not exclusively, use the format <companyname>sso.com or <companyname>internal.com and have often been registered with NICENIC.

In at least some cases, the threat actor gained access to accounts belonging to Okta customers. Okta published a report about phishing kits targeting identity providers and cryptocurrency platforms, as well as follow-on vishing attacks. While they associate this activity with multiple threat clusters, at least some of the activity appears to overlap with the ShinyHunters-branded operations tracked by GTIG.

After gaining initial access, UNC6661 moved laterally through victim customer environments to exfiltrate data from various SaaS platforms (log examples in Figures 2 through 5). While the targeting of specific organizations and user identities is deliberate, analysis suggests that the subsequent access to these platforms is likely opportunistic, determined by the specific permissions and applications accessible via the individual compromised SSO session. These compromises did not result from security vulnerabilities in the vendors' products or infrastructure.

In some cases, they have appeared to target specific types of information. For example, the threat actors have conducted searches in cloud applications for documents containing specific text including "poc," "confidential," "internal," "proposal," "salesforce," and "vpn" or targeted personally identifiable information (PII) stored in Salesforce. Additionally, UNC6661 may have targeted Slack data at some victims' environments, based on a claim made in a ShinyHunters-branded data leak site (DLS) entry.

{

"AppAccessContext": {

"AADSessionId": "[REDACTED_GUID]",

"AuthTime": "1601-01-01T00:00:00",

"ClientAppId": "[REDACTED_APP_ID]",

"ClientAppName": "Microsoft Office",

"CorrelationId": "[REDACTED_GUID]",

"TokenIssuedAtTime": "1601-01-01T00:02:56",

"UniqueTokenId": "[REDACTED_ID]"

},

"CreationTime": "2026-01-10T13:17:11",

"Id": "[REDACTED_GUID]",

"Operation": "FileDownloaded",

"OrganizationId": "[REDACTED_GUID]",

"RecordType": 6,

"UserKey": "[REDACTED_USER_KEY]",

"UserType": 0,

"Version": 1,

"Workload": "SharePoint",

"ClientIP": "[REDACTED_IP]",

"UserId": "[REDACTED_EMAIL]",

"ApplicationId": "[REDACTED_APP_ID]",

"AuthenticationType": "OAuth",

"BrowserName": "Mozilla",

"BrowserVersion": "5.0",

"CorrelationId": "[REDACTED_GUID]",

"EventSource": "SharePoint",

"GeoLocation": "NAM",

"IsManagedDevice": false,

"ItemType": "File",

"ListId": "[REDACTED_GUID]",

"ListItemUniqueId": "[REDACTED_GUID]",

"Platform": "WinDesktop",

"Site": "[REDACTED_GUID]",

"UserAgent": "Mozilla/5.0 (Windows NT; Windows NT 10.0; en-US) WindowsPowerShell/5.1.20348.4294",

"WebId": "[REDACTED_GUID]",

"DeviceDisplayName": "[REDACTED_IPV6]",

"EventSignature": "[REDACTED_SIGNATURE]",

"FileSizeBytes": 31912,

"HighPriorityMediaProcessing": false,

"ListBaseType": 1,

"ListServerTemplate": 101,

"SensitivityLabelId": "[REDACTED_GUID]",

"SiteSensitivityLabelId": "",

"SensitivityLabelOwnerEmail": "[REDACTED_EMAIL]",

"SourceRelativeUrl": "[REDACTED_RELATIVE_URL]",

"SourceFileName": "[REDACTED_FILENAME]",

"SourceFileExtension": "xlsx",

"ApplicationDisplayName": "Microsoft Office",

"SiteUrl": "[REDACTED_URL]",

"ObjectId": "[REDACTED_URL]/[REDACTED_FILENAME]"

}

Figure 2: SharePoint/M365 log example

"Login","20260120163111.430","SLB:[REDACTED]","[REDACTED]","[REDACTED]","192","25","/index.jsp","","1jVcuDh1VIduqg10","Standard","","167158288","5","Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/IP_ADDRESS_REMOVED Safari/537.36","","9998.0","user@[REDACTED_DOMAIN].com","TLSv1.3","TLS_AES_256_GCM_SHA384","","https://[REDACTED_IDP_DOMAIN]/","[REDACTED].my.salesforce.com","CA","","","0LE1Q000000LBVK","2026-01-20T16:31:11.430Z","[REDACTED]","76.64.54[.]159","","LOGIN_NO_ERROR","76.64.54[.]159",""

Figure 3: Salesforce log example

{

"Timestamp": "2026-01-21T12:5:2-03:00",

"Timestamp UTC": "[REDACTED]",

"Event Name": "User downloads documents from an envelope",

"Event Id": "[REDACTED_EVENT_ID]",

"User": "[REDACTED]@example.com",

"User Id": "[REDACTED_USER_ID]",

"Account": "[REDACTED_ORG_NAME]",

"Account Id": "[REDACTED_ACCOUNT_ID]",

"Integrator Key": "[REDACTED_KEY]",

"IP Address": "73.135.228[.]98",

"Latitude": "[REDACTED]",

"Longitude": "[REDACTED]",

"Country/Region": "United States",

"State": "Maryland",

"City": "[REDACTED]",

"Browser": "Chrome 143",

"Device": "Apple Mac",

"Operating System": "Mac OS X 10",

"Source": "Web",

"DownloadType": "Archived",

"EnvelopeId": "[REDACTED_ENVELOPE_ID]"

}

Figure 4: Docusign log example

In at least one incident where the threat actor gained access to an Okta customer account, UNC6661 enabled the ToogleBox Recall add-on for the victim's Google Workspace account, a tool designed to search for and permanently delete emails. They then deleted a "Security method enrolled" email from Okta, almost certainly to prevent the employee from identifying that their account was associated with a new MFA device.

{

"Date": "2026-01-11T06:3:00Z",

"App ID": "[REDACTED_ID].apps.googleusercontent.com",

"App name": "ToogleBox Recall",

"OAuth event": "Authorize",

"Description": "User authorized access to ToogleBox Recall for specific Gmail and Apps Script scopes.",

"User": "user@[REDACTED_DOMAIN].com",

"Scope": "https://www.googleapis.com/auth/gmail.addons.current.message.readonly, https://www.googleapis.com/auth/gmail.addons.execute, https://www.googleapis.com/auth/script.external_request, https://www.googleapis.com/auth/script.locale, https://www.googleapis.com/auth/userinfo.email",

"API name": "",

"Method": "",

"Number of response bytes": "0",

"IP address": "149.50.97.144",

"Product": "Gmail, Apps Script Runtime, Apps Script Api, Identity, Unspecified",

"Client type": "Web",

"Network info": "{\n \"Network info\": {\n \"IP ASN\": \"201814\",\n \"Subdivision code\": \"\",\n \"Region code\": \"PL\"\n }\n}"

}

Figure 5: ToogleBox Recall auth log entry example
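The authorization event in Figure 5 lends itself to a straightforward hunt. The sketch below assumes Google Workspace OAuth log events have been exported as dictionaries using the same keys as Figure 5 ("App name", "OAuth event", "User", "Date", "IP address"); the function name and the export shape are assumptions for illustration.

```python
def find_app_authorizations(events, app_name="ToogleBox Recall"):
    """Return user, date, and IP for each 'Authorize' event for the named app.

    `events` is an iterable of dicts keyed like the entry in Figure 5.
    The app name comparison is case-insensitive.
    """
    wanted = app_name.casefold()
    return [
        {
            "user": event.get("User"),
            "date": event.get("Date"),
            "ip": event.get("IP address"),
        }
        for event in events
        if event.get("OAuth event") == "Authorize"
        and (event.get("App name") or "").casefold() == wanted
    ]
```

Any hit is worth reviewing alongside identity provider logs for a new MFA enrollment on the same account at around the same time, since the text above describes the add-on being used to delete the enrollment notification.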

In at least one case, after conducting the initial data theft, UNC6661 used their newly obtained access to compromised email accounts to send additional phishing emails to contacts at cryptocurrency-focused companies. The threat actor then deleted the outbound emails, likely in an attempt to obfuscate their malicious activity.

GTIG attributes the subsequent extortion activity following UNC6661 intrusions to UNC6240, based on several overlaps, including the use of a common Tox account for negotiations, ShinyHunters-branded extortion emails, and Limewire to host samples of stolen data. In mid-January 2026 extortion emails, UNC6240 outlined what data they allegedly stole, specifying a payment amount and destination BTC address, and threatening consequences if the ransom was not paid within 72 hours, which is consistent with prior extortion emails (Figure 6). They also provided proof of data theft via samples hosted on Limewire. GTIG also observed extortion text messages sent to employees and received reports of victim websites being targeted with distributed denial-of-service (DDoS) attacks.

Notably, in late January 2026, a new ShinyHunters-branded DLS named "SHINYHUNTERS" emerged, listing several alleged victims who may have been compromised in these most recent extortion operations. The DLS also lists contact information (shinycorp@tutanota[.]com, shinygroup@onionmail[.]com) that has previously been associated with UNC6240.

Figure 6: Ransom note extract

Similar Activity Conducted by UNC6671

Also beginning in early January 2026, UNC6671 conducted vishing operations masquerading as IT staff and directing victims to enter their credentials and MFA codes on a victim-branded credential harvesting site. The credential harvesting domains used the same structure as those used by UNC6661, but were more often registered using Tucows. In at least some cases, the threat actors gained access to Okta customer accounts. Mandiant has also observed evidence that UNC6671 leveraged PowerShell to download sensitive data from SharePoint and OneDrive. While many of these TTPs are consistent with UNC6661, an extortion email stemming from UNC6671 activity was unbranded and used a different Tox ID for further contact. The threat actors employed aggressive extortion tactics following UNC6671 intrusions, including harassment of victim personnel. The differences in extortion tactics and domain registrars suggest that separate individuals may be involved with these sets of activity.
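PowerShell-driven downloads of this kind leave a recognizable trace in Microsoft 365 audit records such as the one in Figure 2, where the `UserAgent` value contains `WindowsPowerShell`. The following is a minimal hunting sketch, assuming audit records are available as one JSON object per line and use the field names shown in Figure 2. The export format, the function names, and the `OneDrive` workload value are assumptions, not details taken from the source.

```python
import json
from collections import Counter


def is_powershell_download(record: dict) -> bool:
    """True for a FileDownloaded record whose user agent mentions PowerShell."""
    user_agent = (record.get("UserAgent") or "").lower()
    return (
        record.get("Operation") == "FileDownloaded"
        # "OneDrive" is an assumed workload value; Figure 2 shows "SharePoint".
        and record.get("Workload") in {"SharePoint", "OneDrive"}
        and "powershell" in user_agent
    )


def downloads_per_user(json_lines):
    """Count PowerShell-driven downloads per UserId from JSON-lines input."""
    counts = Counter()
    for line in json_lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines in the export
        record = json.loads(line)
        if is_powershell_download(record):
            counts[record.get("UserId", "unknown")] += 1
    return counts
```

Sorting the result with `counts.most_common()` puts the accounts with the highest download volume first. Legitimate administrative scripts can produce the same user agent, so a baseline of expected PowerShell usage helps separate routine automation from bulk exfiltration.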

Remediation and Hardening

Mandiant has published a comprehensive guide with proactive hardening and detection recommendations.

Outlook and Implications

This recent activity is similar to prior operations associated with UNC6240, which have frequently used vishing for initial access and have targeted Salesforce data. It does, however, represent an expansion in the number and type of targeted cloud platforms, suggesting that the associated threat actors are modifying their operations to gather more sensitive data for extortion operations. Further, the use of a compromised account to send phishing emails to cryptocurrency-related entities suggests that associated threat actors may be building relationships with potential victims to expand their access or engage in other follow-on operations. Notably, this portion of the activity appears operationally distinct, given that it appears to target individuals instead of organizations.

Indicators of Compromise (IOCs)

To assist the wider community in hunting and identifying activity outlined in this blog post, we have included indicators of compromise (IOCs) in a free GTI Collection for registered users.

Phishing Domain Lure Patterns

Threat actors associated with these clusters frequently register domains designed to impersonate legitimate corporate portals. At the time of publication, all identified phishing domains have been added to Chrome Safe Browsing. These domains typically follow specific naming conventions using a variation of the organization name:

| Pattern | Examples (Defanged) |
|---|---|
| Corporate SSO | <companyname>sso[.]com, my<companyname>sso[.]com, my-<companyname>sso[.]com |
| Internal Portals | <companyname>internal[.]com, www.<companyname>internal[.]com, my<companyname>internal[.]com |
| Support/Helpdesk | <companyname>support[.]com, ticket-<companyname>[.]support, support-<companyname>[.]com |
| Identity Providers | <companyname>okta[.]com, <companyname>azure[.]com, on<companyname>zendesk[.]com |
| Access Portal | <companyname>access[.]com, www.<companyname>access[.]com, my<companyname>acess[.]com |
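The naming conventions above can be expanded into a concrete watchlist for a given organization. The sketch below is illustrative only: the templates are transcribed from the table (including the `acess` spelling as published), while the function names and the example company name "acme" are hypothetical. Real lure domains may use other variations of the organization name that exact-match templates will miss.

```python
# Templates transcribed from the lure-pattern table; {c} is the company name.
TEMPLATES = {
    "Corporate SSO": ["{c}sso.com", "my{c}sso.com", "my-{c}sso.com"],
    "Internal Portals": ["{c}internal.com", "www.{c}internal.com", "my{c}internal.com"],
    "Support/Helpdesk": ["{c}support.com", "ticket-{c}.support", "support-{c}.com"],
    "Identity Providers": ["{c}okta.com", "{c}azure.com", "on{c}zendesk.com"],
    "Access Portal": ["{c}access.com", "www.{c}access.com", "my{c}acess.com"],
}


def refang(domain: str) -> str:
    """Convert a defanged domain such as 'acmesso[.]com' to 'acmesso.com'."""
    return domain.replace("[.]", ".").strip().lower()


def candidate_domains(company: str) -> dict[str, str]:
    """Map every lookalike domain for `company` to its pattern category."""
    c = company.strip().lower()
    return {
        template.format(c=c): category
        for category, templates in TEMPLATES.items()
        for template in templates
    }


def classify(domain: str, company: str):
    """Return the pattern category a domain matches, or None if it matches none."""
    return candidate_domains(company).get(refang(domain))
```

For example, `classify("my-acmesso[.]com", "acme")` returns `"Corporate SSO"`. The generated list could be compared against newly registered domains, DNS logs, or web proxy logs.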

Network Indicators

Many of the network indicators identified in this campaign are associated with commercial VPN services or residential proxy networks, including Mullvad, Oxylabs, NetNut, 9Proxy, Infatica, and nsocks. Mandiant recommends that organizations exercise caution when using these indicators for broad blocking and prioritize them for hunting and correlation within their environments.

| IOC | ASN | Association |
|---|---|---|
| 24.242.93[.]122 | 11427 | UNC6661 |
| 23.234.100[.]107 | 11878 | UNC6661 |
| 23.234.100[.]235 | 11878 | UNC6661 |
| 73.135.228[.]98 | 33657 | UNC6661 |
| 157.131.172[.]74 | 46375 | UNC6661 |
| 149.50.97[.]144 | 201814 | UNC6661 |
| 67.21.178[.]234 | 400595 | UNC6661 |
| 142.127.171[.]133 | 577 | UNC6671 |
| 76.64.54[.]159 | 577 | UNC6671 |
| 76.70.74[.]63 | 577 | UNC6671 |
| 206.170.208[.]23 | 7018 | UNC6671 |
| 68.73.213[.]196 | 7018 | UNC6671 |
| 37.15.73[.]132 | 12479 | UNC6671 |
| 104.32.172[.]247 | 20001 | UNC6671 |
| 85.238.66[.]242 | 20845 | UNC6671 |
| 199.127.61[.]200 | 23470 | UNC6671 |
| 209.222.98[.]200 | 23470 | UNC6671 |
| 38.190.138[.]239 | 27924 | UNC6671 |
| 198.52.166[.]197 | 395965 | UNC6671 |
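In line with the recommendation to use these indicators for hunting and correlation rather than broad blocking, the following sketch tags log records whose source IP matches a listed indicator. It assumes log records are dictionaries with an IP field such as `ClientIP` (as in Figure 2); the function names and the record shape are hypothetical, and the indicator values are transcribed from the table above.

```python
import ipaddress

# Defanged indicators from the table above, mapped to the associated cluster.
IOCS_DEFANGED = {
    "24.242.93[.]122": "UNC6661", "23.234.100[.]107": "UNC6661",
    "23.234.100[.]235": "UNC6661", "73.135.228[.]98": "UNC6661",
    "157.131.172[.]74": "UNC6661", "149.50.97[.]144": "UNC6661",
    "67.21.178[.]234": "UNC6661", "142.127.171[.]133": "UNC6671",
    "76.64.54[.]159": "UNC6671", "76.70.74[.]63": "UNC6671",
    "206.170.208[.]23": "UNC6671", "68.73.213[.]196": "UNC6671",
    "37.15.73[.]132": "UNC6671", "104.32.172[.]247": "UNC6671",
    "85.238.66[.]242": "UNC6671", "199.127.61[.]200": "UNC6671",
    "209.222.98[.]200": "UNC6671", "38.190.138[.]239": "UNC6671",
    "198.52.166[.]197": "UNC6671",
}


def refang(value: str) -> str:
    """Turn '73.135.228[.]98' back into '73.135.228.98'."""
    return value.replace("[.]", ".").strip()


# Parse once so lookups compare normalized address objects, not raw strings.
IOC_LOOKUP = {
    ipaddress.ip_address(refang(ioc)): cluster
    for ioc, cluster in IOCS_DEFANGED.items()
}


def hunt(records, ip_field="ClientIP"):
    """Return (record, cluster) pairs for records whose IP is a listed IOC."""
    hits = []
    for record in records:
        try:
            ip = ipaddress.ip_address(refang(str(record.get(ip_field))))
        except ValueError:
            continue  # missing, redacted, or malformed address
        cluster = IOC_LOOKUP.get(ip)
        if cluster:
            hits.append((record, cluster))
    return hits
```

Because many of these addresses belong to shared VPN or residential proxy infrastructure, a match is a lead for correlation with other suspicious activity from the same session, not proof of compromise on its own.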

Google Security Operations

Google Security Operations customers have access to these broad category rules and more under the Okta, Cloud Hacktool, and O365 rule packs. A walkthrough for operationalizing these findings within Google Security Operations is available in Part Three of this series. The activity discussed in this blog post is detected in Google Security Operations under the following rule names:

- Okta Admin Console Access Failure
- Okta Super or Organization Admin Access Granted
- Okta Suspicious Actions from Anonymized IP
- Okta User Assigned Administrator Role
- O365 SharePoint Bulk File Access or Download via PowerShell
- O365 SharePoint High Volume File Access Events
- O365 SharePoint High Volume File Download Events
- O365 Sharepoint Query for Proprietary or Privileged Information
- O365 Deletion of MFA Modification Notification Email
- Workspace ToogleBox Recall OAuth Application Authorized

$e.metadata.product_name = "Okta"

$e.metadata.product_event_type = /\.(add|update_|(policy.rule|zone)\.update|create|register|(de)?activate|grant|reset_all|user.session.access_admin_app)$/

(

$e.security_result.detection_fields["anonymized IP"] = "true" or

$e.extracted.fields["debugContext.debugData.tunnels"] = /\"anonymous\":true/

)