Building a safer digital future, together

As we mark Safer Internet Day 2026, we’re reflecting on a simple but enduring principle: safety must be designed into online services, not bolted on. Microsoft’s work in this space spans more than two decades, from technology solutions like PhotoDNA to our investments in responsible gaming, public-private partnerships, and user empowerment through education. This foundation guides our approach as we help individuals and families navigate a rapidly evolving landscape shaped by new technologies and new risks, and as we innovate with next-generation AI offerings. At a moment when 91% of people tell us they worry about harms introduced by AI, our commitment to responsible innovation has never been more important, especially for our youngest users.

Read on for more about our longstanding efforts to create a safer digital environment, plus key findings from our Global Online Safety Survey and new examples of our work to empower families and communities through tools, research, and educational resources—including the latest release in Minecraft Education’s CyberSafe series.

Ten years of safety research

2026 marks the tenth year of our annual Global Online Safety Survey research. For a decade, we have invested in surveying teens and adults around the world about their experiences and perceptions of life online—aiming to provide fresh insights to support our collective work. That’s 130,000+ interviews across 37 countries, with the results available on our website. Ten years later, respondents tell us that they feel more connected and more productive, but less safe online.

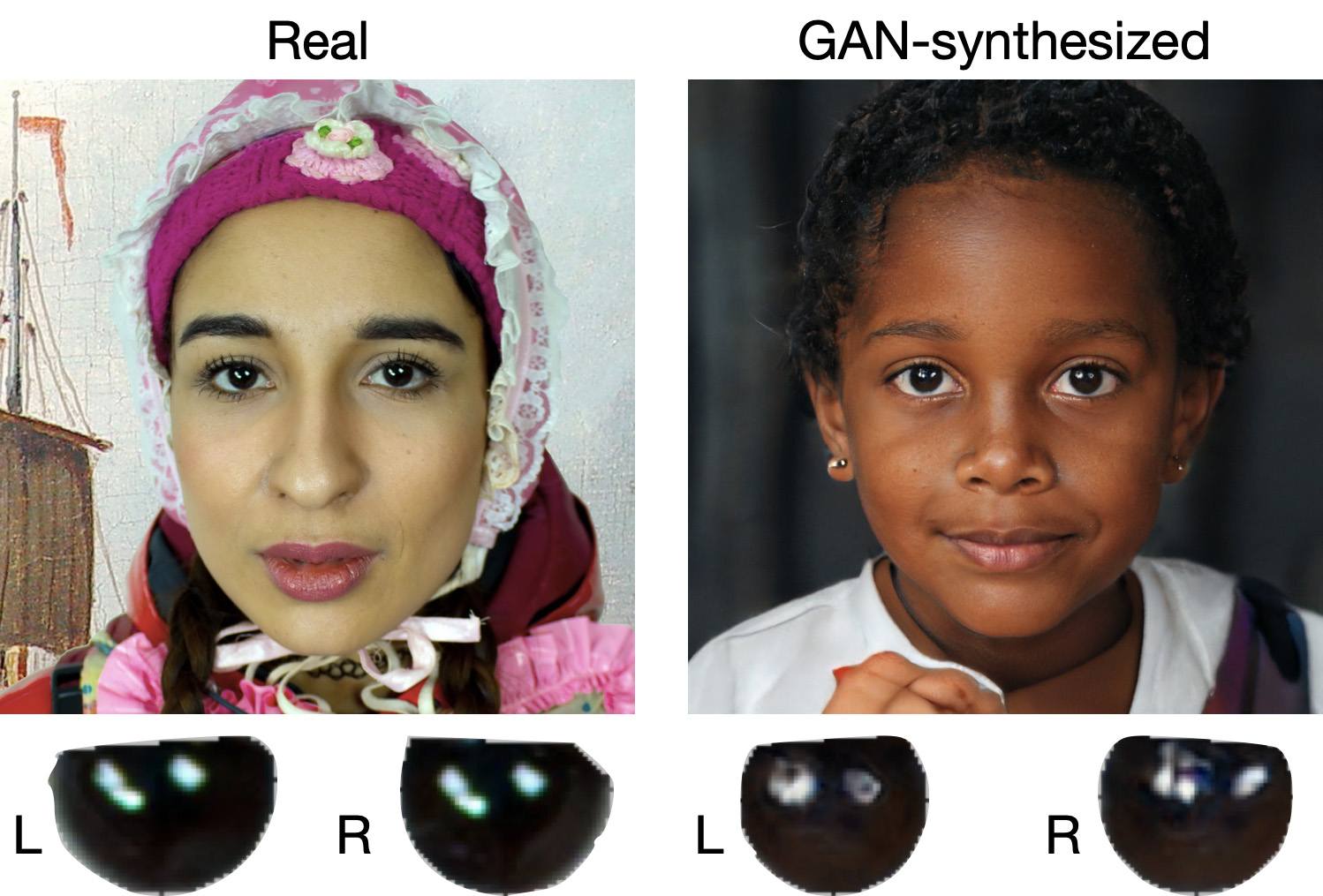

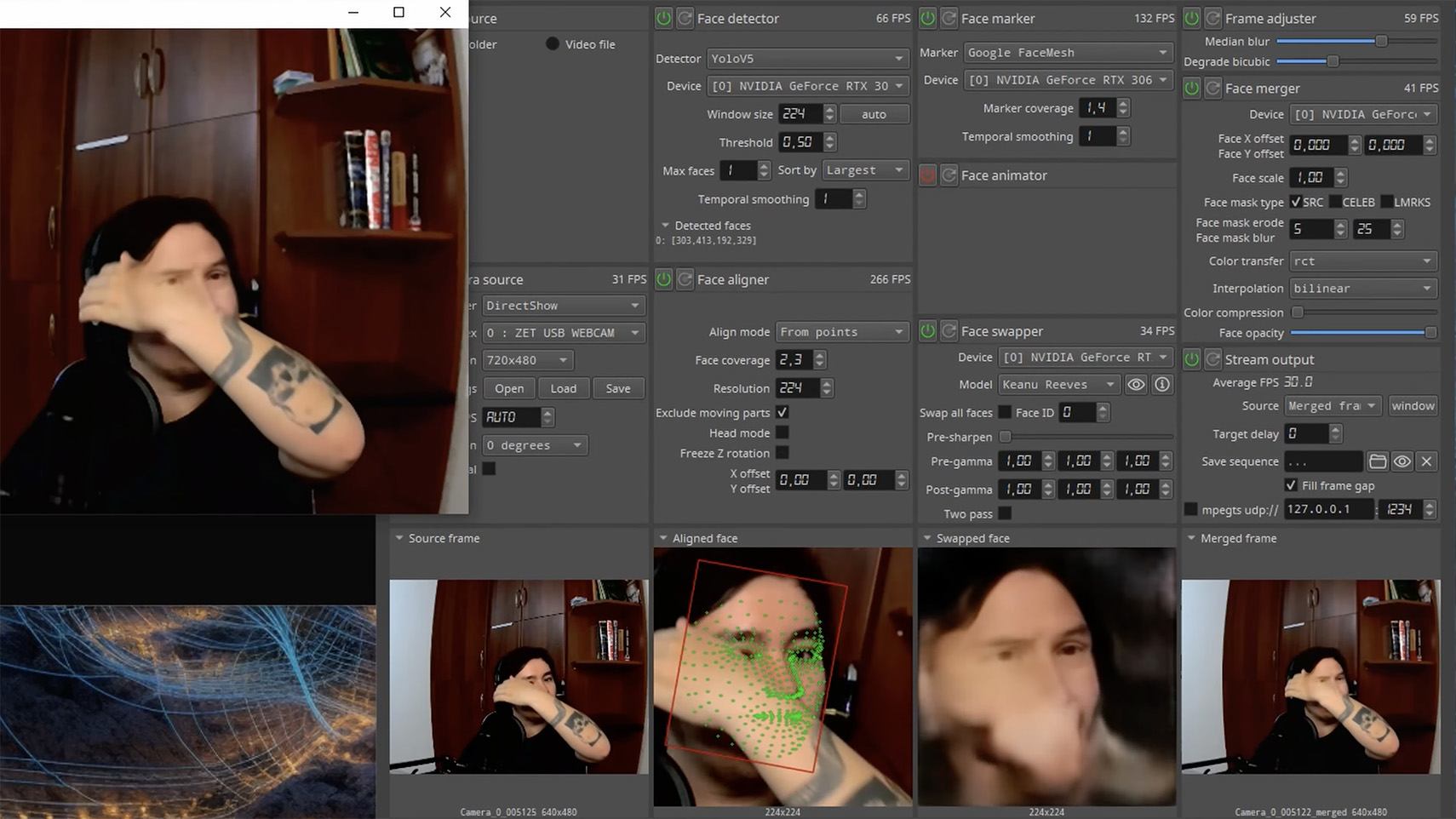

This year’s Global Online Safety Survey also highlights the complexity of the digital environment young people now inhabit. Teens’ exposure to risk rose again, with hate speech (35%), scams (29%), and cyberbullying (23%) among the most commonly experienced harms. At the same time, teens demonstrated striking resilience: 72% talked to someone after experiencing a risk, and reporting behavior increased for the second consecutive year. But worries about the misuse of AI continue, underscoring again why safety-by-design for AI is essential, not optional. Find the full results and country-level summaries here.

Year on year, the research has told a story of evolving online safety risks and their real-world impact. In 2026, the call to action is more urgent than ever: unless industry can deliver safe and age-appropriate experiences, young people risk losing access to technology. At Microsoft, across our teams from Windows to Xbox, we have sought to continuously evolve our approach and to lead the industry in advancing tailored and thoughtful safety solutions.

Evolving to meet the moment

Looking ahead, we know we need to continue to build strong guardrails to tackle acute risks and to leverage our experience while being informed by new research, new perspectives, and new technologies. The application process closed yesterday for our first AI Futures Youth Council, which will be composed of teens from across the US and EU. We’re looking forward to bringing those teens together soon for a first meeting to get their direct feedback on the role they want emerging technology to play in their lives and how we can best support their safety.

Microsoft has partnered with Cyberlite on a second youth-centered initiative to understand how teens aged 13–17 are engaging with AI companions. Through codesign workshops with students in India and Singapore, we’re capturing young people’s own perspectives on the benefits, risks, and emotional dimensions of AI use. These insights will directly inform educational resources for teens, parents, and educators. Early findings from the first workshop in December 2025 show that young people value AI as a judgment-free space while also recognizing the tradeoffs: privacy risks, overreliance, and erosion of critical thinking loom larger for them than bad advice.

We’re also thinking about how we define safety in the next era of Windows, building on the Family Safety controls that have been integrated for over a decade. As many countries have raised the local age for digital consent, more parents will have the option to enable parental controls for teens up to the age of 18, using these tools as part of a holistic approach to digital parenting. And to help parents set up and understand Family Safety, we’ve developed a short new guide.

Safety is also about transparency, empowerment, and education. At Xbox, bringing the joy of gaming to everyone means remaining transparent about the many ways we innovate so players, parents, and caregivers can feel confident that Xbox continues to be a place for positive play. You can read more about our recently published Xbox Transparency Report and the tools and resources available to players on the Xbox Wire blog.

We’re also excited to announce the latest release in Minecraft Education’s CyberSafe series: CyberSafe: Bad Connection? This series of immersive Minecraft worlds and educational resources is free and helps translate complex risks into fun learning experiences that meet young people in their favorite blocky world. Bad Connection?—the fifth in the series—reflects our commitment to evolving to meet new and challenging risks, with a focus on tackling serious risks related to online recruitment and radicalization. Learn more about how to access this new Minecraft world here.

The CyberSafe series has reached more than 80 million downloads since 2022 through a partnership between Minecraft Education, Xbox, and Microsoft, helping a generation of young players build the agency, resilience, and digital citizenship they need to navigate an increasingly online world. As part of our commitment to ensure people have the knowledge and skills they need to benefit from technology and stay safe, Microsoft Elevate is empowering educators and students with tools and guidance to build safer, more responsible digital habits, recognizing that AI is transforming how people learn, work, and connect. Our commitment to helping young people access technology safely is also why we’ve partnered with organizations like the National 4-H Council to prepare young people for an AI-powered world through AI literacy and digital safety curriculum and game-based learning with Minecraft Education.

As we look ahead, our goal is clear: build technology that is safe by design, guided by evidence, and informed through partnership. The internet has changed profoundly over the past decade, and so too have the expectations of the people who use it. Safer Internet Day is a reminder that progress requires sustained collaboration across industry, civil society, researchers, and families.

—

Global Online Safety Survey Methodology

Microsoft has published annual research since 2016 that surveys how people of varying ages use and view online technology. This latest consumer report draws on a survey of nearly 15,000 teens (13–17) and adults, conducted this past summer in 15 countries, that examined people’s attitudes and perceptions about online safety tools and interactions. Responses on online safety vary by country. Full results can be accessed here.

The post Building a safer digital future, together appeared first on Microsoft On the Issues.