Introducing the 2026 Intezer AI SOC Report for CISOs

For years, security leaders have lived with an uncomfortable truth: to date, it has simply been impossible to investigate every alert. As alert volumes exploded and teams failed to scale, SOCs, whether in-house or outsourced, normalized “acceptable risk” by deprioritizing low-severity and informational alerts.

Our latest research shows that this approach is no longer defensible.

Intezer has just released the 2026 AI SOC Report for CISOs, based on the forensic analysis of more than 25 million security alerts across live enterprise environments. The findings reveal a critical disconnect between how security teams prioritize alerts and where real threats actually originate, and the cost of that gap is far higher than most organizations realize.

Why “acceptable risk” is no longer acceptable

Across endpoint, cloud, identity, network, and phishing telemetry, Intezer found that nearly 1% of confirmed incidents originated from alerts initially labeled as low-severity or informational. On endpoints, that figure climbed to nearly 2%.

At enterprise scale, that percentage is not noise.

For a typical organization generating roughly 450,000 alerts per year, this translates to ~50 real threats annually, about one per week, never investigated by a SOC or MDR team. These are not theoretical risks. They are real compromises hiding in plain sight, dismissed not because they were benign, but because teams lacked the capacity to look.
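The "about one per week" figure follows directly from the two numbers quoted above. A minimal sketch of that arithmetic, using only the approximate figures from the text (the variable names are illustrative):

```python
WEEKS_PER_YEAR = 52

alerts_per_year = 450_000      # "roughly 450,000 alerts per year" (from the text)
missed_threats_per_year = 50   # "~50 real threats annually" (from the text)

# Spread the missed threats evenly across the year.
missed_per_week = missed_threats_per_year / WEEKS_PER_YEAR

# How many alerts pass by, on average, for each missed threat.
alerts_per_missed_threat = alerts_per_year / missed_threats_per_year

print(f"{missed_per_week:.2f} missed threats per week")          # 0.96
print(f"1 missed threat per {alerts_per_missed_threat:,.0f} alerts")  # 9,000
```

Put differently, one uninvestigated real threat sits in roughly every 9,000 alerts, which is why it is easy to overlook and still adds up over a year.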

What the data revealed across the attack surface

Because Intezer AI SOC investigates 100% of alerts using forensic-grade analysis, the report exposes how attackers actually operate once you remove triage bias from the equation.

Endpoint security is more fragile than reported

More than half of endpoint alerts were not automatically mitigated by endpoint protection tools. Of those, nearly 9% were confirmed malicious. Even more concerning, 1.6% of endpoints undergoing live forensic scans were still actively compromised despite being reported as “mitigated” by EDR tools.
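To make the compounding concrete, here is a rough sketch that treats "more than half" as 50% and "nearly 9%" as 9%. Both roundings, and the batch size of 10,000 endpoint alerts, are assumptions for illustration only and are not figures from the report.

```python
# Assumed roundings of the figures quoted above (not exact report values).
UNMITIGATED_SHARE = 0.50        # "more than half" of endpoint alerts
MALICIOUS_GIVEN_UNMITIGATED = 0.09  # "nearly 9%" of those

endpoint_alerts = 10_000        # hypothetical batch size

# Alerts the endpoint tool did not automatically mitigate.
unmitigated = round(endpoint_alerts * UNMITIGATED_SHARE)

# Of those, alerts later confirmed malicious.
malicious_unmitigated = round(unmitigated * MALICIOUS_GIVEN_UNMITIGATED)

# Share of all endpoint alerts that are both unmitigated and malicious.
overall_share = malicious_unmitigated / endpoint_alerts

print(unmitigated)                 # 5000
print(malicious_unmitigated)       # 450
print(f"{overall_share:.1%}")      # 4.5%
```

Under these assumptions, roughly 4.5% of all endpoint alerts would be confirmed malicious and left unmitigated by the endpoint tool.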

See the full endpoint threat data → Download the 2026 AI SOC Report

Low-severity does not mean low-risk

Within endpoint alerts alone, 1.9% of low-severity and informational alerts were real incidents, the exact alerts most SOCs never review.

Attackers favor stealth over noise

Cloud telemetry was dominated by defense evasion and persistence techniques, reflecting a shift toward long-term access, token abuse, and misuse of legitimate services rather than overt exploitation.

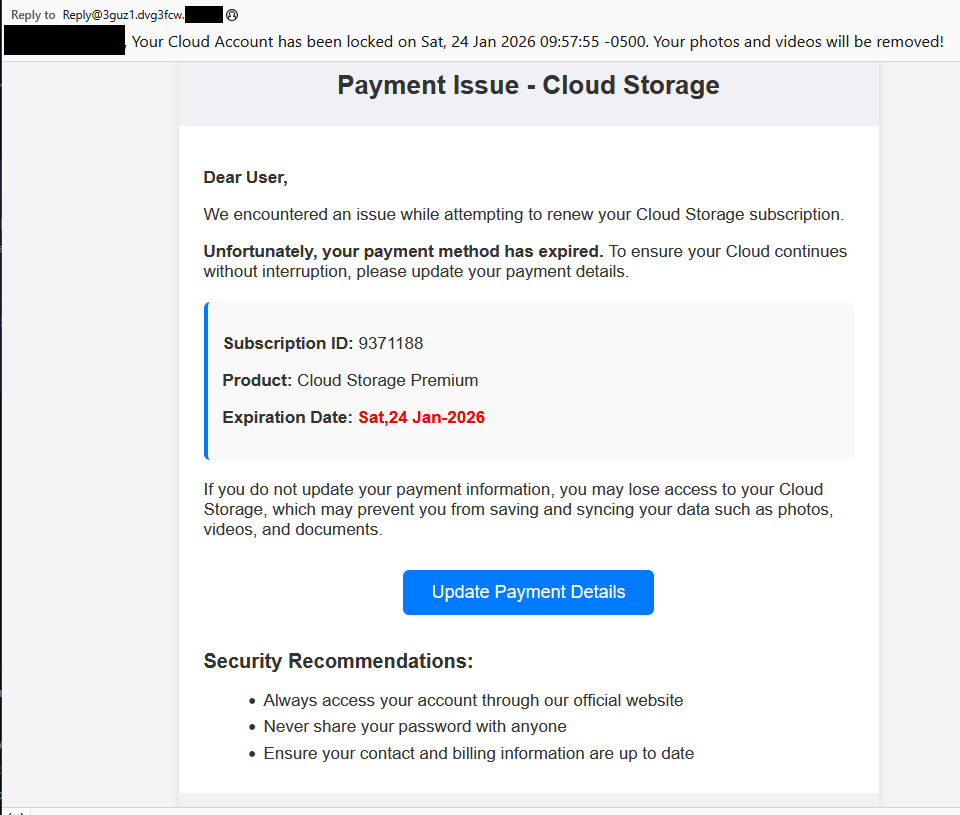

Phishing has moved into trusted platforms and browsers

Fewer than 6% of malicious phishing emails contained attachments. Most relied on links, language, and abuse of legitimate services such as cloud file sharing, code sandboxes, and CAPTCHA mechanisms, where traditional controls have limited visibility.

Cloud misconfigurations persist as silent risk multipliers

Most cloud posture findings stemmed from legacy or default configurations, especially in Amazon S3, including missing encryption, weak access controls, and lack of logging—issues often classified as “low severity,” yet repeatedly exploited once attackers gain a foothold.

To read the full report and all the findings, download the CISO's guide to AI SOC 2026.

Why traditional SOCs fail: capacity, fragmentation and judging alerts by their severity

Modern SOC failures are rarely the result of a single broken tool or negligent team. They are the outcome of structural tradeoffs that every traditional SOC—internal or MDR—has been forced to make.

Capacity is the first constraint.

Human analysts do not scale linearly with alert volume. As telemetry expands across endpoint, cloud, identity, network, and SaaS, SOCs hit a hard ceiling. The only way to cope is aggressive triage: close most alerts automatically, investigate only what looks “important,” and hope severity labels align with reality. The 2026 AI SOC Report shows that this assumption is false at scale.

Tool fragmentation compounds the problem.

Most SOC stacks are collections of siloed detections (EDR, SIEM, identity, cloud posture, email), each optimized for a narrow signal. Severity is assigned locally, without cross-surface context or forensic validation. As a result, alerts are scored based on abstract rules, not evidence of compromise. When SOCs trust these labels blindly, they inherit the tools’ blind spots.

Process tradeoffs lock risk in place.

Once triage rules are defined, they become institutionalized. Low-severity alerts are ignored by design. MDR providers codify this into SLAs. Internal SOCs bake it into runbooks. Crucially, there is no closed-loop feedback: missed threats do not automatically improve detections, because they were never investigated in the first place.

The outcome is not an occasional failure. It is systematic, repeatable risk, embedded directly into how SOCs operate.

Real-world examples of missed threats hiding in plain sight

The data in the 2026 AI SOC Report makes clear that missed threats are not exotic edge cases. They are ordinary attacks progressing quietly through environments because no one looked.

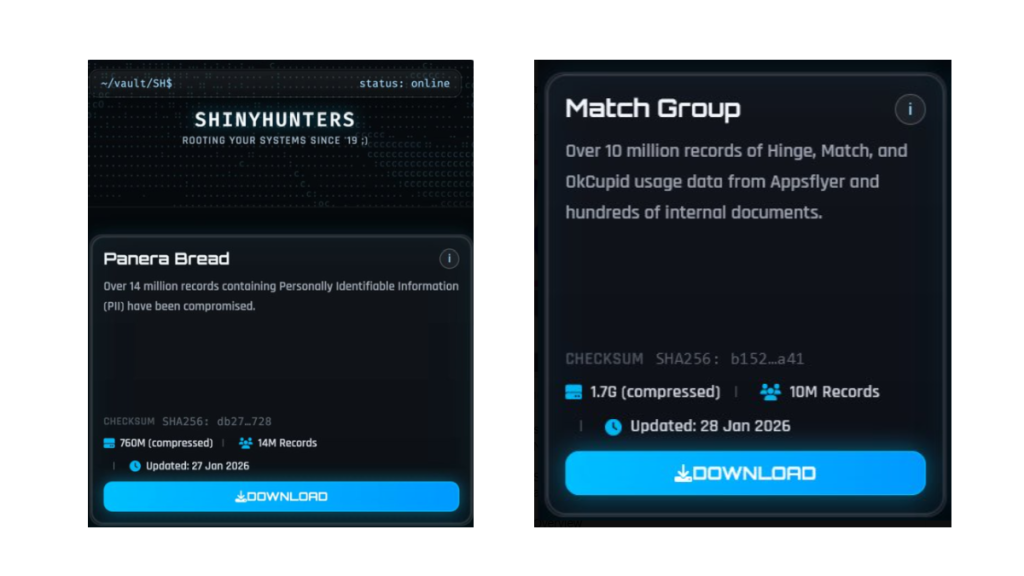

Endpoints marked “mitigated” but still compromised

In over 1.6% of live forensic endpoint scans, Intezer found active malicious code running in memory even though the EDR had already reported the threat as resolved. These cases included stealers, RATs, and post-exploitation frameworks, often originating from low-severity alerts that never triggered deeper inspection. Without memory-level forensics, these compromises would have remained invisible.

Phishing hosted on trusted platforms

Attackers increasingly host phishing pages on legitimate developer platforms like Vercel and CodePen, or abuse trusted services such as OneDrive and PayPal. The parent domains appear reputable, so alerts are downgraded or ignored. Yet behind them are live credential-harvesting pages that bypass email gateways and browser-based defenses alike.

Cloud misconfigurations as delayed breach accelerators

Many cloud posture findings, such as unencrypted S3 buckets, missing access logs, and permissive cross-account policies, rarely trigger action. But once an attacker gains any foothold, these long-standing misconfigurations dramatically accelerate lateral movement, persistence, and data exposure.

In every case, the failure was not detection. The signal existed. The failure was investigation.

How attackers deliberately exploit SOC blind spots

Attackers understand SOC economics better than most defenders.

They know which alerts generate fatigue.

They know which detections are noisy.

They know which categories are deprioritized by default.

As a result, modern attackers design their campaigns to blend into the backlog, not trigger alarms.

Stealth over speed

Cloud intrusions favor defense evasion, persistence, and token abuse over loud exploitation. These behaviors generate alerts, but rarely high-severity ones. The report shows cloud telemetry dominated by exactly these tactics, indicating attackers are optimizing for long-term access rather than immediate impact.

Living off trusted infrastructure

Phishing campaigns increasingly abuse legitimate brands, file-sharing services, CAPTCHA frameworks, and developer platforms. These environments inherit trust by default, allowing attackers to operate under severity thresholds that SOCs routinely ignore.

Multi-stage loaders and memory-only execution

On endpoints, attackers rely on layered loaders, in-memory payloads, and obfuscation techniques that evade static detections. Initial alerts may look benign or incomplete. Without forensic follow-through, SOCs miss the actual compromise entirely.

Attackers are not just evading detection systems; they are exploiting SOC decision-making models.

What this means for your SOC operations

For CISOs and SOC leaders, the implication is stark:

Risk is no longer defined by what you detect, but by what you choose not to investigate.

If your SOC:

- Ignores low-severity alerts by default

- Relies on severity labels without forensic validation

- Limits investigations based on human capacity

- Operates without a feedback loop between outcomes and detections

Then missed threats are not anomalies; they are guaranteed.
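The difference between severity-gated triage and investigating everything can be illustrated with a small sketch. The alert records, severity names, and function names below are hypothetical and chosen only for illustration; they do not describe how Intezer AI SOC or any specific product works.

```python
from dataclasses import dataclass

# Hypothetical severity scale, lowest to highest.
SEVERITY_RANK = {"informational": 0, "low": 1, "medium": 2, "high": 3, "critical": 4}


@dataclass(frozen=True)
class Alert:
    alert_id: str
    severity: str
    is_malicious: bool  # ground truth, only knowable after investigation


def severity_triage(alerts, min_severity="medium"):
    """Investigate only alerts at or above a severity threshold."""
    threshold = SEVERITY_RANK[min_severity]
    return [a for a in alerts if SEVERITY_RANK[a.severity] >= threshold]


def investigate_all(alerts):
    """Investigate every alert regardless of its severity label."""
    return list(alerts)


def missed_threats(alerts, investigated):
    """Return malicious alerts that were never investigated."""
    seen = {a.alert_id for a in investigated}
    return [a for a in alerts if a.is_malicious and a.alert_id not in seen]


# Made-up sample data: two real threats carry low or informational labels.
alerts = [
    Alert("a1", "critical", True),
    Alert("a2", "high", False),
    Alert("a3", "low", True),
    Alert("a4", "informational", False),
    Alert("a5", "informational", True),
    Alert("a6", "medium", False),
]

missed_by_triage = missed_threats(alerts, severity_triage(alerts))
missed_by_full = missed_threats(alerts, investigate_all(alerts))

print([a.alert_id for a in missed_by_triage])  # ['a3', 'a5']
print(len(missed_by_full))                     # 0
```

In this toy data set the threshold policy never looks at the two mislabeled threats, so no amount of analyst skill downstream can recover them. That is the structural gap the checklist above describes.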

The organizations that will reduce risk in 2026 are not adding more dashboards or rewriting triage rules. They are adopting operating models where investigation is no longer a scarce resource.

This is why AI-driven, forensic-grade SOC platforms fundamentally change the equation. When every alert is investigated:

- Severity becomes evidence-based, not assumed

- Detection quality improves through real-world validation

- Attackers lose the ability to hide in “acceptable risk”

- SOC teams regain control without scaling headcount

This is the shift behind the Intezer AI SOC model and why the concept of acceptable risk must be redefined for the modern threat landscape.

This all changes when you can investigate everything

The data in the 2026 AI SOC Report points to a different reality, one where AI-driven forensic analysis removes investigation capacity as a constraint.

When every alert is investigated:

- “Low severity” stops being a proxy for “safe”

- Detection quality improves through real-world validation

- Missed threats drop from dozens per year to near zero

- Escalations fall below 2%, without sacrificing coverage

- Risk tolerance is defined by evidence, not exhaustion

This is the operating model behind Intezer AI SOC, powered by ForensicAI™, and it is why the definition of acceptable risk must be reset.

Download the report and join the discussion

The 2026 AI SOC Report for CISOs is grounded in:

- 25 million alerts analyzed

- 10 million monitored endpoints and identities

- 82,000 forensic endpoint investigations, including live memory scans

- Telemetry from 7 million IP addresses, 3 million domains and URLs, and over 550,000 phishing emails

All data was aggregated and anonymized across Intezer’s global enterprise customer base.

👉 Download the full report to explore the findings in detail, and

👉 Join Intezer’s research team on Wednesday, February 4th at 12 p.m. ET for a live webinar breaking down what this data means for SOC leaders and CISOs.

Because in 2026, the biggest risk is no longer what you detect; it’s what you choose not to investigate.

The post Alert fatigue is costing you: Why your SOC misses 1% of real threats appeared first on Intezer.