AI-powered sextortion: a new threat to privacy | Kaspersky official blog

In 2025, cybersecurity researchers discovered several open databases belonging to various AI image-generation tools. This fact alone makes you wonder just how much AI startups care about the privacy and security of their users’ data. But the nature of the content in these databases is far more alarming.

A large number of generated pictures in these databases were images of women in lingerie or fully nude. Some were clearly created from children’s photos, or intended to make adult women appear younger (and undressed). Finally, the most disturbing part: some pornographic images were generated from completely innocent photos of real people — likely taken from social media.

In this post, we’re talking about what sextortion is, and why AI tools mean anyone can become a victim. We detail the contents of these open databases, and give you advice on how to avoid becoming a victim of AI-era sextortion.

What is sextortion?

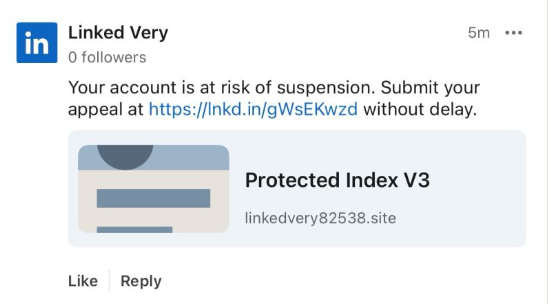

Online sexual extortion has become so common it’s earned its own global name: sextortion (a portmanteau of sex and extortion). We’ve already detailed its various types in our post, Fifty shades of sextortion. To recap, this form of blackmail involves threatening to publish intimate images or videos to coerce the victim into taking certain actions, or to extort money from them.

Previously, victims of sextortion were typically adult industry workers, or individuals who’d shared intimate content with an untrustworthy person.

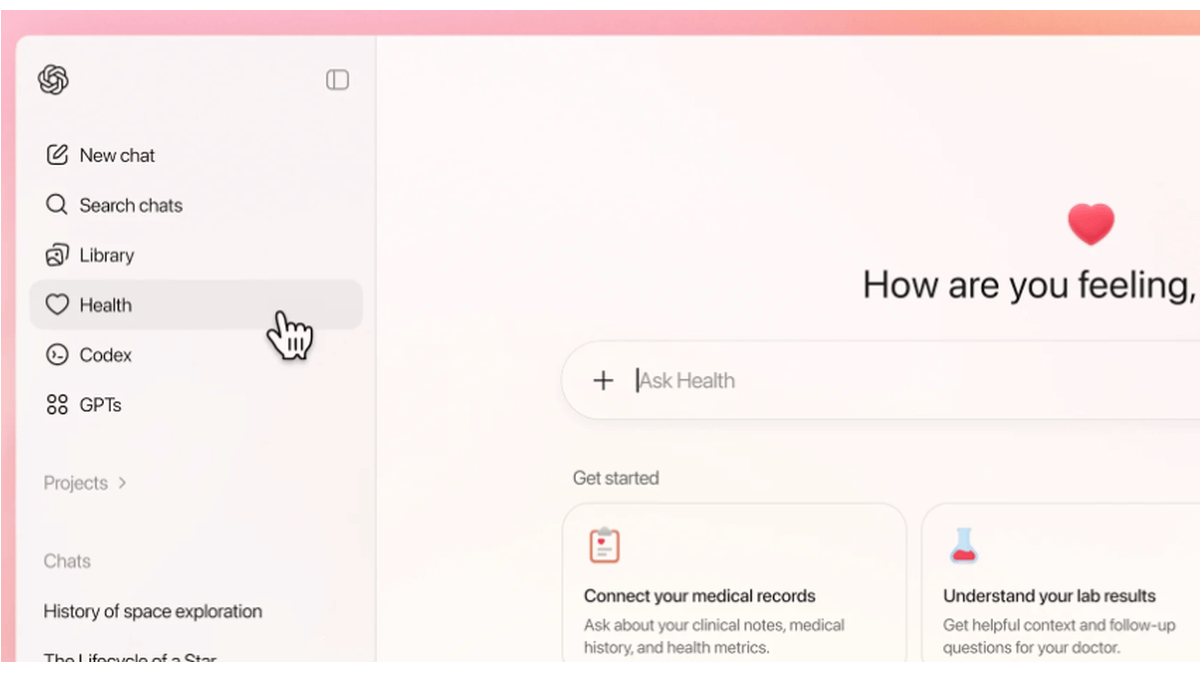

However, the rapid advancement of artificial intelligence, particularly text-to-image technology, has fundamentally changed the game. Now, literally anyone who’s posted their most innocent photos publicly can become a victim of sextortion. This is because generative AI makes it possible to quickly, easily, and convincingly undress people in any digital image, or add a generated nude body to someone’s head in a matter of seconds.

Of course, this kind of fakery was possible before AI, but it required long hours of meticulous Photoshop work. Now, all you need is to describe the desired result in words.

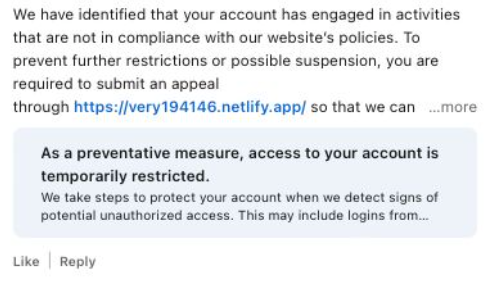

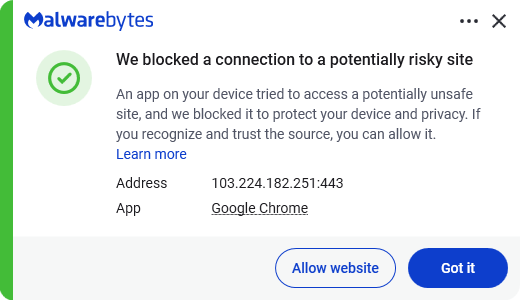

To make matters worse, many generative AI services don’t bother much with protecting the content they’ve been used to create. As mentioned earlier, last year saw researchers discover at least three publicly accessible databases belonging to these services. This means the generated nudes within them were available not just to the user who’d created them, but to anyone on the internet.

How the AI image database leak was discovered

In October 2025, cybersecurity researcher Jeremiah Fowler uncovered an open database containing over a million AI-generated images and videos. According to the researcher, the overwhelming majority of this content was pornographic in nature. The database wasn’t encrypted or password-protected — meaning any internet user could access it.

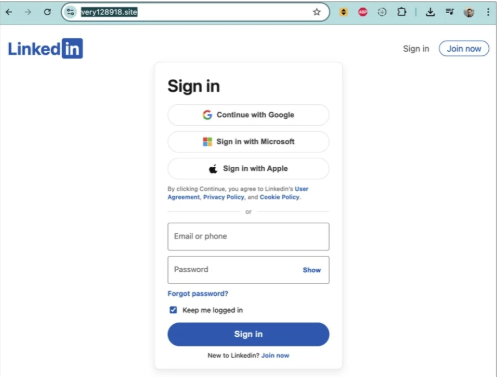

The database’s name and watermarks on some images led Fowler to believe its source was the U.S.-based company SocialBook, which offers services for influencers and digital marketing services. The company’s website also provides access to tools for generating images and content using AI.

However, further analysis revealed that SocialBook itself wasn’t directly generating this content. Links within the service’s interface led to third-party products — the AI services MagicEdit and DreamPal — which were the tools used to create the images. These tools allowed users to generate pictures from text descriptions, edit uploaded photos, and perform various visual manipulations, including creating explicit content and face-swapping.

The leak was linked to these specific tools, and the database contained the product of their work, including AI-generated and AI-edited images. A portion of the images led the researcher to suspect they’d been uploaded to the AI as references for creating provocative imagery.

Fowler states that roughly 10,000 photos were being added to the database every single day. SocialBook denies any connection to the database. After the researcher informed the company of the leak, several pages on the SocialBook website that had previously mentioned MagicEdit and DreamPal became inaccessible and began returning errors.

Which services were the source of the leak?

Both services — MagicEdit and DreamPal — were initially marketed as tools for interactive, user-driven visual experimentation with images and art characters. Unfortunately, a significant portion of these capabilities were directly linked to creating sexualized content.

For example, MagicEdit offered a tool for AI-powered virtual clothing changes, as well as a set of styles that made images of women more revealing after processing — such as replacing everyday clothes with swimwear or lingerie. Its promotional materials promised to turn an ordinary look into a sexy one in seconds.

DreamPal, for its part, was initially positioned as an AI-powered role-playing chat, and was even more explicit about its adult-oriented positioning. The site offered to create an ideal AI girlfriend, with certain pages directly referencing erotic content. The FAQ also noted that filters for explicit content in chats were disabled so as not to limit users’ most intimate fantasies.

Both services have suspended operations. At the time of writing, the DreamPal website returned an error, while MagicEdit seemed available again. Their apps were removed from both the App Store and Google Play.

Jeremiah Fowler says earlier in 2025, he discovered two more open databases containing AI-generated images. One belonged to the South Korean site GenNomis, and contained 95,000 entries — a substantial portion of which being images of “undressed” people. Among other things, the database included images with child versions of celebrities: American singers Ariana Grande and Beyoncé, and reality TV star Kim Kardashian.

How to avoid becoming a victim

In light of incidents like these, it’s clear that the risks associated with sextortion are no longer confined to private messaging or the exchange of intimate content. In the era of generative AI, even ordinary photos, when posted publicly, can be used to create compromising content.

This problem is especially relevant for women, but men shouldn’t get too comfortable either: the popular blackmail scheme of “I hacked your computer and used the webcam to make videos of you browsing adult sites” could reach a whole new level of persuasion thanks to AI tools for generating photos and videos.

Therefore, protecting your privacy on social media and controlling what data about you is publicly available become key measures for safeguarding both your reputation and peace of mind. To prevent your photos from being used to create questionable AI-generated content, we recommend making all your social media profiles as private as possible — after all, they could be the source of images for AI-generated nudes.

We’ve already published multiple detailed guides on how to reduce your digital footprint online or even remove your data from the internet, how to stop data brokers from compiling dossiers on you, and protect yourself from intimate image abuse.

Additionally, we have a dedicated service, Privacy Checker — perfect for anyone who wants a quick but systematic approach to privacy settings everywhere possible. It compiles step-by-step guides for securing accounts on social media and online services across all major platforms.

And to ensure the safety and privacy of your child’s data, Kaspersky Safe Kids can help: it allows parents to monitor which social media their child spends time on. From there, you can help them adjust privacy settings on their accounts so their posted photos aren’t used to create inappropriate content. Explore our guide to children’s online safety together, and if your child dreams of becoming a popular blogger, discuss our step-by-step cybersecurity guide for wannabe bloggers with them.