How to protect yourself from deepfake scammers and save your money | Kaspersky official blog

Technologies for creating fake video and voice messages are accessible to anyone these days, and scammers are busy mastering the art of deepfakes. No one is immune to the threat — modern neural networks can clone a person’s voice from just three to five seconds of audio, and create highly convincing videos from a couple of photos. We’ve previously discussed how to distinguish a real photo or video from a fake and trace its origin to when it was taken or generated. Now let’s take a look at how attackers create and use deepfakes in real time, how to spot a fake without forensic tools, and how to protect yourself and loved ones from “clone attacks”.

How deepfakes are made

Scammers gather source material for deepfakes from open sources: webinars, public videos on social networks and channels, and online speeches. Sometimes they simply call identity theft targets and keep them on the line for as long as possible to collect data for maximum-quality voice cloning. And hacking the messaging account of someone who loves voice and video messages is the ultimate jackpot for scammers. With access to video recordings and voice messages, they can generate realistic fakes that 95% of folks are unable to tell apart from real messages from friends or colleagues.

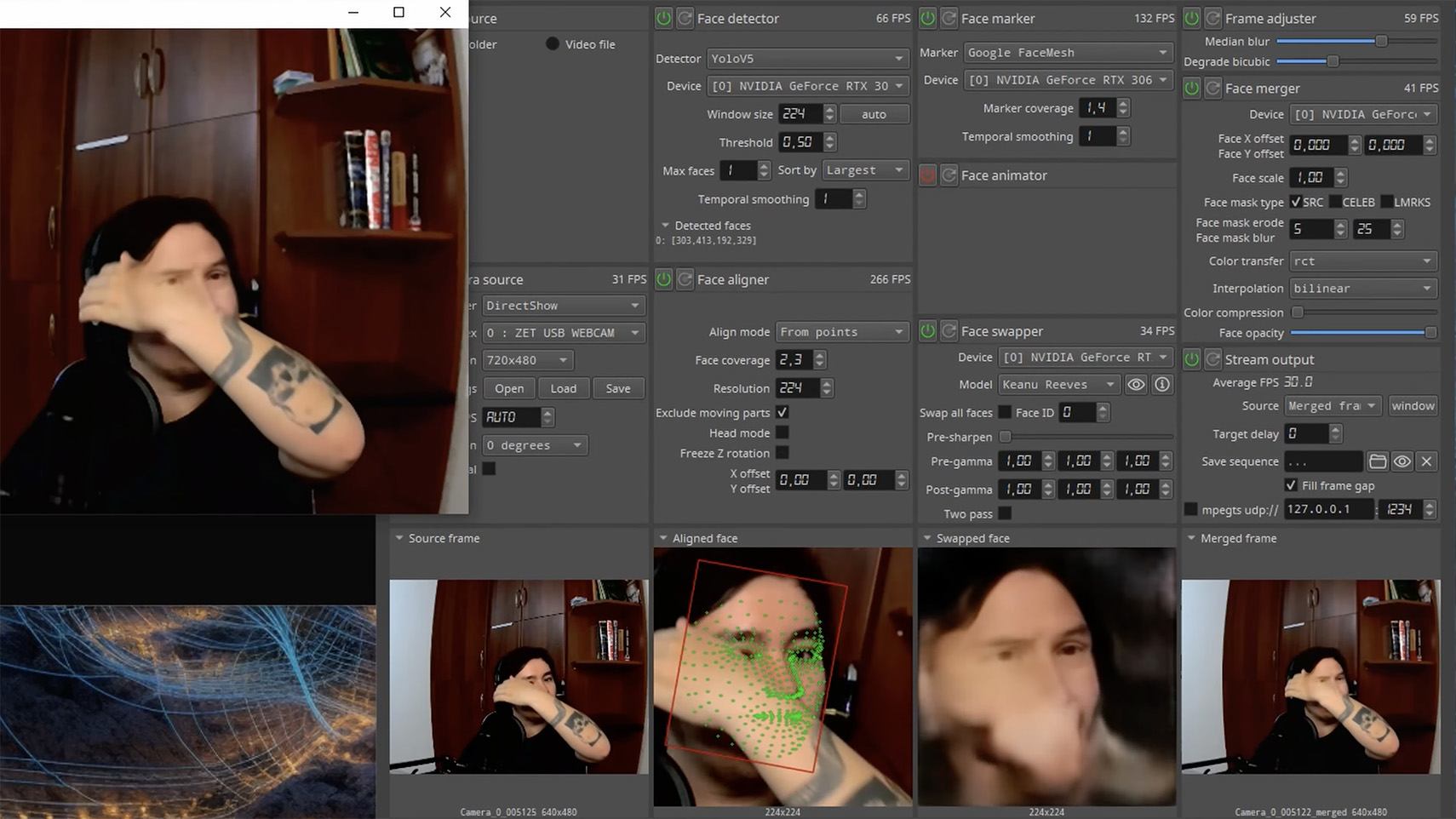

The tools for creating deepfakes vary widely, from simple Telegram bots to professional generators like HeyGen and ElevenLabs. Scammers use deepfakes together with social engineering: for example, they might first simulate a messenger app call that appears to drop out constantly, then send a pre-generated video message of fairly low quality, blaming it on the supposedly poor connection.

In most cases, the message is about some kind of emergency in which the deepfake victim requires immediate help. Naturally the “friend in need” is desperate for money, but, as luck would have it, they’ve no access to an ATM, or have lost their wallet, and the bad connection rules out an online transfer. The solution is, of course, to send the money not directly to the “friend”, but to a fake account, phone number, or cryptowallet.

Such scams often involve pre-generated videos, but of late real-time deepfake streaming services have come into play. Among other things, these allow users to substitute their own face in a chat-roulette or video call.

How to recognize a deepfake

If you see a familiar face on the screen together with a recognizable voice but are asked unusual questions, chances are it’s a deepfake scam. Fortunately, there are certain visual, auditory, and behavioral signs that can help even non-techies to spot a fake.

Visual signs of a deepfake

Lighting and shadow issues. Deepfakes often ignore the physics of light: the direction of shadows on the face and in the background may not match, and glare on the skin may look unnatural or be missing altogether. Or the person in the video may be half-turned toward a window while their face is lit as if by studio lighting. The effect will be familiar to anyone who takes part in video conferences, where substituted background images can look extremely unnatural.

Blurred or floating facial features. Pay attention to the hairline: deepfakes often show blurring, flickering, or unnatural color transitions along this area. These artifacts are caused by flaws in the algorithm for superimposing the cloned face onto the original.

Unnaturally blinking or “dead” eyes. A person blinks on average 10 to 20 times per minute. Some deepfakes blink too rarely, others too often. Eyelid movements can be too abrupt, and sometimes blinking is out of sync, with one eye not matching the other. “Glassy” or “dead-eye” stares are also characteristic of deepfakes. And sometimes a pupil (usually just the one) may twitch randomly due to a neural network hallucination.
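The blink-rate check above comes down to simple arithmetic, which can be sketched in a few lines of Python. This is only an illustration: the function names are hypothetical, the blink timestamps are assumed to be counted by hand while watching a clip, and the 10 to 20 blinks-per-minute range is the average quoted above rather than a strict limit.

```python
def blink_rate_per_minute(blink_times_s, clip_length_s):
    """Return blinks per minute, given blink timestamps (in seconds) for a clip."""
    if clip_length_s <= 0:
        raise ValueError("clip_length_s must be positive")
    return len(blink_times_s) * 60.0 / clip_length_s


def blink_rate_looks_unusual(blink_times_s, clip_length_s, low=10.0, high=20.0):
    """Return True if the blink rate falls outside the assumed typical range.

    The default bounds are the average human range quoted in the text;
    they are an assumption, not a detection threshold from any real tool.
    """
    rate = blink_rate_per_minute(blink_times_s, clip_length_s)
    return rate < low or rate > high


# Three blinks noted in a 30-second clip: 6 per minute, below the typical range.
sparse_blinks = [4.0, 15.5, 27.0]
print(blink_rate_per_minute(sparse_blinks, 30.0))
print(blink_rate_looks_unusual(sparse_blinks, 30.0))
```

A rate outside the range is a hint, not proof: real people also blink less when concentrating and more when tired, so treat it as one sign among the others listed here.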

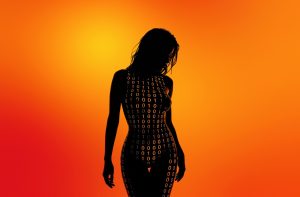

When analyzing a static image such as a photograph, it’s also a good idea to zoom in on the eyes and compare the reflections on the two irises: in real photos they’ll match closely, while in deepfakes they often don’t.

Compare the reflections and glare in the eyes in the real photo (left) and the generated image (right): the specular highlights in the deepfake look similar at first glance, but they don’t match.

Lip-syncing issues. Even top-quality deepfakes trip up when it comes to synchronizing speech with lip movements. A delay of just a hundred milliseconds is noticeable to the naked eye. It’s often possible to observe an irregular lip shape when pronouncing the sounds m, f, or t. All of these are telltale signs of an AI-modeled face.

Static or blurred background. In generated videos, the background often looks unrealistic: it might be too blurry; its elements may not interact with the on-screen face; or sometimes the image behind the person remains motionless even when the camera moves.

Odd facial expressions. Deepfakes do a poor job of imitating emotion: facial expressions may not change in line with the conversation; smiles look frozen, and the fine wrinkles and folds that appear in real faces when expressing emotion are absent — the fake looks botoxed.

Auditory signs of a deepfake

Early AI generators modeled speech from small, monotonous phonemes, and when the intonation changed, there was an audible shift in pitch, making it easy to recognize a synthesized voice. Although today’s technology has advanced far beyond this, there are other signs that still give away generated voices.

Wooden or electronic tone. If the voice sounds unusually flat, without natural intonation variations, or there’s a vaguely electronic quality to it, there’s a high probability you’re talking to a deepfake. Real speech contains many variations in tone and natural imperfections.

No breathing sounds. Humans take micropauses and breathe in between phrases — especially in long sentences, not to mention small coughs and sniffs. Synthetic voices often lack these nuances, or place them unnaturally.

Robotic speech or sudden breaks. The voice may abruptly cut off, words may sound “glued” together, and the stress and intonation may not be what you’re used to hearing from your friend or colleague.

Lack of… shibboleths in speech. Pay attention to speech patterns (such as accent or phrases) that are typical of the person in real life but are poorly imitated (if at all) by the deepfake.

To mask visual and auditory artifacts, scammers often simulate poor connectivity by sending a noisy video or audio message. A low-quality video stream or media file is the first red flag telling you to verify who is really at the other end.

Behavioral signs of a deepfake

Analyzing the movements and behavioral nuances of the caller is perhaps still the most reliable way to spot a deepfake in real time.

Can’t turn their head. During the video call, ask the person to turn their head so they’re looking completely to the side. Most deepfakes are created using portrait photos and videos, so a sideways turn will cause the image to float, distort, or even break up. AI startup Metaphysic.ai — creators of viral Tom Cruise deepfakes — confirm that head rotation is the most reliable deepfake test at present.

Unnatural gestures. Ask the on-screen person to perform a spontaneous action: wave their hand in front of their face; scratch their nose; take a sip from a cup; cover their eyes with their hands; or point to something in the room. Deepfakes have trouble handling impromptu gestures — hands may pass ghostlike through objects or the face, or fingers may appear distorted, or move unnaturally.

Ask a deepfake to wave a hand in front of its face, and the hand may appear to dissolve.

Screen sharing. If the conversation is work-related, ask your chat partner to share their screen and show an on-topic file or document. Without access to your real-life colleague’s device, this will be virtually impossible to fake.

Can’t answer tricky questions. Ask something that only the genuine article could know, for example: “What meeting do we have at work tomorrow?”, “Where did I get this scar?”, “Where did we go on vacation two years ago?” A scammer won’t be able to answer questions if the answers aren’t present in the hacked chats or publicly available sources.

Don’t know the codeword. Agree with friends and family on a secret word or phrase for emergency use to confirm identity. If a panicked relative asks you to urgently transfer money, ask them for the family codeword. A flesh-and-blood relation will reel it off; a deepfake-armed fraudster won’t.

What to do if you encounter a deepfake

If you’ve even the slightest suspicion that what you’re talking to isn’t a real human but a deepfake, follow our tips below.

- End the chat and call back. The surest check is to end the video call and connect with the person through another channel: call or text their regular phone, or message them in another app. If your opposite number is unhappy about this, pretend the connection dropped out.

- Don’t be pressured into sending money. A favorite trick is to create a false sense of urgency. “Mom, I need money right now, I’ve had an accident”; “I don’t have time to explain”; “If you don’t send it in ten minutes, I’m done for!” A real person usually won’t mind waiting a few extra minutes while you double-check the information.

- Tell your friend or colleague they’ve been hacked. A call or message that claims to be from one of your contacts but arrives from a new number or an unfamiliar account is a red flag in itself: attackers often create fake profiles or use temporary numbers. But if you get a deepfake call from an existing contact in a messenger app or your address book, inform them immediately that their account has been hacked, and do it via another communication channel. This will help them take steps to regain access to their account (see our detailed instructions for Telegram and WhatsApp), and to minimize potential damage to other contacts, for example, by posting about the hack.

How to stop your own face getting deepfaked

- Restrict public access to your photos and videos. Hide your social media profiles from strangers, limit your friends list to real people, and delete videos with your voice and face from public access.

- Don’t give suspicious apps access to your smartphone camera or microphone. Scammers can collect biometric data through fake apps disguised as games or utilities. To stop such programs from getting on your devices, use a proven all-in-one security solution.

- Use passkeys, unique passwords, and two-factor authentication (2FA) where possible. Even if scammers do create a deepfake with your face, 2FA will make it much harder to access your accounts and use them to send deepfakes. A cross-platform password manager with support for passkeys and 2FA codes can help out here.

- Teach friends and family how to spot deepfakes. Elderly relatives, young children, and anyone new to technology are the most vulnerable targets. Educate them about scams, show them examples of deepfakes, and practice using a family codeword.

- Use content analyzers. While there’s no silver bullet against deepfakes, there are services that can identify AI-generated content with high accuracy. For graphics, these include Undetectable AI and Illuminarty; for video — Deepware; and for all types of deepfakes — Sensity AI and Hive Moderation.

- Keep a cool head. Scammers apply psychological pressure to hurry victims into acting rashly. Remember the golden rule: if a call, video, or voice message from anyone you know rouses even the slightest suspicion, end the conversation and make contact through another channel.
