What a browser-in-the-browser attack is, and how to spot a fake login window | Kaspersky official blog

In 2022, we took a deep dive into an attack method called browser-in-the-browser, originally developed by the cybersecurity researcher known as mr.d0x. Back then, there were no known examples of the technique being used in the wild. Four years on, browser-in-the-browser attacks have moved from theory to practice: attackers are now using them in the field. In this post, we revisit what exactly a browser-in-the-browser attack is, show how hackers are deploying it, and, most importantly, explain how to keep yourself from becoming its next victim.

What is a browser-in-the-browser (BitB) attack?

For starters, let’s refresh our memories on what mr.d0x actually cooked up. The core of the attack stems from his observation of just how advanced modern web development tools — HTML, CSS, JavaScript, and the like — have become. It’s this realization that inspired the researcher to come up with a particularly elaborate phishing model.

A browser-in-the-browser attack is a sophisticated form of phishing that uses ordinary web design to imitate the login windows of well-known services such as Microsoft, Google, Facebook, or Apple, so that they look just like the real thing. In the researcher’s concept, an attacker builds a legitimate-looking site to lure in victims. Once there, users can’t leave comments or make purchases unless they “sign in” first.

Signing in seems easy enough: just click the Sign in with {popular service name} button. And this is where things get interesting: instead of a genuine authentication page provided by the legitimate service, the user gets a fake form rendered inside the malicious site, looking exactly like… a browser pop-up. Furthermore, the address bar in the pop-up, also rendered by the attackers, displays a perfectly legitimate URL. Because that address bar is just part of the page, even a close look at it won’t reveal the trick.

From there, the unsuspecting user enters their credentials for Microsoft, Google, Facebook, or Apple into this rendered window, and those details go straight to the cybercriminals. For a while, this scheme remained a theoretical experiment by the security researcher. Now real-world attackers have added it to their arsenal.

Facebook credential theft

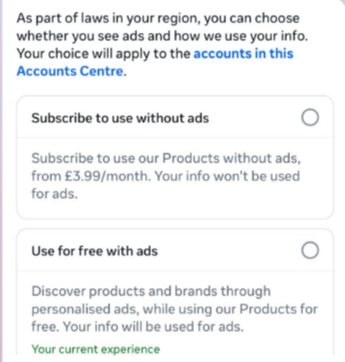

Attackers have put their own spin on mr.d0x’s original concept: recent browser-in-the-browser hits have been kicking off with emails designed to alarm recipients. For instance, one phishing campaign posed as a law firm informing the user they’d committed a copyright violation by posting something on Facebook. The message included a credible-looking link allegedly to the offending post.

Attackers sent messages on behalf of a fake law firm alleging copyright infringement, complete with a link supposedly to the problematic Facebook post.

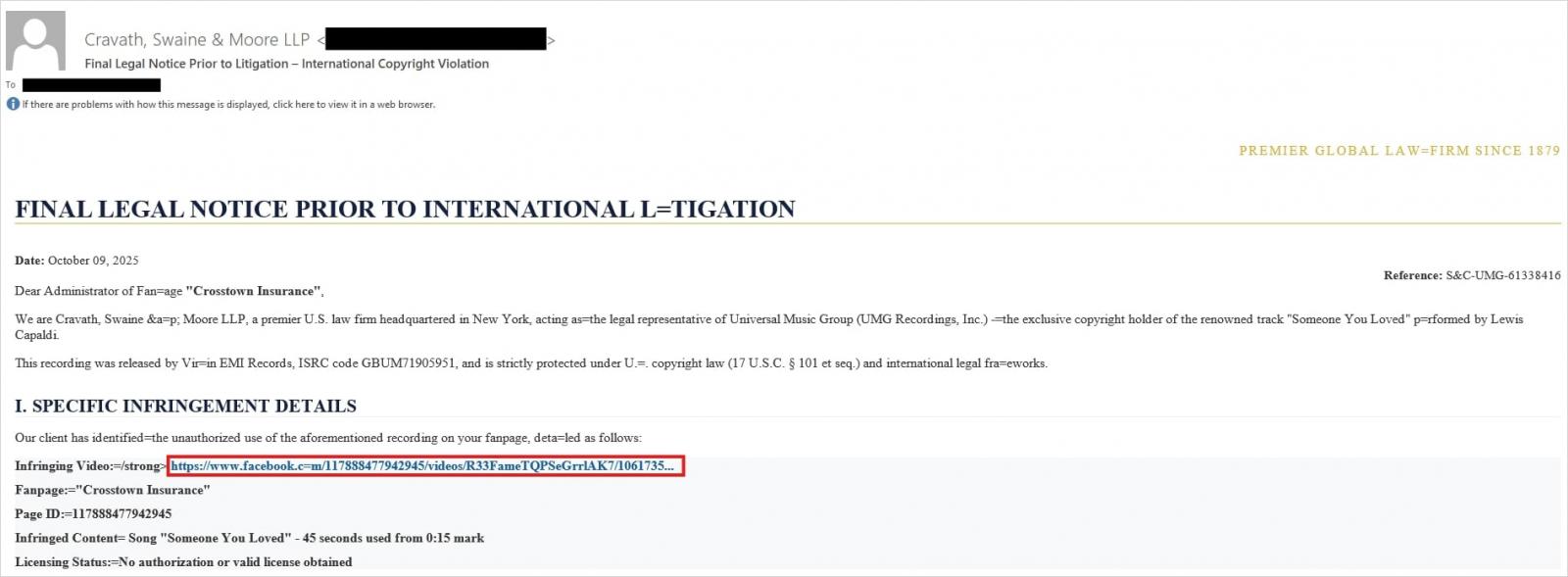

Interestingly, to lower the victim’s guard, the link didn’t lead straight to a fake Facebook login page. Instead, the victim was first greeted by a bogus Meta CAPTCHA. Only after passing it were they presented with the fake authentication pop-up.

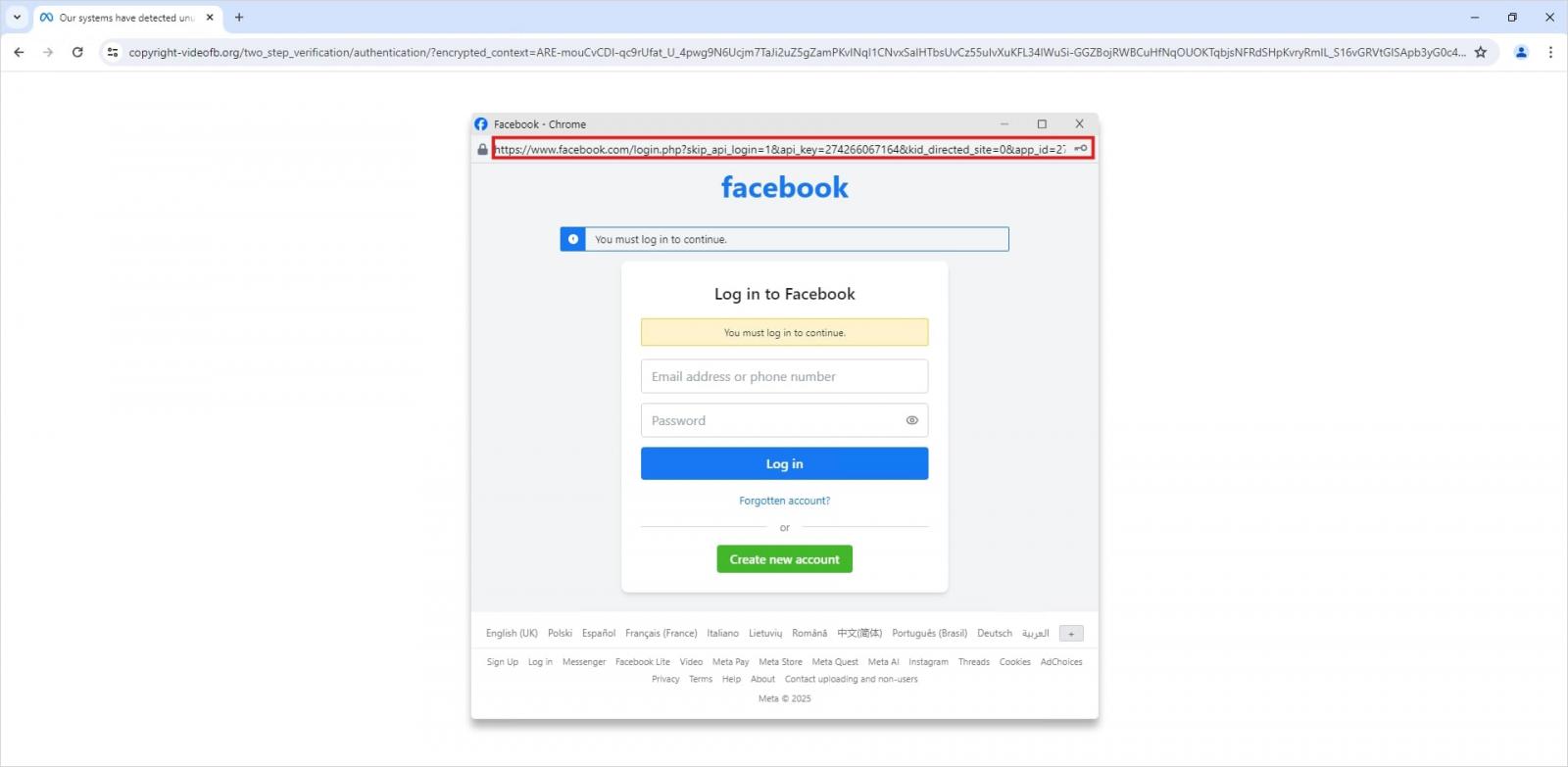

This isn’t a real browser pop-up; it’s a website element mimicking a Facebook login page, a ruse that allows attackers to display a perfectly convincing address.

Naturally, the fake Facebook login page followed mr.d0x’s blueprint: it was built entirely with web design tools to harvest the victim’s credentials. Meanwhile, the URL displayed in the forged address bar pointed to the real Facebook site — www.facebook.com.

How to avoid becoming a victim

The fact that scammers are now deploying browser-in-the-browser attacks just goes to show that their bag of tricks is constantly evolving. But don’t despair: there’s a way to tell if a login window is legit. A password manager is your friend here; among other things, it acts as a reliable litmus test for any website.

That’s because when it comes to auto-filling credentials, a password manager looks at the actual URL of the page, not what the address bar appears to show or what the page itself looks like. Unlike a human user, a password manager isn’t fooled by appearances, whether that’s a browser-in-the-browser window, a domain with a slightly different address (typosquatting), or a phishing form buried in ads and pop-ups. There’s a simple rule: if your password manager offers to auto-fill your login and password, you’re on a website you’ve previously saved credentials for. If it stays silent on what looks like a familiar login window, something’s fishy.
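To make that rule concrete, here is a minimal Python sketch of the kind of check involved. It is a simplification under stated assumptions, not how any particular password manager is implemented: real products apply their own matching rules, and the function names and the two `.example` addresses below are hypothetical.

```python
from typing import Optional, Tuple
from urllib.parse import urlsplit

DEFAULT_PORTS = {"http": 80, "https": 443}


def origin_of(url: str) -> Tuple[str, str, Optional[int]]:
    """Reduce a URL to its origin: (scheme, host, port)."""
    parts = urlsplit(url)
    scheme = parts.scheme.lower()
    host = (parts.hostname or "").lower()
    port = parts.port or DEFAULT_PORTS.get(scheme)
    return scheme, host, port


def should_offer_autofill(saved_url: str, actual_page_url: str) -> bool:
    """Offer saved credentials only if the page's real origin matches.

    Note what is NOT an input: the page's appearance, or any address
    text drawn inside the page (such as a fake pop-up's address bar).
    """
    return origin_of(saved_url) == origin_of(actual_page_url)


saved = "https://www.facebook.com/login"

# Genuine login page: same origin as the saved entry.
genuine = should_offer_autofill(saved, "https://www.facebook.com/login.php")

# BitB page: the fake bar may display www.facebook.com, but the page
# itself is served from the attacker's (hypothetical) domain.
bitb = should_offer_autofill(saved, "https://copyright-appeal.example/verify")

# Typosquatted look-alike domain (also hypothetical).
typosquat = should_offer_autofill(saved, "https://www.faceb00k.example/login")
```

The point of the sketch is the function signature: whatever the attackers paint into their forged address bar never reaches the comparison, so only the genuine page gets an auto-fill offer.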

Beyond that, following our time-tested advice will help you defend against various phishing methods, or at least minimize the fallout if an attack succeeds:

- Enable two-factor authentication (2FA) for every account that supports it. Ideally, use one-time codes generated by a dedicated authenticator app as your second factor. This helps you dodge phishing schemes designed to intercept confirmation codes sent via SMS, messaging apps, or email. You can read more about one-time-code 2FA in our dedicated post.

- Use passkeys. The option to sign in with this method can also serve as a signal that you’re on a legitimate site. You can learn all about what passkeys are and how to start using them in our deep dive into the technology.

- Set unique, complex passwords for all your accounts. Whatever you do, never reuse the same password across different accounts. We recently covered what makes a password truly strong on our blog. To generate unique combinations — without needing to remember them — Kaspersky Password Manager is your best bet. As an added bonus, it can also generate one-time codes for two-factor authentication, store your passkeys, and synchronize your passwords and files across your various devices.
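For the curious, the one-time codes from the 2FA tip above are typically computed on your device rather than sent to you, which is why there is no SMS or email for attackers to intercept. The sketch below assumes the common TOTP scheme (RFC 6238) with its usual defaults of HMAC-SHA1, 30-second windows, and six digits; your authenticator app may be configured differently, and the demo secret is the published RFC test key, not a real one.

```python
import hashlib
import hmac
import struct


def totp(secret: bytes, unix_time: int, step: int = 30, digits: int = 6) -> str:
    """Derive a time-based one-time code from a shared secret."""
    # Both sides count how many `step`-second windows have elapsed.
    counter = unix_time // step
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte pick an offset.
    offset = mac[-1] & 0x0F
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


# Test key from the RFC 6238 appendix (ASCII "12345678901234567890").
demo_secret = b"12345678901234567890"
code_at_59 = totp(demo_secret, 59)  # "287082"
```

Because the code depends only on the shared secret and the current time, the service can verify it without ever transmitting it to you.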

Finally, this post serves as yet another reminder that theoretical attacks described by cybersecurity researchers often find their way out into the wild. So, keep an eye on our blog, and subscribe to our Telegram channel to stay up to speed on the latest threats to your digital security and how to shut them down.
