Received an Instagram password reset email? Here’s what you need to know

Last week, many Instagram users began receiving unsolicited emails from the platform that warned about a password reset request.

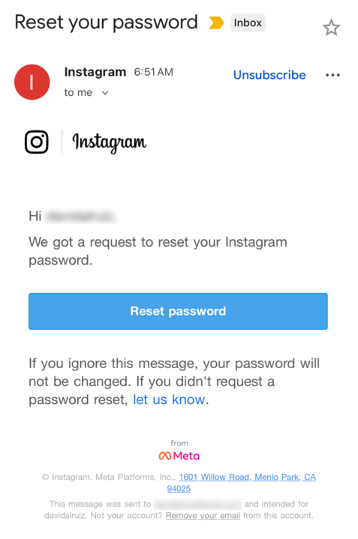

The message said:

“Hi {username},

We got a request to reset your Instagram password.

If you ignore this message, your password will not be changed. If you didn’t request a password reset, let us know.”

Around the same time that users began receiving these emails, a cybercriminal using the handle “Solonik” put data up for sale on a dark web forum that allegedly contains information about 17 million Instagram users.

These 17 million or so records include:

- Usernames

- Full names

- User IDs

- Email addresses

- Phone numbers

- Countries

- Partial locations

Please note that there are no passwords listed in the data.

Despite the timing, Instagram denied this weekend that the two events are related. On X, the company stated that it had fixed an issue that allowed an external party to request password reset emails for “some people.”

So, what’s happening?

Regarding the data found on the dark web last week, Shahak Shalev, global head of scam and AI research at Malwarebytes, shared that “there are some indications that the Instagram data dump includes data from other, older, alleged Instagram breaches, and is a sort of compilation.” Shalev, whose team is investigating the data, also said that the earliest password reset requests reported by users came days before the data was first posted on the dark web, which might mean that “the data may have been circulating in more private groups before being made public.”

However, another possibility, Shalev said, is that “another vulnerability/data leak was happening as some bad actor tried spraying for [Instagram] accounts. Instagram’s announcement seems to reference that spraying. Besides the suspicious timing, there’s no clear connection between the two at this time.”

But, importantly, scammers will not care whether these incidents are related or not. They will try to take advantage of the situation by sending out fake emails.

“We felt it was important to alert people about the data availability so that everyone could reset their passwords, directly from the app, and be on alert for other phishing communications,” Shalev said.

If and when we find out more, we’ll keep you posted, so stay tuned.

How to stay safe

If you have enabled two-factor authentication (2FA) on your Instagram account, we think it is indeed safe to ignore the emails, as Meta suggests.

If you want to err on the safe side and change your password, do so in the app and do not click any links in the email. The email you received could be a fake, and following its links might end up handing your password to scammers.

Another thing to keep in mind is that this is Meta data, which means some users may have reused the same details for, or linked them to, their Facebook or WhatsApp accounts. So, as a precaution, you can check recent logins and active sessions on Instagram, WhatsApp, and Facebook, and log out from any devices or locations you do not recognize.

If you want to find out whether your data was included in an Instagram data breach, or any other for that matter, try our free Digital Footprint scan.