New Microsoft Data Security Index report explores secure AI adoption to protect sensitive data

Generative AI and agentic AI are redefining how organizations innovate and operate, unlocking new levels of productivity, creativity and collaboration across industry teams. From accelerating content creation to streamlining workflows, AI offers transformative benefits that empower organizations to work smarter and faster. These capabilities, however, also introduce new dimensions of data risk—as AI adoption grows, so does the urgency for effective data security that keeps pace with AI innovation. In the 2026 Microsoft Data Security Index report, we explored one of the most pressing questions facing today’s organizations: How can we harness the power of AI while safeguarding sensitive data?

47% of surveyed organizations are implementing controls focused on generative AI workloads

To fully realize the potential of AI, organizations must pair innovation with responsibility and robust data security. This year, the Data Security Index report builds upon the responses of more than 1,700 security leaders to highlight three critical priorities for protecting organizational data and securing AI adoption:

- Moving from fragmented tools to unified data security.

- Managing AI-powered productivity securely.

- Strengthening data security with generative AI itself.

By consolidating solutions for better visibility and governance controls, implementing robust control processes to protect data in AI-powered workflows, and using generative AI agents and automation to enhance security programs, organizations can build a resilient foundation for their next wave of generative AI-powered productivity and innovation. The result is a future where AI both drives efficiency and acts as a powerful ally in defending against data risk, unlocking growth without compromising protection.

In this article we will delve into some of the Data Security Index report’s key findings that relate to generative AI and how they are being operationalized at Microsoft. The report itself has a much broader focus and depth of insight.

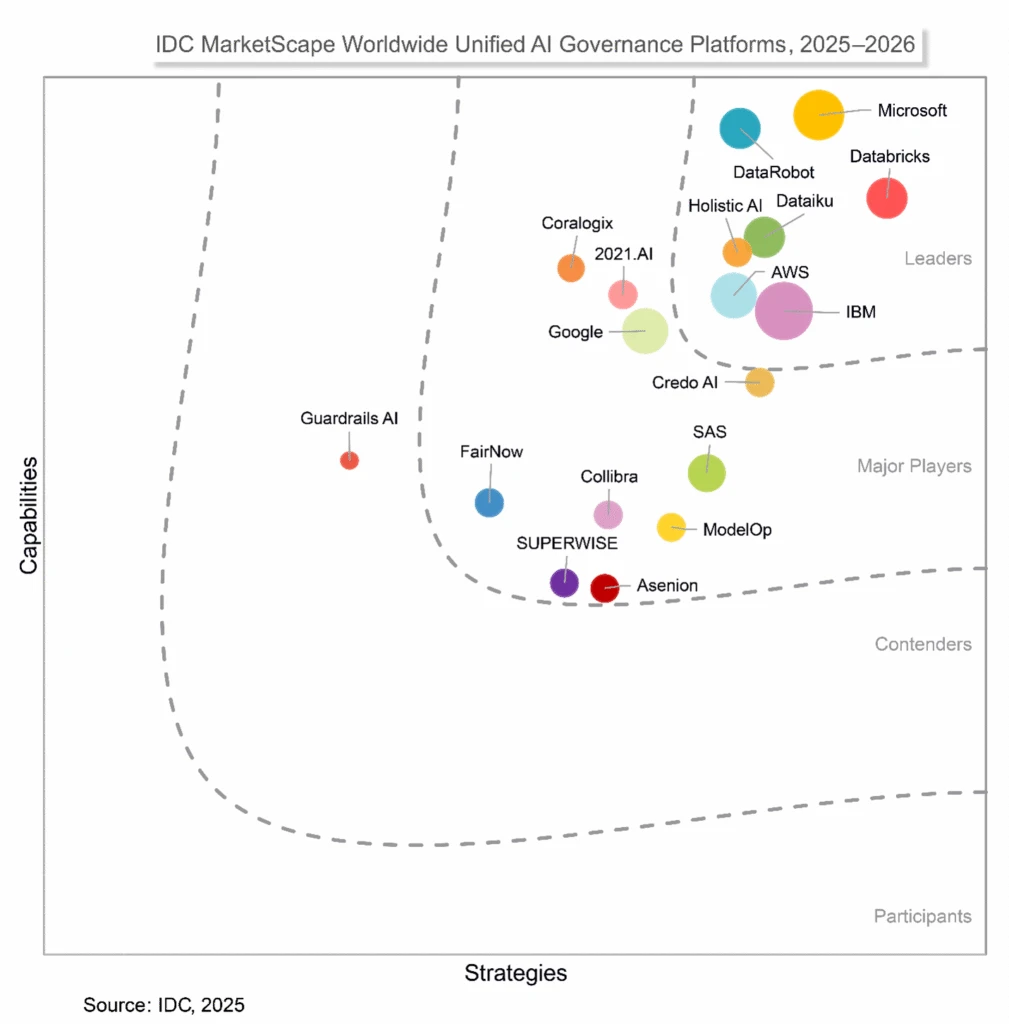

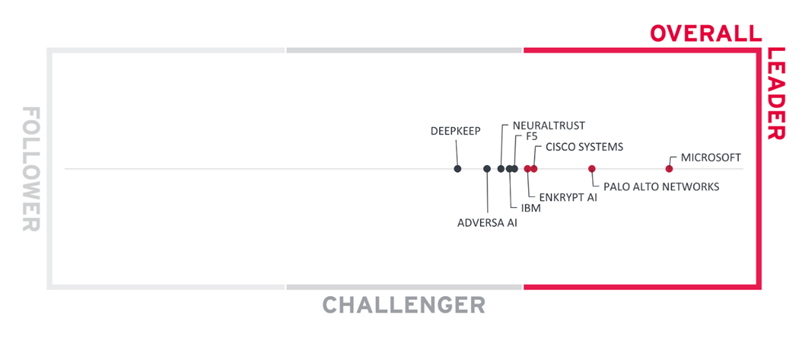

1. From fragmented tools to unified data security

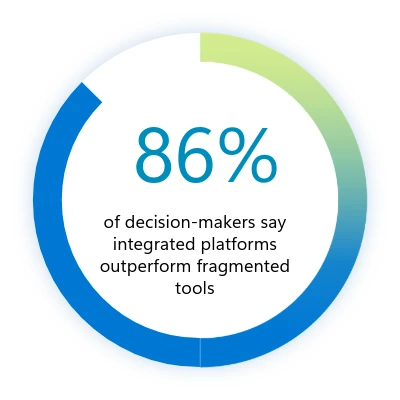

Many organizations still rely on disjointed tools and siloed controls, creating blind spots that hinder the efficacy of security teams. According to the 2026 Data Security Index, decision-makers cite poor integration, lack of a unified view across environments, and disparate dashboards as their top challenges in maintaining proper visibility and governance. These gaps make it harder to connect insights and respond quickly to risks—especially as data volumes and data environment complexity surge. Security leaders simply aren’t getting the oversight they need.

Why it matters

Consolidating tools into integrated platforms improves visibility, governance, and proactive risk management.

To address these challenges, organizations are consolidating tools, investing in unified platforms like Microsoft Purview that bring operations together while improving holistic visibility and control. These integrated solutions frequently outperform fragmented toolsets, enabling better detection and response, streamlined management, and stronger governance.

As organizations adopt new AI-powered technologies, many are also leaning into emerging disciplines like Microsoft Purview Data Security Posture Management (DSPM) to keep pace with evolving risks. Effective DSPM programs help teams identify and prioritize data‑exposure risks, detect access to sensitive information, and enforce consistent controls while reducing complexity through unified visibility. When DSPM provides proactive, continuous oversight, it becomes a critical safeguard—especially as AI‑powered data flows grow more dynamic across core operations.

More than 80% of surveyed organizations are implementing or developing DSPM strategies

“We’re trying to use fewer vendors. If we need 15 tools, we’d rather not manage 15 vendor solutions. We’d prefer to get that down to five, with each vendor handling three tools.”

—Global information security director in the hospitality and travel industry

2. Managing AI-powered productivity securely

Generative AI is already influencing data security incident patterns: 32% of surveyed organizations’ data security incidents involve the use of generative AI tools. Understandably, surveyed security leaders have responded to this trend rapidly. Nearly half (47%) of the security leaders surveyed in the 2026 Data Security Index are implementing generative AI-specific controls, an increase of 8% since the 2025 report. These controls help enable innovation through the confident adoption of generative AI apps and agents while maintaining security.

Why it matters

Generative AI boosts productivity and innovation, but both unsanctioned and sanctioned AI tools must be managed. It’s essential to control tool use and monitor how data is accessed and shared with AI.

In the full report, we explore more deeply how AI-powered productivity is changing the risk profile of enterprises. We also explore several mechanisms, both technical and cultural, already helping maintain trust and reduce risk without sacrificing productivity gains or compliance.

3. Strengthening data security with generative AI

The 2026 Data Security Index indicates that 82% of organizations have developed plans to embed generative AI into their data security operations, up from 64% the previous year. From discovering sensitive data and detecting critical risks to investigating and triaging incidents, as well as refining policies, generative AI is being deployed for both proactive and reactive use cases at scale. The report explores how AI is changing the day-to-day operations across security teams, including the emergence of AI-assisted automation and agents.

Why it matters

Generative AI automates risk detection, scales protection, and accelerates response—amplifying human expertise while maintaining oversight.

“Our generative AI systems are constantly observing, learning, and making recommendations for modifications with far more data than would be possible with any kind of manual or quasi-manual process.”

—Director of IT in the energy industry

Turning recommendations into action

As organizations confront the challenges of data security in the age of AI, the 2026 Data Security Index report offers three clear imperatives: unifying data security, increasing generative AI oversight, and using AI solutions to improve data security effectiveness.

- Unified data security requires continuous oversight and coordinated enforcement across your data estate. Achieving this demands mechanisms that can discover, classify, and protect sensitive information at scale while extending safeguards to endpoints and workloads. Microsoft Purview DSPM operationalizes this principle through continuous discovery, classification, and protection of sensitive data across cloud, software as a service (SaaS), and on-premises assets.

- Responsible AI adoption depends on strict (but dynamic) controls and proactive data risk management. Organizations must enforce automated mechanisms that prevent unauthorized data exposure, monitor for anomalous usage, and guide employees toward sanctioned tools and responsible practices. Microsoft enforces these principles through governance policies supported by Microsoft Purview Data Loss Prevention and Microsoft Defender for Cloud Apps. These solutions detect, prevent, and respond to risky generative AI behaviors that increase the likelihood of data exposure, policy violations, or unsafe outputs, ensuring innovation aligns with security and compliance requirements.

- Modern security operations benefit from automation that accelerates detection and response alongside strong oversight. AI-powered agents can streamline threat investigation, recommend policies, and reduce manual workload while maintaining human oversight for accountability. We deliver this capability through Microsoft Security Copilot, embedded across Microsoft Sentinel, Microsoft Entra, Microsoft Intune, Microsoft Purview, and Microsoft Defender. These agents automate threat detection, incident investigation, and policy recommendations, enabling faster response and continuous improvement of security posture.

Stay informed, stay productive, stay protected

The insights we’ve covered here only scratch the surface of what the Microsoft Data Security Index reveals. The full report dives deeper into global trends, detailed metrics, and real-world perspectives from security leaders across industries and the globe. It provides specificity and context to help you shape your generative AI strategy with confidence.

If you want to explore the data behind these findings, see how priorities vary by region, and uncover actionable recommendations for secure AI adoption, read the full 2026 Microsoft Data Security Index to access comprehensive research, expert commentary, and practical guidance for building a security-first foundation for innovation.

Learn more

Learn more about the Microsoft Purview unified data security solutions.

To learn more about Microsoft Security solutions, visit our website. Bookmark the Security blog to keep up with our expert coverage on security matters. Also, follow us on LinkedIn (Microsoft Security) and X (@MSFTSecurity) for the latest news and updates on cybersecurity.

The post New Microsoft Data Security Index report explores secure AI adoption to protect sensitive data appeared first on Microsoft Security Blog.