Posted by Mateusz Jurczyk, Google Project Zero

In the first three blog posts of this series, I sought to outline what the Windows Registry actually is, its role, history, and where to find further information about it. In the subsequent three posts, my goal was to describe in detail how this mechanism works internally – from the perspective of its clients (e.g., user-mode applications running on Windows), the regf format used to encode hives, and finally the kernel itself, which contains its canonical implementation. I believe all these elements are essential for painting a complete picture of this subsystem, and in a way, it shows my own approach to security research. One could say that going through this tedious process of getting to know the target unnecessarily lengthens the total research time, and to some extent, they would be right. On the other hand, I believe that to conduct complete research, it is equally important to answer the question of how certain things are implemented, as well as why they are implemented that way – and the latter part often requires a deeper dive into the subject. And since I have already spent the time reverse engineering and understanding various internal aspects of the registry, there are great reasons to share the information with the wider community. There is a lack of publicly available materials on how various mechanisms in the registry work, especially the most recent and most complicated ones, so I hope that the knowledge I have documented here will prove useful to others in the future.

In this blog post, we get to the heart of the matter, the actual security of the Windows Registry. I'd like to talk about what made a feature that was initially meant to be just a quick test of my fuzzing infrastructure draw me into manual research for the next 1.5 to 2 years, and result in Microsoft fixing (so far) 53 CVEs. I will describe the various areas that are important in the context of low-level security research, from very general ones, such as the characteristics of the codebase that allow security bugs to exist in the first place, to more specific ones, like all possible entry points to attack the registry, the impact of vulnerabilities and the primitives they generate, and some considerations on effective fuzzing and where more bugs might still be lurking.

Let's start with a quick recap of the registry's most fundamental properties as an attack surface:

- Local attack surface for privilege escalation: As we already know, the Windows Registry is a strictly local attack surface that can potentially be leveraged by a less privileged process to gain the privileges of a higher privileged process or the kernel. It doesn't have any remote components except for the Remote Registry service, which is relatively small and not accessible from the Internet on most Windows installations.

- Complex, old codebase in a memory-unsafe language: The Windows Registry is a vast and complex mechanism, entirely written in C, most of it many years ago. This means that both logic and memory safety bugs are likely to occur, and many such issues, once found, would likely remain unfixed for years or even decades.

- Present in the core NT kernel: The registry implementation resides in the core Windows kernel executable (ntoskrnl.exe), which means it is not subject to mitigations like the win32k lockdown. Of course, the reachability of each registry bug needs to be considered separately in the context of specific restrictions (e.g., sandbox), as some of them require file system access or the ability to open a handle to a specific key. Nevertheless, being an integral part of the kernel significantly increases the chances that a given bug can be exploited.

- Most code reachable by unprivileged users: The registry is a feature that was created for use by ordinary user-mode applications. It is therefore not surprising that the vast majority of registry-related code is reachable without any special privileges, and only a small part of the interface requires administrator rights. Privilege escalation from medium IL (Integrity Level) to the kernel is probably the most likely scenario of how a registry vulnerability could be exploited.

- Manages sensitive information: In addition to the registry implementation itself being complex and potentially prone to bugs, it's important to remember that the registry inherently stores security-critical system information, including various global configurations, passwords, user permissions, and other sensitive data. This means that not only low-level bugs that directly allow code execution are a concern, but also data-only attacks and logic bugs that permit unauthorized modification or even disclosure of registry keys without proper permissions.

- Not trivial to fuzz, and not very well documented: Overall, it seems that the registry is not a very friendly target for bug hunting without any knowledge of its internals. At the same time, obtaining the information is not easy either, especially for the latest registry mechanisms, which are not publicly documented, so learning about them basically boils down to reverse engineering. In other words, the entry bar into this area is quite high, which can be an advantage or a disadvantage depending on the time and commitment of a potential researcher.

Security properties

The above cursory analysis seems to indicate that the registry may be a good audit target for someone interested in EoP bugs on Windows. Let's now take a closer look at some of the specific low-level reasons why the registry has proven to be a fruitful research objective.

Broad range of bug classes

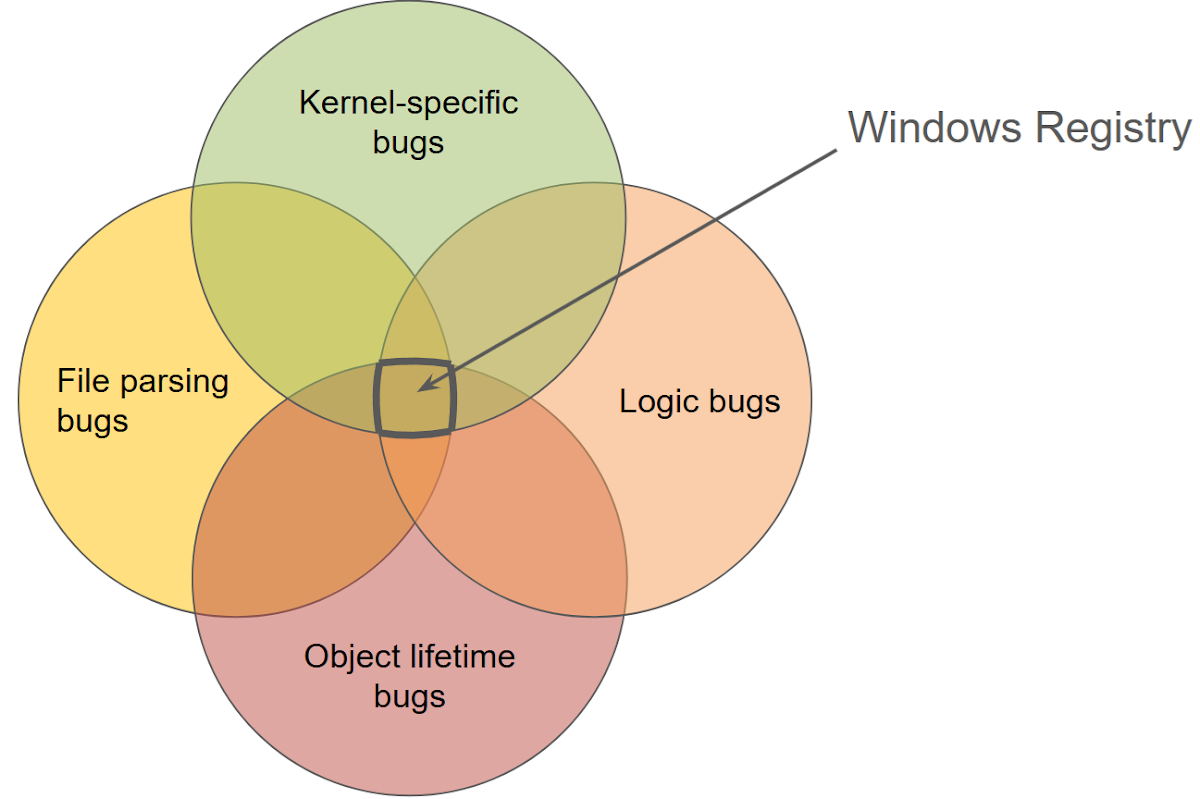

Due to the registry being both complex and a central mechanism in the system operating with kernel-mode privileges, numerous classes of bugs can occur within it. An example vulnerability classification is presented below:

- Hive memory corruption: Every invasive operation performed on the registry (i.e., a "write" operation) is reflected in changes made to the memory-mapped view of the hive's structure. Considering that objects within the hive include variable-length arrays, structures with counted references, and references to other cells via cell indexes (hives' equivalent of memory pointers), it's natural to expect common issues like buffer overflows or use-after-frees.

- Pool memory corruption: In addition to hive memory mappings, the Configuration Manager also stores a significant amount of information on kernel pools. Firstly, there are cached copies of certain hive data, as described in my previous blog post. Secondly, there are various auxiliary objects, such as those allocated and subsequently released within a single system call. Many of these objects can fall victim to memory management bugs typical of the C language.

- Information disclosure: Because the registry implementation is part of the kernel, and it exchanges large amounts of information with unprivileged user-mode applications, it must be careful not to accidentally disclose uninitialized data from the stack or kernel pools to the caller. This can happen both through output data copied to user-mode memory and through other channels, such as data leakage to a file (hive file or related log file). Therefore, it is worthwhile to keep an eye on whether all arrays and dynamically allocated buffers are fully populated or carefully filled with zeros before passing them to a lower-privileged context.

- Race conditions: As a multithreaded environment, Windows allows for concurrent registry access by multiple threads. Consequently, the registry implementation must correctly synchronize access to all shared kernel-side objects and be mindful of "double fetch" bugs, which are characteristic of user-mode client interactions.

- Logic bugs: In addition to being memory-safe and free of low-level bugs, a secure registry implementation must also enforce correct high-level security logic. This means preventing unauthorized users from accessing restricted keys and ensuring that the registry operates consistently with its documentation under all circumstances. This requires a deep understanding of both the explicit documentation and the implicit assumptions that underpin the registry's security from the kernel developers. Ultimately, any behavior that deviates from expected logic, whether documented or assumed, could lead to vulnerabilities.

- Inter-process attacks: The registry can serve as a security target, but also as a means to exploit flaws in other applications on the system. It is a shared database, and a local attacker has many ways to indirectly interact with more privileged programs and services. A simple example is when privileged code sets overly permissive permissions on its keys, allowing unauthorized reading or modification. More complex cases can occur when there is a race condition between key creation and setting its restricted security descriptor, or when a key modification involving several properties is not performed transactionally, potentially leading to an inconsistent state. The specifics depend on how the privileged process uses the registry interface.

If I were to depict the Windows Registry in a single Venn diagram highlighting its various possible bug classes, it might look something like the image at the beginning of this section.

Manual reference counting

As I have mentioned multiple times, security descriptors in registry hives are shared by multiple keys, and therefore, must be reference counted. The field responsible for this is a 32-bit unsigned integer, and any situation where it's set to a value lower than the actual number of references can result in the release of that security descriptor while it's still in use, leading to a use-after-free condition and hive-based memory corruption. So, we see that it's absolutely critical that this refcounting is implemented correctly, but unfortunately, there are (or were until recently) many reasons why this mechanism could be prone to bugs:

- Usually, a reference count is a construct that exists strictly in memory, where it is initialized with a value of 1, then incremented and decremented some number of times, and finally drops to zero, causing the object to be freed. However, with registry hives, the initial refcount values are loaded from disk, from a file that we assume is controlled by the attacker. Therefore, these values cannot be trusted in any way, and the first necessary step is to actually compare and potentially adjust them according to the true number of references to each descriptor. Even though this is done in theory, bugs can creep into this logic in practice (CVE-2022-34707, CVE-2023-38139).

- For a long time, all operations on reference counts were performed by directly referencing the _CM_KEY_SECURITY.ReferenceCount field, instead of using a secure wrapper. As a result, none of these incrementations were protected against integer overflow. This meant that not only a too small, but also a too large refcount value could eventually overflow and lead to a use-after-free situation (CVE-2023-28248, CVE-2024-43641). This weakness was gradually addressed in various places in the registry code between April 2023 and November 2024. Currently, all instances of refcount incrementation appear to be secure and involve calling the special helper function CmpKeySecurityIncrementReferenceCount, which protects against integer overflow. Its counterpart for refcount decrementation is CmpKeySecurityDecrementReferenceCount.

- It seems that there is a lack of clarity and understanding of how certain special types of keys, such as predefined keys and tombstone keys, behave in relation to security descriptors. In theory, the only type of key that does not have a security descriptor assigned to it is the exit node (i.e., a key with the KEY_HIVE_EXIT flag set, found solely in the virtual hive rooted at \Registry\), while all other keys do have a security descriptor assigned to them, even if it is not used for anything. In practice, however, there have been several vulnerabilities in Windows that resulted either from incorrect security refresh in KCB for special types of keys (CVE-2023-21774), from releasing the security descriptor of a predefined key without considering its reference count (CVE-2023-35356), or from completely forgetting the need for reference counting the descriptors of tombstone keys in the "rename" operation (CVE-2023-35382).

- When the reference count of a security descriptor reaches zero and the descriptor is released, this operation is irreversible. There is no guarantee that upon reallocation, the descriptor would have the same cell index, or even that it could be reallocated at all. This is crucial for multi-step operations where individual actions could fail, necessitating a full rollback to the original state. Ideally, releasing security descriptors should always be the final step, performed only when the kernel can be certain that the entire operation will succeed. A vulnerability exemplifying this is CVE-2023-21772, where the registry virtualization code first released the old security descriptor and then attempted to allocate a new one. If the allocation failed, the key was left without any security properties, violating a fundamental assumption of the registry and potentially having severe consequences for system memory safety.
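
To make the overflow hazard from the second point more tangible, here is a minimal user-mode sketch. The names `security_cell`, `refcount_increment_unchecked` and `refcount_increment_checked` are hypothetical stand-ins of mine; they do not reproduce the real `_CM_KEY_SECURITY` layout or the actual body of `CmpKeySecurityIncrementReferenceCount`. The only point is the contrast between a raw increment and one that refuses to wrap.

```c
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical stand-in for a hive security descriptor cell. */
typedef struct {
    uint32_t reference_count;
} security_cell;

/* Raw increment: silently wraps from UINT32_MAX to 0. */
void refcount_increment_unchecked(security_cell *cell) {
    cell->reference_count++;
}

/* Checked increment: reports failure instead of wrapping around. */
bool refcount_increment_checked(security_cell *cell) {
    if (cell->reference_count == UINT32_MAX) {
        return false;
    }
    cell->reference_count++;
    return true;
}
```

With the unchecked variant, a descriptor whose count starts at the maximum value ends up with a count of zero while still being referenced, which is the precondition for the use-after-free described above.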

Aggressive self-healing and recovery

As I described in blog post #5, one of the registry's most interesting features, which distinguishes it from many other file format implementations, is that it is self-healing. The entire hive loading process, from the internal CmCheckRegistry function downwards, is focused on loading the database at all costs, even if some corrupted fragments are encountered. Only if the file damage is so extensive that recovering any data is impossible does the entire loading process fail. Of course, given that the registry stores critical system data such as its basic configuration, and the lack of access to this data virtually prevents Windows from booting, this decision made a lot of sense from the system reliability point of view. It's probably safe to assume that it has prevented the need for system reinstallation on numerous computers, simply because it did not reject hives with minor damage that might have appeared due to random hardware failure.

However, from a security perspective, this behavior is not necessarily advantageous. Firstly, it seems obvious that upon encountering an error in the input data, it is simpler to unconditionally halt its processing rather than attempt to repair it. In the latter case, it is possible for the programmer to overlook an edge case – forget to reset some field in some structure, etc. – and thus instead of fixing the file, allow for another unforeseen, inconsistent state to materialize within it. In other words, the repair logic constitutes an additional attack surface, and one that is potentially even more interesting and error-prone than other parts of the implementation. A classic example of a vulnerability associated with this property is CVE-2023-38139.

Secondly, in my view, the existence of this logic may have negatively impacted the secure development of the registry code, perhaps by leading to a discrepancy between what it guaranteed and what other developers thought it had guaranteed. For example, in 1991–1993, when the foundations of the Configuration Manager subsystem were being created in their current form, probably no one considered hive loading a potential attack vector. At that time, the registry was used only to store system configuration, and controlled hive loading was privileged and required admin rights. Therefore, I suspect that the main goal of hive checking at that time was to detect simple data inconsistencies due to hardware problems, such as single bit flips. No one expected a hive to contain a complex, specially crafted multi-kilobyte data structure designed to trigger a security flaw. Perhaps the rest of the registry code was written under the assumption that since data sanitization and self-healing occurred at load time, its state was safe from that point on and no further error handling was needed (except for out-of-memory errors). Then, in Windows Vista, a decision was made to open access to controlled hive loading by unprivileged users through the app hive mechanism, and it suddenly turned out that the existing safeguards were not entirely adequate. Attackers were now able to devise data constructs that were structurally correct at the low level, but completely beyond the scope of what the actual implementation expected and could handle.

Finally, self-healing can adversely affect system security by concealing potential registry bugs that could trigger during normal Windows operation. These problems might only become apparent after a period of time and with a "build-up" of enough issues within the hive. Because hives are mapped into memory, and the kernel operates directly on the data within the file, there exists a category of errors known as "inconsistent hive state". This refers to a data structure within the hive that doesn't fully conform to the file format specification. The occurrence of such an inconsistency is noteworthy in itself and, for someone knowledgeable about the registry, it could be a direct clue for finding vulnerabilities. However, such instances rarely cause an immediate system crash or other visible side effects. Consider security descriptors and their reference counting: as mentioned earlier, any situation where the active number of references exceeds the reference count indicates a serious security flaw. However, even if this were to happen during normal system operation, it would require all other references to that descriptor to be released and then for some other data to overwrite the freed descriptor. Then, a dangling reference would need to be used to access the descriptor. The occurrence of all these factors in sequence is quite unlikely, and the presence of self-healing further decreases these chances, as the reference count would be restored to its correct value at the next hive load. This characteristic can be likened to wrapping the entire registry code in a try/except block that catches all exceptions and masks them from the user. This is certainly helpful in the context of system reliability, but for security, it means that potential bugs are harder to spot during system run time and, for the same reason, quite difficult to fuzz. This does not mean that they don't exist; their detection just becomes more challenging.
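
The "restore the reference count at load time" behavior can be modeled in a few lines. This is a toy sketch under my own assumptions: `toy_key`, `toy_descriptor` and `toy_fix_refcounts` are hypothetical names, the hive is reduced to two flat arrays, and nothing here mirrors the real `CmpCheckAndFixSecurityCellsRefcount` code. It only shows the idea of recounting references from scratch and overwriting untrusted stored values.

```c
#include <stddef.h>
#include <stdint.h>

/* Toy model: each key refers to exactly one descriptor by index. */
typedef struct {
    size_t security_index;
} toy_key;

typedef struct {
    uint32_t stored_refcount; /* value read from the untrusted file */
} toy_descriptor;

/*
 * Recounts references from scratch and overwrites every stored value that
 * disagrees. Returns the number of descriptors that were corrected.
 * `scratch` must have room for `desc_count` counters. Keys with an
 * out-of-range index are skipped here; a real loader would have to repair
 * or reject them.
 */
size_t toy_fix_refcounts(const toy_key *keys, size_t key_count,
                         toy_descriptor *descs, size_t desc_count,
                         uint32_t *scratch) {
    size_t fixed = 0;
    for (size_t i = 0; i < desc_count; i++) {
        scratch[i] = 0;
    }
    for (size_t i = 0; i < key_count; i++) {
        if (keys[i].security_index < desc_count) {
            scratch[keys[i].security_index]++;
        }
    }
    for (size_t i = 0; i < desc_count; i++) {
        if (descs[i].stored_refcount != scratch[i]) {
            descs[i].stored_refcount = scratch[i];
            fixed++;
        }
    }
    return fixed;
}
```

A runtime bug that leaves a count too low would be silently repaired by logic like this on the next load, which is exactly why such bugs rarely leave visible traces.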

Unclear boundaries between hard and conventional format requirements

This point is related to the previous section. In the regf format, there are certain requirements that are fairly obvious and must always be met for a file to be considered valid. Likewise, there are many elements that are permitted to be formatted arbitrarily, at the discretion of the format user. However, there is a third category, a gray area of requirements that seem reasonable and probably would be good if they were met, but it is not entirely clear whether they are formally required. Another way to describe this set of states is one that is not generated by the Windows kernel itself but is still not obviously incorrect. From a researcher's perspective, it would be worthwhile to know which parts of the format are actually required by the specification and which are only a convention adopted by the Windows code.

We might never find out, as Microsoft hasn't published an official format specification and it seems unlikely that they will in the future. The only option left for us is to rely on the implementation of the CmpCheck* functions (CmpCheckKey, CmpCheckValueList, etc.) as a sort of oracle and assume that everything there is enforced as a hard requirement, while all other states are permissible. If we go down this path, we might be in for a big surprise, as it turns out that there are many logical-sounding requirements that are not enforced in practice. This could allow user-controlled hives to contain constructs that are not obviously problematic, but are inconsistent with the spirit of the registry and its rules. In many cases, they allow encoding data in a less-than-optimal way, leading to unexpected redundancy. Some examples of such constructs are presented below:

- Values with duplicate names within a single key: Under normal conditions, only one value with a given name can exist in a key, and if there is a subsequent write to the same name, the new data is assigned to the existing value. However, the uniqueness of value names is not required in input hives, and it is possible to load a hive with duplicate values.

- Duplicate identical security descriptors within a single hive: Similar to the previous point, it is assumed that security descriptors within a hive are unique, and if an existing descriptor is assigned to another key, its reference count is incremented rather than allocating a new object. However, there is no guarantee that a specially crafted hive will not contain multiple duplicates of the same security descriptor, and this is accepted by the loader.

- Uncompressed key names consisting solely of ASCII characters: Under normal circumstances, if a given key has a name comprising only ASCII characters, it will always be stored in a compressed form, i.e., by writing two bytes of the name in each element of the _CM_KEY_NODE.Name array of type uint16, and setting the KEY_COMP_NAME flag (0x20) in _CM_KEY_NODE.Flags. However, once again, optimal representation of names is not required when loading the hive, and this convention can be ignored without issue.

- Allocated but unused cells: The Windows registry implementation deallocates objects within a hive when they are no longer needed, making space for new data. However, the loader does not require every cell marked "allocated" to be actively used. Similarly, security descriptors with a reference count of zero are typically deallocated. However, until a November 2024 refactor of the CmpCheckAndFixSecurityCellsRefcount function, it was possible to load a hive with unused security descriptors still present in the linked list. This behavior has since been changed, and unused security descriptors encountered during loading are now automatically freed and removed from the list.
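
As an illustration of the third example, the sketch below flags key names that are valid but not stored the way Windows itself would store them. The `KEY_COMP_NAME` value comes from the text above; the helper names `name_fits_compressed` and `name_is_unconventional` are hypothetical, and the name is modeled as a plain array of UTF-16 code units rather than a real `_CM_KEY_NODE`.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define KEY_COMP_NAME 0x0020u /* flag value quoted in the text above */

/* Returns true if every UTF-16 code unit is a 7-bit ASCII character. */
bool name_fits_compressed(const uint16_t *name, size_t length) {
    for (size_t i = 0; i < length; i++) {
        if (name[i] > 0x7F) {
            return false;
        }
    }
    return true;
}

/*
 * Detects the gray-area state: the name could have been compressed, but
 * the key's flags say it is stored uncompressed. Such a key is loadable,
 * yet Windows would not normally produce it.
 */
bool name_is_unconventional(uint16_t flags, const uint16_t *name,
                            size_t length) {
    return (flags & KEY_COMP_NAME) == 0 && name_fits_compressed(name, length);
}
```

A check like this is useful in a hive parser or fuzzing harness to separate "kernel-generated" states from "merely accepted" ones.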

These examples illustrate the issue well, but none of them (as far as I know) have particularly significant security implications. However, there were also a few specific memory corruption vulnerabilities that stemmed from the fact that the registry code made theoretically sound assumptions about the hive structure, but they were not enforced by the loader:

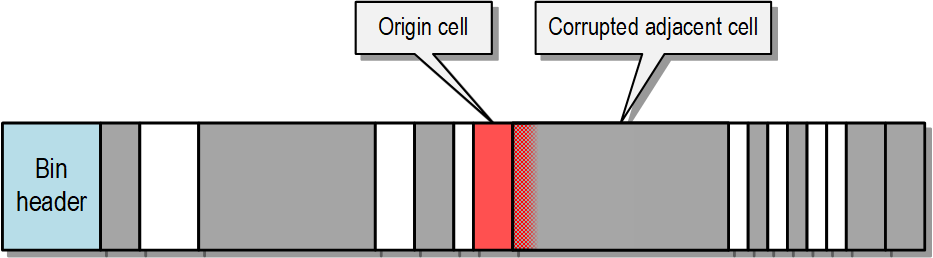

- CVE-2022-37988: This bug is closely related to the fact that cells larger than 16 KiB are aligned to the nearest power of two in Windows, but this condition doesn't need to be satisfied during loading. This caused the shrinking of a cell to fail, even though it should always succeed in-place, "surprising" the client of the allocator and resulting in a use-after-free condition.

- CVE-2022-37956: As I described in blog post #5, Windows has some logic to ensure that no leaf-type subkey list (li, lf, or lh) exceeds 511 or 1012 elements, depending on its specific type. If a list is expanded beyond this limit, it is automatically split into two lists, each half the original length. Another reasonable assumption is that the root index length would never approach the maximum value of _CM_KEY_INDEX.Count (uint16) under normal circumstances. This would require an unrealistically large number of subkeys or a very specific sequence of millions of key creations and deletions with specific names. However, it was possible to load a hive containing a subkey list of any of the four types with a length equal to 0xFFFF, and trigger a 16-bit integer overflow on the length field, leading to memory corruption. Interestingly, this is one of the few bugs that could be triggered solely with a single .bat file containing a long sequence of the reg.exe command executions.

- CVE-2022-38037: In this case, the kernel code assumed that the hive version defined in the header (_HBASE_BLOCK.Minor) always corresponded to the type of subkey lists used in a given hive. For example, if the file version is regf 1.3, it should be impossible for it to contain lists in a format introduced in version 1.5. However, for some reason, the hive loader doesn't enforce the proper relationship between the format version and the structures used in it, which in this case led to a serious hive-based memory corruption vulnerability.

As we can see, it is crucial to differentiate between format elements that are conventions adopted by a specific implementation, and those actually enforced during the processing of the input file. If we encounter some code that makes assumptions from the former group that don't belong to the latter one, this could indicate a serious security issue.
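
One way to think about closing such gaps is to turn each convention into an explicit load-time check. The sketch below does this for the cell size convention behind CVE-2022-37988. It is only a model: the function names and the threshold macro are hypothetical, and I make no claim that this matches how Microsoft actually addressed the bug.

```c
#include <stdbool.h>
#include <stdint.h>

#define TOY_LARGE_CELL_THRESHOLD (16u * 1024u) /* 16 KiB, per the text */

/* True if size is a non-zero power of two. */
bool toy_is_power_of_two(uint32_t size) {
    return size != 0 && (size & (size - 1)) == 0;
}

/*
 * Convention check a loader could apply: cells above the 16 KiB threshold
 * are expected to have a power-of-two size. Smaller cells are not
 * constrained by this particular rule.
 */
bool toy_cell_size_follows_convention(uint32_t size) {
    if (size <= TOY_LARGE_CELL_THRESHOLD) {
        return true;
    }
    return toy_is_power_of_two(size);
}
```

If the allocator relies on this property later (for example, to guarantee that shrinking a cell succeeds in place), then any hive for which the check returns false is a candidate for triggering unexpected behavior.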

Susceptibility to mishandling OOM conditions

Generally speaking, the implementation of any function in the Windows kernel is built roughly according to the following scheme:

    NTSTATUS NtHighLevelOperation(...) {
      NTSTATUS Status;

      Status = HelperFunction1(...);
      if (!NT_SUCCESS(Status)) {
        //
        // Clean up...
        //
        return Status;
      }

      Status = HelperFunction2(...);
      if (!NT_SUCCESS(Status)) {
        //
        // Clean up...
        //
        return Status;
      }

      //
      // More calls...
      //

      return STATUS_SUCCESS;
    }

Of course, this is a significant simplification, as real-world code contains keywords and constructs such as if statements, switch statements, various loops, and so on. The key point is that a considerable portion of higher-level functions call internal, lower-level functions specialized for specific tasks. Handling potential errors signalled by these functions is an important aspect of kernel code (or any code, for that matter). In low-level Windows code, error propagation occurs using the NTSTATUS type, which is essentially a signed 32-bit integer. A value of 0 signifies success (STATUS_SUCCESS), positive values indicate success but with additional information, and negative values denote errors. The sign of the number is checked by the NT_SUCCESS macro. During my research, I dedicated significant time to analyzing the error handling logic. Let's take a moment to think about the types of errors that could occur during registry operations, and the conditions that might cause them.
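
Before that, the sign convention can be made concrete with a small user-mode sketch. The typedef and macros below are stand-ins I define locally so the snippet compiles without any Windows headers; the `NT_SUCCESS` definition and the four status values mirror the public Windows definitions as I understand them.

```c
#include <stdint.h>

/* Local stand-ins mirroring the Windows definitions. */
typedef int32_t NTSTATUS;
#define NT_SUCCESS(Status) (((NTSTATUS)(Status)) >= 0)

#define STATUS_SUCCESS                ((NTSTATUS)0x00000000) /* plain success  */
#define STATUS_PENDING                ((NTSTATUS)0x00000103) /* positive: info */
#define STATUS_BUFFER_OVERFLOW        ((NTSTATUS)0x80000005) /* warning        */
#define STATUS_INSUFFICIENT_RESOURCES ((NTSTATUS)0xC000009A) /* error          */

/* Returns 1 if the caller would treat the status as a failure. */
int status_is_failure(NTSTATUS status) {
    return !NT_SUCCESS(status);
}
```

Note that warning-severity codes such as `STATUS_BUFFER_OVERFLOW` also have the top bit set, so they are negative and fail the `NT_SUCCESS` check just like hard errors.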

A common trait of all actions that modify data in the registry is that they allocate memory. The simplest example is the allocation of auxiliary buffers from kernel pools, requested through functions from the ExAllocatePool group. If there is very little available memory at a given point in time, one of the allocation requests may fail, and the resulting STATUS_INSUFFICIENT_RESOURCES error code will be propagated back to the original caller. And since we assume that we take on the role of a local attacker who has the ability to execute code on the machine, artificially occupying all available memory is potentially possible in many ways. So this is one way to trigger errors while performing operations on the registry, but admittedly not an ideal way, as it largely depends on the amount of RAM and the maximum pagefile size. Additionally, in a situation where the kernel has so little memory that single allocations start to fail, there is a high probability of the system crashing elsewhere before the vulnerability is successfully exploited. And finally, if several allocations are requested in nearby code in a short period of time, it seems practically impossible to take precise control over which of them will succeed and which will not.

Nonetheless, the overall concept of out-of-memory conditions is a very promising avenue for attack, especially considering that the registry primarily operates on memory-mapped hives using its own allocator, in addition to objects from kernel pools. The situation is even more favorable for an attacker due to the 2 GiB size limitation of each of the two storage types (stable and volatile) within a hive. While this is a relatively large value, it is achievable to occupy it in under a minute on today's machines. The situation is even easier if it is the volatile space that needs to be occupied, as it resides solely in memory and is not flushed to disk – so filling two gigabytes of memory is then a matter of seconds. It can be accomplished, for example, by creating many long registry values, which is a straightforward task when dealing with a controlled hive. However, even in system hives, this is often feasible. To perform data spraying on a given hive, we only need a single key granting us write permissions. For instance, both HKLM\Software and HKLM\System contain numerous keys that allow write access to any user in the system, effectively permitting them to fill it to capacity. Additionally, the "global registry quota" mechanism, implemented by the internal CmpClaimGlobalQuota and CmpReleaseGlobalQuota functions, ensures that the total memory occupied by registry data in the system does not exceed 4 GiB. Besides filling the entire space of a specific hive, this is thus another way to trigger out-of-memory conditions in the registry, especially when targeting a hive without write permissions. A concrete example where this mechanism could have been employed to corrupt the HKLM\SAM system hive is the CVE-2024-26181 vulnerability.
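
The interplay of the two limits can be captured in a simplified model. Everything below is hypothetical scaffolding of mine (`toy_storage`, `toy_reserve` and the two macros); it does not reflect the real signatures or accounting of `CmpClaimGlobalQuota`, only the 2 GiB and 4 GiB figures quoted above.

```c
#include <stdbool.h>
#include <stdint.h>

#define TOY_STORAGE_LIMIT      (UINT64_C(2) << 30) /* 2 GiB per storage type */
#define TOY_GLOBAL_QUOTA_LIMIT (UINT64_C(4) << 30) /* 4 GiB across all hives */

typedef struct {
    uint64_t used; /* bytes currently allocated in this storage */
} toy_storage;

/*
 * Attempts to reserve `size` bytes. The request fails if either the
 * per-storage limit or the system-wide quota would be exceeded, which is
 * the condition an attacker tries to provoke by spraying data.
 */
bool toy_reserve(toy_storage *storage, uint64_t *global_used, uint64_t size) {
    if (size > TOY_STORAGE_LIMIT - storage->used) {
        return false;
    }
    if (size > TOY_GLOBAL_QUOTA_LIMIT - *global_used) {
        return false;
    }
    storage->used += size;
    *global_used += size;
    return true;
}
```

In this model, filling two attacker-writable storages to capacity exhausts the global quota, so even a tiny reservation in a third, untouched storage fails. That is the scenario relevant to hives the attacker cannot write to directly.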

Considering all this, it is a fair assumption that a local attacker can cause any call to ExAllocatePool*, HvAllocateCell, and HvReallocateCell (with a length greater than the existing cell) to fail. This opens up a large number of potential error paths to analyze. The HvAllocateCell calls are a particularly interesting starting point for analysis, as there are quite a few of them and almost all of them belong to the attack surface accessible to a regular user.

There are two primary reasons why focusing on the analysis of error paths can be a good way to find security bugs. First, it stands to reason that on regular computers used by users, it is extremely rare for a given hive to grow to 2 GiB and run out of space, or for all registry data to simultaneously occupy 4 GiB of memory. This means that these code paths are practically never executed under normal conditions, and even if there were bugs in them, there is a very small chance that they would ever be noticed by anyone. Such rarely executed code paths are always a real treat for security researchers.

The second reason is that proper error handling in code is inherently difficult. Many operations involve numerous steps that modify the hive's internal state. If an issue arises during these operations, the registry code must revert all changes and restore the registry to its original state (at least from the macro-architectural perspective). This requires the developer to be fully aware of all changes applied so far when implementing each error path. Additionally, proper error handling must be considered during the initial design of the control flow as well, because some registry actions are irreversible (e.g., freeing cells). The code must thus be structured so that all such operations are placed at the very end of the logic, where errors cannot occur anymore and successful execution is guaranteed.
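
The ordering rule from the last two sentences can be sketched as follows. This is a user-mode model with hypothetical names (`toy_secured_key`, `toy_replace_descriptor`, `toy_alloc_fn`), using `malloc` and `free` in place of hive cell allocation; it is not taken from the kernel.

```c
#include <stdbool.h>
#include <stdlib.h>

typedef struct {
    int *descriptor; /* stand-in for a security descriptor reference */
} toy_secured_key;

/* Hypothetical allocator hook so that a failure can be simulated. */
typedef void *(*toy_alloc_fn)(size_t size);

/*
 * Replaces the descriptor. The fallible allocation happens first and the
 * irreversible release of the old descriptor happens last, so a failure
 * leaves the key exactly as it was.
 */
bool toy_replace_descriptor(toy_secured_key *key, int new_value,
                            toy_alloc_fn alloc) {
    int *fresh = alloc(sizeof(*fresh));
    if (fresh == NULL) {
        return false; /* nothing has been modified yet */
    }
    *fresh = new_value;
    free(key->descriptor); /* irreversible step, performed only now */
    key->descriptor = fresh;
    return true;
}

/* Allocator that always fails, to model an out-of-memory condition. */
void *toy_alloc_always_fails(size_t size) {
    (void)size;
    return NULL;
}
```

Swapping the two halves (free first, allocate second) reproduces the general shape of a bug in which a failed allocation leaves the key without any descriptor at all.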

One example of such a vulnerability is CVE-2023-23421, which boiled down to the following code:

    NTSTATUS CmpCommitRenameKeyUoW(_CM_KCB_UOW *uow) {
      // ...

      if (!CmpAddSubKeyEx(Hive, ParentKey, NewNameKey) ||
          !CmpRemoveSubKey(Hive, ParentKey, OldNameKey)) {
        CmpFreeKeyByCell(Hive, NewNameKey);
        return STATUS_INSUFFICIENT_RESOURCES;
      }

      // ...
    }

The issue here was that if the CmpRemoveSubKey call failed, the corresponding error path should have reversed the effect of the CmpAddSubKeyEx function in the previous line, but in practice it didn't. As a result, it was possible to end up with a dangling reference to a freed key in the subkey list, which was a typical use-after-free condition.
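
For comparison, here is a toy model of what separate error paths with an explicit undo step could look like. It is not Microsoft's actual fix: the subkey list is an unsorted fixed-size array of integers, all names are hypothetical, and the out-of-memory failure is injected through a boolean parameter.

```c
#include <stdbool.h>
#include <stddef.h>

#define TOY_MAX_SUBKEYS 8

typedef struct {
    int entries[TOY_MAX_SUBKEYS]; /* stand-ins for key cell indexes */
    size_t count;
} toy_subkey_list;

bool toy_add_subkey(toy_subkey_list *list, int key) {
    if (list->count == TOY_MAX_SUBKEYS) {
        return false;
    }
    list->entries[list->count++] = key;
    return true;
}

/* Removes `key`; `simulate_failure` models an out-of-memory error. */
bool toy_remove_subkey(toy_subkey_list *list, int key, bool simulate_failure) {
    if (simulate_failure) {
        return false;
    }
    for (size_t i = 0; i < list->count; i++) {
        if (list->entries[i] == key) {
            list->entries[i] = list->entries[--list->count];
            return true;
        }
    }
    return false;
}

/*
 * Rename commit with separate error paths: if removing the old name fails
 * after the new name was linked in, the new entry is unlinked again, so
 * the caller can free the new key without leaving a dangling reference.
 */
bool toy_commit_rename(toy_subkey_list *parent, int old_key, int new_key,
                       bool fail_remove) {
    if (!toy_add_subkey(parent, new_key)) {
        return false;
    }
    if (!toy_remove_subkey(parent, old_key, fail_remove)) {
        toy_remove_subkey(parent, new_key, false); /* undo the add */
        return false;
    }
    return true;
}
```

The undo step in this model cannot fail. In real code that property has to be guaranteed by design, because a rollback that can itself run out of memory only moves the problem.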

A second interesting example of this type of bug was CVE-2023-21747, where an out-of-memory error could occur during a highly sensitive operation, hive unloading. As there was no way to revert the state at the time of the OOM, Microsoft fixed the vulnerability by refactoring the CmpRemoveSubKeyFromList function and other related functions so that they no longer allocate memory from kernel pools, and therefore can no longer fail.

Finally, I'll mention CVE-2023-38154, where the problem wasn't incorrect error handling, but a complete lack of it – the return value of the HvpPerformLogFileRecovery function was ignored, even though there was a real possibility it could end with an error. This is a fairly classic type of bug that can occur in any programming language, but it's definitely worth keeping in mind when auditing the Windows kernel.

Susceptibility to mishandling partial successes

The previous section discusses bugs in error handling where each function is responsible for reversing the state it has modified. However, some functions don't adhere to this operational model. Instead of operating on an "all-or-nothing" basis, they work on a best-effort basis, aiming to accomplish as much of a given task as possible. If an error occurs, they leave any changes made in place, e.g., because this result is still preferable to not making any changes. This gives such functions three possible outcomes rather than two: complete success, partial success, and complete failure.

This might be problematic, as the approach is incompatible with the typical usage of the NTSTATUS type, which is best suited for conveying one of two (not three) states. In theory, it is a 32-bit integer type, so it could store the additional information of the status being a partial success, and not being unambiguously positive or negative. In practice, however, the convention is to directly propagate the last error encountered within the inner function, and the outer functions very rarely "dig into" specific error codes, instead assuming that if NT_SUCCESS returns FALSE, the entire operation has failed. Such confusion at the cross-function level may have security implications if the outer function should take some additional steps in the event of a partial success of the inner function, but due to the binary interpretation of the returned error code, it ultimately does not execute them.

A classic example of such a bug is CVE-2024-26182, which occurred at the intersection of the CmpAddSubKeyEx (outer) and CmpAddSubKeyToList (inner) functions. The problem here was that CmpAddSubKeyToList implements complex, potentially multi-step logic for expanding the subkey list, which could perform a cell reallocation and subsequently encounter an OOM error. On the other hand, the CmpAddSubKeyEx function assumed that the cell index in the subkey list should only be updated in the hive structures if CmpAddSubKeyToList fully succeeds. As a result, the partial success of CmpAddSubKeyToList could lead to a classic use-after-free situation. An attentive reader will probably notice that the return value type of the CmpAddSubKeyToList routine was BOOL and not NTSTATUS, but the bug pattern is identical.
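The pattern can be sketched with a small user-mode C++ model. All names here (AddResult, ToyParent, ToyAllocator, AddToList and the two outer functions) are hypothetical and invented for this illustration; the real routines returned a plain BOOL and work on hive cells, and this is not a description of how Microsoft fixed the issue. The sketch only contrasts an outer function that treats every non-success as "nothing happened" with one that honours the third state.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical three-state result for a best-effort inner routine.
enum class AddResult { Success, PartialSuccess, Failure };

// Hypothetical toy parent key that records which cell holds its subkey list.
struct ToyParent {
  uint32_t list_cell = 1;  // cell index currently stored in the "hive"
  uint32_t count = 0;      // number of subkeys
};

struct ToyAllocator {
  uint32_t next_cell = 2;
  uint32_t freed_cell = 0;            // last cell released (0 = none)
  bool fail_before_realloc = false;   // simulate OOM before anything changes
  bool fail_after_realloc = false;    // simulate OOM after the list has moved
};

// Inner routine: moves the list to a new cell, then appends the new entry.
// On PartialSuccess the list HAS moved and *new_cell holds its new location.
AddResult AddToList(ToyAllocator& a, uint32_t old_cell, uint32_t* new_cell) {
  assert(new_cell != nullptr);
  if (a.fail_before_realloc) return AddResult::Failure;
  *new_cell = a.next_cell++;  // "reallocation": the old cell is released
  a.freed_cell = old_cell;
  if (a.fail_after_realloc) return AddResult::PartialSuccess;
  return AddResult::Success;
}

// Outer routine with a binary view: any non-success leaves the parent untouched.
bool AddSubKeyBinary(ToyAllocator& a, ToyParent& p) {
  uint32_t new_cell = 0;
  if (AddToList(a, p.list_cell, &new_cell) != AddResult::Success) return false;
  p.list_cell = new_cell;
  ++p.count;
  return true;
}

// Outer routine that honours the third state: once the list has moved, the new
// cell index is recorded even though the overall operation still fails.
bool AddSubKeyTriState(ToyAllocator& a, ToyParent& p) {
  uint32_t new_cell = 0;
  AddResult r = AddToList(a, p.list_cell, &new_cell);
  if (r == AddResult::Failure) return false;
  p.list_cell = new_cell;
  if (r == AddResult::PartialSuccess) return false;
  ++p.count;
  return true;
}

// True if the parent still points at a cell that the allocator has released.
bool PointsToFreedCell(const ToyAllocator& a, const ToyParent& p) {
  return a.freed_cell != 0 && p.list_cell == a.freed_cell;
}
```

When fail_after_realloc is set, AddSubKeyBinary returns an error but leaves list_cell pointing at the released cell, which is the toy equivalent of the use-after-free; AddSubKeyTriState reports the same error while keeping the parent consistent.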

Overall complexity introduced over time

One of the biggest problems with the modern implementation of the registry is that over the decades of developing this functionality, many changes and new features have been introduced. This has caused the level of complexity of its internal state to increase so much that it seems difficult to grasp for one person, unless they are a dedicated registry expert who has worked on it full-time over a period of months or years. I personally believe that the registry existed in its most elegant form somewhere around Windows NT 3.1 – 3.51 (i.e. in the years 1993–1996). At the time, the mechanism was intuitive and logical for both developers and its users. Each object (key, value) either existed or not, each operation ended in either success or failure, and when it was requested on a particular key, you could be sure that it was actually performed on that key. Everything was simple, and black and white. However, over time, more and more shades of gray were being continuously added, departing from the basic assumptions:

- The existence of predefined keys meant that every operation could no longer be performed on every key, as this special type of key was unsafe for many internal registry functions to use due to its altered semantics.

- Due to symbolic links, opening a specific key doesn't guarantee that it will be the intended one, as it might be a different key that the original one points to.

- Registry virtualization has introduced further uncertainty into key operations. When an operation is performed on a key, it is unclear whether the operation is actually executed on that specific key or redirected to a different one. Similarly, with read operations, a client cannot be entirely certain that it is reading from the intended key, as the data may be sourced from a different, virtualized location.

- Transactions in the registry mean that a given state is no longer considered solely within the global view of the registry. At any given moment, there may also be changes that are visible only within a certain transaction (when they are initiated but not yet committed), and this complex scenario must be correctly handled by the kernel.

- Layered keys have transformed the nature of hives, making them interdependent rather than self-contained database units. This is due to the introduction of differencing hives, which function solely as "patch diffs" and cannot exist independently without a base hive. Additionally, the semantics of certain objects and their fields have been altered. Previously, a key's existence was directly tied to the presence of a corresponding key node within the hive. Layered keys have disrupted this dependency. Now, a key with a key node can be non-existent if marked as a Tombstone, and a key without a corresponding key node can logically exist if its semantics are Merge-Unbacked, referencing a lower-level key with the same name.

Of course, all of these mechanisms were designed and implemented for a specific purpose: either to make life easier for developers/applications using the Registry API, or to introduce some new functionality that is needed today. The problem is not that they were added, but that it seems that the initial design of the registry was simply not compatible with them, so they were sort of forced into the registry, and where they didn't fit, an extra layer of tape was added to hold it all together. This ultimately led to a massive expansion of the internal state that needs to be maintained within the registry. This is evident both in the significant increase in the size of old structures (like KCB) and in the number of new objects that have been added over the years. But the most unfortunate aspect is that each of these more advanced mechanisms seems to have been designed to solve one specific problem, assuming that they would operate in isolation. And indeed, they probably do under typical conditions, but a particularly malicious user could start combining these different mechanisms and making them interact. Given the difficulty in logically determining the expected behavior of some of these combinations, it is doubtful that every such case was considered, documented, implemented, and tested by Microsoft.

The relationships between the various advanced mechanisms in the registry are humorously depicted in the image below:

Some examples of bugs caused by incorrect interactions between these mechanisms include CVE-2023-21675, CVE-2023-21748, CVE-2023-35356, CVE-2023-35357 and CVE-2023-35358.

Entry points

This section describes the entry points that a local attacker can use to interact with the registry and exploit any potential vulnerabilities.

Hive loading

Let's start with the operation of loading user-controlled hives. Since hive loading is only possible from disk (and not, for example, from a memory buffer), this means that to actually trigger this attack surface, the process must be able to create a file with controlled content, or at least a controlled prefix of several kilobytes in length. Regular programs operating at Medium IL generally have this capability, but write access to disk may be restricted for heavily sandboxed processes (e.g. renderer processes in browsers).

When it comes to the typical type of bugs that can be triggered in this way, what primarily comes to mind are issues related to binary data parsing, and memory safety violations such as out-of-bounds buffer accesses. It is possible to encounter more logical-type issues, but they usually rely on certain assumptions about the format not being sufficiently verified, causing subsequent operations on such a hive to run into problems. It is very rare to find a vulnerability that can be both triggered and exploited by just loading the hive, without performing any follow-up actions on it. But as CVE-2024-43452 demonstrates, it can still happen sometimes.

App hives

The introduction of Application Hives in Windows Vista caused a significant shift in the registry attack surface. It allowed unprivileged processes to directly interact with kernel code that was previously only accessible to system services and administrators. Attackers gained access to much of the NtLoadKey syscall logic, including hive file operations, hive parsing at the binary level, hive validation logic in the CmpCheckRegistry function and its subfunctions, and so on. In fact, of the 53 serious vulnerabilities I discovered during my research, 16 (around 30%) either required loading a controlled hive as an app hive, or were significantly easier to trigger using this mechanism.

It's important to remember that while app hives do open up a broad range of new possibilities for attackers, they don't offer exactly the same capabilities as loading normal (non-app) hives due to several limitations and specific behaviors:

- They must be loaded under the special path \Registry\A, which means an app hive cannot be loaded just anywhere in the registry hierarchy. This special path is further protected from references by a fully qualified path, which also reduces their usefulness in some offensive applications.

- The logic for unloading app hives differs from unloading standard hives because the process occurs automatically when all handles to the hive are closed, rather than manually unloading the hive through the RegUnLoadKeyW API or its corresponding syscall from the NtUnloadKey family.

- Operations on app hive security descriptors are very limited: any calls to the RegSetKeySecurity function or RegCreateKeyExW with a non-default security descriptor will fail, which means that new descriptors cannot be added to such hives.

- KTM transactions are unconditionally blocked for app hives.

Despite these minor restrictions, the ability to load arbitrary hives remains one of the most useful tools when exploiting registry bugs. Even if binary control of the hive is not strictly required, it can still be valuable. This is because it allows the attacker to clearly define the initial state of the hive where the attack takes place. By taking advantage of the cell allocator's determinism, it is often possible to achieve 100% exploitation success.

User hives and Mandatory User Profiles

Sometimes, triggering a specific bug requires both binary control over the hive and certain features that app hives lack, such as the ability to open a key via its full path. In such cases, an alternative to app hives exists, which might be slightly less practical but still allows for exploiting these more demanding bugs. It involves directly modifying one of the two hives assigned to every user in the system: the user hive (C:\Users\<USER>\NTUSER.DAT mounted under \Registry\User\<SID>, or in other words, HKCU) or the user classes hive (C:\Users\<USER>\AppData\Local\Microsoft\Windows\UsrClass.dat mounted under \Registry\User\<SID>_Classes). Naturally, when these hives are actively used by the system, access to their backing files is blocked, preventing simultaneous modification, which complicates things considerably. However, there are two ways to circumvent this problem.

The first scenario involves a hypothetical attacker who has two local accounts on the targeted system, or similarly, two different users collaborating to take control of the computer (let's call them users A and B). User A can grant user B full rights to modify their hive(s), and then log out. User B then makes all the required binary changes to the hive and finally notifies user A that they can log back in. At this point, the Profile Service loads the modified hive on behalf of that user, and the initial goal is achieved.

The second option is more practical as it doesn't require two different users. It abuses Mandatory User Profiles, a system functionality that prioritizes the NTUSER.MAN file in the user's directory over the NTUSER.DAT file as the user hive, if it exists (it doesn't exist in the default system installation). This means that a single user can place a specially prepared hive under the NTUSER.MAN name in their home directory, then log out and log back in. Afterwards, NTUSER.MAN will serve as the backing file of the user's HKCU, achieving the goal. However, the technique also has some drawbacks – it only applies to the user hive (not UsrClass.dat), and it is somewhat noisy. Once the NTUSER.MAN file has been created and loaded, there is no way for the same user to delete it, as it will always be loaded by the system upon login, effectively blocking access to it.

A few examples of bugs involving one of the two above techniques are CVE-2023-21675, CVE-2023-35356, and CVE-2023-35633. They all required the existence of a special type of key called a predefined key within a publicly accessible hive, such as HKCU. Even when predefined keys were still supported, they could not be created using the system API, and the only way to craft them was by directly setting a specific flag within the internal key node structure in the hive file.

Log file parsing: .LOG/.LOG1/.LOG2

One of the fundamental features of the registry is that it guarantees consistency at the level of interdependent cells that together form the structure of keys within a given hive. This refers to a situation where a single operation on the registry involves the simultaneous modification of multiple cells. Even if there is a power outage and the system restarts in the middle of performing this operation, the registry guarantees that all intermediate changes will either be applied or discarded. Such "atomicity" of operations is necessary in order to guarantee the internal consistency of the hive structure, which, as we know, is important to security. The mechanism is implemented by using additional files associated with the hive, where the intermediate state of registry modifications is saved with the granularity of a memory page (4 KiB), and which can be safely rolled forward or rolled back at the next hive load. Usually these are two files with the .LOG1 and .LOG2 extensions, but it is also possible to force the use of a single log file with the .LOG extension by passing the REG_HIVE_SINGLE_LOG flag to syscalls from the NtLoadKey family.

Internally, each LOG file can be encoded in one of two formats. One is the "legacy log file", a relatively simple format that has existed since the first implementation of the registry in Windows NT 3.1. The other is the "incremental log file", a slightly more modern and complex format introduced in Windows 8.1 to address performance issues that plagued the previous version. Both formats use the same header as the normal regf format (the first 512 bytes of the _HBASE_BLOCK structure, up to the CheckSum field), with the Type field set to 0x1 (legacy log file on Windows XP and newer), 0x2 (legacy log file on Windows 2000 and older), or 0x6 (incremental log file). Further, at offset 0x200, legacy log files contain the signature 0x54524944 ("DIRT") followed by the "dirty vector", while incremental log files contain successive records, each starting with the magic value 0x454C7648 ("HvLE").
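As a rough illustration of the layout just described, the following portable C++ sketch classifies a log file buffer by its Type field and the magic value at offset 0x200. The LogKind enum and the ClassifyLogFile and ReadLe32 helpers are hypothetical names made up for this example. The 0x200 offset and the magic values come from the description above, while the "regf" signature at offset 0 and the position of the Type field at offset 0x1C are taken from the public regf format descriptions and should be treated as assumptions here. The sketch does not validate the header checksum or any record contents.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <vector>

enum class LogKind { NotALog, LegacyXpAndNewer, Legacy2000AndOlder, Incremental };

// Assumed offset of _HBASE_BLOCK.Type (per public regf format descriptions).
constexpr size_t kTypeOffset = 0x1C;
// Offset of the first byte after the 512-byte header.
constexpr size_t kLogDataOffset = 0x200;

constexpr uint32_t kDirtMagic = 0x54524944;  // "DIRT" read as a little-endian DWORD
constexpr uint32_t kHvleMagic = 0x454C7648;  // "HvLE" read as a little-endian DWORD

// Reads a 32-bit little-endian integer regardless of host endianness.
static uint32_t ReadLe32(const uint8_t* p) {
  return static_cast<uint32_t>(p[0]) | (static_cast<uint32_t>(p[1]) << 8) |
         (static_cast<uint32_t>(p[2]) << 16) | (static_cast<uint32_t>(p[3]) << 24);
}

// Classifies a buffer holding the beginning of a .LOG/.LOG1/.LOG2 file.
LogKind ClassifyLogFile(const std::vector<uint8_t>& file) {
  if (file.size() < kLogDataOffset + 4) return LogKind::NotALog;
  if (std::memcmp(file.data(), "regf", 4) != 0) return LogKind::NotALog;

  const uint32_t type = ReadLe32(file.data() + kTypeOffset);
  const uint32_t magic = ReadLe32(file.data() + kLogDataOffset);

  switch (type) {
    case 0x1:
      return magic == kDirtMagic ? LogKind::LegacyXpAndNewer : LogKind::NotALog;
    case 0x2:
      return magic == kDirtMagic ? LogKind::Legacy2000AndOlder : LogKind::NotALog;
    case 0x6:
      return magic == kHvleMagic ? LogKind::Incremental : LogKind::NotALog;
    default:
      return LogKind::NotALog;
  }
}
```

A caller would read at least the first 0x204 bytes of the log file into a vector and pass it to ClassifyLogFile; anything that doesn't match one of the three combinations is reported as NotALog.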

These formats are well-documented in two unofficial regf documentations: GitHub: libyal/libregf and GitHub: msuhanov/regf. Additional information can be found in the "Stable storage" and "Incremental logging" subsections of the Windows Internals (Part 2, 7th Edition) book and its earlier editions.

From a security perspective, it's important to note that LOG files are processed for app hives, so their handling is part of the local attack surface. On the other hand, this attack surface isn't particularly large, as it boils down to just a few functions that are called by the two highest-level routines: HvAnalyzeLogFiles and HvpPerformLogFileRecovery. The potential types of bugs are also fairly limited, mainly consisting of shallow memory safety violations. Two specific examples of vulnerabilities related to this functionality are CVE-2023-35386 and CVE-2023-38154.

Log file parsing: KTM logs

Besides ensuring atomicity at the level of individual operations, the Windows Registry also provides two ways to achieve atomicity for entire groups of operations, such as creating a key and setting several of its values as part of a single logical unit. These mechanisms are based on two different types of transactions: KTM transactions (managed by the Kernel Transaction Manager, implemented by the tm.sys driver) and lightweight transactions, which were designed specifically for the registry. Notably, lightweight transactions exist in memory only and are never written to disk, so they do not represent an attack vector during hive loading, because there is no file recovery logic.

KTM transactions are available for use in any loaded hive that doesn't have the REG_APP_HIVE and REG_HIVE_NO_RM flags. To utilize them, a transaction object must first be created using the CreateTransaction API. The resulting handle is then passed to the RegOpenKeyTransacted, RegCreateKeyTransacted, or RegDeleteKeyTransacted registry functions. Finally, the entire transaction is committed via CommitTransaction. Windows attempts to guarantee that active transactions that are caught mid-commit during a sudden system shutdown will be rolled forward when the hive is loaded again. To achieve this, the Windows kernel employs the Common Log File System interface to save serialized records detailing individual operations to the .blf files that accompany the main hive file. When a hive is loaded, the system checks for unapplied changes in these .blf files. If any are found, it deserializes the individual records and attempts to redo all the actions described within them. This logic is primarily handled by the internal functions CmpRmAnalysisPhase, CmpRmReDoPhase, and CmpRmUnDoPhase, as well as the functions surrounding them in the control flow graph.

Given that KTM transactions are never enabled for app hives, the possibility of an unprivileged user exploiting this functionality is severely limited. The only option is to focus on KTM log files associated with regular hives that a local user has some control over, namely the user hive (NTUSER.DAT) and the user classes hive (UsrClass.dat). If a transactional operation is performed on a user's HKCU hive, additional .regtrans-ms and .blf files appear in their home directory. Furthermore, if these files don't exist at first, they can be planted on the disk manually, and will be processed by the Windows kernel after logging out and logging back in. Interestingly, even when the KTM log files are actively in use, they have the read sharing mode enabled. This means that a user can write data to these logs by performing transactional operations, and read from them directly at the same time.

Historically, the handling of KTM logs has been affected by a significant number of security issues. Between 2019 and 2020, James Forshaw reported three serious bugs in this code: CVE-2019-0959, CVE-2020-1377, and CVE-2020-1378. Subsequently, during my research, I discovered three more: CVE-2023-28271, CVE-2023-28272, and CVE-2023-28293. However, the strangest thing is that, according to my tests, the entire logic for restoring the registry state from KTM logs stopped working due to code refactoring introduced in Windows 10 1607 (almost 9 years ago) and has not been fixed since. I described this observation in another report related to transactions, in a section called "KTM transaction recovery code". I'm not entirely sure whether I'm making a mistake in testing, but if this is truly the case, it means that the entire recovery mechanism currently serves no purpose and only needlessly increases the system's attack surface. Therefore, it could be safely removed or, at the very least, actually fixed.

Direct registry operations through standard syscalls

Direct operations on keys and values are the core of the registry and make up most of its associated code within the Windows kernel. These basic operations don't need any special permissions and are accessible by all users, so they constitute the primary attack surface available to a local attacker. These actions have been summarized at the beginning of blog post #2, and should probably be familiar by now. As a recap, here is a table of the available operations, including the corresponding high-level API function, system call name, and internal kernel function name if it differs from the syscall:

Some additional comments:

- A regular user can directly load only application hives, using the RegLoadAppKey function or its corresponding syscalls with the REG_APP_HIVE flag. Loading standard hives, using the RegLoadKey function, is reserved for administrators only. However, this operation is still indirectly accessible to other users through the NTUSER.MAN hive and the Profile Service, which can load it as a user hive during system login.

- When selecting API functions for the table above, I prioritized their latest versions (often with the "Ex" suffix, meaning "extended"). I also chose those that are the thinnest wrappers and closest in functionality to their corresponding syscalls on the kernel side. In the official Microsoft documentation, you'll also find many older/deprecated versions of these functions, which were available in early Windows versions and now exist solely for backward compatibility (e.g., RegOpenKey, RegEnumKey). Additionally, there are also helper functions that implement more complex logic on the user-mode side (e.g., RegDeleteTree, which recursively deletes an entire subtree of a given key), but they don't add anything in terms of the kernel attack surface.

- There are several operations natively supported by the kernel that do not have a user-mode equivalent, such as NtQueryOpenSubKeys or NtSetInformationKey. The only way to use these interfaces is to call their respective system calls directly, which is most easily achieved by calling their wrappers with the same name in the ntdll.dll library. Furthermore, even when a documented API function exists, it may not expose all the capabilities of its corresponding system call. For example, the RegQueryKeyInfo function returns some information about a key, but much more can be learned by using NtQueryKey directly with one of the supported information classes.

Moreover, there is a group of syscalls that do require administrator rights (specifically SeBackupPrivilege, SeRestorePrivilege, or PreviousMode set to KernelMode). These syscalls are used either for registry management by the kernel or system services, or for purely administrative tasks (such as performing registry backups). They are not particularly interesting from a security research perspective, as they cannot be used to elevate privileges, but it is worth mentioning them by name:

- NtCompactKeys

- NtCompressKey

- NtFreezeRegistry

- NtInitializeRegistry

- NtLockRegistryKey

- NtQueryOpenSubKeysEx

- NtReplaceKey

- NtRestoreKey

- NtSaveKey

- NtSaveKeyEx

- NtSaveMergedKeys

- NtThawRegistry

- NtUnloadKey

- NtUnloadKey2

- NtUnloadKeyEx

Incorporating advanced features

Despite the fact that most power users are familiar with the basic registry operations (e.g., from using Regedit.exe), there are still some modifiers that can change the behavior of these operations, thereby complicating their implementation and potentially leading to interesting bugs. To use these modifiers, additional steps are often required, such as enabling registry virtualization, creating a transaction, or loading a differencing hive. When this is done, the information about the special key properties is encoded within the internal kernel structures, and the key handle itself is almost indistinguishable from other handles as seen by the user-mode application. When operating on such advanced keys, the logic for their handling is executed in the standard registry syscalls transparently to the user. The diagram below illustrates the general, conceptual control flow in registry-related system calls:

This is a very simplified outline of how registry syscalls work, but it shows that a function theoretically supporting one operation can actually hide many implementations that are dynamically chosen based on various factors. In terms of specifics, there are significant differences depending on the operation and whether it is a "read" or "write" one. For example, in "read" operations, the execution paths for transactional and non-transactional operations are typically combined into one that has built-in transaction support but can also operate without them. On the other hand, in "write" operations, normal and transactional operations are always performed differently, but there isn't much code dedicated to layered keys (except for the so-called key promotion operations), since when writing to a layered key, the state of keys lower on the stack is usually not as important. As for the "Internal operation handler" area marked within the large rectangle with the dotted line, these are internal functions responsible for the core logic of a specific operation, and whose names typically begin with "Cm" instead of "Nt". For example, for the NtDeleteKey syscall, the corresponding internal handler is CmDeleteKey, for NtQueryKey it is CmQueryKey, for NtEnumerateKey it is CmEnumerateKey, and so on.

In the following sections, we will take a closer look at each of the possible complications.

Predefined keys and symbolic links

Predefined keys were deprecated in 2023, so I won't spend much time on them here. It's worth mentioning that on modern systems, it wasn't possible to create them in any way using the API, or even directly using syscalls. The only way to craft such a key in the registry was to create it in binary form in a controlled hive file and have it loaded via RegLoadAppKey or as a user hive. These keys had very strange semantics, both at the key node level (unusual encoding of _CM_KEY_NODE.ValueList) and at the kernel key body object level (non-standard value of _CM_KEY_BODY.Type). Due to the need to filter out these keys at an early stage of syscall execution, there are special helper functions whose purpose is to open the key by handle and verify whether it is or isn't a predefined handle (CmObReferenceObjectByHandle and CmObReferenceObjectByName). Consequently, hunting for bugs related to predefined handles involved verifying whether each syscall used the above wrappers correctly, and whether there was some other way to perform an operation on this type of key while bypassing the type check. As I have mentioned, this is now just a thing of the past, as predefined handles in input hives are no longer supported and therefore do not pose a security risk to the system.

When it comes to symbolic links, this is a semi-documented feature that requires calling the RegCreateKeyEx function with the special REG_OPTION_CREATE_LINK flag to create them. Then, you need to set a value named "SymbolicLinkValue" and of type REG_LINK, which contains the target of the symlink as an absolute, internal registry path (\Registry\...) written using wide characters. From that point on, the link points to the specified path. However, it's important to remember that traversing symbolic links originating from non-system hives is heavily restricted: it can only occur within a single "trust class" (e.g., between the user hive and user classes hive of the same user). As a result, links located in app hives are never fully functional, because each app hive resides in its own isolated trust class, and they cannot reference themselves either, as references to paths starting with "\Registry\A" are blocked by the Windows kernel.

As for auditing symbolic links, they are generally resolved during the opening/creation of a key. Therefore, the analysis mainly involves the CmpParseKey function and lower-level functions called within it, particularly CmpGetSymbolicLinkTarget, which is responsible for reading the target of a given symlink and searching for it in existing registry structures. Issues related to symlinks can also be found in registry callbacks registered by third-party drivers, especially those that handle the RegNtPostOpenKey/RegNtPostCreateKey and similar operations. Correctly handling "reparse" return values and the multiple call loops performed by the NT Object Manager is not an easy feat to achieve.

Registry virtualization

Registry virtualization, introduced in Windows Vista, ensures backward compatibility for older applications that assume administrative privileges when using the registry. This mechanism redirects references between HKLM\Software and HKU\<SID>_Classes\VirtualStore subkeys transparently, allowing programs to "think" they write to the system hive even though they don't have sufficient permissions for it. The virtualization logic, integrated into nearly every basic registry syscall, is mostly implemented by three functions:

- CmKeyBodyRemapToVirtualForEnum: Translates a real key inside a virtualized hive (HKLM\Software) to a virtual key inside the VirtualStore of the user classes hive during read-type operations. This is done to merge the properties of both keys into a single state that is then returned to the caller.

- CmKeyBodyRemapToVirtual: Translates a real key to its corresponding virtual key, and is used in the key deletion and value deletion operations. This is done to delete the replica of a given key in VirtualStore or one of its values, instead of its real instance in the global hive.

- CmKeyBodyReplicateToVirtual: Replicates the entire key structure that the caller wants to create in the virtualized hive, inside of the VirtualStore.
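To give a feel for the path relationship behind this redirection, here is a small portable C++ sketch that maps a key under HKLM\Software to its per-user VirtualStore counterpart. The ToVirtualStorePath and HasKeyPrefix helpers are hypothetical and purely illustrative; the MACHINE\SOFTWARE component under VirtualStore follows Microsoft's public description of registry virtualization rather than anything stated in this post, and the real kernel functions listed above operate on internal \Registry\... paths and key bodies, not on strings like these.

```cpp
#include <cassert>
#include <cctype>
#include <string>

// Case-insensitive check that `path` starts with `prefix` and that the match
// ends at a path-component boundary.
static bool HasKeyPrefix(const std::string& path, const std::string& prefix) {
  if (path.size() < prefix.size()) return false;
  for (std::string::size_type i = 0; i < prefix.size(); ++i) {
    if (std::tolower(static_cast<unsigned char>(path[i])) !=
        std::tolower(static_cast<unsigned char>(prefix[i]))) {
      return false;
    }
  }
  return path.size() == prefix.size() || path[prefix.size()] == '\\';
}

// Hypothetical helper: maps HKLM\Software\... to the matching location in the
// user's VirtualStore. Returns false for paths outside HKLM\Software.
bool ToVirtualStorePath(const std::string& sid, const std::string& path,
                        std::string* out) {
  assert(out != nullptr);
  const std::string kSource = "HKLM\\Software";
  if (!HasKeyPrefix(path, kSource)) return false;
  *out = "HKU\\" + sid + "_Classes\\VirtualStore\\MACHINE\\SOFTWARE" +
         path.substr(kSource.size());
  return true;
}
```

For a made-up SID such as S-1-5-21-1, the path HKLM\Software\Vendor\App maps to HKU\S-1-5-21-1_Classes\VirtualStore\MACHINE\SOFTWARE\Vendor\App, while a path such as HKLM\System is rejected.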

All of the above functions have a complicated control flow, both in terms of low-level implementation (e.g., they implement various registry path conversions) and logically – they create new keys in the registry, merge the states of different keys into one, etc. As a result, it doesn't really come as a big surprise that the code has been affected by many vulnerabilities. Triggering virtualization doesn't require any special rights, but it does need a few conditions to be met:

- Virtualization must be specifically enabled for a given process. This is not the default behavior for 64-bit programs but can be easily enabled by calling the SetTokenInformation function with the TokenVirtualizationEnabled argument on the security token of the process.

- Depending on the desired behavior, the appropriate combination of VirtualSource/VirtualTarget/VirtualStore flags should be set in _CM_KEY_NODE.Flags. This can be achieved either through binary control over the hive or by setting it at runtime using the NtSetInformationKey call with the KeySetVirtualizationInformation argument.

- The REG_KEY_DONT_VIRTUALIZE flag must not be set in the _CM_KEY_NODE.VirtControlFlags field for a given key. This is usually not an issue, but if necessary, it can be adjusted either in the binary representation of the hive or using the NtSetInformationKey call with the KeyControlFlagsInformation argument.

- In specific cases, the source key must be located in a virtualizable hive. In such scenarios, the HKLM\Software\Microsoft\DRM key becomes very useful, as it meets this condition and has a permissive security descriptor that allows all users in the system to create subkeys within it.

With regard to the first two points, many examples of virtualization-related bugs can be found in the Project Zero bug tracker. These reports include proof-of-concept code that correctly sets the appropriate flags. For simplicity, I will share that code here as well; the two C++ functions responsible for enabling virtualization for a given security token and registry key are shown below:

BOOL EnableTokenVirtualization(HANDLE hToken, BOOL bEnabled) {
  DWORD dwVirtualizationEnabled = bEnabled;

  return SetTokenInformation(hToken,
                             TokenVirtualizationEnabled,
                             &dwVirtualizationEnabled,
                             sizeof(dwVirtualizationEnabled));
}

BOOL EnableKeyVirtualization(HKEY hKey,
                             BOOL VirtualTarget,
                             BOOL VirtualStore,
                             BOOL VirtualSource) {
  KEY_SET_VIRTUALIZATION_INFORMATION VirtInfo;

  VirtInfo.VirtualTarget = VirtualTarget;
  VirtInfo.VirtualStore = VirtualStore;
  VirtInfo.VirtualSource = VirtualSource;
  VirtInfo.Reserved = 0;

  NTSTATUS Status = NtSetInformationKey(hKey,
                                        KeySetVirtualizationInformation,
                                        &VirtInfo,
                                        sizeof(VirtInfo));

  return NT_SUCCESS(Status);
}

And their example use:

HANDLE hToken;
HKEY hKey;

//
// Enable virtualization for the token.
//
if (!OpenProcessToken(GetCurrentProcess(), TOKEN_ALL_ACCESS, &hToken)) {
  printf("OpenProcessToken failed with error %u\n", GetLastError());
  return 1;
}

EnableTokenVirtualization(hToken, TRUE);

//
// Enable virtualization for the key.
// (Arguments elided; RegOpenKeyExW returns the key handle through its
// last parameter, and its return value is an error code.)
//
RegOpenKeyExW(..., &hKey);

EnableKeyVirtualization(hKey,
                        /*VirtualTarget=*/TRUE,
                        /*VirtualStore=*/ TRUE,
                        /*VirtualSource=*/FALSE);

Transactions

There are two types of registry transactions: KTM and lightweight. The former are implemented on top of the tm.sys (Transaction Manager) driver, and they try to provide certain guarantees of transactional atomicity both during system run time and across reboots. The latter, as the name suggests, are lightweight transactions that exist only in memory and whose task is to provide an easy and quick way to ensure that a given set of registry operations is applied atomically. As potential attackers, we are most interested in three parts of the interface: creating a transaction object, rolling back a transaction, and committing a transaction. The functions responsible for all three actions in each type of transaction are shown in the table below:

| Operation | KTM (API) | KTM (system call) | Lightweight (API) | Lightweight (system call) |
| --- | --- | --- | --- | --- |
| Create transaction | CreateTransaction | NtCreateTransaction | - | NtCreateRegistryTransaction |
| Rollback transaction | RollbackTransaction | NtRollbackTransaction | - | NtRollbackRegistryTransaction |
| Commit transaction | CommitTransaction | NtCommitTransaction | - | NtCommitRegistryTransaction |

As we can see, KTM has a public, documented API, whereas lightweight transactions can only be used via system calls. Their definitions, however, are not too difficult to reverse engineer, and they come down to the following prototypes:

NTSTATUS NtCreateRegistryTransaction(PHANDLE OutputHandle, ACCESS_MASK DesiredAccess, POBJECT_ATTRIBUTES ObjectAttributes, ULONG Reserved);
NTSTATUS NtRollbackRegistryTransaction(HANDLE Handle, ULONG Reserved);
NTSTATUS NtCommitRegistryTransaction(HANDLE Handle, ULONG Reserved);

Upon the creation of a transaction object, whether of type TmTransactionObjectType (KTM) or CmRegistryTransactionType (lightweight), its subsequent usage becomes straightforward. The transaction handle is passed to either the RegOpenKeyTransacted or the RegCreateKeyTransacted function, yielding a key handle. The key's internal properties, specifically the key body structure, will reflect its transactional nature. Operations on this key proceed identically to the non-transactional case, using the same functions. However, changes are temporarily confined to the transaction context, isolated from the global registry view. Upon the completion of all transactional operations, the user may elect either to discard the changes via a rollback, or apply them atomically through a commit. From the developer's perspective, this interface is undeniably convenient.

From an attack surface perspective, there's a substantial amount of code underlying the transaction functionality. Firstly, the handler for each base operation includes code to verify that the key isn't locked by another transaction, to allocate and initialize a UoW (unit of work) object, and then write it to the internal structures that describe the transaction. Secondly, to maintain consistency with the new functionality, the existing non-transactional code must first abort all transactions associated with a given key before it can be modified.

But that's not the end of the story. The commit process itself is also complicated, as it must cleverly circumvent various registry limitations resulting from its original design. In 2023, most of the code responsible for KTM transactions was removed as a result of CVE-2023-32019, but there is still a second engine that was initially responsible for lightweight transactions and now handles all of them. It consists of two stages: "Prepare" and "Commit". During the prepare stage, all steps that could potentially fail are performed, such as allocating all necessary cells in the target hive. Errors are allowed and correctly handled in the prepare stage, because the globally visible state of the registry does not change yet. This is followed by the commit stage, which is designed so that nothing can go wrong – it no longer performs any dynamic allocations or other complex operations, and its whole purpose is to update values in both the hive and the kernel descriptors so that transactional changes become globally visible. The internal prepare handlers for each individual operation have names starting with "CmpLightWeightPrepare" (e.g., CmpLightWeightPrepareAddKeyUoW), while the corresponding commit handlers start with "CmpLightWeightCommit" (e.g., CmpLightWeightCommitAddKeyUoW). These are the two main families of functions that are most interesting from a vulnerability research perspective. In addition to them, it is also worth analyzing the rollback functionality, which is used both when the rollback is requested directly by the user and when an error occurs in the prepare stage. This part is mainly handled by the CmpTransMgrFreeVolatileData function.
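
To make the prepare/commit split more concrete, here is a minimal, self-contained C++ sketch. It is a toy model under stated assumptions, not the kernel's code: ToyHive, ToyUoW and ToyTransaction are hypothetical names, and a simple counter of free cells stands in for hive cell allocation. The only point it tries to illustrate is the division of labor: everything that can fail happens in the prepare stage, a failed prepare is undone by a rollback, and the commit stage merely publishes already-prepared changes.

```cpp
#include <cassert>
#include <cstddef>
#include <map>
#include <string>
#include <vector>

// Toy stand-in for a hive: a bounded pool of "cells" plus the globally
// visible value state. All names in this sketch are hypothetical.
struct ToyHive {
    std::size_t free_cells = 0;
    std::map<std::string, std::string> values;
};

// One pending operation (a "unit of work") recorded inside a transaction.
struct ToyUoW {
    std::string name;
    std::string data;
    bool cell_reserved;  // set to true by the prepare stage
};

class ToyTransaction {
public:
    explicit ToyTransaction(ToyHive& hive) : hive_(hive) {}

    // Record a change; nothing becomes globally visible yet.
    void SetValue(const std::string& name, const std::string& data) {
        ToyUoW uow;
        uow.name = name;
        uow.data = data;
        uow.cell_reserved = false;
        uows_.push_back(uow);
    }

    // Returns true if every UoW was prepared and published, or false if the
    // prepare stage failed and the transaction was rolled back.
    bool Commit() {
        if (!Prepare()) {
            Rollback();
            return false;
        }
        // Commit stage: the model has no failure path here; it only publishes
        // the prepared data (the toy ignores that std::map may allocate).
        for (const ToyUoW& uow : uows_) {
            assert(uow.cell_reserved);
            hive_.values[uow.name] = uow.data;
        }
        uows_.clear();
        return true;
    }

    // Discard pending changes and return any reserved cells to the hive.
    void Rollback() {
        for (ToyUoW& uow : uows_) {
            if (uow.cell_reserved) {
                ++hive_.free_cells;
                uow.cell_reserved = false;
            }
        }
        uows_.clear();
    }

private:
    // Prepare stage: perform every step that can fail (here, reserving cells).
    bool Prepare() {
        for (ToyUoW& uow : uows_) {
            if (hive_.free_cells == 0) return false;
            --hive_.free_cells;
            uow.cell_reserved = true;
        }
        return true;
    }

    ToyHive& hive_;
    std::vector<ToyUoW> uows_;
};
```

In this model, a transaction with two pending values committed against a hive with a single free cell fails in the prepare stage, gives the reserved cell back, and leaves the global state untouched. The real handlers have far more state to keep consistent, which is why the prepare, commit and rollback paths are all worth auditing.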

Layered keys

Layered keys are the latest major change of this type in the Windows Registry, introduced in 2016. They overturned many fundamental assumptions that had been in place until then. A given logical key no longer consists solely of one key node and a maximum of one active KCB, but of a whole stack of these objects: from the layer height of the given hive down to layer zero, which is the base hive. A key that has a key node may in practice be non-existent (if marked as a tombstone), and vice versa, a key without a key node may logically exist if there is an existing key with the same name lower in its stack. In short, this whole containerization mechanism has doubled the complexity of every single registry operation, because:

- Querying for information about a key has become more difficult, because instead of gathering information from just one key, it has to be potentially collected from many keys at once and combined into a coherent whole for the caller.

- Performing any "write" operations has become more difficult because before writing any information to the key at a given nesting level, you first need to make sure that the key and all its ancestors in a given hive exist, which is done in a complicated process called "key promotion".

- Deleting and renaming a key has become more difficult, because you always have to consider and correctly handle higher-level keys that rely on the one you are modifying. This is especially true for Merge-Unbacked keys, which do not have their own representation and only reflect the state of the keys at a lower level. This also applies to ordinary keys from hives under HKLM and HKU, which by themselves have nothing to do with differencing hives, but as an integral part of the registry hierarchy, they also have to correctly support this feature.

- Performing security access checks on a key has become more challenging due to the need to accurately pinpoint the relevant security descriptor on the key stack first.
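
The effect of the key stack on even the simplest questions ("does this key exist?", "what are its values?") can be sketched with a small C++ model. This is a deliberate simplification and an assumption on my part rather than the kernel's logic: it knows only three per-layer states, ignores the finer-grained layer semantics such as Merge-Unbacked keys, and all names (LayerState, ToyLayerKey, LogicallyExists, MergedValues) are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <map>
#include <string>
#include <vector>

// Hypothetical, simplified per-layer state of a single logical key.
enum class LayerState {
    NoKeyNode,  // this layer has no key node for the key
    Present,    // this layer has an ordinary key node
    Tombstone,  // this layer has a key node marking the key as deleted
};

struct ToyLayerKey {
    LayerState state = LayerState::NoKeyNode;
    std::map<std::string, std::string> values;
};

// stack[0] is the base hive (layer zero); stack.back() is the top layer.
using ToyKeyStack = std::vector<ToyLayerKey>;

// In this model, the topmost layer that has a key node decides whether the
// logical key exists.
bool LogicallyExists(const ToyKeyStack& stack) {
    for (std::size_t i = stack.size(); i-- > 0;) {
        if (stack[i].state == LayerState::Tombstone) return false;
        if (stack[i].state == LayerState::Present) return true;
    }
    return false;
}

// Combine the values of all layers into one view for the caller. Layers are
// visited from the top down, so values from higher layers take precedence,
// and a tombstone hides everything below it.
std::map<std::string, std::string> MergedValues(const ToyKeyStack& stack) {
    std::map<std::string, std::string> merged;
    if (!LogicallyExists(stack)) return merged;
    for (std::size_t i = stack.size(); i-- > 0;) {
        if (stack[i].state == LayerState::Tombstone) break;
        if (stack[i].state == LayerState::NoKeyNode) continue;
        // Range insert() keeps entries that already exist, so higher layers win.
        merged.insert(stack[i].values.begin(), stack[i].values.end());
    }
    return merged;
}
```

Even in this reduced form, a key with no key node in the top layer can exist (because the base layer has one), and a key with a key node in the top layer can be absent (because that node is a tombstone). Every query, write, delete, rename and access check has to take such cases into account.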

Overall, the layered keys mechanism is so complex that it could warrant an entire blog post (or several) on its own, so I won't be able to explain all of its aspects here. Nevertheless, its existence will quickly become clear to anyone who starts reversing the registry implementation. The code related to this functionality can be identified in many ways, for example:

- By references to functions that initialize the key node stack / KCB stack objects (i.e., CmpInitializeKeyNodeStack, CmpStartKcbStack, and CmpStartKcbStackForTopLayerKcb),

- By dedicated functions that implement a given operation specifically on layered keys that end with "LayeredKey" (e.g., CmDeleteLayeredKey, CmEnumerateValueFromLayeredKey, CmQueryLayeredKey),

- By references to the KCB.LayerHeight field, which is very often used to determine whether the code is dealing with a layered key (height greater than zero) or a base key (height equal to zero).

I encourage those interested in further exploring this topic to read Microsoft's Containerized Configuration patent (US20170279678A1), the "Registry virtualization" section in Chapter 10 of Windows Internals (Part 2, 7th Edition), as well as my previous blog post #6, where I briefly described many internal structures related to layered keys. All of these references are great resources that can provide a good starting point for further analysis.

When it comes to layered keys in the context of attack entry points, it's important to note that loading custom differencing hives in Windows is not straightforward. As I wrote in blog post #4, loading this type of hive is not possible at all through any standard NtLoadKey-family syscall. Instead, it is done by sending an undocumented IOCTL 0x220008 to \Device\VRegDriver, which then passes this request on to an internal kernel function named CmLoadDifferencingKey. Therefore, the first obstacle is that in order to use this IOCTL interface, one would have to reverse engineer the layout of its corresponding input structure. Fortunately, I have already done it and published it in the blog post under the VRP_LOAD_DIFFERENCING_HIVE_INPUT name. However, a second, much more pressing problem is that communicating with the VRegDriver requires administrative rights, so it can only be used for testing purposes, but not in practical privilege escalation attacks.
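
As a small aside, the IOCTL value itself can be decoded using the standard CTL_CODE layout from the Windows headers, (DeviceType << 16) | (Access << 14) | (Function << 2) | Method. The sketch below is portable C++ that only performs this arithmetic; IoctlFields, DecodeIoctl and EncodeIoctl are hypothetical helper names, not part of any Windows API.

```cpp
#include <cassert>
#include <cstdint>

// Fields of a Windows I/O control code, following the CTL_CODE macro layout:
// (DeviceType << 16) | (Access << 14) | (Function << 2) | Method.
struct IoctlFields {
    std::uint32_t device_type;  // bits 16..31
    std::uint32_t access;       // bits 14..15
    std::uint32_t function;     // bits 2..13
    std::uint32_t method;       // bits 0..1
};

// Split a control code into its four fields.
inline IoctlFields DecodeIoctl(std::uint32_t code) {
    IoctlFields f;
    f.device_type = (code >> 16) & 0xFFFF;
    f.access = (code >> 14) & 0x3;
    f.function = (code >> 2) & 0xFFF;
    f.method = code & 0x3;
    return f;
}

// Reassemble a control code from its fields (the inverse of DecodeIoctl).
inline std::uint32_t EncodeIoctl(const IoctlFields& f) {
    return (f.device_type << 16) | (f.access << 14) | (f.function << 2) |
           f.method;
}
```

For 0x220008, this yields device type 0x22 (FILE_DEVICE_UNKNOWN), function 2, method 0 (METHOD_BUFFERED) and access 0 (FILE_ANY_ACCESS). In other words, the input structure is passed through a buffered request, and the administrative requirement described above does not appear to be expressed in the control code itself.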

So, what options are we left with? Firstly, there are potential scenarios where the exploit is packaged in a mechanism that legitimately uses differencing hives, e.g., an MSIX-packaged application running in an app silo, or a specially crafted Docker container running in a server silo. In such cases, we provide our own hives by design, which are then loaded on the victim’s system on our behalf when the malicious program or container is started. The second option is to simply ignore the inability to load our own hive and use one already present in the system. In a default Windows installation, many built-in applications use differencing hives, and the \Registry\WC key can be easily enumerated and opened without any problems (unlike \Registry\A). Therefore, if we launch a program running inside an app silo (e.g., Notepad) as a local user, we can then operate on the differencing hives loaded by it. This is exactly what I did in most of my proof-of-concept exploits related to this functionality. Of course, it is possible that a given bug will require full binary control over the differencing hive in order to trigger it, but this is a relatively rare case: of the 10 vulnerabilities I identified in this code, only two of them required such a high degree of control over the hive.

Alternative registry attack targets

The most crucial attack surface associated with the registry is obviously its implementation within the Windows kernel. However, other types of software interact with the registry in many ways and can also be prone to privilege escalation attacks through this mechanism. They are discussed in the following sections.

Drivers implementing registry callbacks

Another area where potential registry-related security vulnerabilities can be found is Registry Callbacks. This mechanism, first introduced in Windows XP and still present today, provides an interface for kernel drivers to log or interfere with registry operations in real-time. One of the most obvious uses for this functionality is antivirus software, which relies on registry monitoring. Microsoft, aware of this need but wanting to avoid direct syscall hooking by drivers, was compelled to provide developers with an official, documented API for this purpose.